DevOps Applied to SAS Viya 3.5: Run and Test CAS Programs with a Jenkins Pipeline

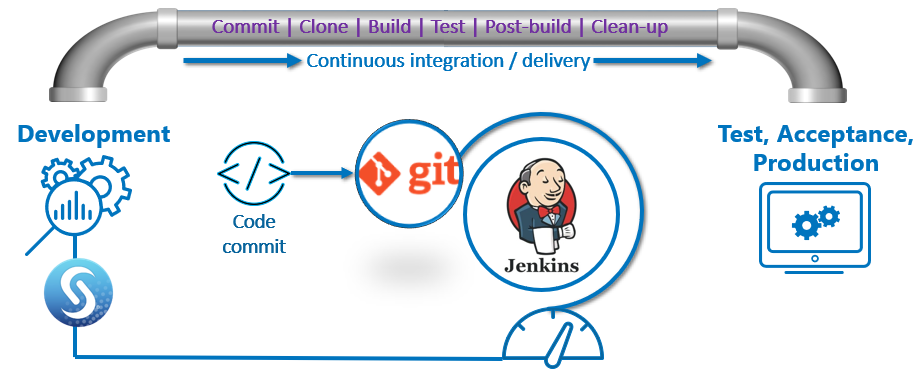

Automate the development, testing and execution of SAS (CAS) code in SAS Viya 3.5 with Git and Jenkins. Learn how to create a Jenkins pipeline for a simple end-to-end scenario: load files into CAS, create a star schema as a CAS view, test the star schema, and finally clean up.

A previous post, DevOps Applied to SAS Viya 3.5: Run a SAS Program with a Jenkins Pipeline, covered the basics of Jenkins, Git and SAS Viya. In this post, GitLab serves as the Git management software.

Required

- The SAS (CAS) programs we need to run, pushed to Git (GitLab).

- A Jenkins file containing the Jenkins pipeline definition, stored in Git (GitLab).

- Jenkins, running the pipeline with the SAS Viya machine as an agent.

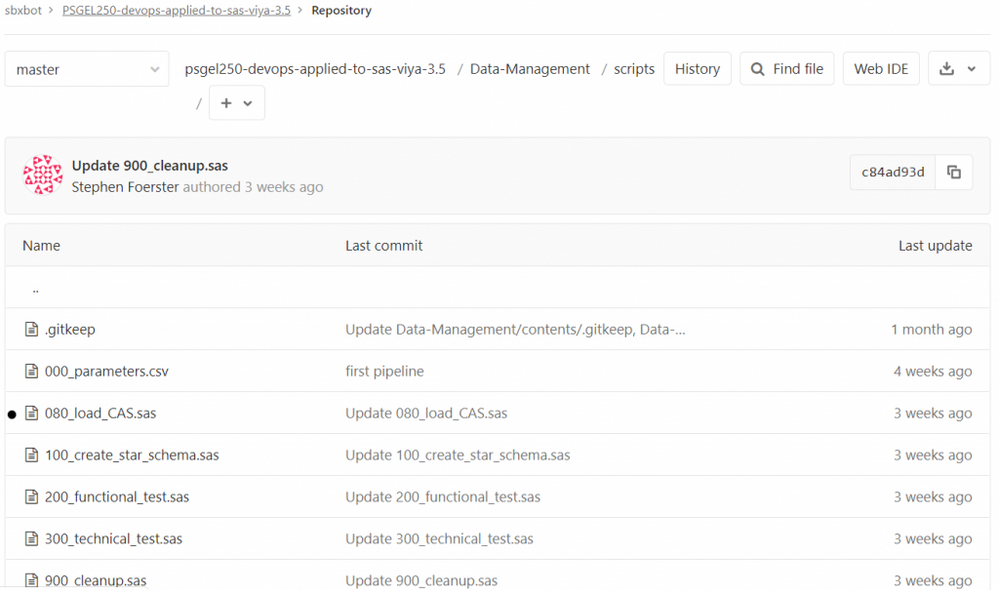

CAS Programs in GitLab

The CAS programs we want in the Jenkins pipeline:

- 080_load_CAS.sas loads all necessary source files into CAS tables.

- 100_create_star_schema.sas creates and queries the star schema, as a CAS view. Functionally, it is the same CAS star schema as the one created in Query Performance? Use a CAS Star Schema in Viya 3.5.

- 200_functional_test.sas queries the star schema with a simple.summary CAS action.

- 300_technical_test.sas queries a status code produced by the previous script.

- 900_cleanup.sas drops the tables and deletes the source data.

The process of pushing CAS files to Git (GitLab) was covered in DevOps Applied to SAS Viya 3.5: Top Git Commands with Examples.

The Jenkins File

The Jenkins file is stored in Git (GitLab), and its location is recorded in the Jenkins pipeline configuration.

Jenkins builds the pipeline according to the Jenkins file.

The build runs on the SAS Viya machine named in the agent label, so you need to define that machine as an agent in Jenkins first. Please read DevOps Applied to SAS Viya 3.5: Run a SAS Program with a Jenkins Pipeline for more details.

The Jenkins file has several stages:

- Clone GIT on SAS Viya only prints a simple message. By default, a declarative pipeline loaded from source control has already checked out the repository into the agent workspace at this point.

- Copy source files copies the source files, without overwriting existing ones (cp -n), to the directory behind the caslib from which they will be loaded into CAS.

- The next stages run one CAS program each: Load in CAS, Create Star Schema in CAS, Functional Test (query the star schema) and Technical Test (star schema creation status).

- The clean-up stage was left out on purpose at this point, so that the pipeline results can be inspected after the run.

- The post section, which prints a message, is entirely optional.

The complete Jenkins file:

    pipeline {
        agent { label 'intviya01.race.sas.com' }
        stages {
            stage('Clone GIT on SAS Viya') {
                steps {
                    sh 'echo "Hello " `logname`'
                }
            }
            stage('Copy source files') {
                steps {
                    sh 'cp -n /opt/sas/devops/workspace/{userid}-PSGEL250-devops-applied-to-sas-viya-3.5/Data-Management/source_data/* /gelcontent/demo/DM/data/'
                }
            }
            stage('Load in CAS') {
                steps {
                    sh '/opt/sas/spre/home/SASFoundation/sas -autoexec "/opt/sas/viya/config/etc/workspaceserver/default/autoexec_deployment.sas" /opt/sas/devops/workspace/{userid}-PSGEL250-devops-applied-to-sas-viya-3.5/Data-Management/scripts/080_load_CAS.sas -log /tmp/080_load_CAS.log'
                }
            }
            stage('Create Star Schema in CAS') {
                steps {
                    sh '/opt/sas/spre/home/SASFoundation/sas -autoexec "/opt/sas/viya/config/etc/workspaceserver/default/autoexec_deployment.sas" /opt/sas/devops/workspace/{userid}-PSGEL250-devops-applied-to-sas-viya-3.5/Data-Management/scripts/100_create_star_schema.sas -log /tmp/100_create_star_schema.log'
                }
            }
            stage('Functional Test') {
                steps {
                    sh '/opt/sas/spre/home/SASFoundation/sas -autoexec "/opt/sas/viya/config/etc/workspaceserver/default/autoexec_deployment.sas" /opt/sas/devops/workspace/{userid}-PSGEL250-devops-applied-to-sas-viya-3.5/Data-Management/scripts/200_functional_test.sas -log /tmp/200_functional_test.log'
                }
            }
            stage('Technical Test') {
                steps {
                    sh '/opt/sas/spre/home/SASFoundation/sas -autoexec "/opt/sas/viya/config/etc/workspaceserver/default/autoexec_deployment.sas" /opt/sas/devops/workspace/{userid}-PSGEL250-devops-applied-to-sas-viya-3.5/Data-Management/scripts/300_technical_test.sas -log /tmp/300_technical_test.log'
                }
            }
        }
        post {
            always {
                echo 'Job Done!'
            }
        }
    }
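A Jenkins sh step fails as soon as its command returns a non-zero exit code. SAS in batch mode on UNIX is documented to return 1 when a program only raised warnings and 2 or higher for errors, so a program with a mere warning would fail its stage. The following is a minimal sketch, not part of the original pipeline, of a shell helper that runs one SAS program and treats warnings as success. The function name run_sas_program and the variables SAS_BIN, SAS_AUTOEXEC and LOG_DIR are hypothetical; the default paths are the ones used in the Jenkins file. The exit-code mapping is an assumption worth verifying on your own deployment.

```shell
# Defaults taken from the Jenkins file; each can be overridden from the environment.
SAS_BIN="${SAS_BIN:-/opt/sas/spre/home/SASFoundation/sas}"
SAS_AUTOEXEC="${SAS_AUTOEXEC:-/opt/sas/viya/config/etc/workspaceserver/default/autoexec_deployment.sas}"
LOG_DIR="${LOG_DIR:-/tmp}"

# run_sas_program <program.sas>   (hypothetical helper)
# Runs the program in batch, writes <LOG_DIR>/<program name>.log and
# returns 0 for success or warnings, the SAS exit code otherwise.
run_sas_program() {
    local program="$1"
    local name
    name="$(basename "$program" .sas)"
    local log="$LOG_DIR/$name.log"
    local rc=0

    "$SAS_BIN" -autoexec "$SAS_AUTOEXEC" "$program" -log "$log" || rc=$?

    case "$rc" in
        0) echo "OK: $name" ;;
        # Assumption: exit code 1 means "warnings only" and is tolerated here.
        1) echo "WARNING: $name finished with warnings, see $log"; rc=0 ;;
        *) echo "FAILED: $name exited with code $rc, see $log" >&2 ;;
    esac
    return "$rc"
}
```

Whether warnings should pass or fail a stage is a design choice. A stricter pipeline would simply keep the direct sas call from the Jenkins file, which fails on any non-zero code.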

The Jenkins Pipeline

The Jenkins pipeline from the previous post is reused as is. Only the Jenkins file, which holds the pipeline definition, changes. Storing the Jenkins file in GitLab (or any other version control system) has one clear advantage: you can reuse the same pipeline over and over.

Run the Jenkins pipeline

I am using Blue Ocean, a Jenkins plug-in, to run and visualize the pipelines.

If everything is set up correctly, the latest run shows the result: the Jenkins file has been converted into a pipeline.

You can now inspect the individual stages, check their status, read their logs, and so on.

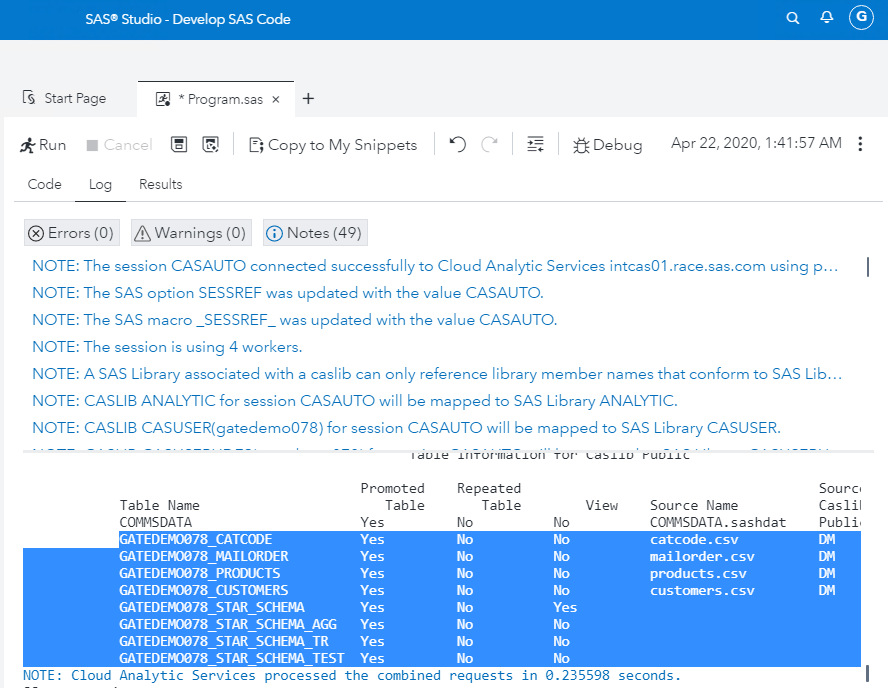
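Each stage also writes its SAS log to /tmp, so the logs can be checked outside Blue Ocean, for example from a terminal on the agent or from an extra pipeline step. The following is a minimal sketch, assuming the usual SAS log convention that error lines start with ERROR: or ERROR nn-nnn:. The function name scan_sas_log is hypothetical.

```shell
# scan_sas_log <logfile>   (hypothetical helper)
# Prints every error line with its line number.
# Returns 0 if the log is clean, 1 if errors were found, 2 if the log is missing.
scan_sas_log() {
    local log="$1"

    if [ ! -f "$log" ]; then
        echo "Log not found: $log" >&2
        return 2
    fi

    # Matches "ERROR: ..." as well as numbered messages such as "ERROR 22-322: ...".
    if grep -n -E '^ERROR( [0-9]+-[0-9]+)?:' "$log"; then
        return 1
    fi

    echo "No ERROR lines in $log"
    return 0
}
```

For example, scan_sas_log /tmp/100_create_star_schema.log would list the error lines of the star schema stage, if any.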

Optional: check the star schema and the test results

With a well-designed automated test, you do not need to perform a visual check. However, let us assume that you need to validate the test.

- Open SAS Studio V on the SAS Viya machine, the Jenkins agent where the pipeline ran, for example: http://intviya01.race.sas.com/SASStudioV/

- Write a simple program:

      cas casauto;
      caslib _all_ assign;
      * target caslib;
      proc casutil incaslib="Public";
         list files;
         list tables;
      quit;

- Run the program.

- Check the log, or expand the target caslib where the CAS views and tables were created (Public in this example).

- The CAS view STAR_SCHEMA was created.

- STAR_SCHEMA_AGG comes from the functional test: a simple summary of STAR_SCHEMA.

- STAR_SCHEMA_TR also comes from the functional test: a transpose of STAR_SCHEMA_AGG.

- STAR_SCHEMA_TEST comes from the technical test on STAR_SCHEMA.

Clean-up stage

After validating the tests, you can add stages to remove the created objects. Insert them in the Jenkins file above, after the Technical Test stage and inside the stages block, that is, before the closing brace that precedes the post section:

- A stage to unload the CAS tables with a program.

- A stage to remove the physical files and the created logs.

    stage('Cleanup CAS tables') {
        steps {
            sh '/opt/sas/spre/home/SASFoundation/sas -autoexec "/opt/sas/viya/config/etc/workspaceserver/default/autoexec_deployment.sas" /opt/sas/devops/workspace/{userid}-PSGEL250-devops-applied-to-sas-viya-3.5/Data-Management/scripts/900_cleanup.sas -log /tmp/900_cleanup.log'
        }
    }
    stage('Cleanup files') {
        steps {
            sh '''
            rm -f /gelcontent/demo/DM/data/mailorder.csv
            rm -f /gelcontent/demo/DM/data/customers.csv
            rm -f /gelcontent/demo/DM/data/products.csv
            rm -f /gelcontent/demo/DM/data/catcode.csv
            rm -f /tmp/080_load_CAS.log
            rm -f /tmp/100_create_star_schema.log
            rm -f /tmp/200_functional_test.log
            rm -f /tmp/300_technical_test.log
            '''
        }
    }
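The file clean-up can also be written as a small shell function, which keeps the directories in one place and makes the list of files easier to extend. This is a minimal sketch under the same paths as the stage above. The function name cleanup_files and the variables DATA_DIR and LOG_DIR are hypothetical. Because rm -f does not complain about files that are already gone, the function can be run repeatedly. Like the stage above, it leaves /tmp/900_cleanup.log in place.

```shell
# Defaults taken from the clean-up stage; each can be overridden from the environment.
DATA_DIR="${DATA_DIR:-/gelcontent/demo/DM/data}"
LOG_DIR="${LOG_DIR:-/tmp}"

# cleanup_files   (hypothetical helper)
# Removes the copied source files and the logs of the four pipeline stages.
cleanup_files() {
    local f

    # Source files copied by the 'Copy source files' stage.
    for f in mailorder customers products catcode; do
        rm -f "$DATA_DIR/$f.csv"
    done

    # Logs written by the load, star schema and test stages.
    for f in 080_load_CAS 100_create_star_schema 200_functional_test 300_technical_test; do
        rm -f "$LOG_DIR/$f.log"
    done
}
```

Other files in the same directories are left untouched, since only the listed names are removed.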

Conclusions

We automated a simple end-to-end scenario with SAS Viya 3.5, Jenkins and Git (GitLab): load files into CAS, create a star schema as a CAS view, test the star schema, then clean up. A Jenkins pipeline was defined, with a SAS Viya machine as the agent. The Jenkins file containing the pipeline definition was stored in Git (GitLab), and so were the CAS programs. Finally, we ran the Jenkins pipeline, analyzed the results and visually confirmed them in CAS.

More will follow: how to work with parallel stages in Jenkins, how to surface detailed logs, and how to import SAS content such as SAS Data Studio plans or SAS Visual Analytics reports. Stay tuned.

Acknowledgements

Mark Thomas, Rob Collum, Stephen Foerster.

References

- DevOps Applied to SAS Viya 3.5: Run a SAS Program with a Jenkins Pipeline

- DevOps Applied to SAS Viya 3.5: Top Git Commands with Examples

- Jenkins pipeline syntax

- Jenkins documentation

Want to Learn More about Viya 3.5?

- Query Performance? Use a CAS Star Schema in Viya 3.5

- Automatic Data Loading with a CAS Data Source Star Schema in Viya 3.5

- Go with the Job Flow in SAS Viya 3.5

- How to Load Images in SAS Viya 3.5

- Improve Your Relationships: With a REST API in SAS Viya 3.5

Thank you for your time reading this post. Please comment and share your experience with Jenkins, Git and SAS Viya.
