An approach to SAS Portal in Viya: Implementing a backend server

In the previous article in this series, we saw how to create a landing page for all users. When building that page, we used static files stored on the file system, such as the HTML and JavaScript files. Static information is fine for a demo application, but it is more robust and dynamic to retrieve the KPI information and shortcuts from an API. Using an API decouples the user interface code from the data. This is good practice, especially if you want to adapt the content to a specific user group or change the data without redeploying the application.

In this article, we will see how it can be done using NodeJS with Express and MongoDB.

Other articles in the series are:

- An approach to SAS Portal in Viya: Introduction

- An approach to SAS Portal in Viya: Adding SAS Visual Analytics content

- An approach to SAS Portal in Viya: Adding SAS Content navigation

- An approach to SAS Portal in Viya: Navigating and displaying report

- An approach to SAS Portal in Viya: Adding an application bar and authenticating

- An approach to SAS Portal in Viya: Adding a chatbot to your portal page

- An approach to SAS Portal in Viya: A landing page for everyone

- An approach to SAS Portal in Viya: Implementing a backend server (this article)

The infrastructure

Before we dive into the code, it is important to understand the different components that will be used for the backend server. In this case, we will use NodeJS as the web server to host our backend application. To ease the development of the application, we will add a component called Express.

The Express package is widely used to build web applications, and it comes with interesting features like a router. A router comes in handy when building APIs because it associates the code to be executed with the URL, the method and the data passed to the API. Using Express reduces the number of lines we need to write when building our application, which keeps the code concise and easier to maintain. There are other options for building APIs besides NodeJS and Express, but as we started building the user interface in JavaScript, it is nice to continue using the same language. Other options are Flask (Python), Django (Python), Go, Ruby on Rails, and the many PHP frameworks available on the market. They all offer similar functionality but in different languages.

Besides the web server that will serve the data from our API, we need to store data. When it comes to data storage, we have, of course, the file system. Beyond that, two worlds exist, each with its benefits and disadvantages: SQL databases and NoSQL databases. You can find a lot of articles on the web about the benefits of each kind of data storage. I decided to use a NoSQL option, and more specifically MongoDB.

I chose MongoDB because it stores and returns data in a JSON-like format (more information is available on Wikipedia). This is quite handy in our case, as we use JavaScript in the frontend, in the backend, and in this case even to store the data. This also means that we are building a complete application using MERN (MongoDB, Express, React, NodeJS). If you look for information on the web, you will see that MERN and MEAN (the same as MERN but with Angular instead of React) are combinations that are often used when building full stack web applications.

To build the application, I decided to use a containerized version of MongoDB. It means that I installed Docker Desktop on my machine and started a MongoDB container using Docker Desktop. To get more information about the MongoDB image and how you can use it, please refer to its documentation. If you prefer to install MongoDB manually in your environment, that is also possible, or you can even use MongoDB Atlas, which offers a free subscription.

Building the project

To start the backend project, you need to have NodeJS installed (which should already be the case if you followed along with the other articles in the series).

Here are the steps to create the project:

- Create a new folder named: sasportal_backend

- In a terminal, navigate to the folder you just created

- In the terminal, execute the npm init command to initialize the project. When prompted, provide the information that is relevant to your project.

- In the terminal execute the following commands to install the different packages that will be used: cors, express, mongoose and nodemon:

- npm install cors

- npm install express

- npm install mongoose

- npm install nodemon --save-dev

The packages that you install have different purposes:

- Express is a minimal and flexible Node.js web application framework that provides a robust set of features for web and mobile applications.

- CORS is a node.js package for providing a Connect/Express middleware that can be used to enable CORS with diverse options.

- Mongoose is a MongoDB object modeling tool designed to work in an asynchronous environment. Mongoose supports both promises and callbacks.

- Nodemon is a tool that helps develop Node.js based applications by automatically restarting the node application when file changes in the directory are detected.

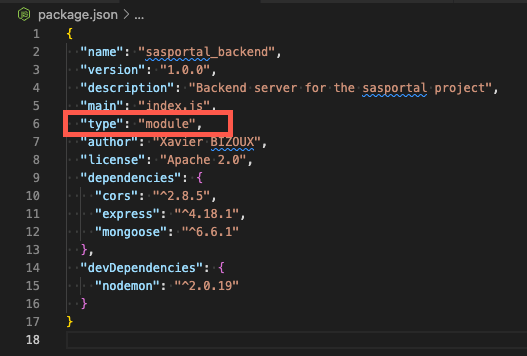

After execution, open the package.json file located in the root folder and add the type parameter with the value module.

Adding the type parameter and setting it to module tells NodeJS that imports should be done using ES6 modules and not the default CommonJS modules. NodeJS supports both approaches, but as React uses ES6 imports, I decided to use the same technique for the backend to be consistent. If you prefer to use CommonJS, that is also possible, but you need to adapt the code used in this article.

Now that you have installed all the components, it is time to create the folder structure of the application. You should create the following structure:

The code

Let me explain the structure of the application.

- The controllers folder contains the functions that will be executed to Create, Read, Update and Delete (CRUD) preferences and shortcuts.

- The models folder contains information about the data structure. The models use mongoose to define the data structure that will be stored in MongoDB.

- The last folder is the one for the routes. The routes make the wiring between the URL that is called and the functions that are executed.

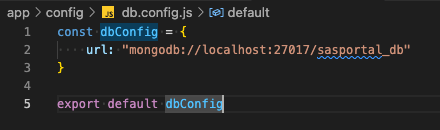

We will start with the db.config.js file. The file contains information about the MongoDB database.

By default, when you start the MongoDB container in Docker Desktop, the database can be reached on localhost:27017. This can be changed but it is good enough for our application. MongoDB and mongoose are flexible enough to create the database for you when needed.

This means that making sure that the Docker image is running is the only requirement. There is no other configuration needed.

You need to export the dbConfig object to be able to import it in other files.
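As a rough idea of what such a configuration can look like, here is a minimal sketch. The `url` property name and the `sasportal` database name are assumptions made for illustration; only the `dbConfig` object name and the `localhost:27017` address come from the text above.

```javascript
// Sketch of db.config.js. In the real file, prefix the declaration with
// `export` (ES module syntax) so that other files can import the object.
const dbConfig = {
  // Address of a MongoDB container that publishes the default port 27017.
  // "sasportal" is a hypothetical database name; MongoDB creates the
  // database on the first write, so nothing has to exist beforehand.
  url: "mongodb://localhost:27017/sasportal",
};

console.log(dbConfig.url);
```

Keeping the connection string in its own file means that a different host or database name only has to be changed in one place.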

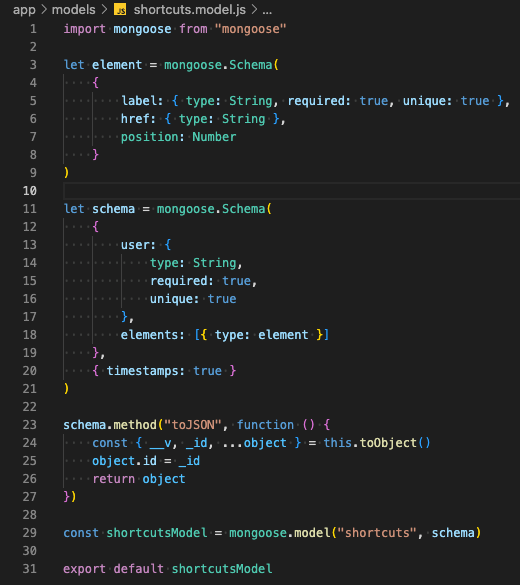

Now that we have defined the connection to the database, we should define the data structures for the two data collections: preferences and shortcuts.

Here is the code for the models:

The first step is to import mongoose. When done, the code creates an element schema which contains the label, the href and the position like we had in the SHORTCUTS.json file in the React application. The switch between static files and the data API should be as transparent as possible.

The schema variable contains information about the user and an array of elements which are in fact using the element schema as data structure.

The next piece of code is a little trick that you can use to display the id and not _id when exporting the data in JSON format.

The final steps are to create the model using the schema and export it.
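The id trick mentioned above boils down to a small object transformation. The sketch below shows that transformation on a plain object so it can be run without a database. The function name `toClientJson` is hypothetical, and the way it would be attached to a mongoose schema (shown in the comment) is one common approach, not necessarily the exact code used in this project.

```javascript
// Convert a stored document (as a plain object) into the shape sent to
// clients: drop the internal __v version key and expose _id under the name id.
function toClientJson(plainDoc) {
  const { __v, _id, ...rest } = plainDoc;
  return { ...rest, id: _id };
}

// With mongoose, the same transformation is typically attached to a schema:
// schema.method("toJSON", function () {
//   return toClientJson(this.toObject());
// });

// Example with a document shaped like the shortcuts data.
const stored = { _id: "64a1", __v: 0, user: "_default_", elements: [] };
console.log(toClientJson(stored));
```

Because the transformation happens when the document is serialized, the data stored in MongoDB still uses _id; only the JSON returned by the API changes.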

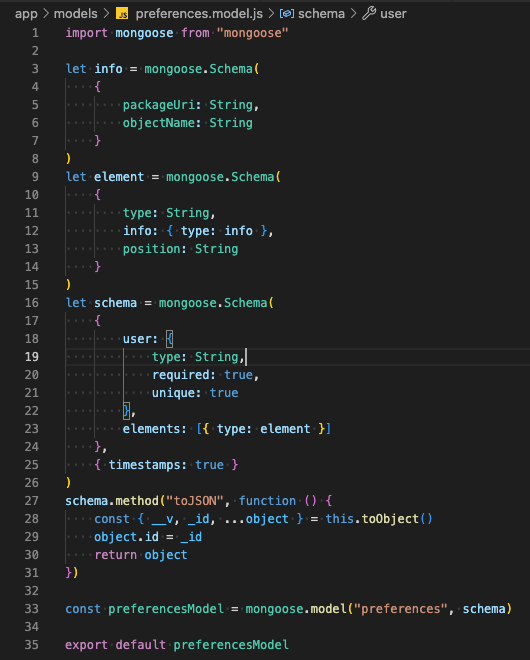

The same logic is applied for the preferences model. The data structure has just an extra layer with the info schema.

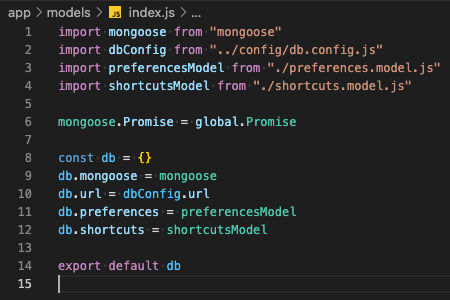

With the models in place, we need to make them available to other components. Therefore, the index.js will act as a wrapper for the database information. It imports the different modules and then creates a db object which can be used by other components.

Defining the database structure is the first step but the application needs to perform CRUD operations on the data. This is the role of the controllers. The two controllers will implement the following methods:

- create to create a new record

- findOne to retrieve information about one specific object

- update to update an existing record

- remove to delete an existing record

The client application will not implement user interfaces for all these functionalities but at least they are available, and they can be used to populate data.

As you can see in the code, the db object containing the models is imported and then used in the different methods. The fact that we are using mongoose eases the development as mongoose provides functions to perform the basic CRUD operations. The code that you must write is to check that the input has the needed information to execute the operation and to write the response that is sent back to the client application.

The same logic is applied to the shortcuts controller.
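To make the controller logic more concrete, here is a minimal sketch of the four methods. It is not the code from the repository: the factory function `makeController`, the lookup by a `user` URL parameter, and the error messages are assumptions. The model is passed in as an argument so that the same functions can serve both preferences and shortcuts; the model methods used (`create`, `findOne`, `findOneAndUpdate`, `findOneAndDelete`) are the ones mongoose models provide.

```javascript
// Build the four controller functions around an injected mongoose-style model.
function makeController(model) {
  // Shared helpers for the two error responses used below.
  const fail = (res, err) => res.status(500).send({ message: err.message });
  const notFound = (res) =>
    res.status(404).send({ message: "No record found for this user." });

  return {
    // Validate the input, then store a new record.
    async create(req, res) {
      if (!req.body || !req.body.user) {
        return res.status(400).send({ message: "The user property is required." });
      }
      try {
        const saved = await model.create(req.body);
        res.status(201).send(saved);
      } catch (err) {
        fail(res, err);
      }
    },

    // Return the record of one user, or 404 when there is none.
    async findOne(req, res) {
      try {
        const doc = await model.findOne({ user: req.params.user });
        if (!doc) return notFound(res);
        res.send(doc);
      } catch (err) {
        fail(res, err);
      }
    },

    // Apply the fields of the request body to the record of one user.
    async update(req, res) {
      try {
        const filter = { user: req.params.user };
        // { new: true } asks mongoose to return the updated document.
        const doc = await model.findOneAndUpdate(filter, req.body, { new: true });
        if (!doc) return notFound(res);
        res.send(doc);
      } catch (err) {
        fail(res, err);
      }
    },

    // Delete the record of one user.
    async remove(req, res) {
      try {
        const doc = await model.findOneAndDelete({ user: req.params.user });
        if (!doc) return notFound(res);
        res.send({ message: "Record deleted." });
      } catch (err) {
        fail(res, err);
      }
    },
  };
}
```

Note that `req.body` is only populated when the Express application parses JSON request bodies, for example with the `express.json()` middleware.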

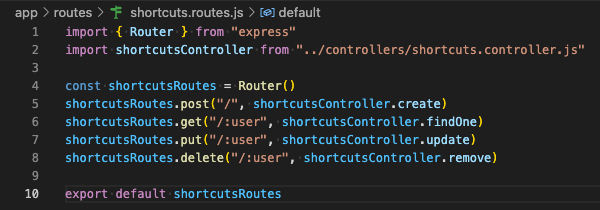

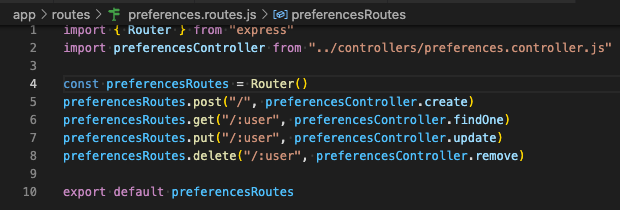

With the models, we have the data structure and with the controllers we have the actions which manipulate the data. The missing piece is the routing. Without the routes, the web server will not know which function should be executed in specific situations.

The routes are easy to build. You are mapping an HTTP verb (post, get, put or delete) to an action in the controller.

Here are the routes:

As you can see in the route's definition, we can pass URL parameters. These parameters are used in the controller to filter the data.
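The mapping between verbs, URL patterns and controller functions can be sketched as follows. The helper name `registerRoutes` and the `:user` parameter name are assumptions made for this example; the `post`, `get`, `put` and `delete` methods are those of an Express router, which would normally be created with `express.Router()`.

```javascript
// Wire HTTP verbs and URL patterns to controller functions.
// `router` is expected to be an Express router (express.Router()).
function registerRoutes(router, controller) {
  // Create a new record from the JSON body of the request.
  router.post("/", controller.create);
  // The :user segment becomes req.params.user in the controller.
  router.get("/:user", controller.findOne);
  router.put("/:user", controller.update);
  router.delete("/:user", controller.remove);
  return router;
}
```

Mounting the returned router under a prefix such as /api/preferences in server.js then makes a request like GET /api/preferences/_default_ reach `findOne` with `req.params.user` set to `_default_`.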

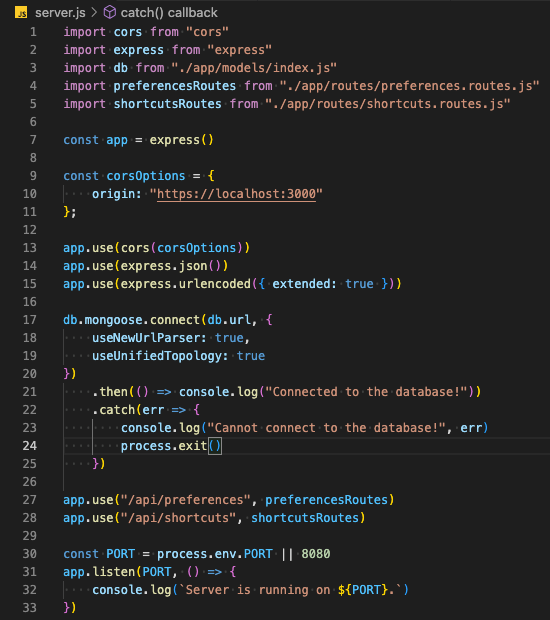

We are now close to a working backend server. We need to adapt the server.js file to use the routes and do some extra configuration for CORS for example.

Here is the content of the server.js file.

As you can see, there is no need to import the controllers or the models. They are hidden in the routes.

On line 9, we are setting the CORS options. Doing so allows browser requests to the server only from the https://localhost:3000 origin. This way, the sasportal client application can access it.

On line 17, we initiate the connection with the MongoDB database.

On lines 27 and 28, we tell the application that when a request is sent to "/api/preferences" or "/api/shortcuts", it should use the respective routes to handle the request.

The last piece of code defines the port on which the web server will be listening for requests.

After saving the files, you can start the web server using the following command from a terminal: nodemon start

The server will start, and the following messages will be seen in the terminal:

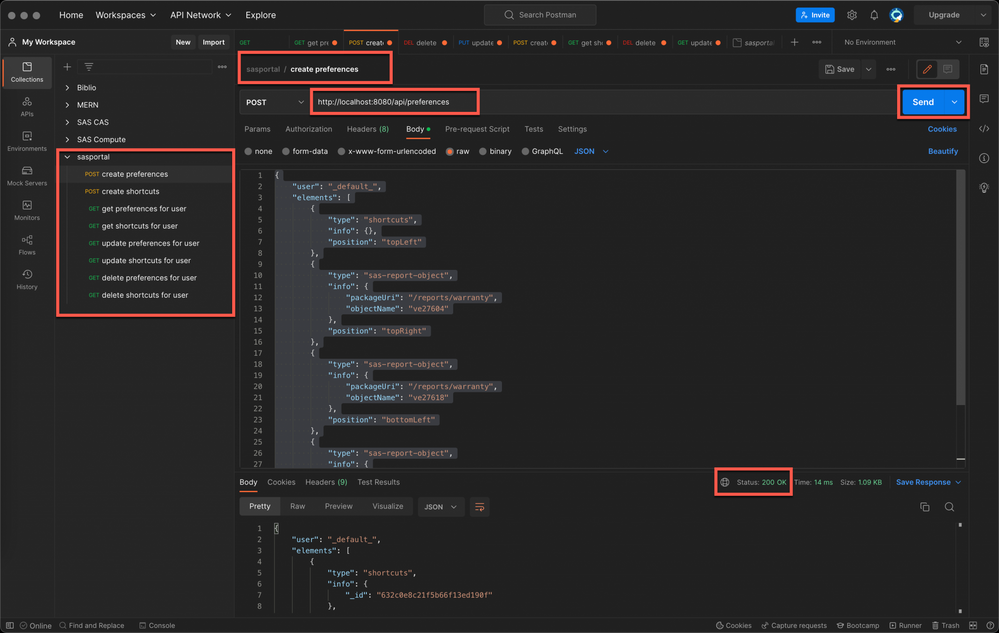

You can now test the application using curl commands or using Postman. If you are using Postman, you can find in the Git repository a file named sasportal.postman_collection.json under the test folder. You can import that file into your Postman installation. After importing the file, the collection will appear in Postman and the queries can be executed.

While testing the API, you will also load data into MongoDB. The data will be used when integrating with the portal application. If you followed along with the articles in the series, you may use other report objects or shortcuts. You may need to adapt the JSON data in Postman to match your specific configuration.
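If you prefer to load data from a small script instead of curl or Postman, the request can be sketched in JavaScript. Everything specific here is an assumption: the helper name `buildCreateRequest`, the idea that a POST to the collection URL creates a record, and the sample shortcut itself. The port 8080 and the label, href and position fields follow what is described elsewhere in this article.

```javascript
// Build the URL and fetch options for creating a record through the API.
function buildCreateRequest(resource, record, baseUrl = "http://localhost:8080") {
  return {
    url: `${baseUrl}/api/${resource}`,
    options: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify(record),
    },
  };
}

// Hypothetical sample data, shaped like the entries of SHORTCUTS.json.
const sample = {
  user: "_default_",
  elements: [{ label: "SAS Drive", href: "/SASDrive", position: 1 }],
};

const { url, options } = buildCreateRequest("shortcuts", sample);
console.log(options.method, url);
// With the backend running, the request would be sent with:
// const response = await fetch(url, options);
```

Separating the construction of the request from the call to `fetch` makes it easy to inspect what will be sent before any data reaches MongoDB.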

Adapting the portal frontend

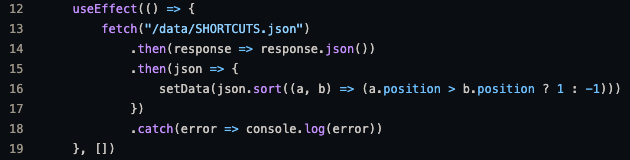

As we have seen earlier, the data that we have loaded in MongoDB are equivalent to the data that were used in the portal application and stored under the /public/data/ folder. It means that if we now want to use the data stored in MongoDB, we should adapt two components: LandingPage.js and Shortcuts.js. You don't need to rewrite the complete components to use the API; you only adapt the way the data is retrieved.

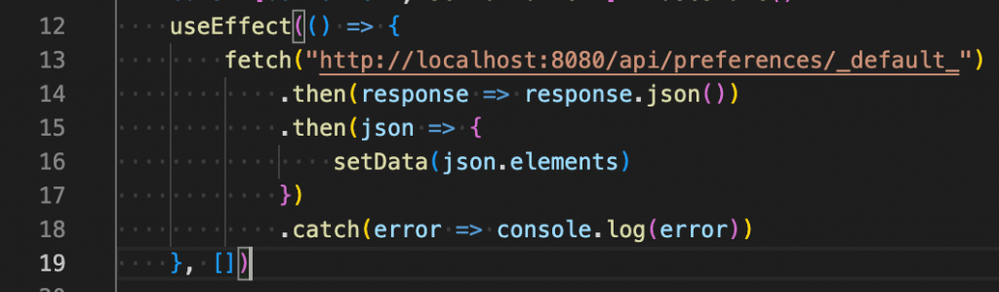

The original function in the LandingPage.js is:

The adapted function is:

As you see, we are accessing the data from http://localhost:8080/api/preferences/_default_ where the API is running. We are retrieving data for the _default_ user. Another difference with the original code is that we need to retrieve the elements property.

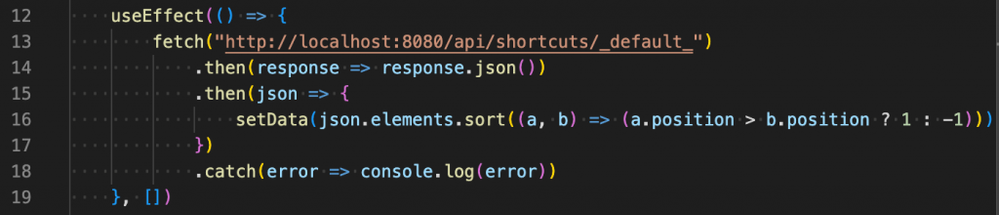

The same kind of modifications should be made in Shortcuts.js.

The adapted function is:

The same changes were made to the URL but also to retrieve the elements property.
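The change in both components follows the same pattern, which can be sketched with one small helper. The function name `loadElements` and its parameters are hypothetical and are not taken from the portal code; the URL layout, the `_default_` user and the `elements` property are the ones described above.

```javascript
// Fetch the stored record for one user and return its elements array.
// fetchImpl defaults to the global fetch and can be replaced in tests.
async function loadElements(resource, user = "_default_", fetchImpl = fetch) {
  const response = await fetchImpl(`http://localhost:8080/api/${resource}/${user}`);
  if (!response.ok) {
    throw new Error(`Request for ${resource} failed with status ${response.status}`);
  }
  const data = await response.json();
  // The API returns the whole record; the components only need the elements.
  return data.elements;
}
```

In a component, a call such as `loadElements("shortcuts")` would then take the place of the request for the static JSON file, and the returned array could be stored in the component state exactly as before.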

After these changes, the application works the same way as before, but the data are now retrieved from an API that dynamically collects data from MongoDB instead of static data stored in the client application.

Conclusion

As we have seen in this article, you can easily create a backend application which uses MongoDB to store data. Using this basic application, you can add more models, more controllers, and more routes to the application to suit your needs. This change is transparent for the end-users, but it provides more flexibility when developing your application.

The backend and frontend are now completely decoupled. It also means that you can switch to another backend technology without any impact on the frontend. The application that we have created uses a simplistic configuration. You can of course change the user and password that are used in MongoDB, configure an authentication mechanism for the backend server, or define specific authorizations. All these considerations are left out of this application for one good reason: when the complete application is deployed in Kubernetes, MongoDB and the backend application will only be accessible from within the cluster, and only the frontend will be accessible from the outside world. It means that unless an application is deployed in the same cluster, there will be no way to access the API or the database. But we will see that in the next article in the series.

Find more articles from SAS Global Enablement and Learning here.
