- Home

- /

- Programming

- /

- Programming

- /

- How do I import a .json file with headers designation

- RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

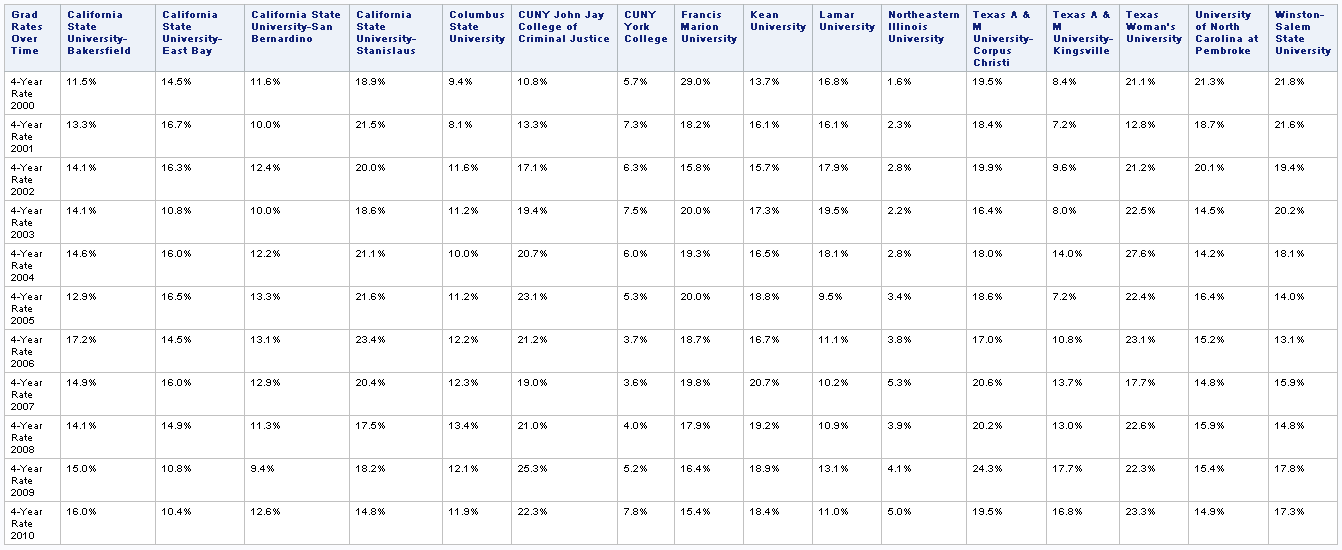

I have a .json file I need to read into sas. This file has a row with headers and then has the rows of data listed below. Can anyone assist in what the sas code to import this data correctly would look like?

{"headers":

[["Grad Rates Over Time","4-Year Rate 2000","4-Year Rate 2001","4-Year Rate 2002","4-Year Rate 2003","4-Year Rate 2004","4-Year Rate 2005","4-Year Rate 2006","4-Year Rate 2007","4-Year Rate 2008","4-Year Rate 2009","4-Year Rate 2010"]],

"rows":

[["California State University-Bakersfield","11.5%","13.3%","14.1%","14.1%","14.6%","12.9%","17.2%","14.9%","14.1%","15.0%","16.0%"],

["California State University-East Bay","14.5%","16.7%","16.3%","10.8%","16.0%","16.5%","14.5%","16.0%","14.9%","10.8%","10.4%"],

["California State University-San Bernardino","11.6%","10.0%","12.4%","10.0%","12.2%","13.3%","13.1%","12.9%","11.3%","9.4%","12.6%"],["California State University-Stanislaus","18.9%","21.5%","20.0%","18.6%","21.1%","21.6%","23.4%","20.4%","17.5%","18.2%","14.8%"],["Columbus State University","9.4%","8.1%","11.6%","11.2%","10.0%","11.2%","12.2%","12.3%","13.4%","12.1%","11.9%"],["CUNY John Jay College of Criminal Justice","10.8%","13.3%","17.1%","19.4%","20.7%","23.1%","21.2%","19.0%","21.0%","25.3%","22.3%"],["CUNY York College","5.7%","7.3%","6.3%","7.5%","6.0%","5.3%","3.7%","3.6%","4.0%","5.2%","7.8%"],["Francis Marion University","29.0%","18.2%","15.8%","20.0%","19.3%","20.0%","18.7%","19.8%","17.9%","16.4%","15.4%"],["Kean University","13.7%","16.1%","15.7%","17.3%","16.5%","18.8%","16.7%","20.7%","19.2%","18.9%","18.4%"],["Lamar University","16.8%","16.1%","17.9%","19.5%","18.1%","9.5%","11.1%","10.2%","10.9%","13.1%","11.0%"],["Northeastern Illinois University","1.6%","2.3%","2.8%","2.2%","2.8%","3.4%","3.8%","5.3%","3.9%","4.1%","5.0%"],["Texas A & M University-Corpus Christi","19.5%","18.4%","19.9%","16.4%","18.0%","18.6%","17.0%","20.6%","20.2%","24.3%","19.5%"],["Texas A & M University-Kingsville","8.4%","7.2%","9.6%","8.0%","14.0%","7.2%","10.8%","13.7%","13.0%","17.7%","16.8%"],["Texas Woman's University","21.1%","12.8%","21.2%","22.5%","27.6%","22.4%","23.1%","17.7%","22.6%","22.3%","23.3%"],["University of North Carolina at Pembroke","21.3%","18.7%","20.1%","14.5%","14.2%","16.4%","15.2%","14.8%","15.9%","15.4%","14.9%"],["Winston-Salem State University","21.8%","21.6%","19.4%","20.2%","18.1%","14.0%","13.1%","15.9%","14.8%","17.8%","17.3%"]]}

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

There is a JSON Package in PROC DS2 . But for me not familiar with it , I would like to use data step:

data have;

infile '/folders/myfolders/have.json' recfm=n dsd dlm='[]{},:';

input temp : $100. @@;

if missing(temp) then group+1;

if findw(temp,'headers') or

find(temp,'"rows"') or

prxmatch('/^\s/',temp) then delete;

run;

proc transpose data=have out=temp(drop=_name_ group rename=(col1=label));

by group;

var temp;

run;

proc transpose data=temp out=want(drop=_name_);

var col:;

id label;

idlabel label;

run;

proc print noobs label;run;

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

@Ksharp Your variable names are truncated to 32.

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Chris,

That doesn't matter as long as you got that label and make sure variable name is unique .

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

I would argue it matters to have the name right rather than the label in the vast majority of cases, but to each their own. 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Chris,

If you want make variable name looks better , just change the ID variable.

data have;

infile '/folders/myfolders/have.json' recfm=n dsd dlm='[]{},:';

input temp : $100. @@;

if missing(temp) then group+1;

if prxmatch('/^\s|^headers/',temp) then delete;

run;

proc transpose data=have out=temp(drop=_name_ rename=(col1=label));

by group;

var temp;

run;

proc transpose data=temp out=want(drop=_name_);

var col:;

id group;

idlabel label;

run;

proc print noobs label;run;- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Like this?

data _null_;

file "%sysfunc(pathname(WORK))\t.txt" lrecl=32000;

infile cards pad truncover;

input X $32000.;

L=length(X);

put X $varying32000. L;

cards;

{"headers":

[["Grad Rates Over Time","4-Year Rate 2000","4-Year Rate 2001","4-Year Rate 2002","4-Year Rate 2003","4-Year Rate 2004","4-Year Rate 2005","4-Year Rate 2006","4-Year Rate 2007","4-Year Rate 2008","4-Year Rate 2009","4-Year Rate 2010"]],

"rows":

[["California State University-Bakersfield","11.5%","13.3%","14.1%","14.1%","14.6%","12.9%","17.2%","14.9%","14.1%","15.0%","16.0%"],

["California State University-East Bay","14.5%","16.7%","16.3%","10.8%","16.0%","16.5%","14.5%","16.0%","14.9%","10.8%","10.4%"],

["California State University-San Bernardino","11.6%","10.0%","12.4%","10.0%","12.2%","13.3%","13.1%","12.9%","11.3%","9.4%","12.6%"],["California State University-Stanislaus","18.9%","21.5%","20.0%","18.6%","21.1%","21.6%","23.4%","20.4%","17.5%","18.2%","14.8%"],["Columbus State University","9.4%","8.1%","11.6%","11.2%","10.0%","11.2%","12.2%","12.3%","13.4%","12.1%","11.9%"],["CUNY John Jay College of Criminal Justice","10.8%","13.3%","17.1%","19.4%","20.7%","23.1%","21.2%","19.0%","21.0%","25.3%","22.3%"],["CUNY York College","5.7%","7.3%","6.3%","7.5%","6.0%","5.3%","3.7%","3.6%","4.0%","5.2%","7.8%"],["Francis Marion University","29.0%","18.2%","15.8%","20.0%","19.3%","20.0%","18.7%","19.8%","17.9%","16.4%","15.4%"],["Kean University","13.7%","16.1%","15.7%","17.3%","16.5%","18.8%","16.7%","20.7%","19.2%","18.9%","18.4%"],["Lamar University","16.8%","16.1%","17.9%","19.5%","18.1%","9.5%","11.1%","10.2%","10.9%","13.1%","11.0%"],["Northeastern Illinois University","1.6%","2.3%","2.8%","2.2%","2.8%","3.4%","3.8%","5.3%","3.9%","4.1%","5.0%"],["Texas A & M University-Corpus Christi","19.5%","18.4%","19.9%","16.4%","18.0%","18.6%","17.0%","20.6%","20.2%","24.3%","19.5%"],["Texas A & M University-Kingsville","8.4%","7.2%","9.6%","8.0%","14.0%","7.2%","10.8%","13.7%","13.0%","17.7%","16.8%"],["Texas Woman's University","21.1%","12.8%","21.2%","22.5%","27.6%","22.4%","23.1%","17.7%","22.6%","22.3%","23.3%"],["University of North Carolina at Pembroke","21.3%","18.7%","20.1%","14.5%","14.2%","16.4%","15.2%","14.8%","15.9%","15.4%","14.9%"],["Winston-Salem State University","21.8%","21.6%","19.4%","20.2%","18.1%","14.0%","13.1%","15.9%","14.8%","17.8%","17.3%"]]}

run;

data _null_;

infile "%sysfunc(pathname(WORK))\t.txt" lrecl=32000 firstobs=2 pad truncover;

input X $32000.;

X=tranwrd(X,'",','"n ');

X=tranwrd(X,'"]','"n ');

X=compress(X,'[],');

call symputx('names',X);

stop;

run;

data WANT;

infile "%sysfunc(pathname(WORK))\t.txt" lrecl=32000 firstobs=4 dlm='"},' pad truncover termstr=']';

length &names. $64;

input @'[' &names. ;

putlog _N_= (&names)(=);

if lengthn(cats(of &names.));

run;

proc print noobs;

run;

| Grad Rates Over Time | 4-Year Rate 2000 |

4-Year Rate 2001 |

4-Year Rate 2002 |

4-Year Rate 2003 |

4-Year Rate 2004 |

4-Year Rate 2005 |

4-Year Rate 2006 |

4-Year Rate 2007 |

4-Year Rate 2008 |

4-Year Rate 2009 |

4-Year Rate 2010 |

|---|---|---|---|---|---|---|---|---|---|---|---|

| California State University-East Bay | 14.5% | 16.7% | 16.3% | 10.8% | 16.0% | 16.5% | 14.5% | 16.0% | 14.9% | 10.8% | 10.4% |

| California State University-San Bernardino | 11.6% | 10.0% | 12.4% | 10.0% | 12.2% | 13.3% | 13.1% | 12.9% | 11.3% | 9.4% | 12.6% |

| California State University-Stanislaus | 18.9% | 21.5% | 20.0% | 18.6% | 21.1% | 21.6% | 23.4% | 20.4% | 17.5% | 18.2% | 14.8% |

| Columbus State University | 9.4% | 8.1% | 11.6% | 11.2% | 10.0% | 11.2% | 12.2% | 12.3% | 13.4% | 12.1% | 11.9% |

| CUNY John Jay College of Criminal Justice | 10.8% | 13.3% | 17.1% | 19.4% | 20.7% | 23.1% | 21.2% | 19.0% | 21.0% | 25.3% | 22.3% |

| CUNY York College | 5.7% | 7.3% | 6.3% | 7.5% | 6.0% | 5.3% | 3.7% | 3.6% | 4.0% | 5.2% | 7.8% |

| Francis Marion University | 29.0% | 18.2% | 15.8% | 20.0% | 19.3% | 20.0% | 18.7% | 19.8% | 17.9% | 16.4% | 15.4% |

| Kean University | 13.7% | 16.1% | 15.7% | 17.3% | 16.5% | 18.8% | 16.7% | 20.7% | 19.2% | 18.9% | 18.4% |

| Lamar University | 16.8% | 16.1% | 17.9% | 19.5% | 18.1% | 9.5% | 11.1% | 10.2% | 10.9% | 13.1% | 11.0% |

| Northeastern Illinois University | 1.6% | 2.3% | 2.8% | 2.2% | 2.8% | 3.4% | 3.8% | 5.3% | 3.9% | 4.1% | 5.0% |

| Texas A & M University-Corpus Christi | 19.5% | 18.4% | 19.9% | 16.4% | 18.0% | 18.6% | 17.0% | 20.6% | 20.2% | 24.3% | 19.5% |

| Texas A & M University-Kingsville | 8.4% | 7.2% | 9.6% | 8.0% | 14.0% | 7.2% | 10.8% | 13.7% | 13.0% | 17.7% | 16.8% |

| Texas Woman's University | 21.1% | 12.8% | 21.2% | 22.5% | 27.6% | 22.4% | 23.1% | 17.7% | 22.6% | 22.3% | 23.3% |

| University of North Carolina at Pembroke | 21.3% | 18.7% | 20.1% | 14.5% | 14.2% | 16.4% | 15.2% | 14.8% | 15.9% | 15.4% | 14.9% |

| Winston-Salem State University | 21.8% | 21.6% | 19.4% | 20.2% | 18.1% | 14.0% | 13.1% | 15.9% | 14.8% | 17.8% | 17.3% |

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Proc JSON is only for writing json files. For importing a small (<32k) json file you could transform it into a CSV file first, like this:

/* Temporary file to hold CSV copy of data */

filename dum temp;

/* Extract headers and data rows from json file */

data _null_;

length str $32767 headers row $1000;

if not prx1 then prx1 + prxparse('/"headers":\s*\[\[(.*?)\]\]/i');

if not prx2 then prx2 + prxparse('/"rows":\s*\[(\[.*?\])\]/i');

if not prx3 then prx3 + prxparse('/\[.*?\]/');

/* Load entire file into a single string */

infile "&sasforum.\Datasets\JSON example.txt" end=done;

do until(done);

input;

str = catx(" ", str, _infile_);

end;

/* Get and copy headers */

file dum;

if prxMatch(prx1, str) then do;

headers = prxPosn(prx1, 1, str);

put headers;

end;

/* Get and copy each row */

if prxMatch(prx2, str) then do;

call prxPosn(prx2, 1, pos, len);

start = pos;

stop = pos + len - 1;

call prxNext(prx3, start, stop, str, pos, len);

do while(pos > 0);

row = substr(str, pos+1, len-2);

row = prxChange('s/"([0-9.%-]+?)"/\1/', -1, row);

put row;

call prxNext(prx3, start, stop, str, pos, len);

end;

end;

stop;

run;

/* Import temp file as a CSV file with headers */

proc import datafile="dum" out=test dbms=csv replace;

guessingrows=max;

run;Note that proc import doesn't like the percent character in numeric fields. Numeric fields without percent signs would be imported properly as numeric variables.

Catch up on SAS Innovate 2026

Nearly 200 sessions are now available on demand with the SAS Innovate Digital Pass.

Explore Now →Learn how use the CAT functions in SAS to join values from multiple variables into a single value.

Find more tutorials on the SAS Users YouTube channel.

SAS Training: Just a Click Away

Ready to level-up your skills? Choose your own adventure.