DevOps Applied to SAS 9: SAS DI jobs, the Git Plug-in, GitLab and Jenkins

Automation is key in the DevOps philosophy. If you plan to cross the "Automation Valley" to Continuous Integration (CI) land with SAS Data Integration (DI), then the Git plug-in, a Git repository and Jenkins will help you get there faster. The SAS Data Integration Studio Git plug-in manages DI jobs as archives (.spk). It also handles the Git commands (pull, stage, commit, push, etc.) needed to work with a remote repository.

But from an archived job to Continuous Integration, there is a valley to cross. Let's call it the "Automation Valley".

And to cross that valley, you need to build a bridge. In Continuous Integration land, the bridge must be built on the fly.

The bridge must be made of archived jobs, scripts to import archives, and scripts to create, deploy and run the jobs.

Jenkins builds the bridge with these materials. The building plan is the Jenkins pipeline script.

When you combine SAS DI with the Git plug-in, a Git repository such as GitLab, and an automation server such as Jenkins, you can reach Continuous Integration land.

This post builds on the foundations laid in DevOps Applied to SAS 9: SAS Code, GitLab and Jenkins and SAS DI Developers: Unite! The new GIT plug-in in Data Integration Studio.

Pre-requisites

The following example assumes a SAS 9.4M6 platform on Windows. You will need:

SAS Data Integration Git plug-In

Please note, the Git plug-in is only available as of SAS Data Integration Studio version 4.904.

If you have not set up the Git plug-in in your environment, see the second part of the post SAS DI Developers: Unite! The new GIT plug-in in Data Integration Studio.

From the section Pre-Requisites using the Git Plug-In in that post, complete steps 1, 2, 4 and 5, and skip step 3. Git on Windows is needed and is part of step 4.

GitLab (or GitHub) repository

In the above post, in step 3, a GitHub repository was used. The next example assumes a GitLab repository.

GitLab is a web-based DevOps lifecycle tool, using Git technology. You need to set up (or have access to) a GitLab repository:

The GitLab repository has to be accessible from the SAS 9.4M6 Windows machine. (In my example, it is hosted at https://<server>/gatedemo025/psgel250-devops-applied-to-sas-94m6.git.)

Jenkins

Jenkins is the automation server. You need to create a Jenkins pipeline, write a pipeline script, and configure the Windows machine where SAS 9.4M6 is running as a Jenkins agent.

Steps

Create folders

On the Windows machine where SAS 9.4 M6 is installed:

- Create a first folder, for the local git repository: C:\temp\DevOps\

- Create a second folder, for the execution results C:\temp\DevOps\batch\
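If you prefer to script this step, the two folders can be created in one call. The following is a minimal sketch that assumes Python is available on the Windows machine (the post itself does not require it); the function name `create_devops_folders` is hypothetical.

```python
from pathlib import Path


def create_devops_folders(base: Path) -> list[Path]:
    """Create the local Git repository folder and its batch subfolder."""
    batch = base / "batch"
    # parents=True also creates the base folder; exist_ok=True makes reruns harmless.
    batch.mkdir(parents=True, exist_ok=True)
    return [base, batch]
```

On the SAS machine you would call it as `create_devops_folders(Path(r"C:\temp\DevOps"))`.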

Configure the SAS Data Integration Git Plug-in

In SAS Data Integration, from Tools > Options, configure the Git Plug-in:

- Git Repository URL: your GitLab project, e.g., https://<server>/gatedemo025/psgel250-devops-applied-to-sas-94m6.git.

- Location of the Git repository: the folder you created above, C:\temp\DevOps\

- Connection type: depends on how you access your repository (https, http or ssh).

- Initialize the repository: this button clones the GitLab repository locally. By default, the Git plug-in clones into <folder>\gitPlugin\gitRepo\ .

Create a job

Create a new SAS Data Integration job, called List_Job (in my example, it is stored in the /Data Governance/Jobs folder). Add a User Written Code transformation, called List SAS.

In the Code tab, add:

proc printto print="C:\temp\DevOps\batch\SAScodeoutput.txt" new; run;

proc print data=sashelp.birthwgt (obs=10); run;

proc printto; run; quit;

Save the transformation and the job.

Use the Git plug-in to archive the job

Right-click the job and select Archive as a SAS package. Archive transforms the DI job into a SAS package (.spk), then stages, commits and pushes the changes to the remote repository.

By default, the Git plug-in archives the job in the root of the Git repository.

Create a folder for the deployed jobs

To run a SAS DI job in a pipeline, it needs to be deployed first.

Right-click the job List_Job > Scheduling > Deploy. Create a New Deployment Directory, call it JobsDeployed, and map it to the C:\temp\DevOps\batch folder.

When you are done, you can delete List_Job from the SAS Data Integration folder. The pipeline will import it again from the .spk.

Create a Jenkins pipeline

Optionally, set up a webhook on your GitLab repository. The advantage is that, whenever new code is pushed to the remote repository, Jenkins triggers a new build of the pipeline.

Instruct your pipeline to use the pipeline script in the GitLab repository. You need the repository URL and credentials to access the remote repository.

Need more details about the pipeline definition? See DevOps Applied to SAS Viya 3.5: Run a SAS Program with a Jenkins Pipeline (The Jenkins Pipeline paragraph).

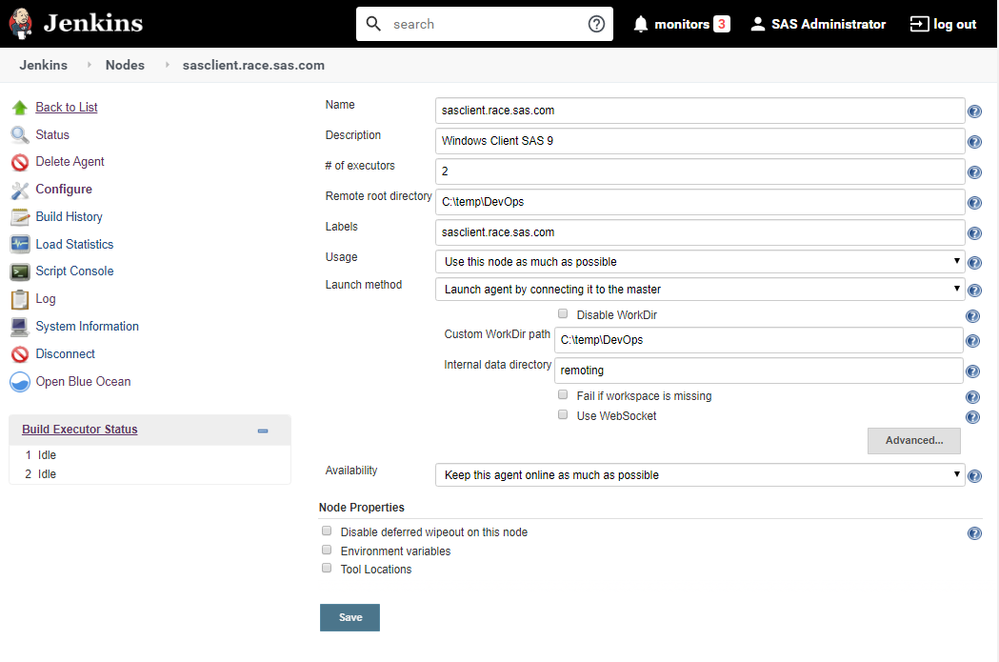

Configure a Jenkins agent

You then need to allow Jenkins to drive the DevOps pipelines on the SAS 9.4 M6 Windows machine. You must set up a Jenkins node, or agent.

On the designated Windows machine, Jenkins will install a node service that allows communication back with the controller.

The agent setup can be tricky, especially the first time. Search online for "setup a Jenkins Windows agent". There are some excellent videos out there.

The agent must be online before Jenkins can run anything on the SAS Windows machine.

Create a Jenkinsfile pipeline script

You can create the Jenkinsfile directly in the GitLab repository, in the “Data-Management” folder (you specified the location when you created the pipeline).

    pipeline {
        // Run every stage on the Windows machine where SAS 9.4M6 is installed
        agent { label 'sasclient.race.sas.com'}
        stages {
            stage('List Environment Variables') {
                steps {
                    echo "Running ${env.JOB_NAME} in ${env.WORKSPACE} on ${env.JENKINS_URL}"
                }
            }
            stage('Import the SAS DI job from the .spk') {
                environment {
                    UserCredentials = credentials('SAS9Administrator')
                }
                steps {
                    // Bind the Jenkins credentials to SAS9Admin and SAS9AdminPass (masked in the console output)
                    withCredentials([usernamePassword(credentialsId: 'SAS9Administrator', passwordVariable: 'SAS9AdminPass', usernameVariable: 'SAS9Admin')]) {
                        bat '''
                        echo "Importing job in SAS DI with Masked Credentials"
                        @echo on
                        echo "Passing credentials: user" %SAS9Admin%
                        echo "Passing credentials: pass" %SAS9AdminPass%
                        "C:\\Program Files\\SASHome\\SASPlatformObjectFramework\\9.4\\ImportPackage" -host "sasclient.race.sas.com" -port 8561 -user %SAS9Admin% -password %SAS9AdminPass% -package List_Job.spk -target "/Data Governance/Jobs" -log "C:\\temp\\DevOps\\gitPlugin\\gitRepo\\JobImport.log"
                        '''
                    }
                }
            }
            stage('Deploy the SAS DI job') {
                steps {
                    // Same credentials binding as in the import stage
                    withCredentials([usernamePassword(credentialsId: 'SAS9Administrator', passwordVariable: 'SAS9AdminPass', usernameVariable: 'SAS9Admin')]) {
                        bat '''
                        echo "Deploying the SAS DI job as SAS 9.4 code"
                        @echo on
                        echo "Passing credentials: user" %SAS9Admin%
                        echo "Passing credentials: pass" %SAS9AdminPass%
                        "C:\\Program Files\\SASHome\\SASDataIntegrationStudioServerJARs\\4.8\\DeployJobs.exe" -host "sasclient.race.sas.com" -port 8561 -user %SAS9Admin% -password %SAS9AdminPass% -deploytype deploy -objects "/Data Governance/Jobs/List_Job" -sourcedir "C:\\temp\\DevOps\\batch" -deploymentdir "C:\\temp\\DevOps\\batch" -metarepository Foundation -metaserverid A5CTTMUV.AT000002 -servermachine "sasclient.race.sas.com" -serverport 8591 -serverusername "sasdemo" -serverpassword %SAS9AdminPass% -batchserver "SASApp - SAS DATA Step Batch Server" -folder "JobsDeployed" -log "C:\\temp\\DevOps\\gitPlugin\\gitRepo\\JobDeployment.log"
                        '''
                    }
                }
            }
            stage('Run the deployed SAS DI job') {
                steps {
                    // Run the deployed program in batch, then print its log and output
                    bat '''
                    echo "Running SAS 9.4 code on Windows client"
                    "C:\\Program Files\\SASHome\\SASFoundation\\9.4\\Sas.exe" -sysin C:\\temp\\DevOps\\batch\\List_Job.sas -log C:\\temp\\DevOps\\batch\\List_Job.log -nosplash -nologo -noicon
                    type C:\\temp\\DevOps\\batch\\List_Job.log
                    type C:\\temp\\DevOps\\batch\\SAScodeoutput.txt
                    '''
                }
            }
        }
        post {
            success {
                echo 'Imported and ran a SAS DI job on a Windows client'
            }
        }
    }

The pipeline syntax explained:

- Instruct the pipeline to run on the Windows machine (now Jenkins agent) where SAS 9.4M6 is installed: agent { label 'sasclient.race.sas.com'}

- List Environment Variables: prints the job name, the workspace path and the Jenkins URL. The workspace sits under the agent's ‘Remote root directory’. This is where Jenkins pulls the code from the GitLab repository at build time. It corresponds to C:\temp\DevOps\workspace\<Jenkins-pipeline-name>.

- Inside the bat ''' ... ''' strings, the backslash is an escape character, so you must use ‘\\’ instead of ‘\’ when referring to folders on Windows.

- Import the SAS DI job from the .spk imports the DI job from the .spk generated in DI via 'Archive as a SAS package' operation.

- UserCredentials = credentials('SAS9Administrator') makes the SAS Administrator credentials available to the stage. These are defined in Jenkins credentials. In the stage, withCredentials binds the user name and password to two environment variables, SAS9Admin and SAS9AdminPass, and their values are masked in the console output.

- Deploy the SAS DI job: DevOps is about scripting and batch execution. To run a DI job, the job must be deployed first. The deployment script transforms metadata (the SAS DI job) into SAS code. The deployed code stays on the Windows machine, in the JobsDeployed folder you defined. I used this SAS paper and the SAS documentation to create the job deployment script.

- Run the deployed SAS DI job: the SAS code is then executed in batch. The syntax to run the SAS program in batch is: "C:\\Program Files\\SASHome\\SASFoundation\\9.4\\Sas.exe" -sysin C:\\temp\\DevOps\\batch\\List_Job.sas

- The execution log is captured in: -log C:\\temp\\DevOps\\batch\\List_Job.log -nosplash -nologo -noicon

- The log is displayed: type C:\\temp\\DevOps\\batch\\List_Job.log

- The SAS program output is printed: type C:\\temp\\DevOps\\batch\\SAScodeoutput.txt (the output file was defined in the User Written Code transformation).
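To make the structure of the import command easier to see, here is a minimal Python sketch that assembles the same ImportPackage call as an argument list. It reads the user and password from the SAS9Admin and SAS9AdminPass environment variables bound by withCredentials, and uses only the options that appear in the pipeline script. The function name `build_import_command` is hypothetical, and running Python on the agent is an assumption, not part of the original setup.

```python
import os

# Path taken from the pipeline script above.
IMPORT_TOOL = r"C:\Program Files\SASHome\SASPlatformObjectFramework\9.4\ImportPackage"


def build_import_command(package, target, log,
                         host="sasclient.race.sas.com", port=8561, env=None):
    """Return the ImportPackage call as a list of arguments.

    The credentials come from the environment (SAS9Admin / SAS9AdminPass),
    so they never appear in the script itself.
    """
    env = os.environ if env is None else env
    return [
        IMPORT_TOOL,
        "-host", host,
        "-port", str(port),
        "-user", env["SAS9Admin"],
        "-password", env["SAS9AdminPass"],
        "-package", package,
        "-target", target,
        "-log", log,
    ]
```

Passing the list to `subprocess.run(cmd, check=True)` would run the import and raise an exception on a non-zero exit code. An argument list also avoids quoting problems with paths that contain spaces, such as "/Data Governance/Jobs".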

The Jenkins Build Result

If you have set up a webhook, the pipeline runs after each push to GitLab. Look at each stage result:

Environment variables: Jenkins pulls the code from GitLab into a folder on the Windows machine.

Import the SAS DI job from the .spk:

Deploy the SAS DI job:

Run the deployed SAS DI job:

There are no tests in the pipeline, just to keep the post short, but it is best practice to design and include them. With testing, the "bridge" is complete and fit for purpose.
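As an illustration of what a first test could look like, the sketch below scans the SAS log produced by the run stage and reports a failure if it contains error lines. It assumes Python is available on the agent and relies on SAS writing errors as log lines that start with "ERROR:" or "ERROR <n>-<n>:". The function names are hypothetical, and a real test suite would check the job's results as well.

```python
import re
from pathlib import Path

# SAS error lines start with "ERROR:" or with a numbered form such as "ERROR 180-322:".
ERROR_LINE = re.compile(r"^ERROR(:| \d+-\d+:)")


def find_sas_errors(log_text: str) -> list[str]:
    """Return the log lines that SAS flagged as errors."""
    return [line for line in log_text.splitlines() if ERROR_LINE.match(line)]


def check_log(log_path: Path) -> int:
    """Print any error lines and return 1 if there are some, otherwise 0."""
    errors = find_sas_errors(log_path.read_text(errors="replace"))
    for line in errors:
        print(line)
    return 1 if errors else 0
```

A pipeline stage could call `check_log(Path(r"C:\temp\DevOps\batch\List_Job.log"))` from a small script and exit with the returned value, so that a non-zero exit code fails the build.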

In Continuous Integration land, everything is short-lived, and bridges are usually destroyed after they are built.

A last step, after testing, might have been to delete the deployed job, the job and the logs on the Windows machine, upon successful pipeline completion. Once you have your pipeline definition and script, you can rebuild anytime.
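The file part of such a cleanup could be sketched as follows. This assumes Python on the agent and the file names used in this post (List_Job.sas, List_Job.log and SAScodeoutput.txt in the batch folder); the function name `clean_batch_folder` is hypothetical. It does not delete the job from SAS metadata, which would need a separate step.

```python
from pathlib import Path


def clean_batch_folder(batch_dir: Path, job_name: str = "List_Job") -> list[Path]:
    """Delete the deployed program, its log and the output file, if present."""
    targets = [
        batch_dir / f"{job_name}.sas",
        batch_dir / f"{job_name}.log",
        batch_dir / "SAScodeoutput.txt",
    ]
    removed = []
    for path in targets:
        # Skip files that are already gone so the step can be rerun safely.
        if path.is_file():
            path.unlink()
            removed.append(path)
    return removed
```

Calling `clean_batch_folder(Path(r"C:\temp\DevOps\batch"))` after a successful build returns the list of files it removed, which can be echoed in the build log.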

Conclusions

When you combine SAS DI with the Git plug-in, a Git repository such as GitLab, and Jenkins, Continuous Integration can be achieved.

The benefits of combining these tools:

- If your SAS job changes, you only need to push the changes to the remote repository. The automation server rebuilds with the latest changes.

- If you have a new job to integrate, you need to push the changes and add steps in the pipeline script.

- If you have a new environment and want to execute the jobs there, you just need to define a new agent in your automation server and adapt the pipeline script.

Acknowledgements

Stephen Foerster, Mark Thomas, Rob Collum.

DevOps Applied to SAS 9 Posts

- SAS DI Developers: Unite! The new GIT plug-in in Data Integration Studio

- Automate SAS 9 Code Unit Tests in a DevOps Pipeline with Jenkins and SASUnit Part 1

- Automate SAS 9 Code Unit Tests in a DevOps Pipeline with Jenkins and SASUnit Part 2

DevOps Applied to Viya 3.5 Posts

- DevOps Applied to SAS Viya 3.5: Run a SAS Program with a Jenkins Pipeline

- CAS Programs and Jenkins Pipeline Parallel Stages

- Run and Test CAS Programs with a Jenkins Pipeline

- Integrate a Data Studio Data Plan in a DevOps Pipeline

- Viya Jobs object model (sas-admin cli)

Thank you for your time reading this post. Please comment and share your experience with SAS and DevOps.
