- Home

- /

- SAS Communities Library

- /

- DataPreparation4DataScience = FeatureEngineering + DQ + DataAssembly |...

- RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

DataPreparation4DataScience = FeatureEngineering + DQ + DataAssembly | Gerhards' article collection

- Article History

- RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

A topic "Close to my heart"

Today, 25 years later my #datapreparation4datascience collection contains 18 SASCommunities articles, 12 articles at medium.com, LinkedIn and other media, 14 webinars on Youtube, 3 SAS Press Books, 4 SAS Global Forum papers, 2 ask-the-expert sessions, 2 SAS tips at support.sas.com, 3 SAS Blogs and more than 60 presentations on data science conferences.

The purpose of this article it to provide an overview over #datapreparation4datascience and a link collection for the contributions by topic.

However before I start with the overview and the list, I want say that this has not only been my own achievement. I have to thank you, the SAS User Community, for your support!

The topic of my customers

I could only develop these topic and write the SAS Press books because I was in conversation with our SAS Customers and SAS Users. The questions you asked me and the challenges you gave me, were often a trigger for new ideas, tips and tricks and solutions. Here are a few examples:

- In long nights of detecting and fixing data quality problems we found out and discussed that the statement "the data quality is in good shape" needs to be differentiated between technical data quality and data quality for analytics. The fact, that data is fine from an IT perspective because the values are between the lower and upper boundary or the categories are in a predefined list, does not mean that data can be used for machine learning models.

- In many machine learning projects we worked on how to improve the predictive power of the models. And some of the challenges you presented to me resulted in creative solutions for feature engineering and deriving customer behavior from transactional data.

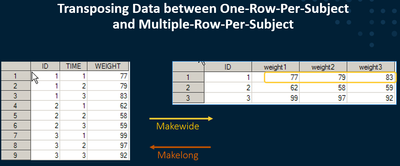

- The above mentioned %MAKEWIDE and %MAKELONG macro for transposing the data have been reworked and augmented over the years. They were a frequent, but not the only, challenge how to structure data for being used in analytical models, SAS procedures and SAS Enterprise Miner or SAS Model Studio.

- Sometimes we had (quite philosophical) discussions about the origin of data and the fact that the final representation of the data not only depends on the values of the analysis subject themselves but also from the intention of the data retrieval system and the process that collects the data. The "story of my old aunt Susanne" was one of the results of these discussion and has been presented at SAS conferences and my academic teaching now for more than 10 years.

- The discussion "what would have happened, if we had more/better data (or also less/inferior data)" in time series forecasting, was the trigger for many simulation studies, that have been performed to quantify the effect of more missing values or a longer time series.

#datapreparation4datascience - Digging one level deeper

Data Science methods have specific requirements on analysis data. Occurrences of missing values have to be investigated and handled, the distribution of the values need to be checked, and a sufficient amount of analysis objects is needed. Data Science methods also require certain data structures like the one-row-per subject structure or the timeseries dataset. In return these methods however also enhance the data preparation work and can also be used to verify and improve data quality and allow you to perform powerful feature engineering.

"Data Preparation" and "Data Quality" are therefore much more than just joining tables and checking the possible list of values. Data Preparation for Data Science is a discipline to create and quality check the analytic base tables and to derive the relevant features that shall be used in the analysis. In my publications I categorize my articles into the following three main topics:

1. Feature Engineering

Feature Engineering is an important tool for (supervised) machine learning. Model accuracy and interpretability benefits from variables that precisely describe the behavior of the analysis subjects. In many cases these features are derived from transactional data which are recorded, e.g. over time. In some cases, simple descriptive measures like the mean or the sum provide a very good picture of the analysis subjects.

Often it is important to dig deeper to adequately describe the behavior. Analytic methods help to calculate KPIs that measure the trend over time per customer, the accordance with predefined pattern or the correlation of individual customer with the average customer. For powerful analytical models it is however not enough to "replicate" the original data just in another format, e.g. by transposing. You want to describe the behavior of your analysis subjects. You can to this by calculating different analytic measures.

Feature Engineering 1 - Using Correlation Analysis to Describe Behavior over Time | +Youtube Webinar

- Feature Engineering #2 - Accordance with predefined pattern | +Youtube Webinar

- Feature Engineering #3 – Describing the Trend over Time

- SAS Sample 31582: Creating measures for the course over time

- 3 ways to consider movable holidays in SAS

- Forecasting challenges the Easter Bunny brings (LinkedIn)

- SUGI2006: Efficient “One-Row-per-Subject” Data Mart Construction for Data Mining

2. Data Quality for Analytics

Analytical methods have specific requirements on the analysis data. These data quality requirements often go beyond classic requirements of basic reporting and descriptive statistics. Missing values for example can quickly reduce the available set of records with complete data.

The data quantity aspect has influence whether statistically significant results can be generated or if certain machine learnings methods can be applied at all. The availability of data in general for the analysis decide whether you are able to perform your analysis or whether you have to reformulate your business questions or postpone the analysis at all.

Analytical methods however not only have requirements on the data. These methods can also be used to profile and improve the quality of the data.

2.1 Conceptual considerations

These articles discuss the conceptual background and the motivation of "Data Quality for Analytics".

- Is your data ready for data science? — Motivating this topic from a sail-race-analysis example medium.com + linkedin + IT-Briefcase

- You just found out that the data quality of your analysis data is poor — What are your options? (medium.com)

- The origin, detection, treatment and consequences of missing values in analytics (article at informs.com)

- “Rosetta Stone” — The most important text sample in history and the role of labeled data in machine ... (medium.com)

- Explaining Sign Inversion of Parameter Estimates in Multiple Regression Models — A Story-Telling App... (medium.com)

- "The hungry statistician" – or why we never can get enough data (linkedin)

2.2 Profiling and improving data quality

Data Science methods not only demand a certain data quality level, they also allow you to profile and improve the quality of your analysis data.

General Data Quality Profiling

- Sailing and the art of data quality assessmen (JMP-Blog by Bernd Heinen)

- Youtube: Verifying Data Quality Using Interactive Data Analysis of GPS-Data of a Sail Race

- SGF2015: Early Picture of the Data Quality Status of Your Analysis Data? SAS® Visual Analytics Shows You How

Cross Sectional Data

- Missing Values in Machine Learning: Why my old aunt Susanne gives data scientists a hard time (Youtube)

- The structure of MISSING VALUES in your data - get a clearer picture with the %MV_PROFILING macro

Time Series Data

- Using the TIMESERIES procedure to check the continuity of your timeseries data

- Replace MISSING VALUES in TIMESERIES DATA using PROC EXPAND and PROC TIMESERIES

- Youtube: Detecting and Treating Missing Values in Longitudinal data

- Have a look at your TIMESERIES data from a bird's-eye view - Profile their missing value structure + Youtube Webinar

- Youtube: Detecting Structural Changes and Outliers in Longitudinal Data

Using Individual Reference Limits for Data Quality Checks

- Youtube: Providing a reference value that considers all available co-information

- Youtube: Self Service Analytics using SAS Viya - Calculating Individual Reference Limits

- Using the GLMSELECT procedure to calculate individual reference values in outlier detection

2.3 Simulation case studies

Simulation case studies have been performed to quantify the effect of bad data quality on the performance of supervised machine learning models and on time series forecasting models.

- The effect of (bad) Data Quality on Model Accuracy in Supervised Machine Learning — Results from Simulation Studies Medium + Youtube Webinar

- Quantifying the Effect of Missing Values on Model Accuracy in Supervised Machine Learning Models Medium + Youtube Webinar

- Determining the best length of the history of your timeseries data for timeseries forecasting SAS Communities + Medium + Youtube Webinar

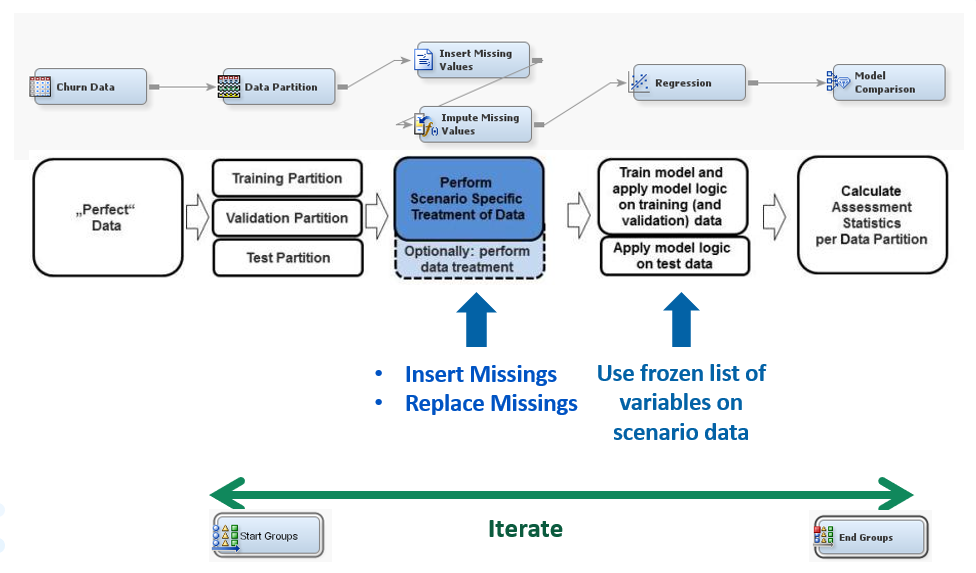

- Using SAS Enterprise Miner for Predictive Modeling Simulation Studies

3. Data Assembly

In order to be able to analyze the data with data science methods, the data needs to be structured in a appropriate form. The one-row-per-subject data structure and the longitudinal data structure are the two most prominent data structures in data science and machine learning. Data Assembly here refers to the exercise of joining tables together, aggregating data across hierarchies like time or product group, transposing data from one structure into another.

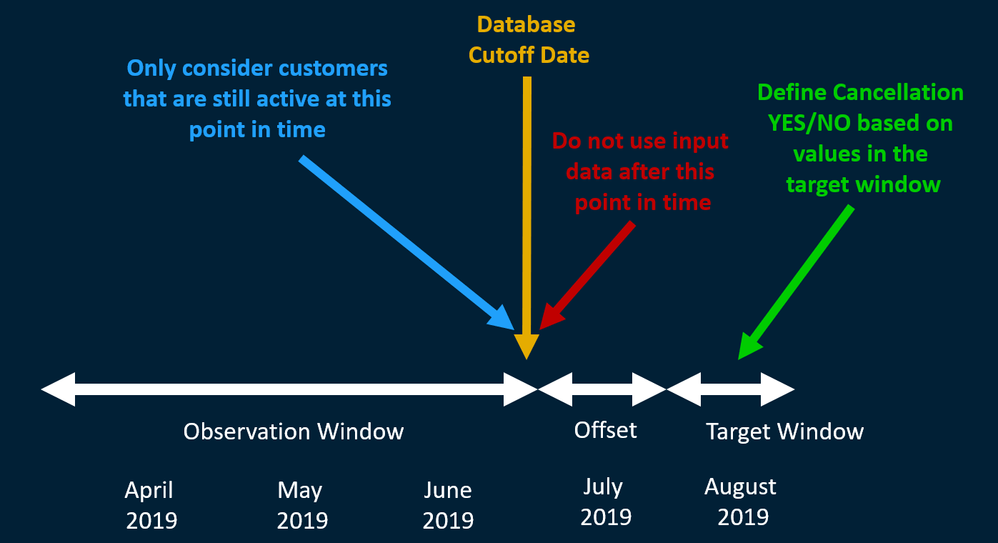

However this is not only a technical task of "programming the data management exercise". It also involves business considerations like the selection of the right data sources to answer the business question, the alignment of data at the time axis for predictive modeling, and the discussion which input variables can be used for the analysis.

- Youtube: Conceptual Considerations when Preparing Data

- Transpose your analysis data with the %MAKELONG and %MAKEWIDE macro

- SGF2018: An Easier and Faster Way to Untranspose a Wide File (co-authorship with A. Tabachneck, M. Kastin, J. Matise)

- Youtube: SAS Tips and Tricks #1: Using Data from a Lookup Table

- Encoding of CLASS Variables in Regression Analysis - Better understand the ORDINAL encoding

- SAS Sample 31584: Creating a Key-Value-Table

- SUGI2006: Efficient “One-Row-per-Subject” Data Mart Construction for Data Mining

SAS Global Forum Papers

- SUGI2006: Efficient “One-Row-per-Subject” Data Mart Construction for Data Mining

- SGF2015: Early Picture of the Data Quality Status of Your Analysis Data? SAS® Visual Analytics Shows You How

- SGF2018: Getting More Insight into Your Forecast Errors with the GLMSELECT and QUANTSELECT Procedures

- SGF2018: An Easier and Faster Way to Untranspose a Wide File (co-authorship with A. Tabachneck, M. Kastin, J. Matise)

SAS Press books

- Data Preparation for Analytics Using SAS (2007)

- Data Quality for Analytics Using SAS (2012)

- Applying Data Science - Business Case Studies Using SAS (2017)

Further References

Ask-the-expert Sessions (in German)

- Womit Sie „DATA=“ in den analytischen Procedures von SAS am besten füttern - Teil 1

- Womit Sie „DATA=“ in den analytischen Procedures von SAS am besten füttern - Teil 2

Articles

Github

SAS Communities: Article Overivew

Youtube Playlists

Gerhard Svolba, April 2022

(18°12'45" - 16°19'26"E)

Catch up on SAS Innovate 2026

Nearly 200 sessions are now available on demand with the SAS Innovate Digital Pass.

Explore Now →SAS AI and Machine Learning Courses

The rapid growth of AI technologies is driving an AI skills gap and demand for AI talent. Ready to grow your AI literacy? SAS offers free ways to get started for beginners, business leaders, and analytics professionals of all skill levels. Your future self will thank you.