- Home

- /

- Programming

- /

- SAS Procedures

- /

- MACROs, loops or arrays to automate rates calculated from multiple col...

- RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

I'm not sure what the best approach to this would be, but I have a data set with columns named 'c21-c80' that contain denominators and numerators of certain measures of quality ('efficiency','timeliness','cost', etc).

A measure could have multiple components depending on age group for example:

Efficiency (age 18-25): c22/c21

Efficiency (age 26-34): c24/c23

Efficiency (all ages): c22+c24/c21+c23

Cost (no age stratification): c26/c25

There's no pattern to which measures have multiple components and which are just one column/another column, so I was calculating measures separately by listing the exact columns. However updates to the source data might render certain columns into different measures, so it gets confusing/inefficient changing each column manually.

data msr_results;

infile DATALINES;

input company $ c21 c22 c23 c24 c25 c26;

datalines;

0001 100 89 220 180 1000 756

0002 403 322 800 650 5000 3000

0003 26 15 50 45 350 180

0004 30 11 60 57 290 90

;

I am using something like this:

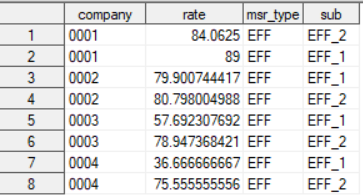

proc sql;

create table msr_results2 as

select company, (c22/c21*100) as rate, 'EFF' as msr_type, 'EFF_1' as sub from msr_results

union

select company, ((c22+c24)/(c21+c23)*100) as rate, 'EFF' as msr_type , 'EFF_2' as sub from msr_results

; quit;

This gives a basic summary data set with the rates and type of measure, and sub measure flags, grouped by company ID. I'm wondering if there was a better/more efficient way to do this, given that the measure calculations aren't simply c22/21, c24/c23, etc, etc.

I thought of using a separate data set as a 'key' like this and turning some of the values into MACRO variables and then looping over the whole data set but am not sure how to approach that some measures have multiple columns in the calculations, so I thought of setting a level and then having separate statements for each level but that could be inefficient, if there were up to 10 columns, for example.

data msr_key;

infile DATALINES;

input level msr_type $ sub $ order num_key $ num_key2 $ den_key $ den_key2 $;

datalines;

1 EFF EFF_1 1 c22 NA c21 NA

2 EFF EFF_2 1 c22 c24 c21 c23

1 COS COS_1 2 c26 NA c25 NA

;

run;

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

I've had to deal with that and chose to make my data in a long format.

I added in the denominator factor as a Parent column so I could look it up via a join

I added in a GROUP variable to group variables together.

This made it much easier to streamline my analysis and automating it going forward.

This amount of work was only worth it because this data will be used multiple times over the next 6 years.

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

It DOES make sense to convert the data into long format, I will try that.

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

c22/21, c24/c23

You stated those were some formulas

In this case for the records with C22, I have a column called ParentID which is the total, which would be C21

Ie

| MeasureID | MeasureDesc | ParentID1 | ParentID2 |

| 1 | Total Population | ||

| 2 | Population 0 to 14 | 1 | |

| 3 | Population 0 to 4 | 1 | 2 |

| 4 | Population 5 to 10 | 1 | 2 |

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

I'm having a bit of trouble comprehending how ParentID would correlate to the formulas based on values of 1 and 2.

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

It means when I'm joining I don' t have to think about which records to join with.

I can do something like (untested), which means the denominator is joined in automatically. You're moving the knowledge of the joins/lookup to a table rather than the code, which makes it infinitely easier to process, IMO.

proc sql;

create table want as

select t1.*, t2.value as denom, t1.value/t2.value as rate

from table1 as t1

left join table2 as t2

on t1.parentID=t2.ID

order by ID;

quit;This is an educated guess based on what you've shown. It could not be appropriate, but without more information this is the best answer I can give you at this point in time.

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

I'm not used to thinking in that way, so bear with me and thanks for being patient. So my original data is setup like this:

|

Entity_ID |

C50 |

C51 |

C52 |

C53 |

C54 |

C55 |

C56 |

C57 |

|

001 |

100 |

50 |

100 |

50 |

100 |

50 |

100 |

50 |

|

002 |

100 |

50 |

100 |

50 |

100 |

50 |

100 |

50 |

C50 - C57 columns are all one measure.

For ease, all the 100 values are the denominators and 50 are the numerators. There are thousands of entities who have these denominators and numerators. Basically, your suggestion is convert this table to long format right? And based on your code example, one table for the numerators and one table for the denominators with factors that link how the measure could be calculated.

So table 1 would look something like this:

|

Entity |

Column |

Value |

Measure |

Type |

Measure_Desc |

ParentID |

ParentID2 |

|

001 |

C51 |

50 |

SPC |

Num |

Males 21-75, Category 1 |

|

1 |

|

001 |

C53 |

50 |

SPC |

Num |

Males 21-75, Category 2 |

|

2 |

|

001 |

C55 |

50 |

SPC |

Num |

Females 40-75, Category 1 |

|

1 |

|

001 |

C57 |

50 |

SPC |

Num |

Females 40-75, Category 2 |

|

2 |

Table 2 would look something like this:

|

Entity |

Column |

Value |

Measure |

Type |

Measure_Desc |

ID |

ID2 |

|

001 |

C50 |

100 |

SPC |

Den |

Males 21-75, Category 1 |

|

1 |

|

001 |

C52 |

100 |

SPC |

Den |

Males 21-75, Category 2 |

|

2 |

|

001 |

C54 |

100 |

SPC |

Den |

Females 40-75, Category 1 |

|

1 |

|

001 |

C56 |

100 |

SPC |

Den |

Females 40-75, Category 2 |

|

2 |

So the blank ParentIDs/IDs would correlate to a separate rate for each sub-measure, and the corresponding factors would correlate to a combined category 1 rate and combined category 2 rate (to calculate that, I would sum the denominators and numerators and group by ParentID/ID).

Given that the original data is entirely lacking in any identifiers and I have no reference table, in your experience, did you end up converting the data to long format and then manually adding in the reference description and factors?

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Yes, you convert your table to a long format.

Given that the original data is entirely lacking in any identifiers and I have no reference table, in your experience, did you end up converting the data to long format and then manually adding in the reference description and factors?

You have to add that logic in somewhere. In your current approach you're basically manually coding all the relationships. And if you make a mistake in one place it's hard to find and debug. And modifying is a nightmare, especially 6 months later. This approach moves the logic to a table, albeit one you manually create initially, but if the logic is only in your head, then that's a problem as well.

I don't think your table 1 and table 2 are correct. It should be two tables

Table 1:

Measure ID - C50

Description -

Measure Denominator

Table 2:

Measure ID

Measure Value

You link the two based on the relationships in table 1.

TBH I haven't done this with the multiple column concept, ie C50+C51/ T50+ T51 but usually those totals are also in the data in another form and that's the approach I'd likely take. Ie C50+C51 is usually equal to C49 or C52 for example

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

And definitely, I had made a mistake manually adding up the columns so I wanted to find out a way to do this without having to edit the columns whenever the original columns change out of my control.

Catch up on SAS Innovate 2026

Nearly 200 sessions are now available on demand in the Innovate Hub.

Watch Now →Learn the difference between classical and Bayesian statistical approaches and see a few PROC examples to perform Bayesian analysis in this video.

Find more tutorials on the SAS Users YouTube channel.

SAS Training: Just a Click Away

Ready to level-up your skills? Choose your own adventure.