Mixture experiments are certainly unique. The constraint that the sum of the ingredients must add to 1 causes multicollinearity that will always be present. That multicollinearity will necessarily lower the power of any experimental design. My

previous post showed how to create a mixture design but did not discuss the power. This post will explain how to determine the power of a mixture experiment as well as why the power values may be extremely low.

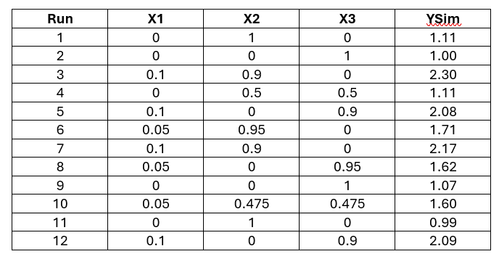

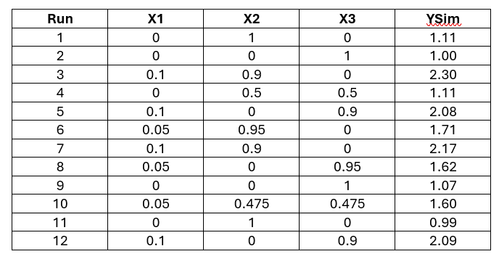

Let’s start by looking at an example that we can use to understand power for a mixture design. For this example we have three components (X1, X2, and X3) with their associated ranges: X1: 0 – 0.10, X2: 0 – 1, X3: 0 – 1. Using the approach outlined in a

previous post, an experiment can be created that might result in a 12-run design (in sorted order):

Select any image to see a larger version.

Mobile users: To view the images, select the "Full" version at the bottom of the page.

The twelve points cover the design space quite well and contains some replicated points.

Although the design looks good, there is no indication of power. To calculate power, an estimate of the error is needed. One way to obtain this estimate is to use subject matter knowledge of what the expected responses might be for each design point. With the expected responses, a preliminary analysis can be completed to obtain the error estimate. Since this is a hypothetical example, I will use a formula to generate the expected responses.

YSim = 6*X1 + X2 + X3 + 8*X1*X2 + 8*X1*X3 + Error

This model is a quadratic model as indicated by the cross-product terms. This fact will be important a bit later. The error term is a bit of random error from a normal distribution. Applying the formula yields these realized values:

Using these values, we can fit a model to estimate the error. Recall in an earlier post that we can use a noint option to fit the Scheffe mixture model.

data dsgn;

input run x1 x2 x3 ysim;

datalines;

1 0.0 1.0 0.0 1.16

2 0.0 0.0 1.0 1.08

3 0.1 0.9 0.0 2.30

4 0.0 0.5 0.5 1.06

5 0.1 0.0 0.9 2.13

6 0.05 0.95 0.0 1.75

7 0.1 0.9 0.0 2.17

8 0.05 0.0 0.95 1.69

9 0.0 0.0 1.0 0.95

10 0.05 0.475 0.475 1.63

11 0.0 1.0 0.0 1.08

12 0.1 0.0 0.9 2.21

;

/* Fitting Mixture Model to Assess Std. Dev. */

title "Regression Analysis to Assess Error";

proc glm data=dsgn;

model ysim = x1 x2 x3 x1*x2 x1*x3 x2*x3/noint;

run;

The results of the analysis show an error standard deviation (labeled as Root MSE) of 0.066187.

Now we can turn our attention to the power of this design. The PROC GLMPOWER procedure will provide the power of the design.

/* Full Mixture Model */

title "Full Mixture Model";

proc glmpower data=dsgn;

model ysim=x1 x2 x3 x1*x2 x1*x3 x2*x3;

power

alpha = 0.05

ntotal = 12

stddev=0.066

power = .;

run;

PROC GLMPOWER requires the model statement where we get to specify our full model. We need to add the POWER statement with options (for this example) for alpha, number of observations, standard deviation, and power. I specified an alpha of 0.05 for the typical 95% confidence level. The design had 12 runs, so ntotal is 12. The standard deviation is 0.066 as we noted earlier, so that is the value that is entered on the STDDEV option. Finally, the power is set equal to a missing value which tells the procedure to solve for power. Unfortunately PROC GLMPOWER does not allow a noint option to truly fit the Scheffe model. This will become apparent on the results.

Because of the inherent singularity in the design matrix, the power for the main effects, X1, X2, and X3, cannot be calculated. The power for all the quadratic/cross-product terms is fairly low. We will explore why this is a bit later. Rather, I would like to get a power estimate for the main effects. This is where the prior post on analyzing a mixture experiment comes into play again. We can estimate a Scheffe mixture model by removing one of the main effects. I will start by removing the X3 term. All other aspects of the PROC GLMPOWER procedure will remain the same.

/* Mixture Model with X3 removed */

title "Mixture Model with X3 Effect Removed";

proc glmpower data=dsgn;

model ysim = x1 x2 x1*x2 x1*x3 x2*x3;

power

alpha = 0.05

ntotal=12

stddev=0.066

power=.;

run;

The results will now display the power for the X1 and X2 main effects along with the power estimates of the cross-product terms as before.

We can see that the power for X1 is very low, only 0.095. Why is this so low? The power for X2 is 0.27, which is quite a bit better, but still very low. And how can we determine the power for the X3 term?

The X3 power can be determined by repeating the same approach, but removing the X2 term (or X1) and putting X3 back into the model.

/* Mixture Model with X2 removed */

title "Mixture Model with X2 Effect Removed";

proc glmpower data=dsgn;

model ysim = x1 x3 x1*x2 x1*x3 x2*x3;

power

alpha = 0.05

ntotal=12

stddev=0.066

power=.;

run;

The results are the same as before, but with the X3 power displayed, which is 0.27, instead of the power for X2.

Now we can finally turn our attention to why the power is so low.Since we know what this model is, we can explore the low power. Certainly some of the low power is due to the multicollinearity found in the data, but there is more. The narrow range for the X1 component also causes some difficulties. Look at the plot of the YSim response and X1 with an overlaid linear regression line and the actual quadratic relationship that was used to generate the response.

The curves are certainly very different. However, X1 only ranges from 0 to 0.1. If we zoom in on just that portion of the X1 range we see a very different picture.

Over the range where the data will be collected the difference between the linear fit and the quadratic fit is almost indistinguishable. The model is not really able to distinguish between the line and a quadratic fit due to the very narrow range. For this reason it is important to have the ranges for your components be as wide as possible in order to magnify the differences between the different possible models.

Find more articles from SAS Global Enablement and Learning here.