What's different in a SAS Viya Deployment on Google Kubernetes Engine? Part 2: Deployment

Since the new SAS Viya LTS version (2021.1), two new Cloud platforms are supported for SAS Viya deployments: Google and Amazon (in addition to Azure).

In this series, I am sharing some of our findings from deploying SAS Viya on the Google Kubernetes Engine (GKE) and highlighting some of the specifics of the Google Cloud Platform (GCP).

Those specifics can affect either provisioning GKE or the SAS Viya deployment itself. We talked about the infrastructure and provisioning aspects in the first part.

In this second part of the article, we will look at what makes a deployment of SAS Viya on GCP "special" and different from Azure, which was the first supported Cloud provider for SAS Viya.

How do I deploy SAS Viya 4 in GCP?

That is probably the first question you’ll ask yourself.

Well, just like with any supported Kubernetes platform, you still have plenty of choices regarding the deployment method: manual, with the deployment operator, or with the "sassoftware/viya4-deployment" GitHub project.

See this previous article for an overview of the methods and refer to the official documentation for additional guidance.

But no matter which method you choose, there are a number of specific points about deploying SAS Viya on GKE that you should be aware of 😊

GCP Ingress quota issue

The issue

In GCP, some quotas (or limits) are automatically created and enforced on the GKE resources.

You might not be aware of that, but if you experience a deployment failure with an error message like the following, then you are hitting the "GKE ingress quota" issue:

"ingresses.extensions \"sas-cas-server-default\" is forbidden: exceeded quota: gke-resource-quotas, requested: count/ingresses.extensions=1, used: count/ingresses.extensions=100, limited: count/

For example, this is the error you will see if you use the manual deployment method and run the "kubectl apply" command to apply your SAS Viya manifest (site.yaml).

The error message is clear 😊: you cannot create more Ingress objects in your GKE cluster than the configured limit of 100, which is obviously an issue when your SAS Viya deployment needs to create 150 or more Ingress objects.

According to Google : “The gke-resource-quotas protects the control plane from being accidentally overloaded by the applications deployed in the cluster that creates an excessive amount of kubernetes resources.”

You can also check the resource quotas with a simple command as shown below.

    $ kubectl describe quota gke-resource-quotas -n gcptest
    Name:       gke-resource-quotas
    Namespace:  gcptest
    Resource                           Used  Hard
    --------                           ----  ----
    count/ingresses.extensions         101   100
    count/ingresses.networking.k8s.io  101   100
    count/jobs.batch                   1     5k
    pods                               111   1500
    services                           123   500
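To anticipate this failure before running "kubectl apply", one option is to compare the number of Ingress objects declared in the manifest with the hard limit reported by the quota. The sketch below is only an illustration: the helper names are hypothetical, it assumes that each object in site.yaml has a top-level `kind: Ingress` line, and it assumes that the quota output has the same column layout as shown above.

```shell
#!/bin/sh
# Hypothetical helpers, not part of any SAS or Google tooling.

# Count the Ingress objects declared in a manifest such as site.yaml.
# Assumes each object has a top-level "kind: Ingress" line.
count_ingresses() {
    grep -c '^kind: Ingress[[:space:]]*$' "$1"
}

# Read "kubectl describe quota" output on stdin and print the hard limit
# for networking.k8s.io Ingresses, expanding a "k" suffix (5k -> 5000).
ingress_hard_limit() {
    awk '$1 == "count/ingresses.networking.k8s.io" {
        limit = $3
        if (limit ~ /k$/) { sub(/k$/, "", limit); limit = limit * 1000 }
        print limit
    }'
}

# Possible usage (namespace and file name are examples):
#   needed=$(count_ingresses site.yaml)
#   limit=$(kubectl describe quota gke-resource-quotas -n gcptest | ingress_hard_limit)
#   [ "$needed" -le "$limit" ] || echo "Manifest needs $needed Ingresses, quota allows $limit"
```

Ingress objects that already exist in the namespace also count against the quota, so this comparison is only a rough first check.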

You might think: “OK, that’s easy to fix, let’s just bump up the quota value and we’ll be good to go!”

Well... the challenge is that it is not that easy.

The Workaround

As explained in the very short section dedicated to this topic in the official Google documentation, these quotas are “automatically applied to clusters with 100 nodes or fewer” and “cannot be removed”. Well actually, these quotas cannot be changed at all: they are immutable!

So the only way to influence the Ingress quota, and to make sure that SAS Viya deployments requiring more than 100 Ingress definitions succeed, is to understand how Google allocates it.

From the very light documentation publicly available on this topic, all we know is that the quotas apply to clusters with 100 nodes or fewer. But is the Ingress limit always 100, or does it change? What drives its value?

The only way to find out was to test various combinations. What we noticed is that the quota depends on the number of nodes in the cluster and can be automatically increased as autoscaling brings more nodes online.

Yet, when starting with a small number of nodes (counting on the autoscaler to add nodes later when needed), the limit of 100 Ingresses is active initially and causes any SAS Viya deployment with more than 100 Ingresses to fail.

We’ve seen in our various tests that when provisioning 6 nodes, the ingress quota value was set to 5000 (which allows us to deploy). Adding nodes dynamically triggers the increase of the quota values. However, a colleague recently had a situation where 6 nodes did not trigger the quota value to increase from 100 to 5k. So it is possible that the VM size also plays a role...

Unfortunately, the algorithm that GCP uses to set the ingress quota is not publicly available. SAS R&D has requested additional details, and as of today it has only been confirmed that the "ingresses quotas values limits are calculated based on the cluster size and other factors.".

The workaround (still a work in progress in the viya4-iac-gcp GitHub project) will set the initial_node_counts of the node pools so that they add up to at least 6. The user's min/max values would remain in place, so the autoscaler would remove those initial nodes after a while if they remain unused.

That should alleviate the situation in most cases, at least in combination with the node_pool values in our example tfvars files. However, it might not solve every situation, since we still don't know how Google's algorithm determines these quotas.
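In the meantime, a simple pre-flight check can at least warn when the cluster starts with fewer nodes than the 6 that raised the quota in the tests described above. This is a sketch only: the function names are hypothetical, and the default threshold of 6 is an observation from those tests, not a documented GCP rule.

```shell
#!/bin/sh
# Hypothetical pre-flight check; the default threshold of 6 nodes comes from
# the observations above, not from any documented GCP rule.

# Print the number of nodes currently registered in the cluster.
node_count() {
    kubectl get nodes --no-headers | wc -l | tr -d ' '
}

# Succeed if the cluster has at least $1 nodes (default 6), warn otherwise.
check_min_nodes() {
    min=${1:-6}
    have=$(node_count)
    if [ "$have" -lt "$min" ]; then
        echo "WARNING: $have node(s) found, expected at least $min" >&2
        return 1
    fi
}

# Possible usage before applying the manifest:
#   check_min_nodes 6 && kubectl describe quota gke-resource-quotas -n gcptest
```

Since the node count alone did not always raise the quota, checking the quota value itself remains the more reliable test.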

Cloud SQL proxy (for external PostgreSQL)

What is it?

As shown in the GCP architecture diagram from the official SAS documentation, Google Cloud SQL for PostgreSQL (cloud-managed PostgreSQL instances) can be a key part of your SAS Viya environment when you choose it as the external PostgreSQL instance for your SAS Infrastructure Data Server.

There are two ways to enable connections from GKE to Google Cloud SQL Postgres: either using Cloud SQL Proxy, or a private IP address.

As explained in Google’s official documentation: “The Cloud SQL Auth proxy is the recommended way to connect to Cloud SQL, even when using private IP. This is because the Cloud SQL Auth proxy provides strong encryption and authentication using IAM, which can help keep your database secure.”

SAS also requires that you access your Cloud SQL for PostgreSQL server via the Cloud SQL Proxy.

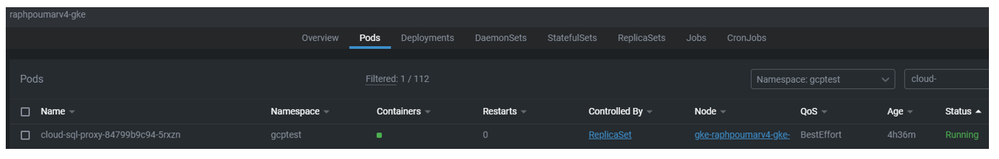

This proxy is implemented as a “cloud-sql-proxy” pod running in the SAS Viya GKE cluster.

Here is an extract from the “Configure PostgreSQL” SAS Viya README file*:

"All SAS Viya database communication should be directed to a Cloud SQL Proxy client that is deployed in the same namespace that hosts SAS Viya. This ensures that data is encrypted prior to traveling outside the virtual network in the namespace."

(*) Tips and tricks: The README files are provided in the deployment assets, but don’t forget that you can also read your order’s README files directly from the my.sas.com portal.

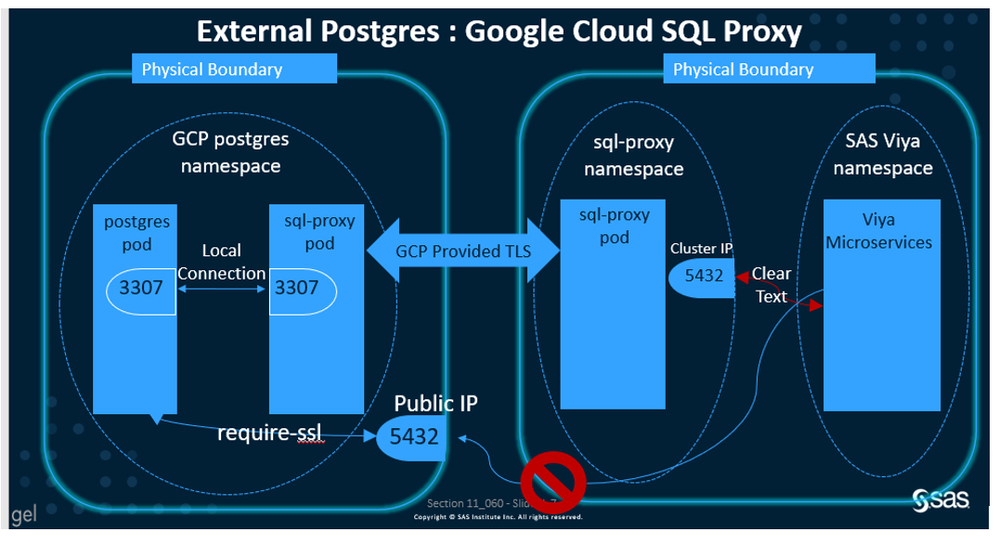

The topology diagram below (that was taken from a JIRA) shows how the connection is done from the SAS Viya Environment to the external PostgreSQL instance when using the cloud-sql-proxy.

Impact on the SAS Viya deployment

In terms of deployment, there are 3 points to look at:

- Installation of the cloud-sql-proxy application in your GKE cluster

If you are using the viya4-deployment project, the ansible playbook can do it automatically for you (if you set the V4_CFG_POSTGRES_SERVERS.Internal value to "false" and provide a value for V4_CFG_CLOUD_SERVICE_ACCOUNT_NAME) – see this page for additional details.

Once deployed, it looks like this in Lens:

If you don’t have Lens you can run the kubectl command below to get the same information.

    $ kubectl get svc -n gcptest | grep cloud-sql-proxy
    cloud-sql-proxy   ClusterIP   10.1.0.211   <none>   5432/TCP   36m

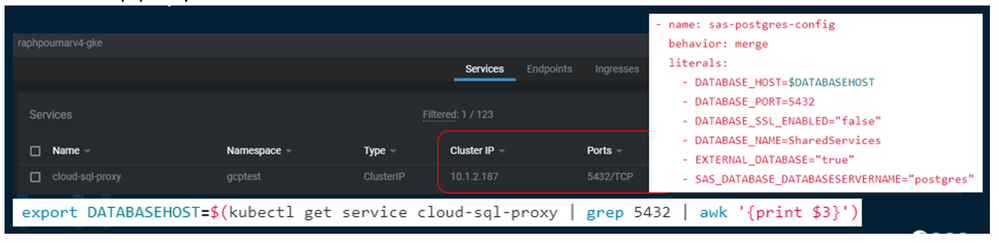

- Kustomize configuration with the cloud-sql-proxy Cluster IP

When using an external PostgreSQL instance, you must set the external database server host and port in the kustomization.yaml file.

While you would generally use the external database server instance physical or virtual IP address, with the Cloud SQL proxy it is different. You need to use the service name (or associated cluster IP address) and port number of the Cloud SQL Proxy in a form that makes them reachable from within the SAS Viya namespace.

As illustrated below, you can get the information by running a kubectl command to inspect the cloud-sql-proxy service.
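As a sketch of that lookup, the helpers below print the values needed for the kustomization.yaml file. The function names are hypothetical, the service is assumed to be called cloud-sql-proxy as in the earlier output, and the DNS form assumes the default "cluster.local" cluster domain.

```shell
#!/bin/sh
# Hypothetical helpers for finding the Cloud SQL Proxy endpoint.

# Print the in-cluster DNS name of a service:
#   <service>.<namespace>.svc.cluster.local
# Assumes the default "cluster.local" cluster domain.
service_dns_name() {
    printf '%s.%s.svc.cluster.local\n' "$1" "$2"
}

# Print "<clusterIP>:<port>" for a service, as reported by the API server.
service_endpoint() {
    kubectl get svc "$1" -n "$2" \
        -o jsonpath='{.spec.clusterIP}:{.spec.ports[0].port}'
}

# Possible usage:
#   service_dns_name cloud-sql-proxy gcptest
#   service_endpoint cloud-sql-proxy gcptest
```

The service name is usually the safer choice for the database host, because the cluster IP changes if the service is deleted and re-created.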

- Full TLS support

As explained in the SAS Viya prerequisites documentation: “Additional steps are required in order to support full TLS. For more information, see the README file titled "Configure an External PostgreSQL Instance for SAS Viya" at $deploy/sas-bases/overlays/configure-postgres/README.md.”

Basically, you just need to reference the PatchTransformer described in that README in the transformers block of your base kustomization.yaml. It ensures that the sas-postgres-config ConfigMap has the right value.

It must be added after all the security transformers.

Working with the Google Container Registry (GCR)

Basics

Using a private container registry to store the SAS Viya images for your deployment is a recommended practice. It allows strict control of the image versions and can improve image download times, since the images, which are downloaded very often onto the Kubernetes nodes, are stored physically closer to them.

So, if you are deploying SAS Viya in GCP, a good idea would be to use the Google Container Registry service to build a mirror of your image registry.

In GCP, the Container Registry service (GCR) is included by default: it is already there for any given project. Just go to https://gcr.io/<your project> to see the available repositories.
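The same information is also available from the command line. The sketch below assumes that the gcloud CLI is installed and authenticated; the helper names are hypothetical and the project ID is a placeholder.

```shell
#!/bin/sh
# Hypothetical helpers; the project ID passed in is a placeholder.

# Print the registry prefix for a project: gcr.io/<project>
gcr_prefix() {
    printf 'gcr.io/%s\n' "$1"
}

# List the image repositories stored under that prefix.
list_gcr_repositories() {
    gcloud container images list --repository="$(gcr_prefix "$1")"
}

# Possible usage:
#   list_gcr_repositories my-gcp-project
```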

Impact on the deployment

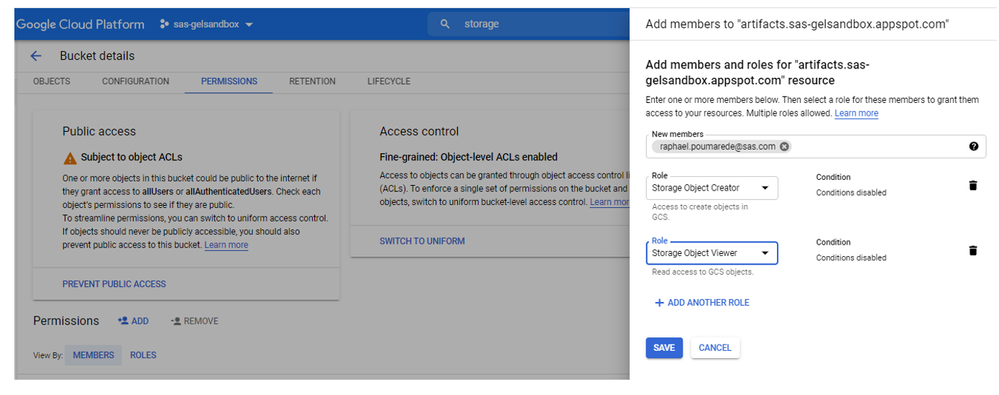

Once you have determined the GCR URL, you can use the mirror manager to pull the SAS Viya images from cr.sas.com (the SAS public registry) and then push them into your GCP project’s registry. The official SAS documentation provides guidance on how to use the mirror manager with the Google Container Registry.

To allow your account to push images, go to "Cloud Storage", select the artifacts.<YOUR PROJECT>.appspot.com bucket and, in its permissions, add your GCP account with the required roles ("Storage Object Viewer" and "Storage Object Creator"), as illustrated in the screenshot below.

After that, you should be able to use the SAS Viya mirror manager with the configured account to push the images into the Google Container Registry.

Finally, for the deployment, you have to follow the “common customization” instructions from the official SAS documentation to add the required transformer (a PatchTransformer example is provided in the mirror.yaml file from sas-bases/examples/mirror) and reference it in your kustomization.yaml.

An "ImagePullSecret" is also required to pull images from a Private Registry, so you will need one for GCR.
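As a generic illustration, the sketch below creates a docker-registry secret for gcr.io from a service account JSON key. It is not the procedure from the SAS documentation: the function name and its arguments are hypothetical, and how the secret is then wired into the SAS Viya manifest is covered by the SAS documentation and is not shown here.

```shell
#!/bin/sh
# Hypothetical helper: create a docker-registry secret for gcr.io from a
# service account JSON key. "_json_key" is the user name that Google documents
# for key-file authentication against Container Registry.
create_gcr_pull_secret() {
    secret_name=$1   # illustrative name, e.g. gcr-pull-secret
    namespace=$2     # namespace that hosts SAS Viya
    key_file=$3      # path to the service account JSON key
    kubectl create secret docker-registry "$secret_name" \
        --namespace "$namespace" \
        --docker-server=gcr.io \
        --docker-username=_json_key \
        --docker-password="$(cat "$key_file")"
}

# Possible usage:
#   create_gcr_pull_secret gcr-pull-secret gcptest ./sa-key.json
```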

You will then be in a position to build a Kubernetes manifest that references the images from your Google Container Registry, so the GKE nodes can pull the images from GCR when the site.yaml manifest is applied.

The trick to make it work

The reason why we store the images in GCR is that we want to be able to pull our SAS Viya images on our GKE node pools.

But to allow that, specific permissions are required for the service account associated with the GKE node pools.

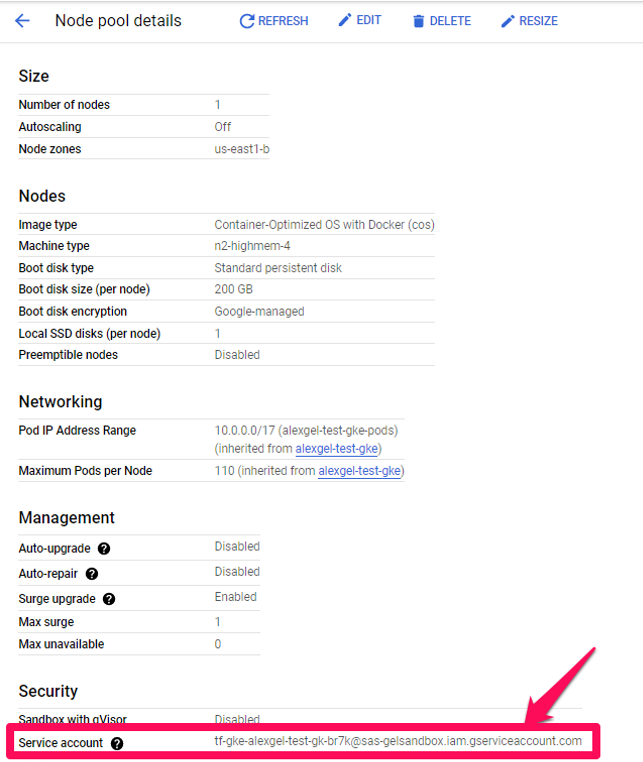

First, you have to identify which service account is associated with each node pool (you can see that in the GCP web console, as shown below).

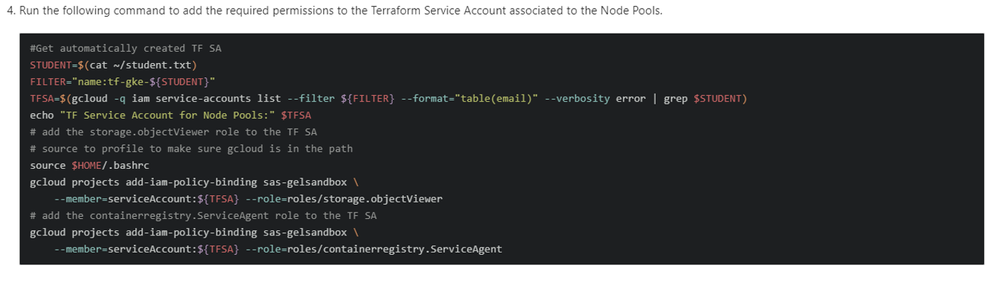

Then you have to grant the required permissions to the service account.

Here is an example of how to add the required permissions with the GCP CLI (gcloud):
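A minimal sketch of what such commands could look like, assuming the node pools only need read access to the registry's storage. The function names are hypothetical, every project, cluster, pool, and zone value is a placeholder, and the role shown (roles/storage.objectViewer) is an assumption based on the "Storage Object Viewer" role mentioned earlier, so it may not match the exact permissions your environment needs.

```shell
#!/bin/sh
# Hypothetical helpers; all names and values passed in are placeholders.

# Print the service account attached to a node pool.
node_pool_service_account() {
    pool=$1; cluster=$2; zone=$3
    gcloud container node-pools describe "$pool" \
        --cluster "$cluster" --zone "$zone" \
        --format='value(config.serviceAccount)'
}

# Grant read access on the project's storage objects to a service account,
# which is what pulling images from gcr.io relies on.
grant_gcr_read_access() {
    project=$1; service_account=$2
    gcloud projects add-iam-policy-binding "$project" \
        --member="serviceAccount:$service_account" \
        --role="roles/storage.objectViewer"
}

# Possible usage:
#   sa=$(node_pool_service_account default my-cluster us-east1-b)
#   grant_gcr_read_access my-gcp-project "$sa"
```

A project-level binding is the simplest form but also the broadest. Granting the role only on the artifacts.<YOUR PROJECT>.appspot.com bucket would be a narrower alternative.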

Be aware that these permissions may vary. Google now also recommends using Artifact Registry instead of Container Registry to manage container images in GCP (but we haven’t tested it yet).

Finally, work is also in progress in the viya4-iac-gcp project (see the recent Pull Request and current workaround) to automatically enable the required permissions and privileges just by setting a variable in the IaC Terraform variable file.

Conclusion

As we’ve seen in this article, deploying SAS Viya 4 on the Google Cloud Platform can present some challenges that are specific to this Cloud provider.

Most SAS consultants are not expert level on GCP or GKE, but having this kind of knowledge will help consultants involved in architecting, deployment and administration of SAS Viya on GKE. It can help them avoid potential pitfalls and will be useful when planning for or deploying SAS Viya 4 in GKE.

Hopefully having read the 2 articles (part 1 and part 2) and trying the VLE hands-on will help you to get ready for your next "Viya in Google Cloud" customer project.

Thanks for reading!

Find more articles from SAS Global Enablement and Learning here.
