Using NFS Premium shares in Azure Files for SAS Viya on Kubernetes

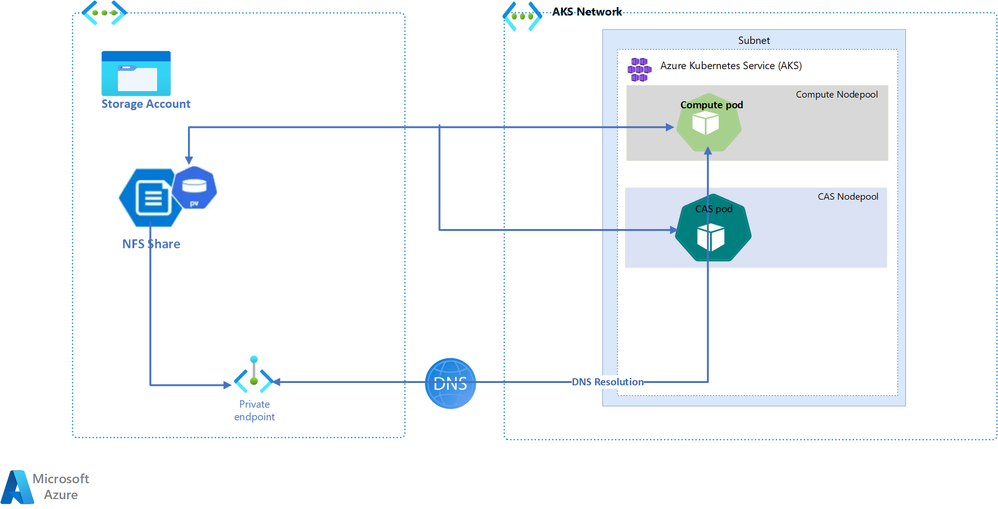

Azure Files is a distributed file service that supports the Server Message Block (SMB), REST and NFS protocols. A POSIX-compliant file system is a system requirement for deploying SAS Viya on the Azure Kubernetes Service (AKS), and it is also a common choice for customers who need to store business data in SAS datasets. Microsoft Azure offers fully POSIX-compliant, NFS-based storage with Azure Files Premium and Azure NetApp Files. In November 2021, Microsoft announced the general availability of NFS 4.1 support in Azure Files. NFS shares can be mounted on Azure virtual machines, virtual machine scale sets and containers running in AKS. The figure below shows an overview of how Azure Files is used by SAS Viya 4 running on AKS:

This article describes the options for getting optimal performance while using NFS shares in the Azure Files service with SAS Viya.

Introduction

All data stored in Azure Files is encrypted at rest using Azure storage service encryption (SSE), which means that the data is transparently encrypted and decrypted “below” the application level. For encryption in transit, Azure uses MACsec to encrypt all data that is transferred between Azure datacenters.

File shares using the NFS protocol don't offer user-based authentication. Instead, access to an NFS share is controlled by the configured network security rules. To ensure that only secure connections to the NFS share are established, we must therefore set up either a private endpoint or a service endpoint to link the storage account to the AKS cluster.

Azure NFS file shares are only offered on premium file shares, which store data on solid-state drives (SSD). The IOPS and throughput of NFS shares scale with the provisioned capacity. The table below summarizes the throughput of Azure Files Premium shares and compares it to Azure NetApp Files shares, another popular storage choice on the Azure cloud.

| Sl. No | Capacity (GB) | Throughput (MiB/s): Azure Files Premium | Throughput (MiB/s): Netapp Files - | Throughput (MiB/s): Netapp Files - | Throughput (MiB/s): Netapp Files - |
|---|---|---|---|---|---|
| 1 | 500 | 150 | 8 | 32 | 64 |
| 2 | 1000 | 200 | 16 | 64 | 128 |
| 3 | 1500 | 250 | 24 | 96 | 192 |
| 4 | 2000 | 300 | 32 | 128 | 256 |
| 5 | 4000 | 500 | 64 | 256 | 512 |

(see: https://learn.microsoft.com/en-us/azure/storage/files/understand-performance)

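As shown in the above table, the throughput of the file shares increases linearly with the size of the share. For shares smaller than about 4 TB, Azure Files Premium delivers a higher throughput than any of the NetApp shares; at 4000 GB the fastest NetApp column (512 MiB/s) is slightly ahead of Azure Files Premium (500 MiB/s).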
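The linear scaling can be made concrete with a small sketch. The formulas below are simply fitted to the rows of the table above and are an assumption on our part, not an official sizing formula; the function names are made up for illustration.

```shell
#!/bin/sh
# Throughput models fitted to the rows of the table above (an assumption,
# not an official sizing formula). Input: provisioned capacity in GB.

# Azure Files Premium column: 150 MiB/s at 500 GB, +50 MiB/s per extra 500 GB.
azure_files_mibs() {
  echo $(( 100 + $1 / 10 ))
}

# NetApp columns: throughput proportional to capacity.
# $1 = capacity in GB, $2 = MiB/s per 1000 GB (16, 64 or 128 in the table).
netapp_mibs() {
  echo $(( $1 * $2 / 1000 ))
}

for gb in 500 1000 1500 2000 4000; do
  echo "$gb GB: Azure Files $(azure_files_mibs "$gb"), NetApp $(netapp_mibs "$gb" 16)/$(netapp_mibs "$gb" 64)/$(netapp_mibs "$gb" 128) MiB/s"
done
```

Under this fit, the fastest NetApp column catches up with Azure Files Premium at roughly 3570 GB (where 100 + 0.1 x equals 0.128 x), which is consistent with the 4000 GB row.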

Please note: The effective file share performance is subject to machine network limits, available network bandwidth, IO sizes, and parallelism, among many other factors.

A quick check of the price lists will show you that the cloud consumption costs for the Azure Files service are significantly lower than the costs for NetApp storage. So why think twice? Click-click and you’re done, right? Well, yes, but read on …

Performance caveats

It is very important to tune the NFS mount options for optimum performance with SAS Viya compute and CAS workloads. One of the major parameters for NFS performance is the “read-ahead” kernel setting which predictively requests blocks from a file in advance of I/O requests by the application. It is designed to improve client sequential read throughput.

Unlike the mount options, which we will also discuss further down, the “read-ahead” parameter has to be increased at the OS level and not at the file share level, which makes configuring this setting a bit more complicated in the Kubernetes world. The default value of “read-ahead” is 128 KiB. Microsoft recommends a 1:15 ratio between the mount option “rsize” and the “read-ahead” value. In our testing, we used an “rsize” value of 1024 KiB and therefore set the read-ahead parameter to 15 MiB. As you can see in the table below, which summarizes the performance improvement for SAS procedures reading from an NFS-backed SAS library, the extra effort is well worth the time invested.

| Sl. No | Storage Type | Share Size | Table Size (GB) | Data Step (mm:ss) | Proc Freq (mm:ss) | Proc Summary (mm:ss) |
|---|---|---|---|---|---|---|
| 1 | Azure Files NFS | 4TB | 60 | 18:32 | 16:24 | 16:54 |
| 2 | Azure Files NFS | 4TB | 125 | 29:17 | 27:50 | 27:27 |
| 3 | Azure Files NFS | 4TB | 235 | 62:00 | 57:28 | 57:35 |
| 4 | Azure Files NFS | 4TB | 350 | 93:00 | 87:09 | 86:31 |
| 5 | Azure Files NFS | 4TB | 60 | 03:57 | 03:55 | 03:55 |
| 6 | Azure Files NFS | 4TB | 125 | 07:49 | 05:54 | 03:56 |
| 7 | Azure Files NFS | 4TB | 235 | 18:49 | 08:02 | 07:50 |
| 8 | Azure Files NFS | 4TB | 350 | 23:33 | 11:48 | 11:47 |

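In our tests, we used a 4 TB Premium NFS file share and measured the performance of reading and writing SAS datasets (sas7bdat) of various sizes. We compared the results with the default and with the increased “read-ahead” setting on the worker nodes: rows 1 to 4 show the runs with the default value, rows 5 to 8 the runs with the increased value.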
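As a small worked example of the 1:15 ratio mentioned earlier, the read-ahead value in KiB can be derived from the rsize mount option like this (the function name is ours and purely illustrative):

```shell
#!/bin/sh
# read_ahead_kb for a given rsize, using the 1:15 ratio quoted in the text.
# $1 = rsize in bytes (the value used in the NFS mount option).
read_ahead_kb_for_rsize() {
  echo $(( $1 / 1024 * 15 ))
}

read_ahead_kb_for_rsize 1048576   # rsize of 1 MiB, prints 15360
```

An rsize of 1 MiB therefore corresponds to a read-ahead of 15360 KiB, i.e. exactly 15 MiB.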

Additionally, the below table summarizes the performance enhancements for load time of various SAS datasets to CAS memory:

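| Sl. No | Storage Type | Share Size | Table Size (GB) | CAS Load Time (mm:ss) |
|---|---|---|---|---|
| 1 | Azure Files NFS | 4TB | 60 | 19:11 |
| 2 | Azure Files NFS | 4TB | 125 | 38:49 |
| 3 | Azure Files NFS | 4TB | 235 | 85:05 |
| 4 | Azure Files NFS | 4TB | 350 | 129:09 |
| 5 | Azure Files NFS | 4TB | 60 | 03:54 |
| 6 | Azure Files NFS | 4TB | 125 | 08:24 |
| 7 | Azure Files NFS | 4TB | 235 | 16:48 |
| 8 | Azure Files NFS | 4TB | 350 | 25:42 |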
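To put a number on the improvement, the load times can be converted into speed-up factors. This sketch assumes that the cells are in mm:ss format and that rows 1 to 4 are the runs with the default read-ahead while rows 5 to 8 are the runs with the increased value; the helper names are hypothetical.

```shell
#!/bin/sh
# Convert a mm:ss duration (assumed format of the table cells) to seconds.
to_seconds() {
  echo "$1" | awk -F: '{ print $1 * 60 + $2 }'
}

# Speed-up factor between two mm:ss durations, rounded to one decimal place.
speedup() {
  awk -v slow="$(to_seconds "$1")" -v fast="$(to_seconds "$2")" \
    'BEGIN { printf "%.1f\n", slow / fast }'
}

# CAS load times from the table: rows 1-4 versus rows 5-8.
speedup 19:11 3:54      # 60 GB  -> 4.9
speedup 38:49 8:24      # 125 GB -> 4.6
speedup 85:05 16:48     # 235 GB -> 5.1
speedup 129:09 25:42    # 350 GB -> 5.0
```

Under these assumptions, loading to CAS is roughly five times faster across all table sizes.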

Configuring the read-ahead parameter for NFS shares

Obviously, you’ll need to start with creating the storage account (make sure to select the Premium performance tier) and add a file share using NFS mode. Next create a private endpoint in the virtual network (vnet) used by AKS to allow connecting to the file share.

With the basic service established and the connectivity configuration in place, we can proceed to deal with the more complicated issue of increasing the “read-ahead” kernel setting. This is a step which you really do not want to skip, as the NFS I/O performance will be quite low without increasing it. Remember that it needs to be enabled on all AKS worker nodes where NFS mounts are expected, i.e. on all hosts in the compute and CAS nodepools.

In this blog, we describe how to set the kernel parameter using a daemonset with elevated privileges. Much of our work is based on this article from Microsoft. Although it does not explicitly discuss Kubernetes and the Azure Files service, the steps it describes still apply. Our approach is to add a udev rule to the Kubernetes worker nodes, which is triggered whenever an NFS mount occurs. This may happen at any time, as Kubernetes allows you to specify NFS mounts directly in the pod definition. For example, this snippet might be part of the PodTemplate manifest describing a SAS compute session:

    volumes:
      - name: shared-volume
        nfs:
          server: mystorageaccount.file.core.windows.net
          path: "/mystorageaccount/myshare"

With this configuration, whenever a SAS compute session is started, a new NFS mount will be created on the worker node and we need to make sure that the read-ahead parameter is applied to it. For that purpose we’re leveraging the udev subsystem, which is the Linux Kernel subsystem that is responsible for sending device events:

In plain English, that means it's the code that detects when you have things plugged into your computer, like a network card, external hard drives (including USB thumb drives), mouses, keyboards, joysticks and gamepads, DVD-ROM drives, and so on.

(https://opensource.com/article/18/11/udev)

However, udev not only sends out event notifications – the udev daemon can also run scripts when specific events occur. These scripts (called “rules”) need to be stored as *.rules files in either the /usr/lib/udev/rules.d/ or the /etc/udev/rules.d/ directory for the daemon to load them.

We’ve attached the full deployment manifest to this blog, so we can focus on the most relevant parts in this text. Let’s first investigate the udev rule which we store as a ConfigMap in our cluster:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: set-nfs-readahead-rule-config
      namespace: default
    data:
      99-nfs.rules: |
        SUBSYSTEM=="bdi", ACTION=="add", PROGRAM="/usr/bin/awk -v bdi=$kernel 'BEGIN{ret=1} {if ($4 == bdi) {ret=0}} END{exit ret}' /proc/fs/nfsfs/volumes", ATTR{read_ahead_kb}="15380"

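In this example, we’re setting the read_ahead_kb parameter to 15380, which corresponds to a read-ahead of roughly 15 MB (exactly 15 × 1024 KiB would be 15360). The PROGRAM clause limits the rule to NFS volumes: it only matches if the kernel name of the device ($kernel) appears in the fourth column of /proc/fs/nfsfs/volumes.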
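To see what the awk program in the rule does, it can be wrapped in a function and run against a sample file. The sample line below is a hypothetical stand-in for the content of /proc/fs/nfsfs/volumes on a node with one NFS mount; only the column layout matters here, and the function name is ours.

```shell
#!/bin/sh
# Same awk test as in the udev rule, wrapped in a function so it can be
# tried against a sample file. Exit status 0 means "this bdi device
# belongs to an NFS mount", 1 means it does not.
is_nfs_bdi() {
  bdi="$1"
  volumes_file="${2:-/proc/fs/nfsfs/volumes}"
  awk -v bdi="$bdi" 'BEGIN{ret=1} {if ($4 == bdi) {ret=0}} END{exit ret}' "$volumes_file"
}

# Hypothetical sample data: header line plus one NFS volume on device 0:52.
sample="$(mktemp)"
cat > "$sample" <<'EOF'
NV SERVER   PORT DEV          FSID                              FSC
v4 0a000004  801 0:52         1a2b3c4d:0                        no
EOF

is_nfs_bdi "0:52" "$sample" && echo "0:52 is an NFS device"
is_nfs_bdi "8:0" "$sample" || echo "8:0 is not an NFS device"
```

Because udev only applies the ATTR assignment when the PROGRAM exits with status 0, the read-ahead value of local disks is left untouched.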

As the next step, we need to copy the rule file to all Kubernetes worker nodes and make the udev daemon on these nodes aware of it. For that purpose we’ll be using a daemonset with the appropriate nodeAffinity and tolerations configuration:

    # use this to match _all_ worker nodes
    # nodeSelector:
    #   kubernetes.io/os: linux
    #   kubernetes.azure.com/mode: user
    # OR use this to only deploy the daemonset to the nodes
    # in the compute and cas nodepools
    affinity:
      nodeAffinity:
        requiredDuringSchedulingIgnoredDuringExecution:
          nodeSelectorTerms:
            - matchExpressions:
                - key: workload.sas.com/class
                  operator: In
                  values:
                    - cas
                    - compute
    # this allows the pods to be scheduled on nodes
    # with "workload.sas.com/class" taints
    tolerations:
      - key: workload.sas.com/class
        operator: Equal
        value: compute
        effect: NoSchedule
      - key: workload.sas.com/class
        operator: Equal
        value: cas
        effect: NoSchedule

Changing a Linux kernel parameter requires root permissions, so our daemonset needs to run in privileged mode. Obviously this is a security risk and should be avoided. Luckily, the task we’re trying to accomplish is a “one-time affair” – it only needs to happen once whenever a new worker node comes online. Hence, we can run the commands requiring elevated privileges in an initContainer, which will stop after execution while the main container is just idling to keep the daemonset alive. So, to summarize, there will be no active container using root privileges in our daemonset:

    initContainers:
      - name: init-privileged
        command:
          - (more about this further below)
        ...
        securityContext:
          privileged: true
    containers:
      - name: main-container
        image: k8s.gcr.io/pause:3.1

Moving on to the next challenge: how can we copy a file from a pod to the filesystem of our Kubernetes worker nodes? Remember that the udev rule file needs to be stored in specific directories (/usr/lib/udev/rules.d/ or /etc/udev/rules.d) for the udev daemon to load it. That’s fairly easy: by using a hostPath volume. As mentioned above, we have stored the rule in a ConfigMap, so we can use the approach sketched out below:

    volumes:
      # this is where we have stored the udev rule
      - name: config-volume
        configMap:
          name: set-nfs-readahead-rule-config
      # this directory is monitored by the udev daemon
      - name: host-mount
        hostPath:
          path: /etc/udev/rules.d
    initContainers:
      - name: init-privileged
        # the rule file can be found here
        volumeMounts:
          - name: config-volume
            mountPath: /tmp
          # the host filesystem is mounted here
          - name: host-mount
            mountPath: /host
        command:
          - /bin/sh
          - -c
          - |
            #!/bin/sh
            # copy the file from the pod FS to the host FS
            cp /tmp/99-nfs.rules /host/99-nfs.rules

Of course, the /host directory is only the pod’s view on the node’s filesystem. Seen from “outside” of Kubernetes, the rule file can be found as /etc/udev/rules.d/99-nfs.rules and this is exactly where we (actually the udev daemon, to be precise) want it to be.

That’s almost it – only one last step is missing: how can we make the udev daemon load the new rule into memory? This command needs to be run on the node’s OS and it requires root privileges as well. The Kubernetes solution for this is to use the nsenter command. Here’s how the man page explains what nsenter does:

    NAME
        nsenter - run program in different namespaces

    DESCRIPTION
        The nsenter command executes program in the namespace(s)
        that are specified in the command-line options

(https://man7.org/linux/man-pages/man1/nsenter.1.html)

To better understand how nsenter works, you should recall that Kubernetes pods are not virtual machines, but simply isolated processes running in separate kernel namespaces (don’t confuse Linux kernel namespaces with Kubernetes namespaces). Given the right permissions, we can use nsenter to switch to a different namespace to execute the reload command on the udev daemon. The process we’re interested in has the process ID 1, which is also known as the “init” process in Linux. init is the first user-mode process created when Linux boots up and it runs until the system shuts down. init is owned by the root user and it manages the system daemons (like udev). Note that /proc/1 inside the pod only refers to the node’s init process if the pod shares the host PID namespace (hostPID: true in the pod spec).

Now, with all the pieces coming together, this is what our initContainer command finally looks like:

    initContainers:
      - name: init-privileged
        command:
          - /bin/sh
          - -c
          - |
            #!/bin/sh
            # copy the file from the pod FS to the host FS
            cp /tmp/99-nfs.rules /host/99-nfs.rules
            # reload the udev daemon on the host
            /usr/bin/nsenter -m/proc/1/ns/mnt -- udevadm control --reload

Remember that we have attached the full manifest for the daemonset to this blog. We have also attached a second daemonset which you can use to validate that your read-ahead settings are really working as you intended (it’s mainly useful for debugging purposes and does not need to run all the time).
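The attached validation daemonset is not reproduced here. As a rough idea of what such a check can look like, the following sketch prints the effective read_ahead_kb value for every NFS device. It is our own illustration, not the attached manifest: the function name is hypothetical, and the demo data at the end stands in for a node with one NFS mount.

```shell
#!/bin/sh
# Print "<device> <read_ahead_kb>" for every NFS mount known to the kernel.
# Both paths are parameters so the function can be tried on sample data;
# the defaults are the real kernel interfaces on a worker node.
report_nfs_readahead() {
  bdi_root="${1:-/sys/class/bdi}"
  volumes_file="${2:-/proc/fs/nfsfs/volumes}"
  # Column 4 holds the device id (major:minor); skip the header line.
  awk '$4 != "DEV" && $4 != "" { print $4 }' "$volumes_file" |
  while read -r dev; do
    if [ -r "$bdi_root/$dev/read_ahead_kb" ]; then
      echo "$dev $(cat "$bdi_root/$dev/read_ahead_kb")"
    fi
  done
}

# Hypothetical sample data standing in for a node with one NFS mount.
demo="$(mktemp -d)"
mkdir -p "$demo/bdi/0:52"
echo 15380 > "$demo/bdi/0:52/read_ahead_kb"
printf 'NV SERVER   PORT DEV   FSID   FSC\nv4 0a000004  801 0:52  1a2b3c4d:0  no\n' > "$demo/volumes"

report_nfs_readahead "$demo/bdi" "$demo/volumes"
```

On a worker node with an active NFS mount, calling the function without arguments reads the real kernel files; a value of 128 would indicate that the udev rule has not been applied to that mount.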

And that’s it? Really?

Well, yes and no. Setting the read-ahead parameter certainly was the challenging part, but there are some additional performance settings you should consider. Luckily, these settings are much easier to configure.

The list of parameters we are presenting here has been worked out together with Microsoft Engineering & Product Management for a specific customer case, so your mileage might vary regarding the actual values. However, this list should give you a good starting point. We will not go into details explaining every single parameter – they are easy to look up by doing a Google search. We’d just like to call out one specific parameter, the nconnect= setting, which sets the number of parallel TCP connections between client and NFS server.

The parameters we’re discussing here can all be set in the mountOptions of your Kubernetes PersistentVolume manifests (for static provisioning) or in the configuration of your NFS-backed StorageClass in case you have defined one as your RWX storage provider for dynamic provisioning. Be aware however that you cannot set mount options in the volumes section of your Pod manifests.

Here’s an example of how to statically provision NFS-backed volumes:

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      # PersistentVolumes are cluster-scoped, so no namespace is set
      name: <pv-name>
    spec:
      capacity:
        storage: 10Gi  # <size of share>
      accessModes:
        - ReadWriteMany
      nfs:
        server: <IP address of the private end point>
        path: <Path mentioned in NFS share>
      mountOptions:
        - rsize=1048576
        - wsize=1048576
        - bg
        - rw
        - hard
        - noatime
        - nodiratime
        - rdirplus
        - actimeo=30
        - tcp
        - _netdev
        - nconnect=4

And here is an example of how your NFS-backed storageclass could be defined when the additional mount options are included:

    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: azurefile-premium-nfs
    provisioner: file.csi.azure.com
    allowVolumeExpansion: true
    parameters:
      resourceGroup: <your-resource-group>
      storageAccount: <your-storage-account>
      server: <your-server>.file.core.windows.net
      protocol: nfs
      skuName: Premium_LRS
    reclaimPolicy: Delete
    volumeBindingMode: Immediate
    mountOptions:
      - rsize=1048576
      - wsize=1048576
      - bg
      - rw
      - hard
      - noatime
      - nodiratime
      - rdirplus
      - actimeo=30
      - tcp
      - _netdev
      - nconnect=4
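If you want to try the same option list outside Kubernetes first, for example on a test VM in the same vnet, the options can be joined into the comma-separated form that mount -o expects. This is only a sketch: the helper name is ours, the command is printed rather than executed, and the server, path and mount point are placeholders.

```shell
#!/bin/sh
# Join mount options (one per argument) into the comma-separated form
# expected by "mount -o".
join_mount_options() {
  ( IFS=,; echo "$*" )
}

opts="$(join_mount_options rsize=1048576 wsize=1048576 bg rw hard noatime \
  nodiratime rdirplus actimeo=30 tcp _netdev nconnect=4)"

# Only print the command; server, share path and mount point are placeholders.
echo "mount -t nfs -o $opts <server>:<path> /mnt/test"
```

Keep in mind that the nconnect option is only understood by reasonably recent Linux kernels, so it is worth checking that a mount with these options actually succeeds on the OS image of your nodes.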

Conclusion

In this blog we have discussed how to improve the I/O performance when using NFS shares with the Azure Files service for SAS on Azure Kubernetes. There are two “types” of performance parameters and we highly recommend that you use both when configuring your infrastructure:

- The read-ahead kernel setting which needs to be enabled on the Linux OS level of your Kubernetes worker nodes

- A number of mount options which can be specified when creating PersistentVolumes or when defining a StorageClass.

With all options in place, our tests have shown that NFS file shares using the Azure Files service can be a good and cost-effective alternative for providing shared (RWX) storage to your Viya workload in the Azure cloud.

Thanks for following us through this blog. We hope you found it helpful. Don’t hesitate to reach out in case you have any questions, we are happy to help with more details.


Hi @AbhilashPA ,

The article is quite interesting and could potentially offer a good alternative to using an external NFS server or Azure NetApp Files.

One significant issue is that Microsoft doesn't fully support the NFS 4.1 protocol, especially ACLs for NFS file shares in Azure Files, as outlined in Microsoft Learn. This limitation could become problematic in more complex scenarios involving multiple projects, where basic POSIX file permissions might not be sufficient to meet the necessary requirements.
