Thinking about CAS resource management

The ability to configure Cloud Analytic Services (CAS) resource management (from within the product) has been evolving since Viya 3.1. So, while there are details on how to configure the CAS resource management in the SAS documentation, in this blog I want to focus more broadly on CAS resource management and some options for multi-tenancy deployments.

When getting serious about resource and/or workload management you need to consider factors like: What level of user resource management is possible? Is it better to share a large CAS server or use multiple CAS servers? Do you understand the processing workload?

It is important to understand what controls are available and how they can be applied to implement a resource management strategy for the CAS server.

But first some background…

This discussion applies only to Linux deployments, because multiple CAS servers and multi-tenancy are not configuration options for a Windows deployment. The use of quotas in CAS resource management, however, is applicable on both Linux and Windows.

The ability to configure CAS resource management has evolved as follows:

- cas.MEMORYSIZE was introduced with Viya 3.1

- cas.MAXSESSIONS was introduced with Viya 3.2

- Viya 3.4 introduced:

  - cas.CPUSHARES

  - cas.MAXCORES

  - Priority-Level Policies

CAS resource management is achieved on the CAS server, with a policy or server options that apply to a specific server. If you have additional CAS servers on which you want to manage resources, then you must create a unique policy or server option definition for each. No steps need to be performed on the client side.

On Linux platforms, CAS has resource management capabilities that enable administrators to control CAS table size and CPU consumption. CAS relies on the Linux kernel feature, cgroups, to provide the CPU and memory consumption control. You must enable cgroups before you can fully use CAS resource management.

You can implement resource management broadly through the use of the cas.MEMORYSIZE and cas.CPUSHARES server configuration options, or more specifically through policies that you create with the SAS Viya command line interface.

It is also possible to limit the maximum number of concurrent CAS sessions using the cas.MAXSESSIONS option; the default is 5,000.

The cas.MAXCORES option can also be used to limit a CAS server’s CPU consumption, and it doubles as a mechanism to help comply with core-based licensing.

All these options are set in the casconfig_usermods.lua file. To enable resource management policies, you also need to add the following line to that file:

env.CAS_ENABLE_CONSUL_RESOURCE_MANAGEMENT=true

The casconfig_usermods.lua file is located in the directory path '/opt/sas/viya/config/etc/cas/default'

If you have multiple CAS servers the configuration directories will be

'/opt/sas/viya/config/etc/cas/[InstanceName]/'

In a multi-tenancy deployment the configuration directories will be

'/opt/sas/[tenantID]/config/etc/cas/default'
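To make the three layouts concrete, here is a minimal Python sketch that builds the configuration directory for each case. The function name `cas_config_dir` and the example instance and tenant names are my own illustrations, not part of any SAS tooling; only the path patterns come from the text above.

```python
def cas_config_dir(instance="default", tenant_id=None):
    """Return the directory that holds casconfig_usermods.lua.

    instance  -- CAS server instance name ('default' for the first server)
    tenant_id -- tenant identifier in a multi-tenancy deployment, else None
    """
    if tenant_id is not None:
        # The article only documents the 'default' instance for a tenant,
        # so anything else is rejected rather than guessed.
        if instance != "default":
            raise ValueError("only the 'default' instance path is documented for tenants")
        return f"/opt/sas/{tenant_id}/config/etc/cas/default"
    return f"/opt/sas/viya/config/etc/cas/{instance}"


# Hypothetical names, used only to show the three cases.
single_server = cas_config_dir()
extra_server = cas_config_dir(instance="cas-analytics")
tenant_server = cas_config_dir(tenant_id="tenantb")
```

The instance name `cas-analytics` and the tenant ID `tenantb` are placeholders; substitute the values from your own deployment.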

In Viya 3.4 and 3.5, CAS Resource Management creates the required cgroups at CAS server startup, based on policy rules stored in the SAS Configuration Server (Consul). The cgroups are removed at CAS server shutdown.

For more information about CAS resource management, see SAS Cloud Analytic Services: CAS Resource Management in SAS Viya Administration Guide.

It is also possible to set priorities using the following policy types:

- priorityLevels: Up to five policies per CAS server that place a disk cache space quota on personal caslibs and a CPU consumption limit on CAS sessions. Priority levels are numbered from 1 (highest) to 5 (lowest).

- globalCaslibs: One policy per CAS server that places a disk cache space quota on global caslibs.

- priorityAssignments: One policy per CAS server to force the priority level for specific users.
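The limits on these three policy types can be captured in a short check. The sketch below is only an illustration of the rules just listed: the `(policy_type, priority_level)` tuple layout and the function name `check_policy_set` are assumptions of mine and do not reflect the format used by the SAS Viya command line interface.

```python
from collections import Counter

MAX_PRIORITY_LEVEL_POLICIES = 5                            # up to five per CAS server
SINGLETON_TYPES = ("globalCaslibs", "priorityAssignments")  # one each per CAS server
KNOWN_TYPES = ("priorityLevels",) + SINGLETON_TYPES


def check_policy_set(policies):
    """Return a list of problems for one CAS server's policies.

    policies -- list of (policy_type, priority_level) tuples (hypothetical
                layout); priority_level is an int for priorityLevels
                policies and None for the other two types.
    """
    problems = []
    counts = Counter(ptype for ptype, _ in policies)

    for ptype in counts:
        if ptype not in KNOWN_TYPES:
            problems.append(f"unknown policy type {ptype}")

    if counts["priorityLevels"] > MAX_PRIORITY_LEVEL_POLICIES:
        problems.append("more than five priorityLevels policies")

    for ptype in SINGLETON_TYPES:
        if counts[ptype] > 1:
            problems.append(f"more than one {ptype} policy")

    # Priority levels run from 1 (highest) to 5 (lowest).
    for ptype, level in policies:
        if ptype == "priorityLevels" and level not in range(1, 6):
            problems.append(f"priority level {level} is outside 1..5")

    return problems


valid = check_policy_set([
    ("priorityLevels", 1),
    ("priorityLevels", 5),
    ("globalCaslibs", None),
    ("priorityAssignments", None),
])
invalid = check_policy_set([("globalCaslibs", None), ("globalCaslibs", None)])
```

Here `valid` comes back empty, while `invalid` reports the second globalCaslibs policy.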

CAS Resource Management

As discussed above, CAS offers several options for managing the physical resources it uses, such as CPU, RAM, and more.

CAS also offers other workload management features, such as limiting the total number of sessions that a CAS server will host at a time (cas.MAXSESSIONS). Individual sessions can also be limited to run on a subset of CAS workers (using the cas.NWORKERS option).

However, CAS takes a different approach to workload management than you might otherwise be familiar with. CAS relies on data distribution as its primary mechanism for managing workloads. The CAS server will typically distribute data loaded into memory evenly across its workers, which is why we often stress the importance of keeping CAS worker hosts equal in terms of overall resource availability.

This allows the processing to be scaled across multiple machines and provides a highly scalable architecture.

There are, however, several important considerations to keep an eye on. For example, partitioning data in CAS forces each complete partition to reside on a single CAS worker. If your partitioning is poor, then some CAS workers will have much larger tasks to perform than others, reducing overall CAS efficiency.
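The effect of poor partitioning is easy to see with a little arithmetic. The following Python sketch compares an even spread of rows with a partitioned table in which one partition dominates. The row counts are invented, and the placement rule (largest partition onto the least-loaded worker) is a simplifying assumption for illustration, not a description of how CAS actually chooses a worker.

```python
def rows_per_worker_even(n_rows, n_workers):
    """Spread rows as evenly as possible across the workers."""
    base, extra = divmod(n_rows, n_workers)
    return [base + (1 if w < extra else 0) for w in range(n_workers)]


def rows_per_worker_partitioned(partition_sizes, n_workers):
    """Place whole partitions on workers; a partition is never split.

    Assumed placement rule: largest partition first, onto the worker
    that currently holds the fewest rows.
    """
    loads = [0] * n_workers
    for size in sorted(partition_sizes, reverse=True):
        loads[loads.index(min(loads))] += size
    return loads


def skew(loads):
    """Busiest worker relative to the mean load; 1.0 is perfectly balanced."""
    return max(loads) / (sum(loads) / len(loads))


# Hypothetical table: one million rows on four workers.
even = rows_per_worker_even(1_000_000, 4)
partitioned = rows_per_worker_partitioned([700_000, 100_000, 100_000, 100_000], 4)
```

With these numbers the even spread gives every worker 250,000 rows, while the partitioned table leaves one worker with 700,000 rows, so that worker does 2.8 times the average work and the other three finish early and wait.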

Understanding how to combine these various resource and workload management concepts together in your environment is a crucial skill to ensure maximum performance and efficiency.

Additionally, it is important to understand what controls are available when thinking about the need and/or benefits from having multiple CAS servers and how best to achieve the overall processing requirements for CAS.

In the following we will discuss some multi-tenancy deployment patterns for CAS servers when sharing and dedicating resources.

Multiple CAS Servers within a Viya deployment: is this the best approach?

With the Viya 3.4 release it became possible to have multiple CAS Servers even in a non multi-tenancy deployment. A non multi-tenancy deployment is sometimes referred to as a single-tenant deployment, and the phrase ‘single-tenant deployment’ will be used for this discussion.

In terms of the deployment process, you always start with a single CAS Server (SMP or MPP), “cas-shared-default”, and you can then add new host machines on which to install additional CAS Servers (SMP or MPP).

Note, as of Viya 3.4 (19w34) it is only possible to configure 2 or more CAS Servers that share host machines (physical or virtual) when a multi-tenancy environment is defined. With a single-tenant deployment you can have multiple CAS Servers, but they MUST be installed on separate dedicated host machines.

So, a key decision is whether to separate the workload onto separate CAS servers, or to have all users share a single, possibly larger, CAS server.

If you are using a multi-tenancy deployment, when multiple CAS Servers are running on the same machine you have to rely on setting policies and using the cas.MEMORYSIZE option and the cas.CPUSHARES or cas.MAXCORES options for each CAS Server to manage the resource allocations.

This allows for hard limits to be set on the amount of physical memory that is available to each CAS server and to limit the CPU consumption using CPU shares or by restricting the number of cores available to each CAS server.

However, this approach may not provide the desired result when there are users and user groups that require access to multiple CAS Servers. Additionally, this configuration is just restricting the CPU and memory consumed by each CAS Server. It is still possible to end up with one CAS Server affecting another CAS Server’s resources when sharing the same host machine.

For example, in a multi-tenancy environment where you have multiple CAS servers running on the same host machine, the CAS options do not apply across the whole platform; they apply individually to each CAS server. So, it is still possible to overcommit resources at the operating system level.
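A quick way to spot this is to add up the per-server limits that land on one host and compare the total with what the host actually has. The Python sketch below does exactly that. The host size, the limits and the dictionary keys are all hypothetical, and it assumes each limit has already been expressed as a per-host figure.

```python
def overcommit(host_cores, host_memory_gb, servers):
    """Return (cores, memory_gb) by which the combined limits exceed the host.

    servers -- list of dicts with the hypothetical keys 'cores_on_host'
               and 'memory_gb', one dict per CAS server on this host.
    A result of (0, 0) means the limits fit within the host.
    """
    total_cores = sum(s["cores_on_host"] for s in servers)
    total_memory = sum(s["memory_gb"] for s in servers)
    return (max(0, total_cores - host_cores),
            max(0, total_memory - host_memory_gb))


# Hypothetical host: 16 cores and 128 GB of RAM, shared by two CAS servers.
servers_on_host = [
    {"name": "cas-server-1", "cores_on_host": 12, "memory_gb": 96},
    {"name": "cas-server-2", "cores_on_host": 8, "memory_gb": 64},
]
over_cores, over_memory = overcommit(16, 128, servers_on_host)
```

Each server is within its own limit, yet together they ask for 4 cores and 32 GB more than the host can supply, which is the overcommit situation described above.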

So what approach is best for running multiple CAS Servers?

I wish I could answer that question for you, but it depends on many factors. To answer it you need a deep understanding of the processing workload, the usage patterns (across the platform) and the outcome that you are trying to achieve.

Using cas.MAXCORES with shared host machines

Rather than setting CPU shares (cas.CPUSHARES) to restrict CPU usage by CAS, is there a better way to set CPU limits for CAS when sharing host machines?

To illustrate the approach of using cas.MAXCORES let’s discuss the following scenario.

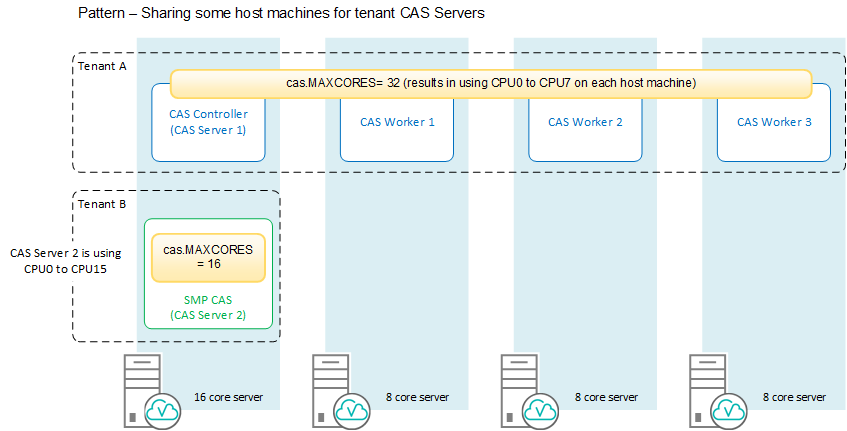

The organisation is using multi-tenancy to implement a shared platform for production and discovery. The production environment (Tenant A) has a capacity of 32 cores, while the discovery environment (Tenant B) is to be a smaller environment for the data scientists and should have a capacity of 16 cores.

When thinking about sharing the host machines for CAS servers, the first thing to understand is that Viya will always try to evenly distribute the cores being used across all the servers (host machines) defined for CAS (controller and workers).

The second consideration is that at the operating system (host) level CAS will always start using cores sequentially from CPU0.

Figure 1 illustrates using two MPP CAS servers and shows how the CPU cores will be allocated to each CAS Server. If we assume we are using 8 core servers, you can see that Tenant A (CAS Server 1) will use all available cores (CPU0 to CPU7) on each host, while Tenant B (CAS Server 2) will only use 4 cores (CPU0 to CPU3) on each server. Both CAS servers are sharing CPU0 to CPU3 on each host.
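The Figure 1 numbers can be reproduced with a few lines of Python. This is a sketch under the two behaviours described above (even distribution across hosts, and sequential use of cores from CPU0). It also assumes four hosts, which is what a 32-core capacity on 8-core machines implies; the function names are mine.

```python
def cores_per_host(max_cores, n_hosts):
    """Split a CAS server's core limit evenly across its hosts."""
    if max_cores % n_hosts:
        raise ValueError("this sketch only handles limits that divide evenly")
    return max_cores // n_hosts


def cpu_ids(n_cores_on_host):
    """CPUs used on one host, taken sequentially starting at CPU0."""
    return set(range(n_cores_on_host))


N_HOSTS = 4  # assumption: 32 cores / 8 cores per host

tenant_a = cpu_ids(cores_per_host(32, N_HOSTS))  # CAS Server 1
tenant_b = cpu_ids(cores_per_host(16, N_HOSTS))  # CAS Server 2
shared = sorted(tenant_a & tenant_b)             # CPUs both servers use
```

Tenant A ends up on CPU0 to CPU7 and Tenant B on CPU0 to CPU3, so `shared` contains CPUs 0 to 3 on every host.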

Rather than using an MPP CAS server for both tenants, it might be better to use an SMP CAS server for the smaller environment.

For example, Figure 2 illustrates using an MPP CAS server for Tenant A and an SMP CAS server for Tenant B. This time we assume that the shared server has 16 cores and that the workers are 8-core servers. In this configuration we get the following CPU usage:

- Tenant A (CAS Server 1) will use 8 cores on each server.

- Tenant B (CAS Server 2) will make use of 16 cores on a single host.

While this separates the CAS worker processing, as Tenant A has dedicated workers, it might mean that the Tenant B processing could impact the CAS controller for CAS server 1.

As can be seen from these examples, there will always be some overlap of the CPUs being used on each server. So, while cas.MAXCORES is a tool that can help with keeping within the licensing capacity, it may have only a limited role to play in resource management when sharing host machines.

If the capacity definition is the same for both CAS servers, then using cas.CPUSHARES may be the best option.

Finally, sharing host machines (CPUs) could be seen as antithetical to the goals of workload management. In this scenario all tenants share the same limited number of cores, so there is no intrinsic way to prioritize one tenant over another, or to dedicate cores to a single tenant.

Therefore, if both tenants have high processing workloads the best approach would be to have dedicated servers. This is shown in Figure 3.

Conclusion

Today, we have discussed some of the controls that you have for CAS processing. Before setting any policies or resource constraints you need to have a clear understanding of the outcome you are trying to achieve, and you need to understand the processing workload and usage patterns (across the platform).

While it is possible to share host machines for CAS servers when using a multi-tenancy deployment, doing so gives only some level of control. For true workload separation, using multiple CAS servers on dedicated host machines is currently the best approach (Figure 3). This provides better control over CAS resource allocation and usage, but can have an impact on licensing.

I hope this is useful and thanks for reading.
