- Home

- /

- SAS Communities Library

- /

- SAS Viya/CAS accessing Azure HDInsight

- RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

SAS Viya/CAS accessing Azure HDInsight

- Article History

- RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

Azure HDInsight is a cloud distribution of Hadoop components. A SAS user may wonder how to connect an application with an HDI cluster. The SAS/ACCESS Interface to Hadoop and SAS Data connector to Hadoop technology enables SAS users to connect and access data from the HDInsight cluster.

This post is about the components required for SAS 9.4 (M6/7) and CAS to connect and access the Hive tables from the Azure HDInsight cluster.

Azure HDInsight

Azure HDInsight is a managed, open-source Hadoop cluster service in the cloud. Azure HDInsight enables a user to create a global and optimized cluster with open-source frameworks such as Apache Hadoop, Spark, Hive, Interactive Query, Kafka, etc. . It provides a scalable Hadoop cluster that enables users to scale up or down based on workload. HDInsight integrates seamlessly with the most popular big data solutions

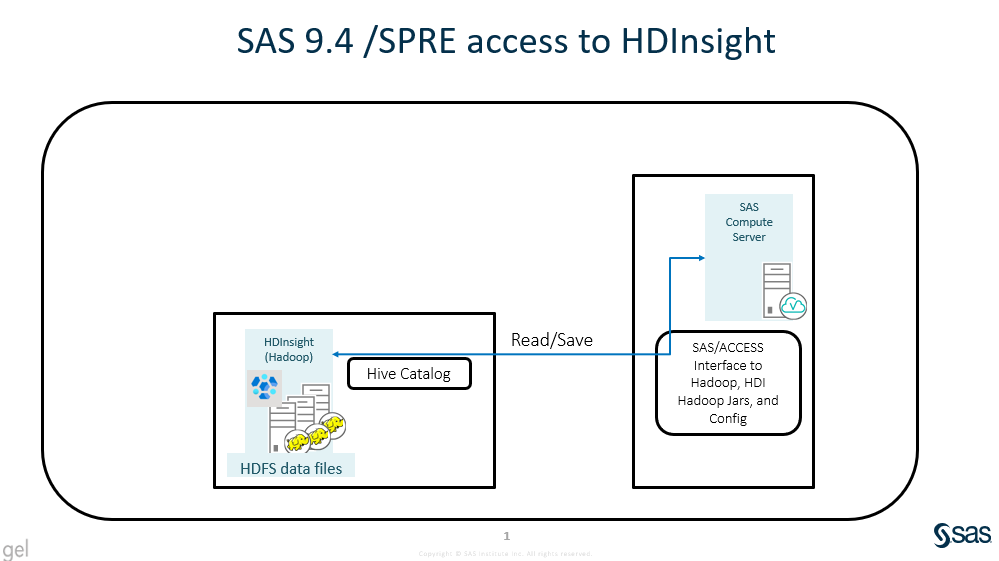

Data access from SAS 9.4

The SAS 9.4 (M6/7) can access hive tables from the HDInsight Hadoop cluster using SAS/ACCESS Interface to Hadoop technology. The following diagram describes the data path and components required to access the HDInsight Hadoop cluster.

Mobile users: To view the images, select the "Full" version at the bottom of the page.

Pre-requisites

- SAS/ACCESS Interface to Hadoop at SAS Compute server.

- HDInsight Hadoop cluster with Hive Catalog.

- HDI Hadoop Jars and Configuration files at SAS Compute server.

- JAVA_HOME path in LD_LIBRARY_PATH at SAS Compute server.

The HDInsight Hadoop cluster allows ssh to login to the master node server. A valid user can log in to the HDI master node and execute the SAS-Hadoop-Tracer script to collect required Hadoop Jars and config files.

The SAS Embedded Process ( SAS EP) for Hadoop is not supported at the HDInsight Hadoop cluster, hence the in-database processing components (Code Accelerator, Data Quality Accelerator, etc.) are not supported.

Sample code to read/write data into HDInsight:

option set = SAS_HADOOP_CONFIG_PATH = "/opt/sas/viya/config/data/HDIHadoopData/conf";

option set = SAS_HADOOP_JAR_PATH = "/opt/sas/viya/config/data/HDIHadoopData/lib";

libname hdilib HADOOP

uri='jdbc:hive2://hdiutkuma5.azurehdinsight.net:443/default;ssl=true?hive.server2.transport.mode=http;hive.server2.thrift.http.path=hive2'

server='hdiutkuma5.azurehdinsight.net'

user="admin" pw=“XXXXXXXXX" schema="default";

data hdilib.cars_test;

set sashelp.cars;

run ;

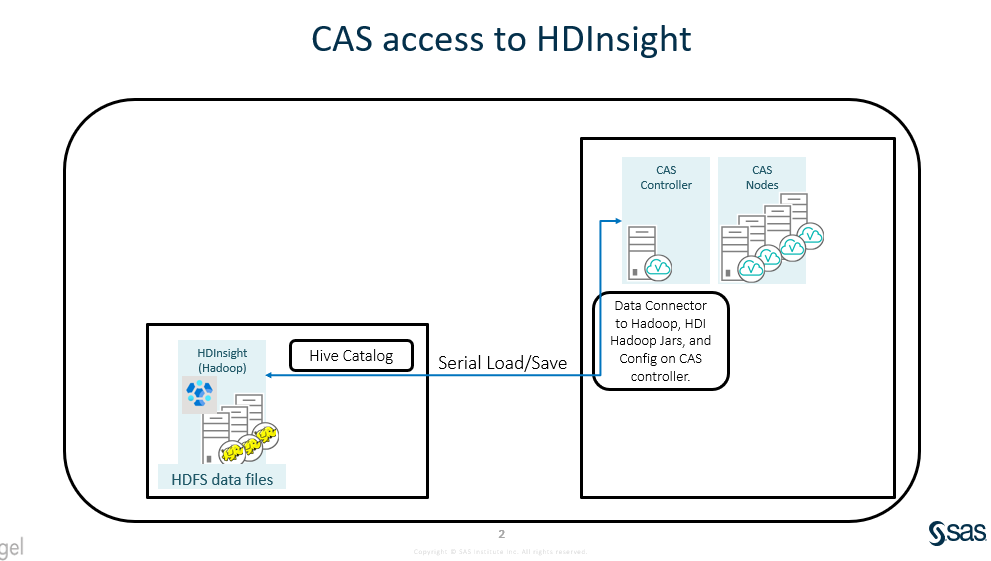

Data access from CAS

CAS supports the Serial mechanism to load and save data to Azure HDInsight Hive table using SAS Data Connector to Hadoop. The following diagram describes the data path and components requires to access the Azure HDInsight.

Pre-requisites

- SAS Data Connector to Hadoop at CAS Controller.

- HDInsight Hadoop cluster with Hive Catalog.

- HDI Hadoop Jars and Configuration files at CAS Controller

- JAVA_HOME path in LD_LIBRARY_PATH at CAS configuration setting.

The HDInsight Hadoop cluster allows ssh to log in to the master node server. A valid user can log in to the HDI master node and execute the SAS-Hadoop-Tracer script to collect required Hadoop Jars and config files.

The SAS Embedded Process ( SAS EP) for Hadoop is not yet supported at the HDInsight Hadoop cluster. Hence, the parallel data load to CAS is not supported. There is a known bug with the Multi-Node load method, the SAS data connector for Hadoop does not work well with specific hive numeric data column to create the split query. Hence, the Multi-Node data load/save to CAS is not supported.

Sample code to read/write data into HDInsight :

CAS mySession SESSOPTS=(CASLIB=casuser TIMEOUT=99 LOCALE="en_US" metrics=true);

caslib cashive datasource=(srctype="hadoop",

server="hdiutkuma8.azurehdinsight.net",

username="admin", pwd=“XXXXXXXXXX", schema=default,

uri='jdbc:hive2://hdiutkuma8.azurehdinsight.net:443/default;ssl=true?hive.server2.transport.mode=http;hive.server2.thrift.http.path=hive2',

hadoopconfigdir="/opt/sas/viya/config/data/HDIHadoopData/conf",

hadoopjarpath="/opt/sas/viya/config/data/HDIHadoopData/lib");

proc casutil incaslib="cashive" outcaslib="cashive" ;

load data=sashelp.prdsal3 casout="prdsal3" replace;

save casdata="prdsal3" casout="prdsal3" replace;

quit;

proc casutil incaslib="cashive" outcaslib="cashive";

load casdata="prdsal3" casout="prdsal3_new" replace;

list tables;

quit;

CAS mySession TERMINATE;

Stay tuned for the next article on "SAS and CAS reading ADL2 files (Parquet, Avro, Jason, XML, etc.) via the HDInsight cluster".

Additional Resources

Catch up on SAS Innovate 2026

Nearly 200 sessions are now available on demand with the SAS Innovate Digital Pass.

Explore Now →SAS AI and Machine Learning Courses

The rapid growth of AI technologies is driving an AI skills gap and demand for AI talent. Ready to grow your AI literacy? SAS offers free ways to get started for beginners, business leaders, and analytics professionals of all skill levels. Your future self will thank you.