SAS Customer Intelligence 360: Model management for competitive differentiation


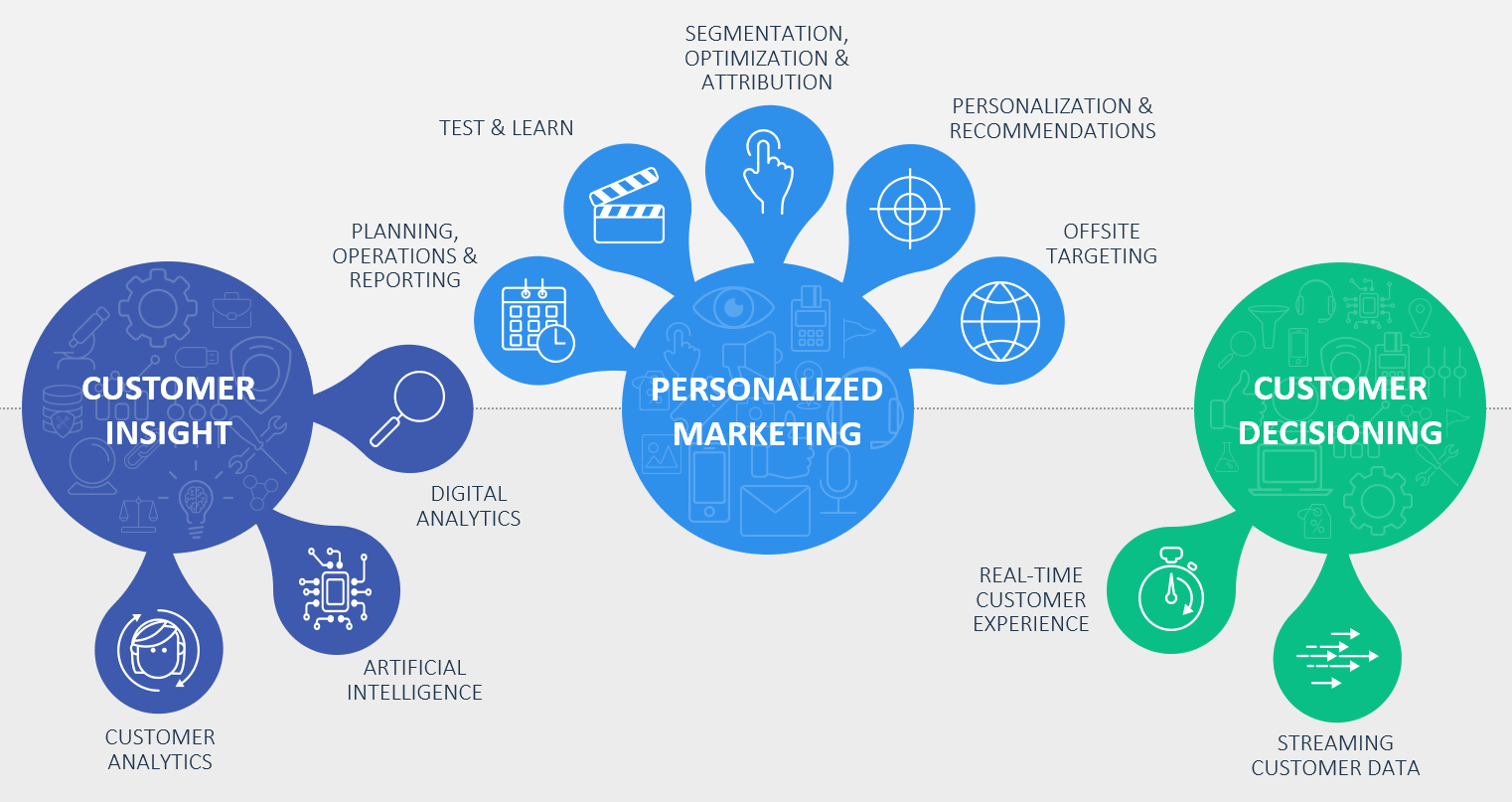

The universe of customer experiences, digital analytics, personalization and decisioning is massive. At times, it can seem as complicated and vast as the galaxy itself. With intricate subjects underneath this umbrella, you can lose direction, wander aimlessly, or feel a misleading sense of success or failure. When you lose vision, your appetite for predictive clarity increases.

From tactical to strategic, there are categories of analysis that range from foundational to advanced for every brand. Consider the unique altitudes of dependency for various metrics and key performance indicators by organizational role:

Analysts and managers:

- Click-through rate

- Cost-per-acquisition

- Checkout abandon rate

- Journey path length

Directors:

- Gross profit

- Conversion rate

- Likelihood to recommend

- Micro outcomes

Vice presidents and C-suite executives:

- Lifetime value

- Incremental profit per channel

- Per visit goal value

- Profit per customer

Altitude defines the scope and significance of decisions. It also influences how often data is received and how deep the associated insights go. For example, managers are typically required to make tactical decisions that affect, say, tens of thousands of dollars. At the other end of the spectrum, vice presidents shoulder the responsibility for sweeping strategic decisions that affect tens of millions of dollars.

When offered delicious data, everyone wants more.

In my encounters with marketing professionals across industries, I frequently hear that more data can lead to better results. Just take a look at the martech space in the last five years. The rise of DMPs and CDPs has been hard to ignore, yet topics like predictive analytics and decision management are somehow treated as optional.

Wow! Insert confused emoji image here please.

If more data simply equaled smarter decisions, there would be peace on earth. According to SAS COO and CTO Oliver Schabenberger:

“Data without analytics is value not yet realized.”

We can do so much better. A core part of our job, as individual contributors or leaders within the analytics practice, is to ensure that prescriptive insights reach the right individual at the appropriate time. Machine learning and artificial intelligence are all the rage right now. For each of the metrics listed above, there could be a model to help. I recently wrote about how users of SAS Customer Intelligence 360 can efficiently perform end-to-end machine learning projects. For readers seeking a primer, please check it out.

What happens when a brand develops an inventory of models ready for action?

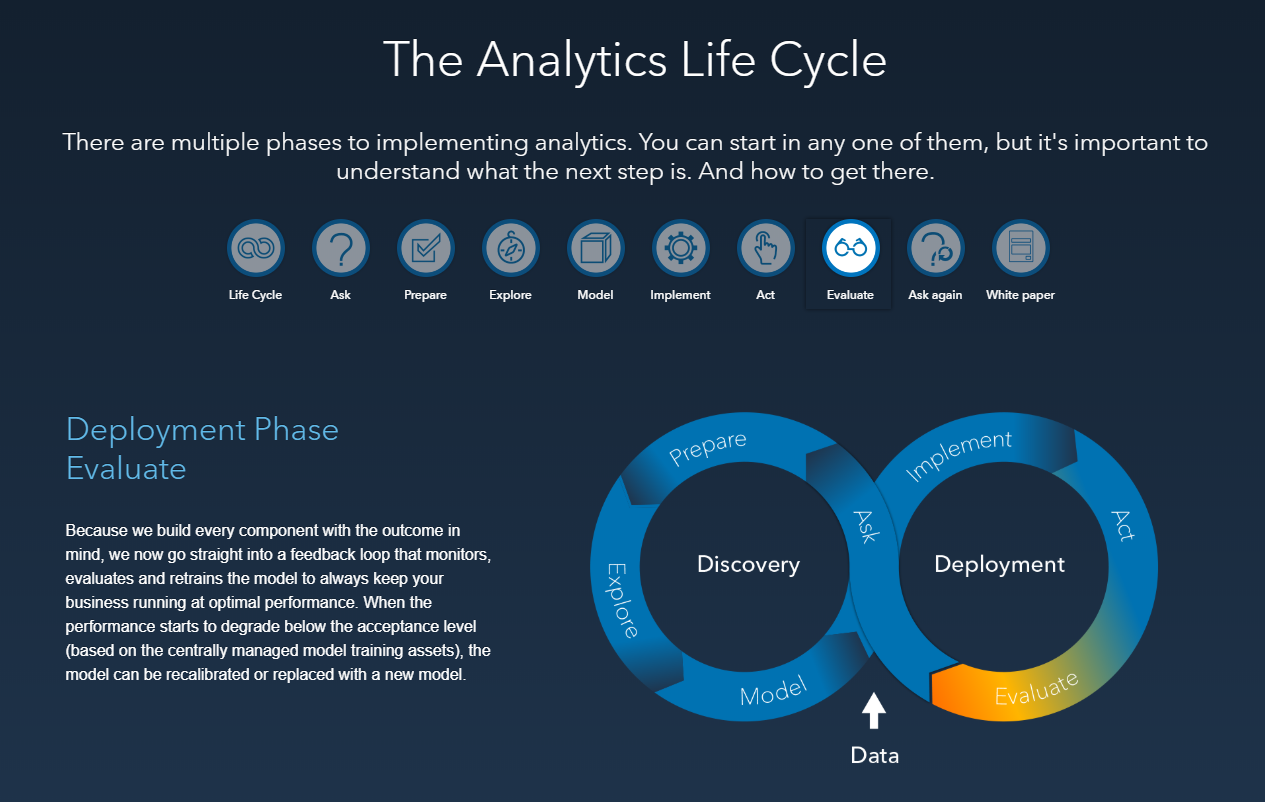

Today’s machine learning techniques allow analysts to train more models, faster than ever. As efficiency increases, authoring models becomes only one aspect of the analytical lifecycle that brands need to consider. As the number of models grows to support more business objectives, so does the need to manage these assets as valuable competitive differentiators.

Model management is not a one-time activity, but an essential business process. Models must be well developed and validated to demonstrate that they are working as expected. Outcome analysis is also necessary to:

- Ensure that the scores derived from applying the model to new data are accurate.

- Verify that model performance over time remains satisfactory.

Other aspects include cataloging and tracking this growing inventory of analytical assets, while providing support for the governance of these models using version control through repeatable and traceable workflows.

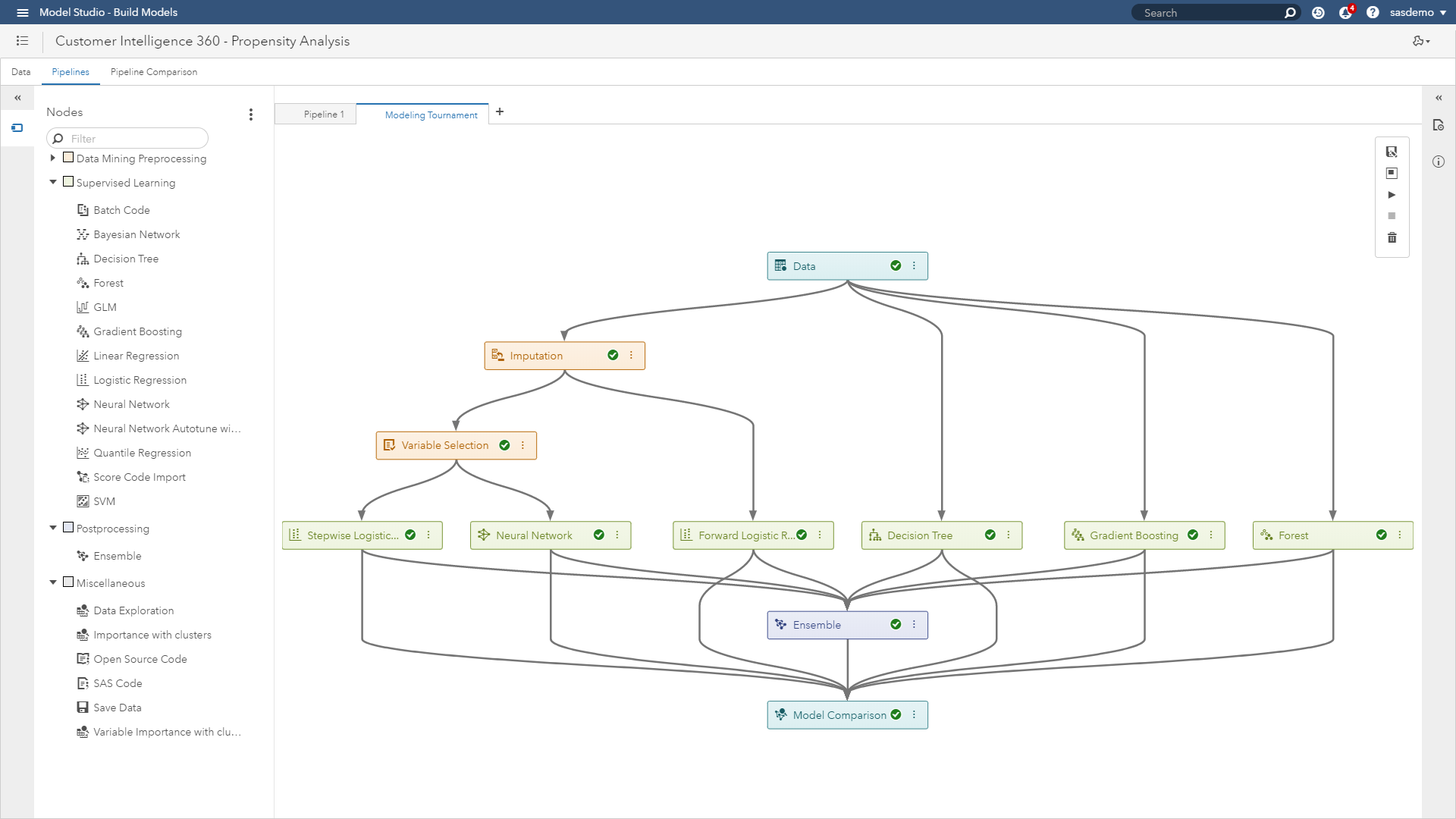

Here’s an example using multiple models constructed to address propensity targeting on sas.com. Working from structured views of web visitor data captured by SAS Customer Intelligence 360, users can construct machine learning pipelines in Model Studio within SAS Visual Data Mining and Machine Learning.

(Figure 3: SAS Customer Intelligence 360 & SAS Visual Data Mining & Machine Learning - Model studio pipeline project)

In the figure above, the pipeline project is using seven different algorithmic approaches to identify the approach that will maximize scoring precision and minimize error:

- Stepwise logistic regression

- Neural network

- Forward step logistic regression

- Decision tree

- Gradient boosting

- Forest

- Ensemble

In other words, predictive accuracy. For those of you interested in technical documentation of these modeling procedures, please go here.

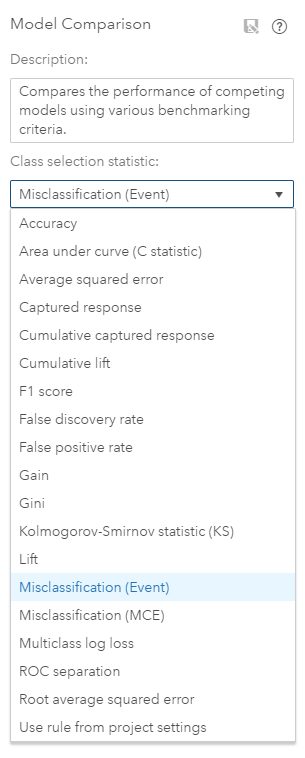

(Figure 4: SAS Customer Intelligence 360 & SAS Visual Data Mining & Machine Learning - Model comparison)

Figure 4 highlights the menu of options available as selection criteria for the champion model. In this example, you would select the misclassification event rate because you want to maximize accuracy in predicting who is likely to convert, which helps your marketing team achieve higher returns.
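To make the selection criterion concrete, here is a minimal Python sketch of picking a champion by lowest misclassification rate on holdout data. It is an illustration only, not how Model Studio computes its comparison: the `Candidate` class, the function names and the tiny label lists are all made up for this example.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Candidate:
    """A hypothetical container for one model's holdout results."""
    name: str
    actual: List[int]     # 1 = converted, 0 = did not convert
    predicted: List[int]  # predicted class for the same holdout rows


def misclassification_rate(actual, predicted):
    """Share of holdout rows where the predicted class differs from the actual class."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("actual and predicted must be non-empty and the same length")
    wrong = sum(1 for a, p in zip(actual, predicted) if a != p)
    return wrong / len(actual)


def pick_champion(candidates):
    """Return the candidate with the lowest misclassification rate."""
    return min(candidates, key=lambda c: misclassification_rate(c.actual, c.predicted))


# Made-up holdout labels and predictions, for illustration only.
holdout = [1, 0, 0, 1, 0, 1, 0, 0]
candidates = [
    Candidate("decision_tree", holdout, [1, 0, 1, 0, 0, 1, 0, 1]),
    Candidate("gradient_boosting", holdout, [1, 0, 0, 1, 0, 1, 0, 1]),
]
champion = pick_champion(candidates)
print(champion.name)
```

With these invented numbers the decision tree misclassifies three of eight rows and the gradient boosting model one of eight, so the latter is chosen.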

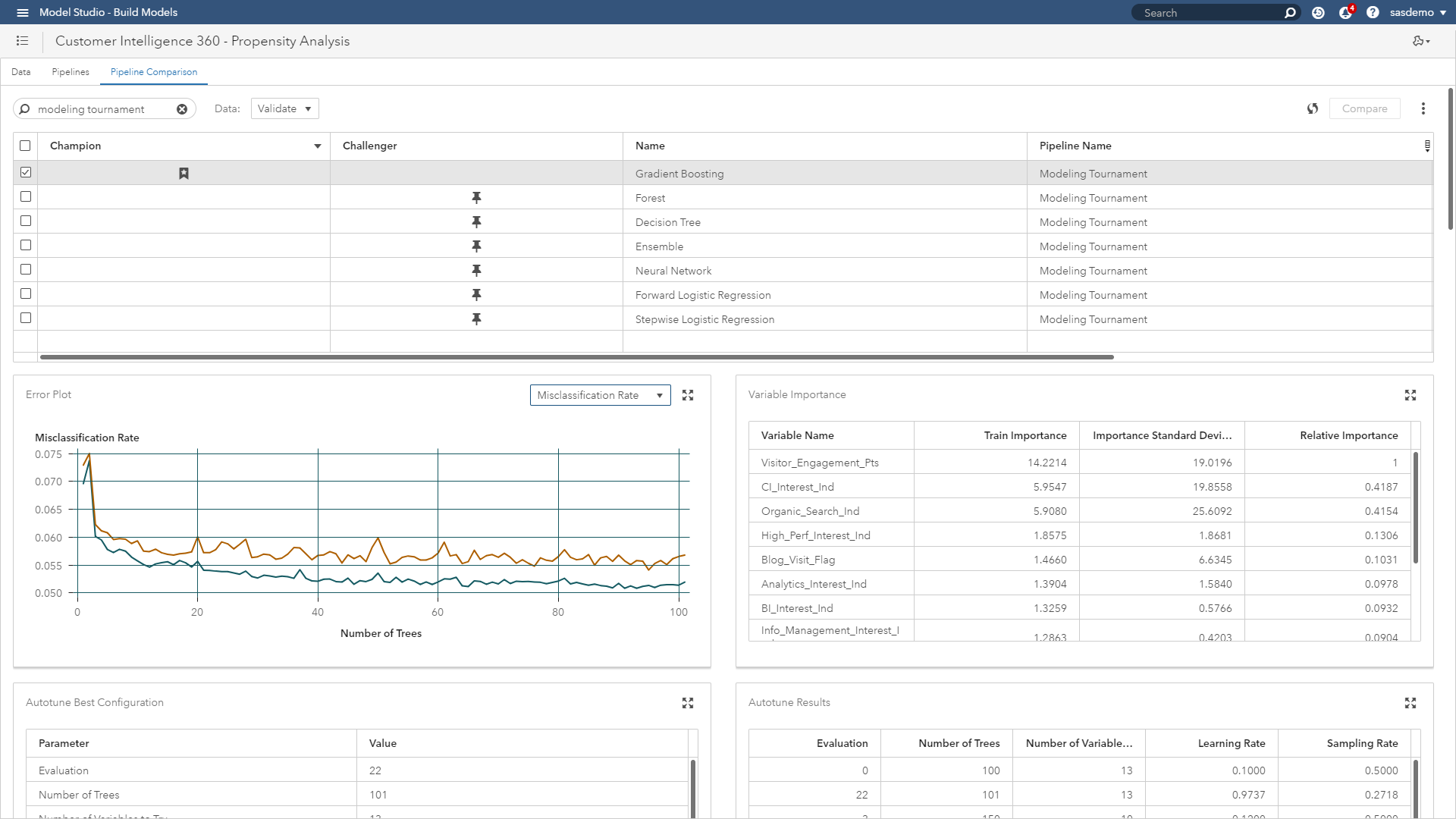

This results in an auto-tuned gradient boosting model outperforming all other challengers. The pipeline comparison dashboard shown below provides a deep set of model interpretability visualizations, diagnostics and scoring logic.

(Figure 5: SAS Customer Intelligence 360 & SAS Visual Data Mining & Machine Learning - Modeling pipeline project dashboard)

Demonstrating how brands manage actionable analytical assets

Now that you have an analysis selected worthy of addressing your marketing team’s business problem, you can begin managing the models for deployment. Options include:

- Publish models for scoring directly in databases.

- Score holdout data.

- Import the scoring of other models for comparison.

- Download score code in multiple languages.

- Download a scoring API for invoking models as web services.

(Figure 6: SAS Customer Intelligence 360 & SAS Visual Data Mining & Machine Learning - Making models available to SAS Model Manager)

Users can store models in a common model repository and organize them within projects and folders. A project consists of the models, attributes, tests and other resources that you use to:

- Evaluate models for champion model selection.

- Monitor model performance to minimize exposure to decaying predictions.

- Publish models to other areas of the SAS platform or third-party technologies.

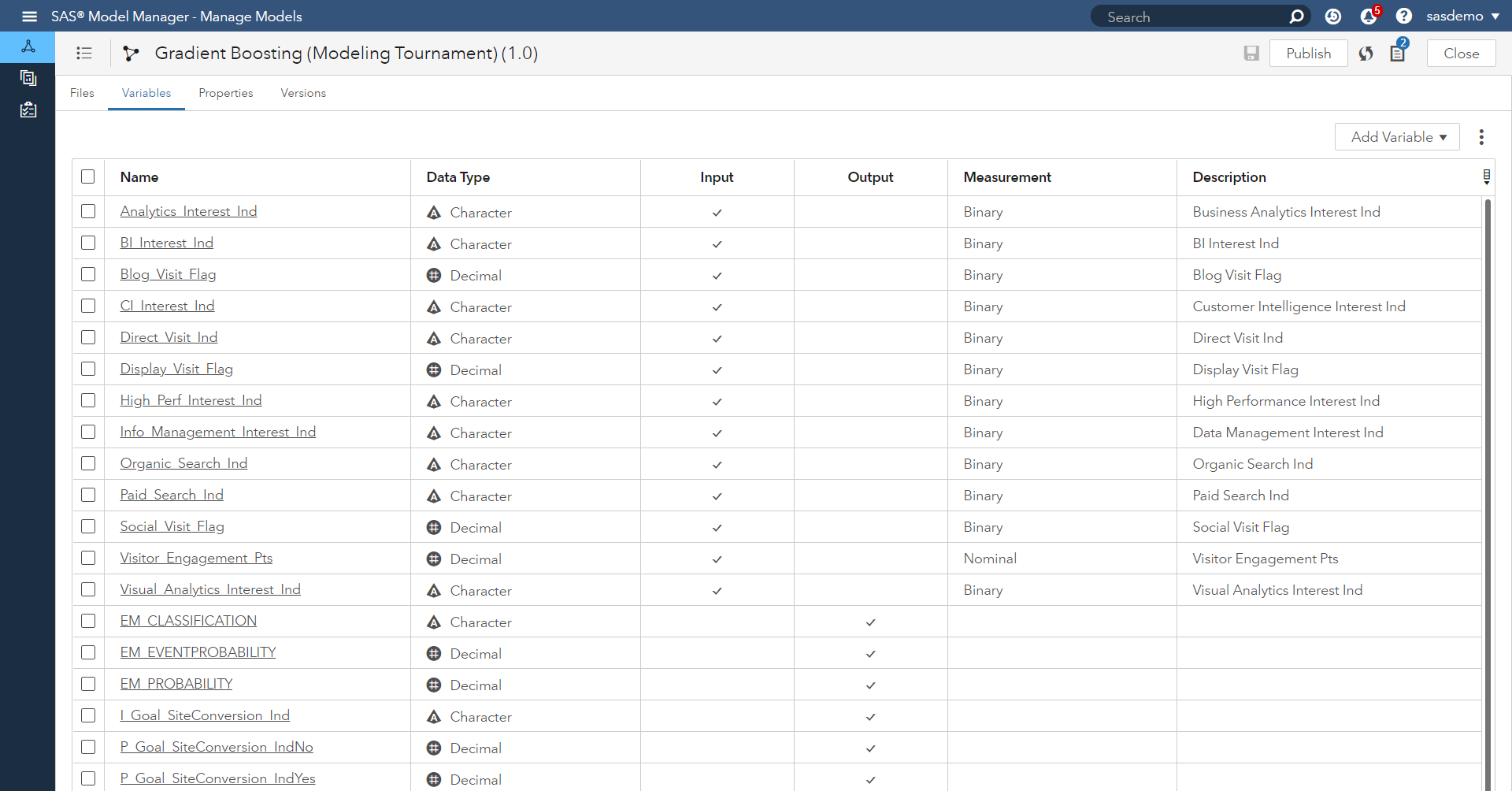

All model development and maintenance personnel, including data modelers, validation testers, scoring officers and analysts, can use and benefit from these features. We begin by opening the gradient boosting model in Figure 7 to highlight how users can add, review and customize the model’s input and output variables.

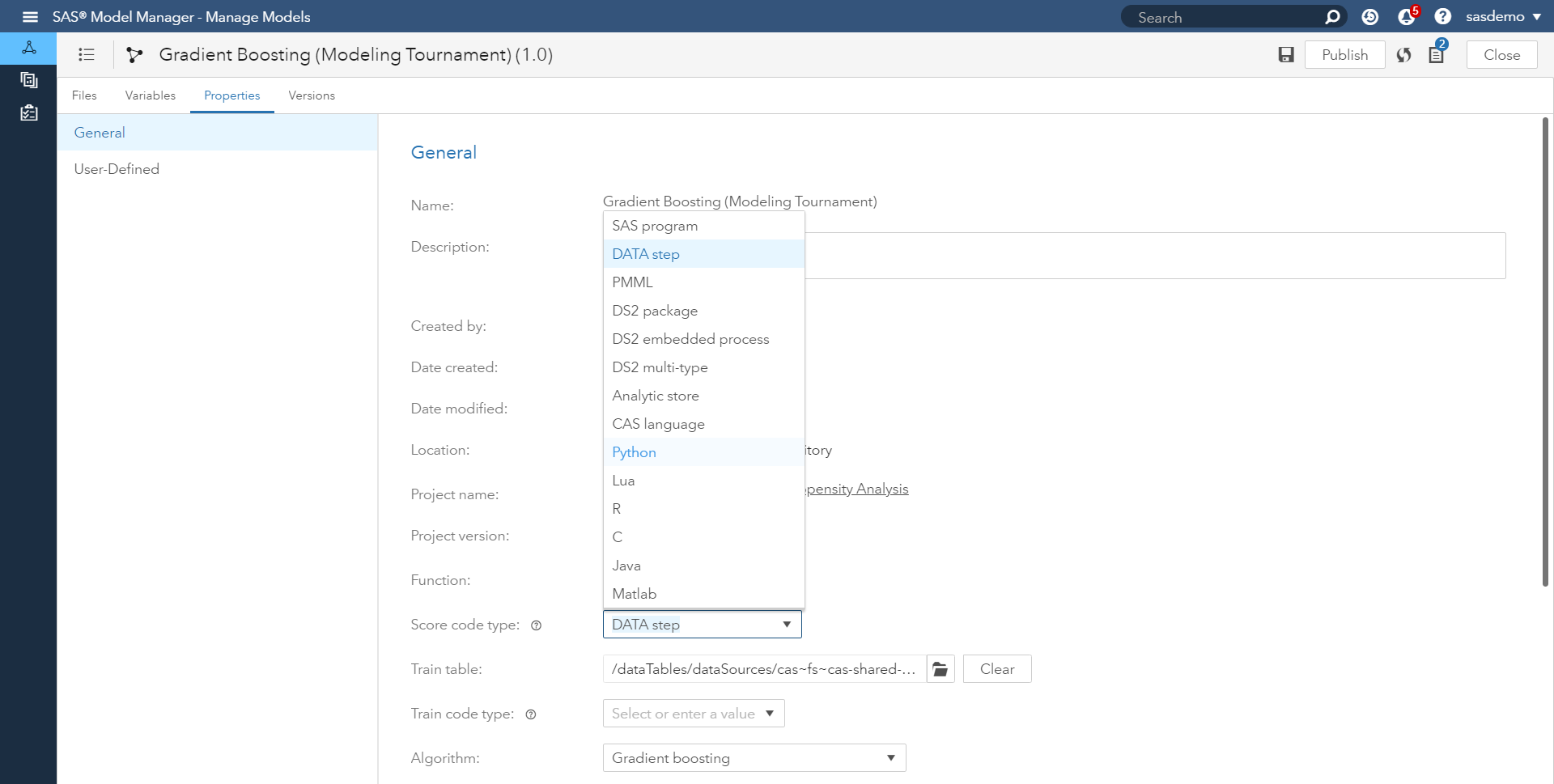

The project’s metadata includes information such as the name of the project, the type of model function (classification, clustering, prediction, forecasting, etc.), the project owner, the project location and the variables that are used by project processes. Want to deploy model scoring code in SAS, CAS, R, Python or another language within the model’s properties?

Figure 8 below highlights your options.

Model validation processes can vary over time. One thing that is consistent is that every step of the validation process needs to be logged. For example:

- Who imported what model when?

- Who selected what model as the champion model, when and why?

- Who reviewed the champion model for compliance purposes?

- Who published the champion to where and when?
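The questions above amount to an append-only audit trail. As a rough sketch of that idea, and not a description of how SAS Model Manager stores its history, the following Python records who did what to which model, when and why. The class names, action labels and user names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class AuditEvent:
    """One logged step in the validation process (hypothetical structure)."""
    user: str
    action: str   # e.g. "import", "set_champion", "review", "publish"
    model: str
    detail: str   # the "why" or "where" behind the action
    timestamp: datetime


@dataclass
class AuditLog:
    events: List[AuditEvent] = field(default_factory=list)

    def record(self, user, action, model, detail=""):
        """Append an event stamped with the current UTC time and return it."""
        event = AuditEvent(user, action, model, detail, datetime.now(timezone.utc))
        self.events.append(event)
        return event

    def history(self, model):
        """All events for one model, in the order they were recorded."""
        return [e for e in self.events if e.model == model]


# Illustrative usage with invented users and reasons.
log = AuditLog()
log.record("analyst_a", "import", "gradient_boosting")
log.record("analyst_a", "set_champion", "gradient_boosting", "lowest misclassification rate")
log.record("analyst_b", "import", "decision_tree")
log.record("officer_c", "publish", "gradient_boosting", "real-time scoring destination")

for event in log.history("gradient_boosting"):
    print(event.timestamp.isoformat(), event.user, event.action, event.detail)
```

Freezing each event keeps a recorded step from being edited after the fact, which is the property an audit trail needs.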

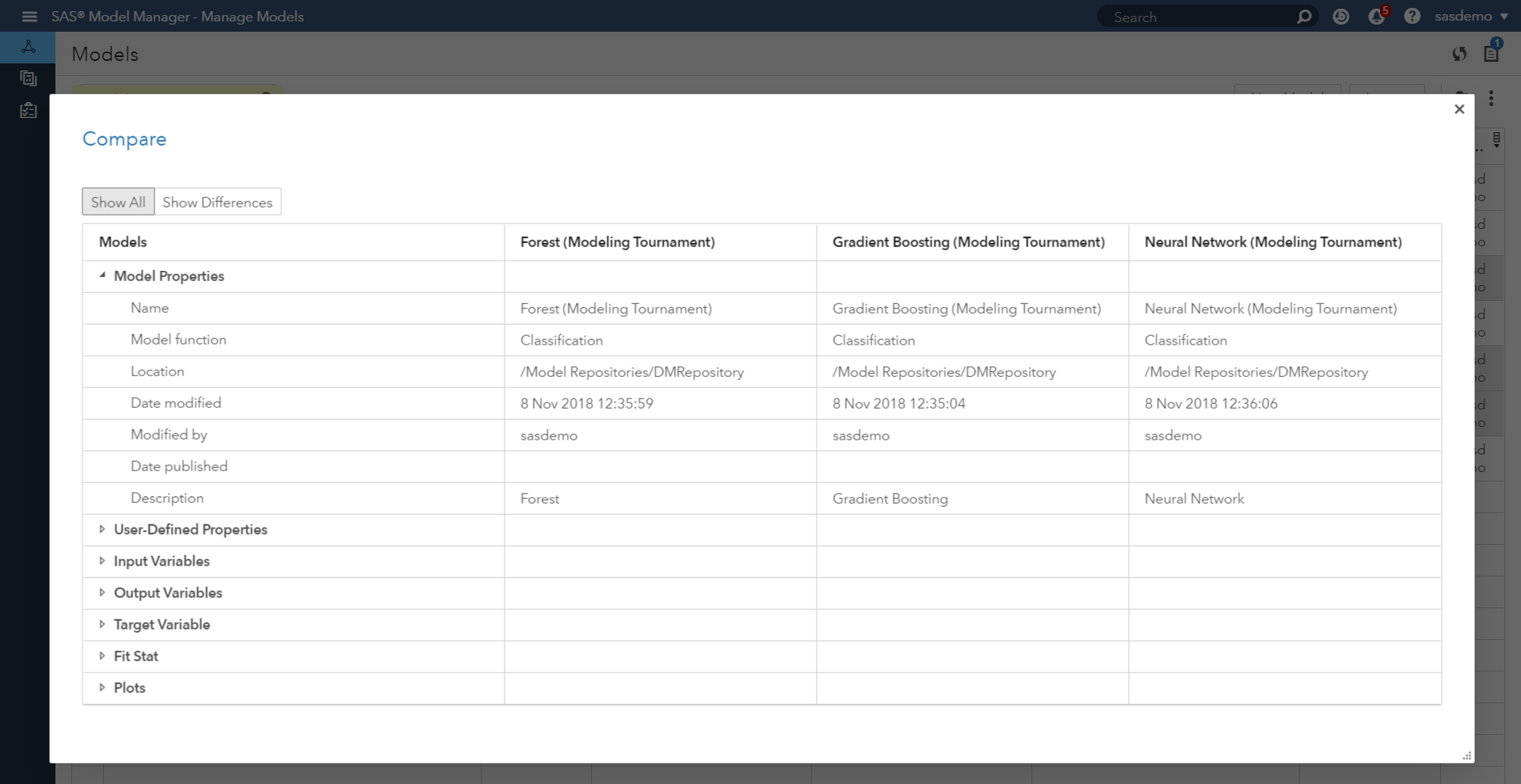

As displayed in Figure 9, users can assess and compare across models. When comparing models, the model comparison output includes model properties, user-defined properties, variables, fit statistics, and plots for the models.

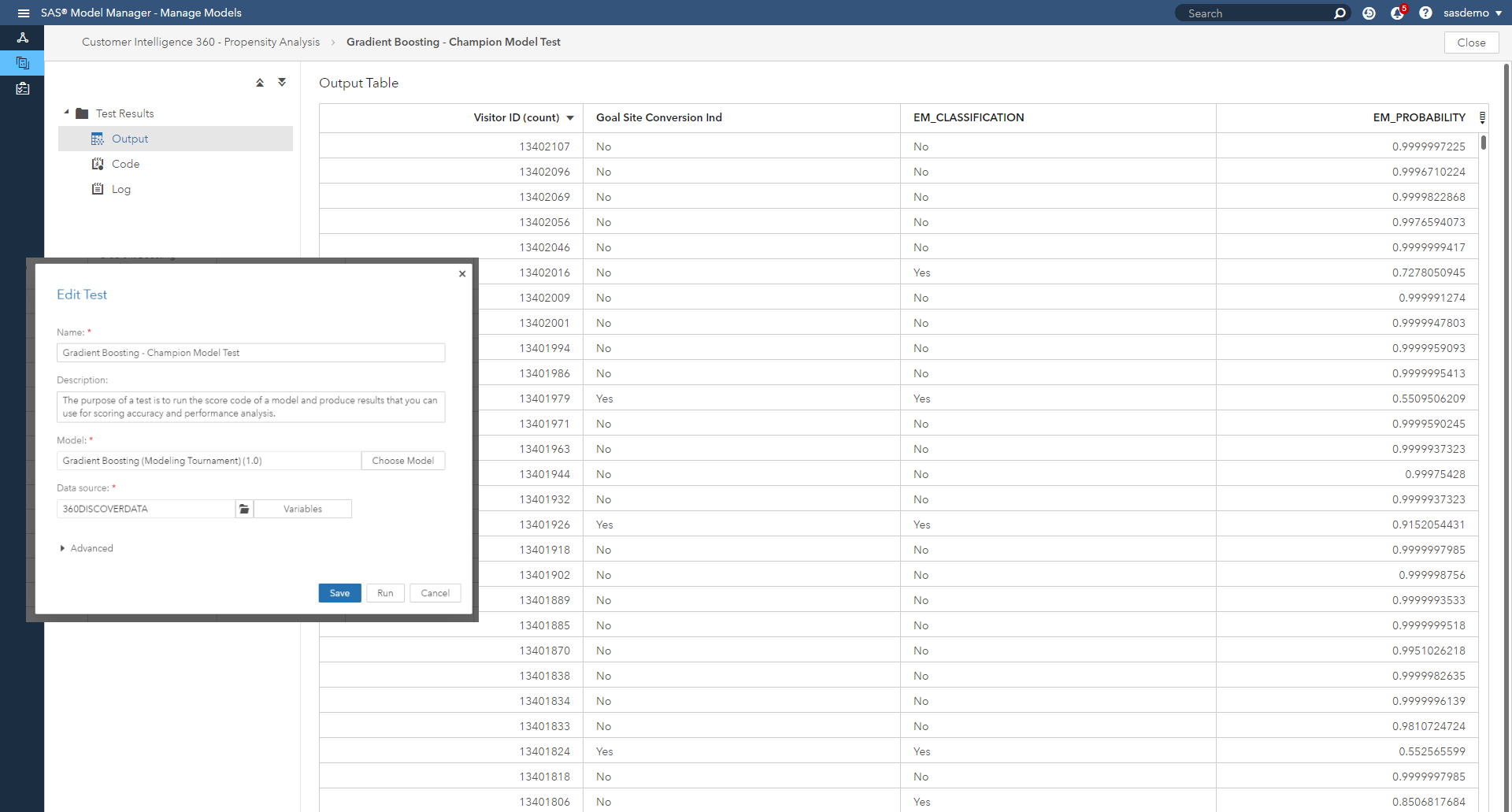

Before a new champion model is published for production deployment, an organization might want to test the model for operational errors. This type of pre-deployment check is important especially when the model is to be deployed in real-time scoring use cases. The purpose of a test is to run the score code of a model and produce results that can be used for scoring accuracy and performance analysis.

Figure 10 showcases a snapshot of the champion model’s scoring logic successfully assigning propensities to sas.com web visitors, as well as predicted classifications of yes/no on whether the prospect is likely to meet the defined conversion goal.

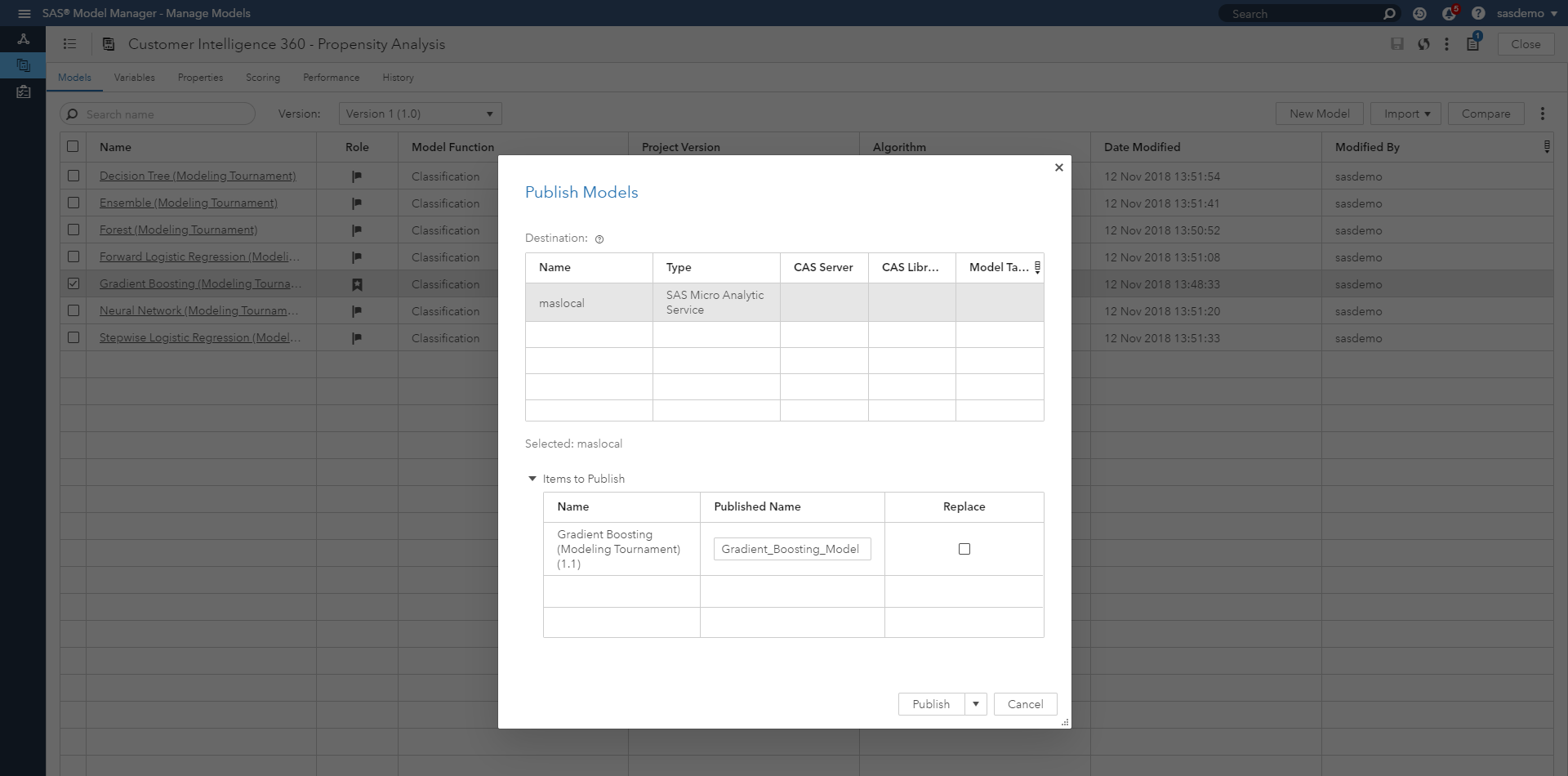

When a champion model is ready for production scoring, users set the model as the champion. The project version that contains the champion model becomes the champion version for the project. Users can leverage challenger models to test the strength of champion models over time.

To ensure that a champion model in a production environment is performing efficiently, users can collect performance data that has been created by the model at intervals that are determined by your brand. Performance data is used to assess model prediction accuracy. For example, users might want to assess performance weekly, monthly or quarterly. Monitoring can be performed on champion and challenger models, and as data trends change over time, the champion model can be improved by:

- Replacement by a challenger (another algorithm within the project starts fitting the data more accurately).

- Tuning or refitting of the model by an analyst.
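The monitoring loop described above can be sketched as a simple rule over per-period error rates. This is one possible heuristic under stated assumptions, not the logic SAS Model Manager applies: the function name, the `tolerance` and `periods` defaults, and the misclassification rates are all invented for illustration.

```python
def recommend_action(champion_rates, challenger_rates, tolerance=0.02, periods=2):
    """Suggest a next step from misclassification rates collected once per period.

    Both lists are ordered oldest to newest and must cover the same periods.
    """
    if len(champion_rates) != len(challenger_rates) or len(champion_rates) < periods:
        raise ValueError("need matching histories at least `periods` long")

    # Challenger has beaten the champion by more than the tolerance
    # in each of the most recent periods.
    recent = list(zip(champion_rates, challenger_rates))[-periods:]
    if all(champ - chall > tolerance for champ, chall in recent):
        return "replace_with_challenger"

    # Champion has drifted from its own baseline (its first recorded rate).
    if champion_rates[-1] - champion_rates[0] > tolerance:
        return "refit_champion"

    return "keep_champion"


# Invented monthly misclassification rates, oldest first.
print(recommend_action([0.12, 0.13, 0.17, 0.19], [0.14, 0.13, 0.13, 0.12]))
print(recommend_action([0.12, 0.13, 0.15, 0.16], [0.15, 0.16, 0.15, 0.16]))
print(recommend_action([0.12, 0.12, 0.13, 0.12], [0.14, 0.14, 0.14, 0.14]))
```

Requiring the challenger to win over several consecutive periods is a deliberate choice here, so that a single noisy month does not trigger a swap.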

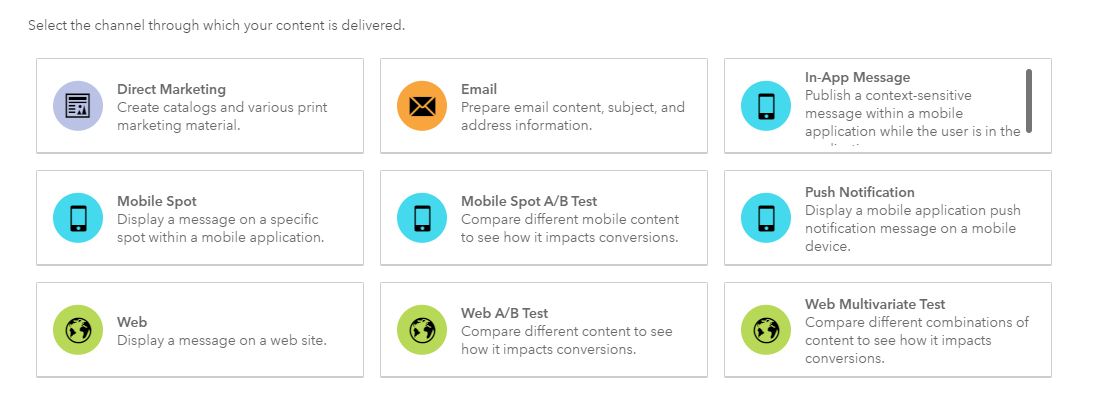

Users can publish models so that they can be used by other applications for tasks such as predictive scoring. Models can be published to destinations that are defined for CAS, Hadoop, SAS Micro Analytic Service, and defined databases.

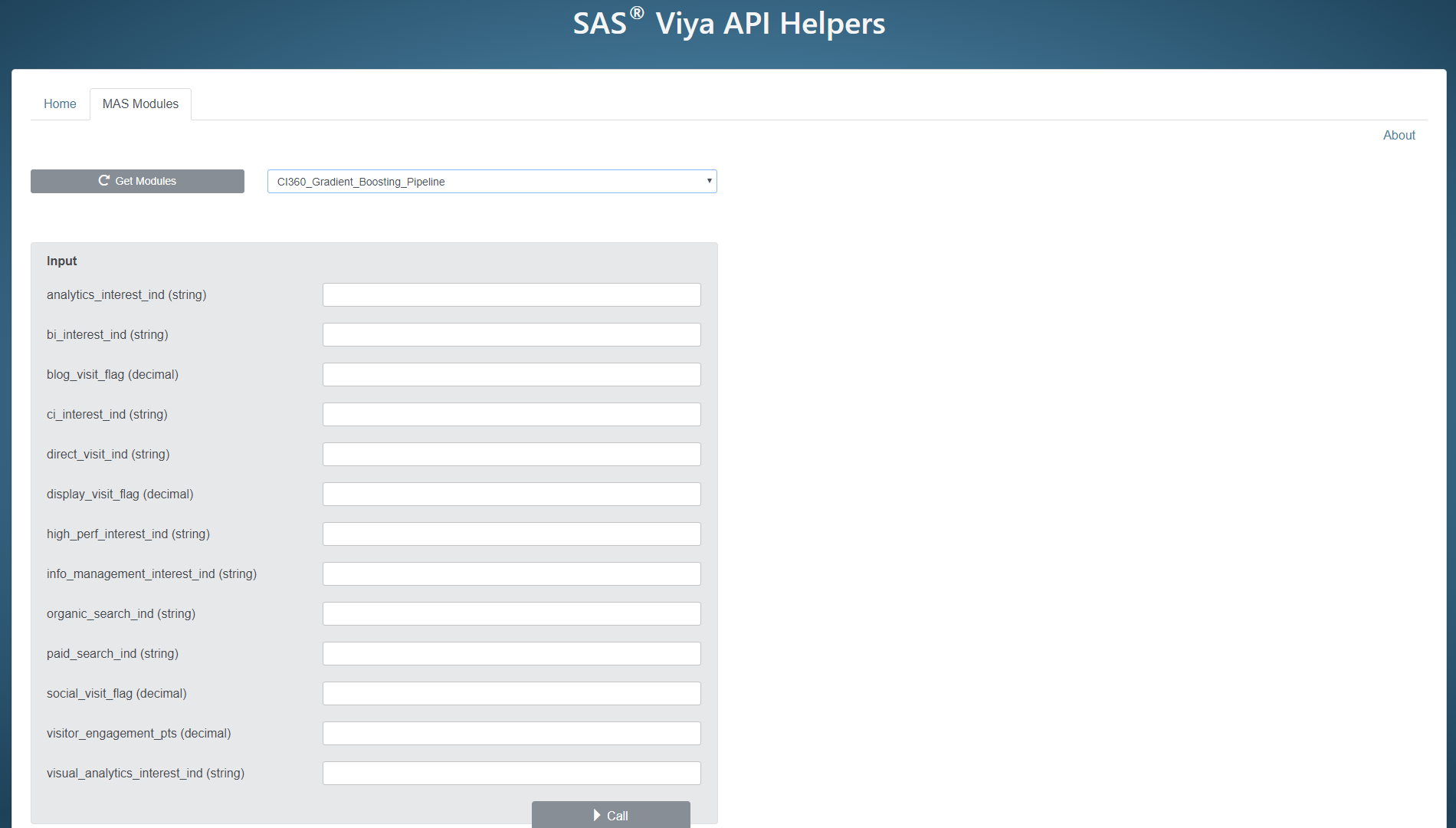

Specific to analytically-charged digital marketing, the SAS Micro Analytic Service is a powerful mechanism. For example, it can be called as a web application with a REST interface by SAS and other client applications. Envision a scenario where a visitor clicks on your website or mobile app, meets an event definition, and a machine learning model runs to provide a fresh propensity score to personalize the next page of that digital experience. The REST interface (known as the SAS micro analytic score service) provides easy integration with client applications, and adds persistence and clustering for scalability and high availability. For more technical details on using the SAS micro analytic score service, check this out.

To bring this to life, let’s demonstrate how it works.

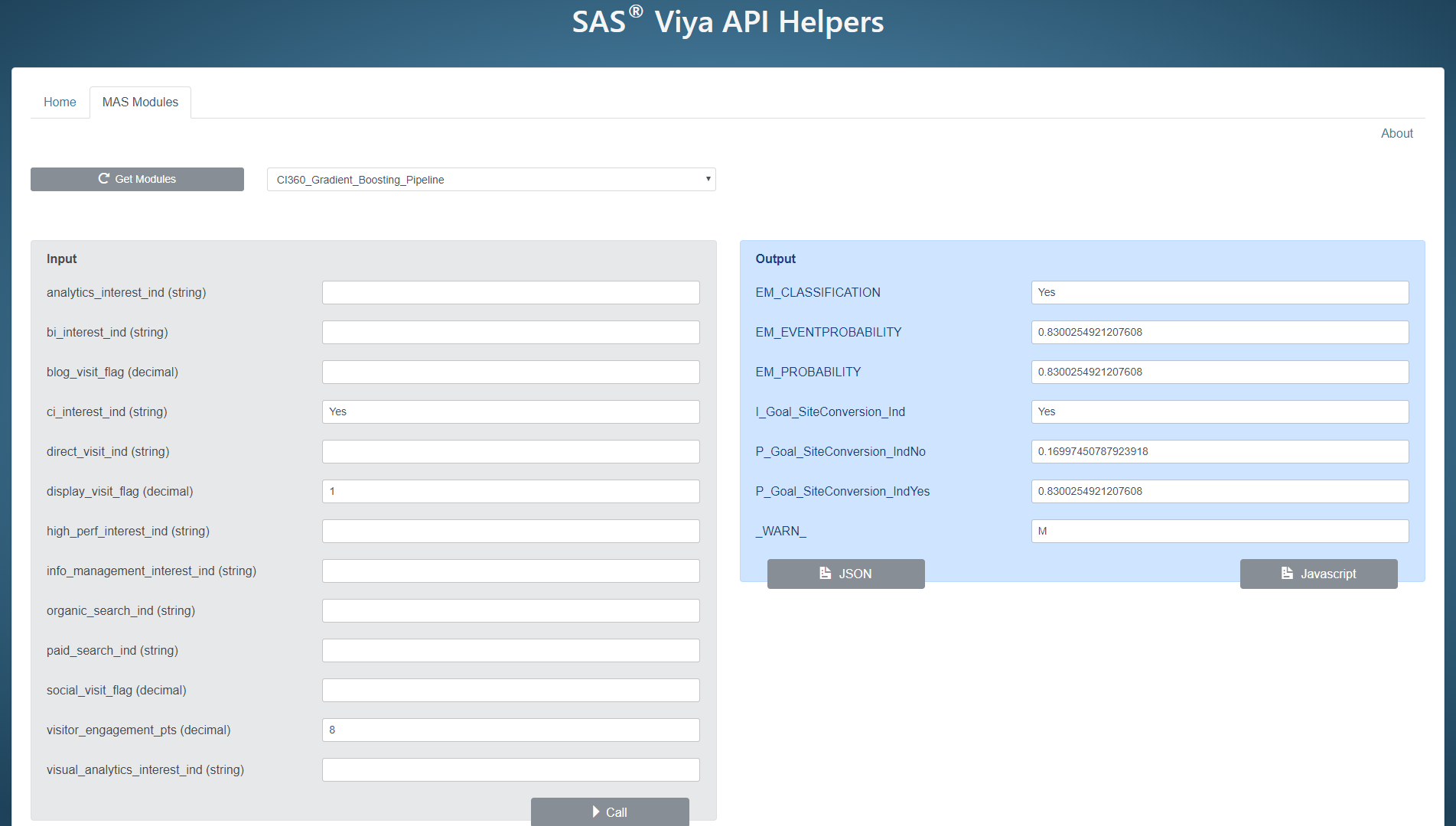

The figure above provides a non-technical method to show how SAS Customer Intelligence 360 can call the champion gradient boosting model to run. Input parameter values can be inserted to simulate different scenarios. For this example, I will provide values for:

- Visitor engagement score: 8.

- Viewing of the Customer Intelligence web page: Yes.

- Display media interactions: 1 or more.

These values represent visitor behaviors to sas.com that can be used for scoring.

Figure 13 shares what occurs after the model is called with those specific input values. For a visitor to sas.com with those specific data points, the model predicts this visitor will convert with a probability of 83 percent. As you can see, I can run other simulations to assess how other visitors will behave, as well as confirm that my model can successfully produce the actionable scoring when called.
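For readers who want a feel for what such a call carries, here is a hedged Python sketch that only builds a JSON request body and reads a JSON response; it makes no network call. The name/value list layout for `inputs` and `outputs`, the field names (`engagement_score`, `viewed_ci_page`, `display_media_interactions`, `p_convert`, `predicted_class`) and the sample response are assumptions for illustration, so check the SAS micro analytic score service documentation for the actual contract.

```python
import json


def build_score_request(inputs):
    """Serialize input values as a list of name/value pairs (assumed payload shape)."""
    return json.dumps({"inputs": [{"name": n, "value": v} for n, v in inputs.items()]})


def read_outputs(response_text):
    """Turn an assumed name/value response body back into a plain dict."""
    body = json.loads(response_text)
    return {item["name"]: item["value"] for item in body["outputs"]}


# The three simulated visitor behaviors from the example, under hypothetical names.
request_body = build_score_request({
    "engagement_score": 8,
    "viewed_ci_page": "Yes",
    "display_media_interactions": 1,
})

# A hand-written stand-in for a response, echoing the 83 percent from the example.
sample_response = json.dumps({
    "outputs": [
        {"name": "p_convert", "value": 0.83},
        {"name": "predicted_class", "value": "Yes"},
    ]
})

scores = read_outputs(sample_response)
print(request_body)
print(scores["p_convert"], scores["predicted_class"])
```

In a real integration the request body would be posted to the published model's scoring endpoint, and the returned propensity would drive the personalization decision.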

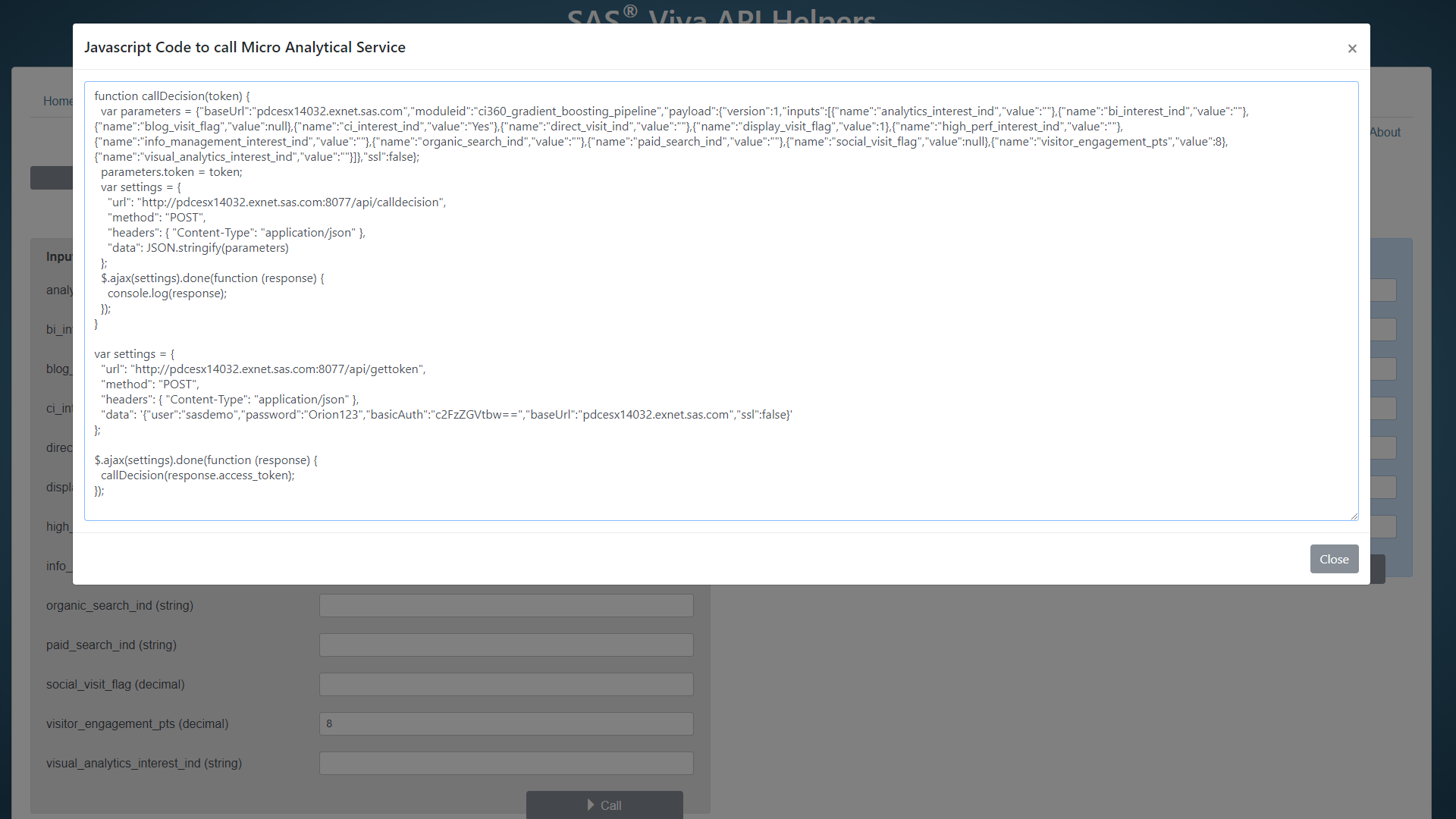

For technical readers who want to view the program that calls the model, here is a JavaScript view.

SAS Customer Intelligence 360 enables brands to use first-party data to make better decisions using predictive analytics and machine learning in conjunction with business rules across a hub of channel touch points. As your journey into analytical marketing use cases progresses, your modeling intellectual property should not be left under-exploited. It’s competitive differentiation waiting to be deployed.
