NLP Just Became Easier! A look at the Text Classifier custom steps.

Interfaces matter. User and Developer Experiences matter.

That state-of-the-art robot vacuum cleaner (or home voice assistant device, or self-driving car) is much less enjoyable if you can only interact with it through complex programming interfaces. For the last two years, SAS Viya customers have had access to low-code, accessible custom steps within SAS Studio, which wrap and abstract complex code and give developers faster time to value.

Recent text classification actions, based on a deep learning (BERT) architecture, have augmented the hybrid NLP (Natural Language Processing) approach that SAS advocates. A hybrid NLP approach combines rules with machine learning (of which deep learning forms a part), which gives you a better chance of designing accurate and performant models. BERT (Bidirectional Encoder Representations from Transformers), a deep learning architecture based on transformers, is well suited for analysing labelled, sequential input data (such as text) with a high degree of accuracy. At this time, SAS Visual Text Analytics on SAS Viya offers these actions only through programming interfaces (i.e. code) such as the CAS language (CASL), Python, R and Lua, which may limit the range of potential analytics users who can benefit from them.

Enter the SAS Studio Custom Steps GitHub repository. Provided as a community contribution, these custom steps hugely simplify the whole task of training and scoring deep learning-based text classification models in little to no time, putting you on the fast track to higher value!

Here are the READMEs and the folder contents for the two custom steps.

| README | Repository Folder |

Access the Custom Steps

We start with the question of accessing these steps from within a SAS Viya environment. The recommended route is to follow the instructions to upload a selected custom step to SAS Viya. An alternative is to make use of the Git integration functionality already available in SAS Studio: clone the SAS Studio Custom Steps GitHub repository and make a copy of the required custom steps in your SAS Content folders. The animated GIF below shows you how. In this case, the folders to move / copy are "NLP - Train Text Classifier" and "NLP - Score Text Classifier".

Train a Text Classifier

Deep learning-based classifiers require labelled data. Identify a dataset which contains, at a minimum, the following two components. One is, for obvious reasons, a text column which contains the text on which you would like to train a classifier. Examples include customer reviews, customer complaints, newspaper articles, random Wikipedia pages you have pulled from the internet (because, like me, you may have run out of ideas for data) etc.

The other is a label (also called a category or a target), which represents the category under which the text needs to be classified. Referring to the text examples again,

- Customer reviews could be classified under a star rating, or a Like / Dislike flag.

- Customer complaints could be classified as per the department / workflow they need to be assigned to.

- Newspaper articles could be classified under sections (politics, sports, entertainment, classifieds etc.) or location for easy search and retrieval.

- Random Wikipedia articles can be classified as per ..... well, random begets random, so it's likely you don't have a predefined label. So you may like to manually label these articles (which, surely, somebody must have done at some point in the past). This will be a useful way to appreciate the yeoman work done by the original data labellers, at any rate :).
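To make the "text column plus label column" requirement concrete, here is a minimal Python sketch that separates rows usable for training from rows that are missing either piece. The column names (`review_text`, `rating`), the sample rows and the helper `split_by_label` are all hypothetical; they illustrate the shape of the data and are not part of the custom step.

```python
# Hypothetical sample rows: each record carries a text field and a label field.
rows = [
    {"review_text": "Battery lasts all day, very happy.", "rating": "Like"},
    {"review_text": "Stopped working after a week.", "rating": "Dislike"},
    {"review_text": "Does the job, nothing special.", "rating": ""},  # unlabelled
]


def split_by_label(rows, text_col, label_col):
    """Separate rows that have both text and a label from rows missing either."""
    usable, rejected = [], []
    for row in rows:
        text = (row.get(text_col) or "").strip()
        label = (row.get(label_col) or "").strip()
        if text and label:
            usable.append(row)
        else:
            rejected.append(row)
    return usable, rejected


usable, rejected = split_by_label(rows, "review_text", "rating")

# Count how many usable rows fall under each label.
label_counts = {}
for row in usable:
    label_counts[row["rating"]] = label_counts.get(row["rating"], 0) + 1

print(len(usable), len(rejected), label_counts)
# 2 1 {'Like': 1, 'Dislike': 1}
```

A quick count like this is also a cheap way to spot labels with very few examples before you spend time on training.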

Training a classifier is extremely easy. Connect an input table (refer to the README for more details) and enter (or change) parameters within the UI. We've already talked about the text variable and the target variable, but in addition to those, here are other considerations:

i) Use a GPU (Graphics Processing Unit) or not? The choice is yours to make, but in my personal opinion, it's hardly a choice. If you are one of those lucky people who have access to an environment with GPUs (I exaggerate; they are actually a bit more common nowadays), I would recommend checking this box. GPUs prove very useful in shortening training time, which is appreciated. As experienced data scientists can attest, more training time forces you to take up hobbies such as watching paint dry. Of course, I'm sympathetic to those who have only CPUs at their disposal, but please be prepared for longer training times before you get a model with decent accuracy.

ii) Hyperparameters: The custom step carries default values for the hyperparameters governing the training process, which is convenient for beginners who are trying this action for the first time. These hyperparameters are editable and can be used to configure the training process, which may give you better accuracy than the defaults. Here are some of the hyperparameters you may like to play around with.

(a) Chunk size: the number of tokens in each chunk that the action breaks the original text into for training purposes. The default value is 256. Smaller chunk sizes mean that smaller "chunks" (collections of tokens) go into the training process, which may make training faster. But, when you think about it, you may actually be compromising on accuracy because each chunk carries less context. Therefore, experiment and choose wisely.

(b) Maximum number of epochs: the number of passes the training process makes over the training dataset. Consider increasing the number of epochs to give your process a better chance of fitting a more accurate model. However, this definitely comes at the cost of time, since every additional epoch is another full pass over the data.
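For intuition on what chunk size does, here is a minimal sketch that splits a token sequence into fixed-size chunks. It is an illustration only: the whitespace split stands in for the action's own tokenizer, and `chunk_tokens` is a hypothetical helper, not the way the trainTextClassifier action is implemented.

```python
def chunk_tokens(tokens, chunk_size=256):
    """Split a list of tokens into consecutive chunks of at most chunk_size tokens."""
    if chunk_size < 1:
        raise ValueError("chunk_size must be a positive integer")
    return [tokens[i:i + chunk_size] for i in range(0, len(tokens), chunk_size)]


# A made-up 600-token document; whitespace splitting is an assumption
# standing in for a real subword tokenizer.
tokens = ("the quick brown fox " * 150).split()

print([len(c) for c in chunk_tokens(tokens, 256)])
# [256, 256, 88]
print([len(c) for c in chunk_tokens(tokens, 128)])
# [128, 128, 128, 128, 88]
```

Halving the chunk size roughly doubles the number of chunks while shrinking the context each one carries, which is the trade-off described above.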

In addition, feel free to also change other settings, which may help you control aspects such as memory usage and (to an extent) ensuring similar results.

A convenient feature is that, at a minimum, the action expects only a training table as input. You also have the option to provide validation and test datasets, which is always recommended for establishing robust results. However, if you fail (or forget) to provide either a validation or a test table, the custom step doubles up and uses the training table for validation and test purposes. Additional settings such as validationPartitionFraction come into play in such a case.

At this point, you will obtain a model (located within a CAS table) as output from the trainTextClassifier action. So far, so good. If you happen to be satisfied with the results, it's time to go ahead and use this model in a scoring process.
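As a rough illustration of what a validation partition fraction means, the sketch below holds out a fraction of the training rows for validation. This is an assumption-laden stand-in: `holdout_split`, the seed and the 0.2 fraction are hypothetical, and the way the action itself partitions the training table may well differ.

```python
import random


def holdout_split(rows, validation_fraction=0.2, seed=42):
    """Shuffle rows with a fixed seed and hold out a fraction for validation."""
    if not 0 <= validation_fraction < 1:
        raise ValueError("validation_fraction must be in [0, 1)")
    shuffled = list(rows)
    # A fixed seed makes the split repeatable from run to run.
    random.Random(seed).shuffle(shuffled)
    n_valid = int(round(len(shuffled) * validation_fraction))
    return shuffled[n_valid:], shuffled[:n_valid]


# Row IDs 0..99 stand in for the rows of a training table.
train_rows, valid_rows = holdout_split(range(100), validation_fraction=0.2)
print(len(train_rows), len(valid_rows))
# 80 20
```

Fixing the seed is one simple way to get the same split on every run, which helps when you compare hyperparameter settings.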

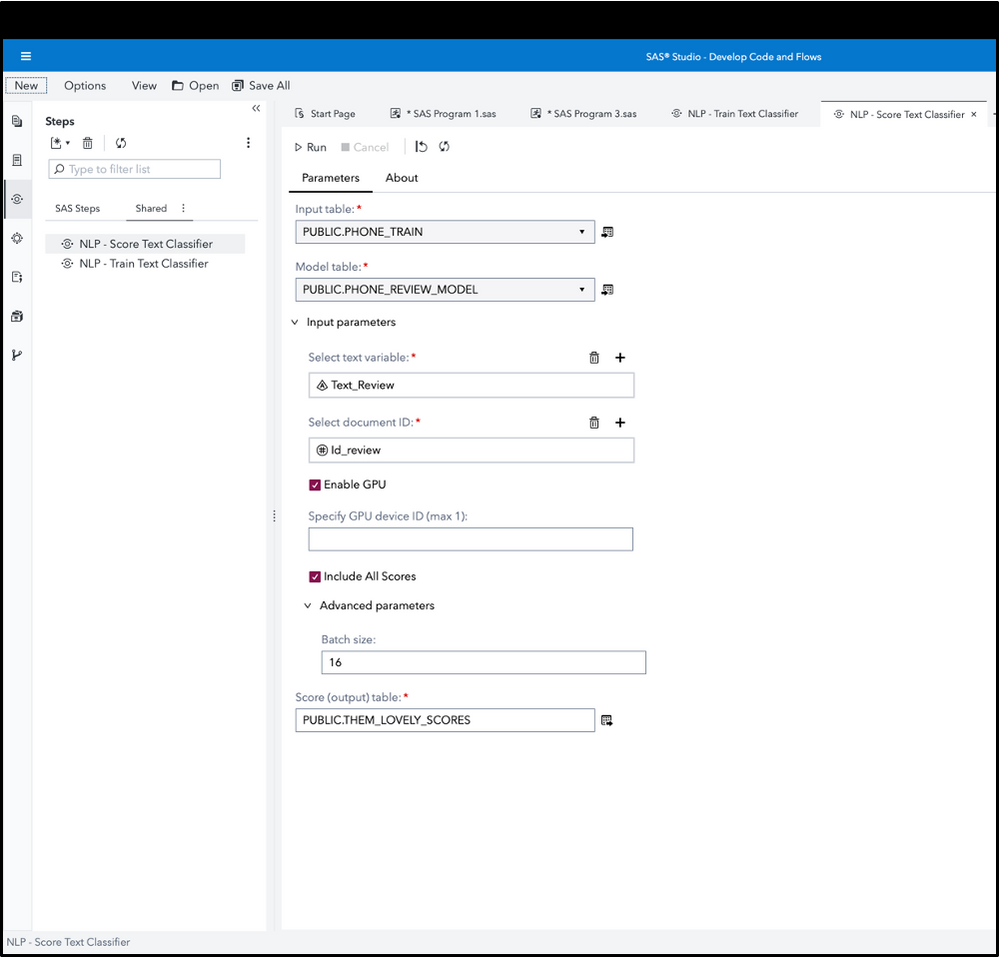

Score a Text Classifier

The nitpicky may rephrase this as "score data using a text classifier", which is exactly what the second custom step does. In this case, the input data may contain just the text to be classified, and need not be labelled (given that this is fresh data on which we are carrying out inference). The custom step does, however, require the model table (obtained from the step above) as a parameter, since it contains the logic which is executed on the fresh data.

Your analytics process now looks like the above diagram. It's wonderful when you are able to obtain a bird's eye view of these low-code components in one shot, and understand and monitor the entire process, instead of having to manually stitch pieces of code together. After specifying the model table and the input table, you specify the text variable and the document ID, and are good to write results to an output table. A special feature of this custom step is the "Include All Scores" option, which is quite relevant to text classification problems. Just as Juliet opines in a totally unrelated context, a rose may be called by many other names, and (doubtless) smells just as sweet in text classification. A complaint, for example, could be classified as per its problem domain (such as account-related or transaction-related), but also as per the product (checking account versus savings). Checking the "Include All Scores" option lets you look not only at the final prediction, but also at other similarly high scores which may pertain to different areas, and classify the text accordingly. It also proves useful in cases when (as happens so often nowadays) the text classification acts as an input to a larger model, such as one predicting whether the customer will churn or not.
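To see why keeping all scores can be useful, consider this small sketch. The labels and score values are made up for illustration, and `top_label` and `labels_above` are hypothetical helpers; the actual layout of the output table produced by the custom step may differ.

```python
# Hypothetical per-label scores for a single complaint.
scores = {
    "account-related": 0.48,
    "transaction-related": 0.41,
    "card-related": 0.11,
}


def top_label(scores):
    """Return the single label with the highest score (the final prediction)."""
    return max(scores, key=scores.get)


def labels_above(scores, threshold):
    """Return every label whose score meets the threshold, highest score first."""
    kept = [label for label, score in scores.items() if score >= threshold]
    return sorted(kept, key=scores.get, reverse=True)


print(top_label(scores))
# account-related
print(labels_above(scores, 0.3))
# ['account-related', 'transaction-related']
```

With only the final prediction, the close second label would be lost; with all scores retained, a downstream process (or a larger model) can make use of both.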

In Summary

It has become much easier to access Natural Language Processing tasks, especially those concerned with deep learning / machine learning. Low-code analytics components, such as custom steps, make it easier to design, execute and manage the building of text classifier models, a recently introduced capability. This is also a testament to how the unified SAS Viya analytics platform can be used to our advantage to ensure easy access.

Have fun with the custom steps to train and score text classifiers, and feel free to drop me an email in case of any questions.


Very big news indeed. Thank you for sharing, Sundaresh!
