Getting Started: Write SAS data to a Parquet file on Amazon S3 - Using 3 easy steps in SAS Viya

Recently a friend asked me to help him write some SAS data onto Amazon S3 in Parquet file format.

It is easy to do this using SAS Viya 3.5, which has capabilities for reading/writing Parquet files on S3.

Here is the process I used to get it done.

Step 1 - Create an Amazon S3 bucket

Step 2 - Get your credentials to access the bucket

Step 3 - Submit the SAS code

Step 1 - Create an Amazon S3 bucket

Go to the Amazon S3 console:

https://s3.console.aws.amazon.com/s3/home?region=us-east-1

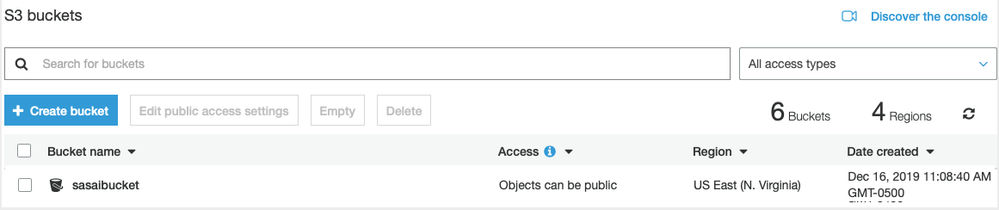

Create a bucket. I called mine “sasaibucket”. Then click “Create”.

You can see the bucket “sasaibucket” has been created.
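If you would rather script Step 1 than click through the console, here is a minimal sketch in Python. It assumes boto3 (the AWS SDK for Python) is installed and that AWS credentials are already configured; neither is part of the original walkthrough. The sketch checks the name against the core S3 naming rules: 3 to 63 characters, lowercase letters, digits, dots and hyphens only, starting and ending with a letter or digit, no adjacent dots, and not formatted like an IP address. It does not cover every reserved prefix and suffix. It also handles a known quirk: for us-east-1 the LocationConstraint must be omitted.

```python
import re

# Assumption: boto3 is installed and AWS credentials are configured.
# Core S3 bucket naming rules only (reserved prefixes/suffixes not checked):
# 3-63 chars, lowercase letters/digits/dots/hyphens, start and end with a letter or digit.
_BUCKET_RE = re.compile(r"^[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]$")
_IP_RE = re.compile(r"^\d{1,3}(\.\d{1,3}){3}$")


def is_valid_bucket_name(name: str) -> bool:
    """Return True if name passes the core S3 bucket naming rules."""
    if not _BUCKET_RE.match(name):
        return False
    if ".." in name:  # adjacent periods are not allowed
        return False
    if _IP_RE.match(name):  # names that look like IP addresses are not allowed
        return False
    return True


def create_bucket_kwargs(bucket: str, region: str) -> dict:
    """Build keyword arguments for the boto3 S3 client's create_bucket call."""
    if not is_valid_bucket_name(bucket):
        raise ValueError(f"invalid S3 bucket name: {bucket!r}")
    kwargs = {"Bucket": bucket}
    # us-east-1 must NOT be passed as a LocationConstraint; other regions must be.
    if region != "us-east-1":
        kwargs["CreateBucketConfiguration"] = {"LocationConstraint": region}
    return kwargs


def create_bucket(bucket: str, region: str = "us-east-1") -> dict:
    """Create the bucket with boto3 (needs network access and AWS credentials)."""
    import boto3  # imported lazily so the helpers above work without boto3

    s3 = boto3.client("s3", region_name=region)
    return s3.create_bucket(**create_bucket_kwargs(bucket, region))
```

For example, `create_bucket("sasaibucket")` would request the bucket from this article in us-east-1. Bucket names are globally unique, so you will need a name of your own.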

Step 2 - Get your credentials to access the bucket

Go to Identity and Access Management (IAM) in the AWS console:

https://console.aws.amazon.com/iam/home?region=us-east-1#/home

Click “Users” in the left panel.

Click “Add user.”

Provide a user name. In this example, I used “sasjst”.

Select the access type “Programmatic access”.

Click “Next: Permissions”

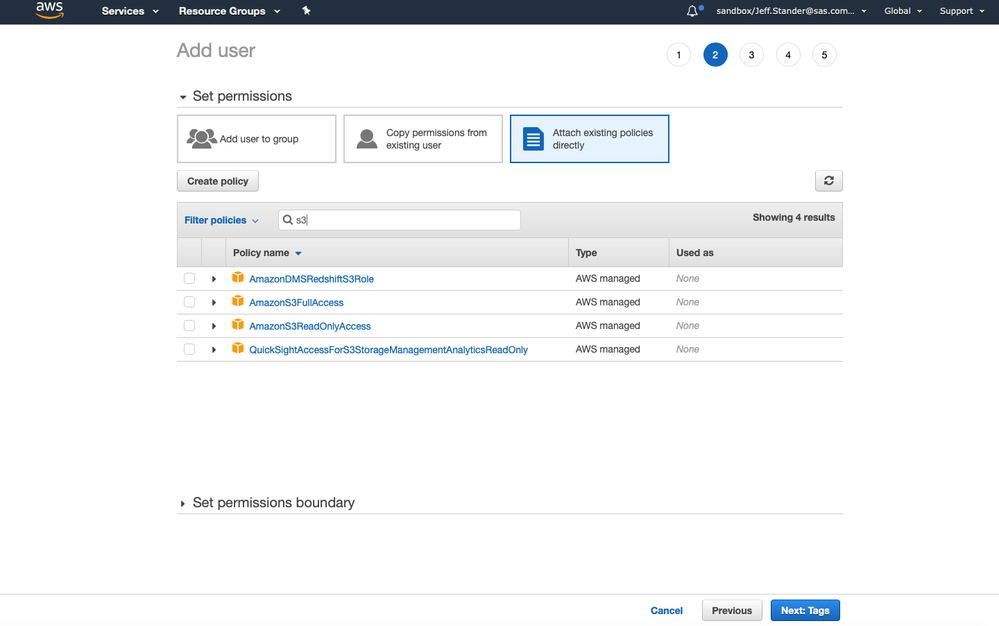

Next, I searched for the S3 policies and checked the ones that grant access to Amazon S3.

In my case, I selected “AmazonS3FullAccess” and “AmazonS3ReadOnlyAccess”.

Click “Next”

Click “Create User”

The user is now created.

On this screen, you are provided two important items:

- The access key ID

- The secret access key

Copy both of them. You will need them for the CASLIB statement in Step 3. The secret access key is shown only on this screen; if you lose it, you must create a new access key.
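Step 2 can also be scripted. This is a minimal sketch, not the console process used above. It assumes you pass in a boto3 IAM client (for example `boto3.client("iam")`) created with administrator credentials. The function creates the user, attaches the AWS managed AmazonS3FullAccess policy, and creates an access key, which is what gives the user programmatic access. AmazonS3FullAccess already includes everything in AmazonS3ReadOnlyAccess, so the sketch attaches only the full-access policy. As in the console, the secret access key is returned only at creation time.

```python
# Assumption: `iam` is a boto3 IAM client, e.g. boto3.client("iam"),
# created with credentials that are allowed to manage IAM users.
S3_FULL_ACCESS_ARN = "arn:aws:iam::aws:policy/AmazonS3FullAccess"


def provision_s3_user(iam, user_name: str, policy_arn: str = S3_FULL_ACCESS_ARN) -> dict:
    """Create an IAM user, attach an S3 policy, and return a new access key."""
    iam.create_user(UserName=user_name)
    iam.attach_user_policy(UserName=user_name, PolicyArn=policy_arn)
    # create_access_key is the only call that ever returns the secret access key.
    key = iam.create_access_key(UserName=user_name)["AccessKey"]
    return {
        "access_key_id": key["AccessKeyId"],
        "secret_access_key": key["SecretAccessKey"],
    }
```

For example, `provision_s3_user(boto3.client("iam"), "sasjst")` would recreate the user from this example. Store the returned keys somewhere safe.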

Step 3 - Submit the SAS code

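Using SAS Studio on SAS Viya, I wrote some simple SAS code.

The CAS statement starts a CAS session.

The CASLIB statement defines a data connection from CAS to S3. The SUBDIRS option allows access to subdirectories, and the GLOBAL option makes the caslib available to other CAS sessions, not just the current one.

The LIBREF= option also creates a SAS library in SAS Studio that points to the caslib.

In this code, I inserted the access key ID and the secret access key from the previous step.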

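```sas
/* Start a CAS session named casauto */
cas casauto;

/* Define a caslib that points to the S3 bucket.          */
/* Replace the placeholders with your keys from Step 2.   */
caslib "001_Amazon S3 Bucket" datasource=(
    srctype="s3",
    accessKeyId="YOUR_ACCESS_KEY_ID",
    secretAccessKey="YOUR_SECRET_ACCESS_KEY",
    region="US_East",
    bucket="sasaibucket"
  )
  subdirs     /* allow access to subdirectories */
  global      /* make the caslib visible to other sessions */
  libref=S3;  /* also assign a SAS libref named S3 */
```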

After the code is submitted, the log shows that the caslib has been added.
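If you drive CAS from Python instead of SAS Studio, the same caslib could likely be added with the SWAT package's `table.addCaslib` action. This is a sketch under several assumptions:

- SWAT is installed and you already have a `swat.CAS` connection.
- The parameter names `dataSource`, `subDirectories` and `session` match your CAS release; check them against the addCaslib action documentation.

The sketch reads the keys from the standard AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY environment variables rather than pasting them into the program. The SAS Studio library that LIBREF= creates has no equivalent here.

```python
import os
from typing import Mapping


def s3_caslib_params(
    name: str,
    bucket: str,
    region: str = "US_East",
    env: Mapping[str, str] = os.environ,
) -> dict:
    """Build table.addCaslib parameters that mirror the CASLIB statement.

    Assumption: parameter names follow the CAS addCaslib action; verify them
    for your release. Keys come from environment variables, not source code.
    """
    try:
        key_id = env["AWS_ACCESS_KEY_ID"]
        secret = env["AWS_SECRET_ACCESS_KEY"]
    except KeyError as missing:
        raise RuntimeError(f"environment variable {missing} is not set") from None
    return {
        "name": name,
        "dataSource": {
            "srcType": "s3",
            "accessKeyId": key_id,
            "secretAccessKey": secret,
            "region": region,  # same value as region= in the CASLIB statement
            "bucket": bucket,
        },
        "subDirectories": True,  # like SUBDIRS
        "session": False,        # False means a global caslib, like GLOBAL
    }


def add_s3_caslib(conn, **kwargs):
    """Run table.addCaslib on an existing swat.CAS connection (assumed)."""
    return conn.table.addCaslib(**s3_caslib_params(**kwargs))
```

Usage, with a hypothetical host name: `conn = swat.CAS("cas-host.example.com", 5570)` followed by `add_s3_caslib(conn, name="001_Amazon S3 Bucket", bucket="sasaibucket")`. Port 5570 is the usual CAS default but may differ on your site.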

Using SAS Data Explorer on SAS Viya, I can see the available data sources, including S3.

My S3 bucket is currently empty, so I will first load some SAS data into it.

To do this, I select an existing SAS data file, in this case cars.sashdat.

I import it into the target location called “001_Amazon S3 Bucket”.

I name the target table “cars”.

I specify Parquet as the format.

I then click “Import” to begin the import process.

The table is read into memory and then copied to S3 as a Parquet file.

After I refresh the data sources, you can see that the file CARS.parquet was written.

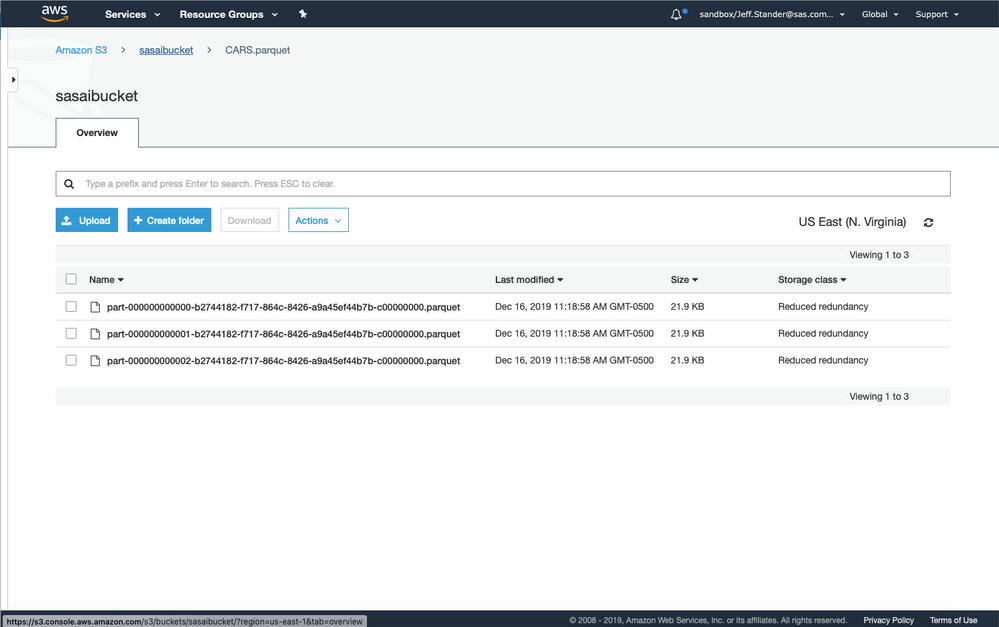

In the Amazon S3 console, you can see that a directory was created in the bucket and that it contains a set of Parquet files.
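To check the result from a script instead of the S3 console, here is a minimal sketch using the `list_objects_v2` paginator from boto3. A paginator is used because a single call returns at most 1,000 keys. The sketch assumes you pass in a boto3 S3 client with read access to the bucket. The prefix in the usage line, "CARS.parquet/", is only a guess based on the table name above; use the directory name you actually see in your bucket.

```python
# Assumption: `s3` is a boto3 S3 client, e.g. boto3.client("s3").
def list_object_keys(s3, bucket: str, prefix: str) -> list:
    """Return the keys of all objects under prefix, skipping folder markers."""
    keys = []
    paginator = s3.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket, Prefix=prefix):
        # A page has no "Contents" entry when nothing matches the prefix.
        for obj in page.get("Contents", []):
            if not obj["Key"].endswith("/"):  # skip zero-byte "folder" objects
                keys.append(obj["Key"])
    return keys
```

For example, `list_object_keys(boto3.client("s3"), "sasaibucket", "CARS.parquet/")` would list the files inside that directory, if that is its name.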

Perhaps the hardest part was remembering how to get the AWS keys.

Hopefully you will find this example useful if you are doing this for the first time!

Good luck on your journey, my friend!

Comments

Do you know of any way to use SAS 9.4M6 and PROC S3 to write a Parquet-formatted file from a SAS dataset? It seems the only approach I have found is to write the data to a flat file and then use something like Python to convert it to Parquet. Just curious whether there is anything that makes this easier if you have 9.4M6 and PROC S3, but not Viya, which has the CASLIB statement.

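You could use saspy:

```python
import saspy

# Starts a SAS session using your saspy configuration
sas = saspy.SASsession()
# Pull SASHELP.CARS into a pandas DataFrame and write it as Parquet
# (to_parquet needs pyarrow or fastparquet installed)
sas.sd2df('cars', 'sashelp').to_parquet('/tmp/cars.parquet')
```

I run this in Databricks (Community Edition), which then lets me write directly to S3 through a dbfs:/mnt mount with the following:

```python
# Databricks notebooks only: dbutils is predefined there
dbutils.fs.cp('file:/tmp/cars.parquet', 'dbfs:/mnt/jgalloway/parquet/cars_from_sashelp.parquet')
```

There are numerous other ways to get your Parquet file to S3, though (WinSCP is another option).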
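Outside Databricks, another option is `upload_file` from boto3. This minimal sketch assumes the Parquet file was already written to /tmp/cars.parquet with the saspy line above. It also assumes you pass in a boto3 S3 client with write access to the bucket. The bucket and prefix in the usage line are illustrative.

```python
import os


# Assumption: `s3` is a boto3 S3 client, e.g. boto3.client("s3").
def upload_parquet(s3, local_path: str, bucket: str, prefix: str = "") -> str:
    """Upload a local Parquet file to s3://bucket/<prefix><filename>; return the key."""
    if not local_path.endswith(".parquet"):
        raise ValueError(f"expected a .parquet file, got {local_path!r}")
    key = prefix + os.path.basename(local_path)
    s3.upload_file(local_path, bucket, key)  # positional: Filename, Bucket, Key
    return key
```

For example, `upload_parquet(boto3.client("s3"), "/tmp/cars.parquet", "sasaibucket", "parquet/")` would store the file under the key parquet/cars.parquet.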
