Clustering across AZ does not automatically provide DR

Your customer has given you project requirements that include disaster recovery (DR). Now, regular readers might recall that disaster recovery is not a feature of SAS software. But you know that many SAS software components do offer options to improve availability through clustering. And perhaps your customer's IT organization is large enough to have data centers in multiple geographic regions. Or maybe they're running SAS in the cloud using AWS, Azure, or GCP, all of which offer multiple availability zones (AZ) to protect cloud resources from the loss of a physical data center.

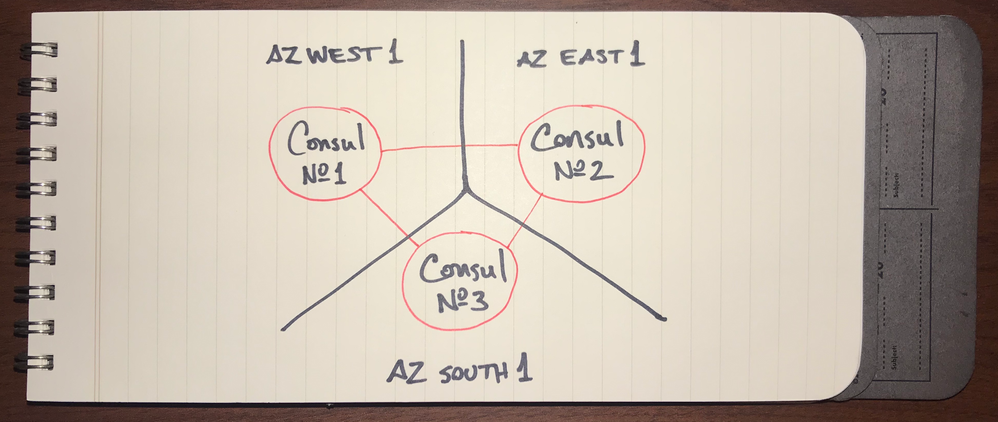

A quick back-of-the-napkin sketch showing SAS software clustered with nodes deployed into different AZ is often looked at as a simple solution to provide DR automatically and on the cheap:

Select any image to see a larger version.

Mobile users: To view the images, select the "Full" version at the bottom of the page.

But this seemingly simple solution is fraught with challenges. Overcoming them is necessary to deliver the solution the customer expects. Failing to do so will lead to performance problems and, even worse, a solution that doesn't provide the availability and disaster recovery that was intended all along.

A quick note: In cloud parlance, "availability zone" has a specific meaning (1, 2, 3), usually where two or more AZ will reside in nearby geographic proximity within a "region". A widespread disaster that affects an entire region then might take out multiple AZ. And therefore, some companies will cluster across regions as well. Of course, regions can neighbor each other or be on opposite sides of the planet. To be clear, this post uses "AZ" to simply mean "different physical locations, near or far". Take note that topics discussed here will have varying considerations specific to physical distance.

Challenge No. 1 - Latency, not just throughput

From a computer networking perspective, latency is the amount of time between sending a request and receiving a response. Assuming no processing, just a simple bounce back, this time is driven mostly by the physical distance between the two communicating nodes. We often use the ping utility to measure it. The greater the distance, the longer the ping times, meaning the greater the latency.

ping localhost # within the same host

64 bytes from 127.0.0.1: icmp_seq=0 ttl=64 time=0.032 ms

64 bytes from 127.0.0.1: icmp_seq=1 ttl=64 time=0.093 ms

ping sas.com # within 1 mile (1.6 km)

64 bytes from 10.15.1.19: icmp_seq=0 ttl=252 time=2.753 ms

64 bytes from 10.15.1.19: icmp_seq=1 ttl=252 time=2.710 ms

ping weibo.cn # over 7,000 miles away (11,000 km)

64 bytes from 47.88.66.107: icmp_seq=1 ttl=90 time=153.716 ms

64 bytes from 47.88.66.107: icmp_seq=2 ttl=90 time=143.928 ms
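To put a number on the difference, here is a minimal Python sketch that averages the round-trip times from the sample output above. The function name `mean_rtt_ms` and the regular expression are illustrative choices, and the sketch assumes ping output in the `time=… ms` form shown here; other platforms format it differently.

```python
import re
from statistics import mean

# Matches the round-trip time field in ping output such as "time=2.753 ms".
RTT_PATTERN = re.compile(r"time=([\d.]+)\s*ms")


def mean_rtt_ms(ping_output: str) -> float:
    """Return the mean round-trip time (in ms) found in ping output text."""
    samples = [float(value) for value in RTT_PATTERN.findall(ping_output)]
    if not samples:
        raise ValueError("no round-trip times found in ping output")
    return mean(samples)


# Sample lines copied from the ping runs shown in this post.
nearby = """64 bytes from 10.15.1.19: icmp_seq=0 ttl=252 time=2.753 ms
64 bytes from 10.15.1.19: icmp_seq=1 ttl=252 time=2.710 ms"""
distant = """64 bytes from 47.88.66.107: icmp_seq=1 ttl=90 time=153.716 ms
64 bytes from 47.88.66.107: icmp_seq=2 ttl=90 time=143.928 ms"""

ratio = mean_rtt_ms(distant) / mean_rtt_ms(nearby)
print(f"nearby:  {mean_rtt_ms(nearby):.3f} ms")
print(f"distant: {mean_rtt_ms(distant):.3f} ms")
print(f"distant is roughly {ratio:.0f}x slower per round trip")
```

Two samples per host is far too few for a real assessment, of course. The point is only that the per-request cost differs by a large factor before any actual work is done.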

So the challenge here is splitting up SAS clustered services across different availability zones. The greater the distance between the AZ, the longer the ping times will be, sometimes by orders of magnitude compared to when services co-exist in the same physical location. To see what this means for Amazon Web Services, visit cloudping.co for a real-time assessment of latencies between the various AZ.

Latency adds up fast, too. Web pages often make dozens or even hundreds of individual requests for the files that make up a single page… especially web apps like SAS Studio and SAS Visual Analytics. As those requests stack up, so does the impact of longer latency, and this means the user may perceive SAS performance as sluggish. If latency is too high, then some requests will time out, meaning those resources aren't returned properly, leading to a broken user experience.
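A rough Python sketch of how that stacking works is below. It is a simplified model, not a measurement: it assumes every request costs exactly one round trip, that at most `parallel` requests are in flight at once (six is a common per-host browser limit for HTTP/1.1, used here as an assumption), and it ignores server processing and transfer time. The function name and the 100-request page are hypothetical.

```python
import math


def page_load_latency_ms(requests: int, rtt_ms: float, parallel: int = 1) -> float:
    """Estimate the time one page load spends waiting on round trips alone.

    Simplified model: each request costs one round trip, and at most
    `parallel` requests are in flight at the same time.
    """
    if requests < 0 or parallel < 1:
        raise ValueError("requests must be >= 0 and parallel must be >= 1")
    rounds = math.ceil(requests / parallel)  # batches of concurrent requests
    return rounds * rtt_ms


# Round-trip times loosely based on the ping samples earlier in this post.
for label, rtt in [("same site", 2.7), ("distant site", 150.0)]:
    waited = page_load_latency_ms(requests=100, rtt_ms=rtt, parallel=6)
    print(f"{label}: about {waited / 1000:.2f} s waiting on the network")
```

Under these assumptions, the same page goes from a wait the user never notices to one measured in seconds, purely because of distance.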

The same holds true for SAS Cloud Analytic Services and its component controllers and workers. They communicate frequently and sometimes even shuffle data between them. Higher latency between nodes means that the high-performance analytics engine won't be able to complete coordinated tasks as quickly or efficiently.

Challenge No. 2 - Placement of services

Improving availability means eliminating single points of failure. This means that we often cluster our software so that redundant components run on separate hosts. In this way, if a single service instance, disk, power supply, or something else on the host fails, then the overall clustered service can continue operations until the problem is resolved.

The same concept can be extended to data centers, too. If a natural disaster strikes, then the entire data center might go dark. And that’s why cloud providers offer AZ so that it's easy to eliminate their physical infrastructure as a point of failure as well. So eliminating the data center as a single point of failure makes sense, but how many AZ do we need? Two? Three? More?

The answer is that it depends on the software being clustered and on how that clustering actually works. Let's look at two quick examples.

Cluster Type: Redundant Service Nodes

For SAS Viya, consider the SAS Configuration Server (a.k.a. HashiCorp Consul). Each instance of Consul maintains its own copy of the data. The instances can act independently to satisfy requests, but they coordinate closely to keep their data in sync.

To maintain quorum, Consul requires clustering on an odd number of nodes, three or more. That way, as long as more than half the nodes stay in communication with each other, Consul will continue in service. If we split those three nodes across two AZ, have we eliminated the data center as a single point of failure?

No. Not really.

With only two AZ, two out of three Consul nodes must sit in the same AZ. If that AZ goes down for some reason, then Consul loses more than half of its nodes, which means it no longer has quorum. Even though one Consul node is still active, Consul will cease service at that point. To stay consistent with how Consul relies on quorum, it's necessary to deploy each Consul node to a different AZ.
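The quorum reasoning above can be checked with a small Python sketch. It only models the majority rule described in this post (more than half of the nodes must remain in contact); it is not Consul's API, and the function names and AZ labels are hypothetical.

```python
from collections import Counter


def quorum_size(total_nodes: int) -> int:
    """Smallest number of nodes that is more than half of the cluster."""
    return total_nodes // 2 + 1


def survives_any_single_az_loss(placement: list[str]) -> bool:
    """Return True if quorum holds no matter which single AZ goes dark.

    `placement` lists the AZ of each node, e.g. ["az1", "az1", "az2"].
    """
    if not placement:
        raise ValueError("placement must contain at least one node")
    total = len(placement)
    needed = quorum_size(total)
    nodes_per_az = Counter(placement)
    # Losing an AZ removes every node placed in it.
    return all(total - lost >= needed for lost in nodes_per_az.values())


print(survives_any_single_az_loss(["az1", "az1", "az2"]))  # three nodes, two AZ
print(survives_any_single_az_loss(["az1", "az2", "az3"]))  # three nodes, three AZ
```

The first placement fails the check because losing `az1` leaves one node out of three. The second passes because any single loss still leaves two.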

Similar considerations apply to SAS Infrastructure Data Server (Postgres and pgPool), SAS Message Broker (RabbitMQ), SAS Secrets Manager (HashiCorp Vault), SAS Cache Locator and Server (Apache Geode), as well as the various SAS server and microservices components. And keep in mind that each technology employs its own approach for ensuring a single version of the truth, which is not necessarily the same quorum technique as Consul.

Cluster Type: Collaborative Service Nodes

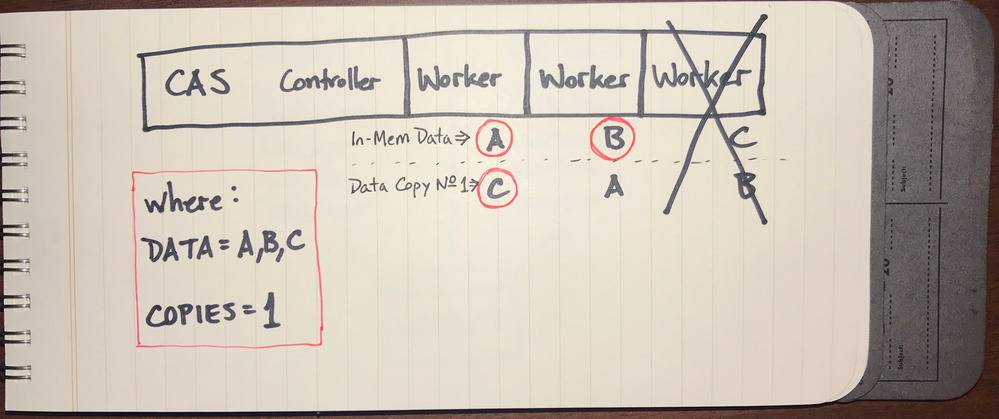

With the SAS Cloud Analytic Services (CAS), we have a different type of cluster technology. CAS is built using a shared-nothing MPP architecture. CAS employs one (or more) controllers to coordinate workload and a number of workers. The workers each are given a subset of the larger data problem to solve. They work independently on their own assignments, then send the results back to the controller.

If we split MPP CAS across two AZ, have we eliminated the data center as a single point of failure?

Maybe. It's possible.

CAS offers two controllers: a primary (required) and a secondary (optional). The secondary controller does not offer the full functionality of the primary and is only intended as a temporary backup for failover if the primary is suddenly lost. So if you place the primary controller in one AZ and the secondary controller in a different AZ, and split the workers evenly across both AZ, then the CAS service should be resilient if one data center does go dark.

But keeping the CAS service running is only one problem to solve. More important is the data that CAS maintains in memory. Without data, CAS can't do much. If too many CAS workers go offline at once, then it's likely that the data CAS was keeping in memory, distributed across all workers, will be incomplete, effectively corrupt, and therefore unusable. So we need to consider what CAS requires to keep in-memory data highly available.

Challenge No. 3 - Data availability

For many, but not all, data sources and formats, CAS defaults to keeping one additional copy of data available to its workers (see COPIES= option for the PROC CASUTIL SAVE statement). With COPIES=1, CAS can maintain data integrity if one CAS worker goes out of service.

Now what if a second CAS worker goes down? Without a second copy of the data to reference on the remaining node, then the data table is no longer complete. So if you want CAS to be resilient and maintain data integrity after the loss of multiple CAS workers, then increase the COPIES= value accordingly.

Consider this for an MPP CAS deployment with 10 workers split across two AZ - five workers in each AZ. If one data center goes offline, can CAS maintain data integrity with the remaining five workers?

Yes, but only if COPIES=5 was in effect when the data was loaded.

That's a big if. And it's a big ask. That's a lot of redundant data to copy around. And it requires considerable space for the CAS_DISK_CACHE on each of the workers. That's a large commitment to resources which must be maintained for high availability of data to protect against an event which is unlikely to occur.
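To make the trade-off concrete, here is a minimal Python sketch of the worst-case reasoning above. It assumes a simplified model in which each piece of data lives on its original worker plus COPIES= additional, distinct workers, and in which the failed workers could be exactly the ones holding every copy. It does not reproduce how CAS actually places blocks, and the function names are hypothetical.

```python
def tolerates_worker_loss(copies: int, workers_lost: int) -> bool:
    """Worst-case check: is at least one copy of every piece of data left?

    With `copies` redundant copies there are copies + 1 holders of each
    piece, so data is guaranteed complete only if fewer workers fail than
    there are holders.
    """
    if copies < 0 or workers_lost < 0:
        raise ValueError("copies and workers_lost must be non-negative")
    return workers_lost <= copies


def storage_multiplier(copies: int) -> int:
    """Total footprint of a table relative to a single copy of its data."""
    return copies + 1


# The 10-worker example: one AZ holding five workers goes offline.
for copies in (1, 5):
    ok = tolerates_worker_loss(copies, workers_lost=5)
    print(f"COPIES={copies}: survives loss of 5 workers? {ok}; "
          f"footprint is {storage_multiplier(copies)}x the data size")
```

In this model, guaranteeing survival of a five-worker outage costs six times the footprint of the data itself, which is the resource commitment described above.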

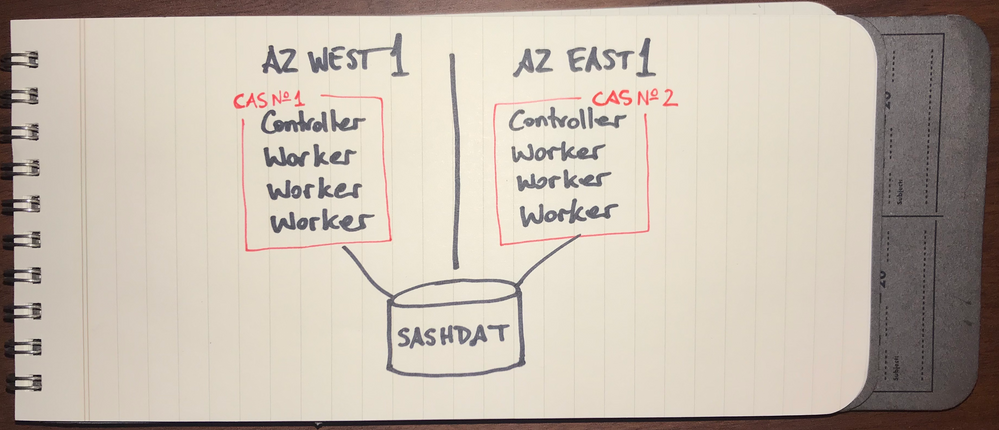

An alternative to consider might be to establish a manual recovery process. For example, run two different MPP CAS servers, each in their own AZ with their respective workers together. The idea is that if one AZ goes down, then the CAS server in the other AZ is still available for service.

But what about the data? For this scenario, establish a process in which all data for CAS is first staged in appropriate infrastructure that provides rapid parallel loading (e.g. SASHDAT format in DNFS). And of course, ensure that the staging area also has high availability across AZ.

This way, both instances of CAS will have speedy access to the same data source. If one CAS goes down, then the other can be used to run the same workload. While this requires more manual effort and some coordination, it's likely a simpler and more efficient use of resources over time. Indeed, the process could be set up so that a load action for SASHDAT data in the staging area is always run on both CAS servers every time. Due to how CAS loads SASHDAT from DNFS, the redundant copy of the data on the other CAS server may consume no in-memory resources if it's not actually used. See "Lazy Loading of SASHDAT" in my previous article, 4 Rules to Understand CAS Management of In-Memory Data.

Challenge No. 4 - Synchronizing content

As described above, clustering across AZ presents some significant challenges. If those can't be overcome, but DR is still desired, then a different approach might be employed. Typically this means deploying the full stack of SAS Viya software twice - each in a different AZ.

Active-Active

As you can imagine, many customers would like to see both such deployments of Viya online and processing their day-to-day workloads. They reason that if one Viya deployment goes down, then all of the workload could go to the other. This is the most difficult approach to achieve, however, because the data and user-generated content of two different Viya environments must be kept perfectly in sync. Currently, we are not aware of a good way to do that for separate databases (like Consul, CAS, or even Postgres) which are operating in different physical deployments… unless they're set up as a single service cluster. But then you have to go back to the top of this post and re-read Challenges 1-3. 😉

Active-Passive

For active-passive, the idea is that the active Viya deployment handles the normal day-to-day operations while the passive deployment sits idle (or even switched off) until it's needed. If a disaster strikes the active deployment, then the passive one is spun up to take over, usually requiring some minutes or hours to come fully online. For Viya deployed using cloud providers, active-passive should be the default approach because the nature of the cloud makes it easy to bring up resources, like a passive environment, on demand when they're needed, costing nearly nothing in the meantime.

The challenge here is keeping the passive deployment up-to-date with data and user-generated content from the active environment. And with an environment like SAS Viya, this is usually best achieved with some form of regularly scheduled process which might involve a number of approaches, including elastic storage options, shared file systems, backup+restore and/or promote as well as manual steps to switch on the passive environment at the time it's needed.

Coda

Planning for disaster recovery is necessarily a collaborative process with your customer's IT team. When you see clustering across data centers or AZ proposed, be wary of attempting it as a shortcut to achieve DR. While that might be tempting, educate your customer's IT team about the significant challenges that must be properly addressed to ensure that performance is adequate and that availability and DR are actually accomplished. Also remember that manual and/or site-specific actions might be needed (meaning design, build, testing, and evaluation) to ensure the customer can achieve their goals.

SAS publishes our SAS 9.4 Disaster Recovery Policy and our SAS Disaster Recovery Policy for SAS Viya 3.4 online for easy reference.
