CAS and YARN: integration or interaction?

In 2016, I wrote about the integration between CAS and YARN. This was for the first version of Viya (3.1).

As we are seeing more and more customers with large Hadoop clusters adopting Viya and CAS, it is a good time to revisit this topic, bust a few myths, and discuss some real use cases and architecture considerations.

The focus of this article is where:

- The Hadoop environment is being used by users for SAS and non-SAS activities (jobs).

- The CAS Server is co-located with some or all of the Hadoop nodes.

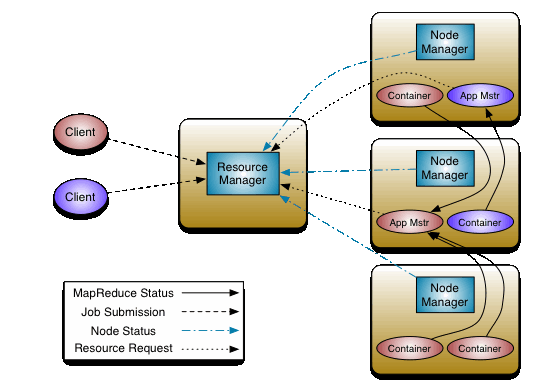

Since SAS and Hadoop processing takes place on a set of shared host machines, it is important to consider the implications when the computing resources are utilized by both applications (see the diagram below).

| example of CAS and Hadoop YARN shared environment |

In this article we will discuss CAS and YARN integration, but to be clear, it is also possible to configure CAS successfully and execute processing with co-located Hadoop only for the storage part with HDFS, without the need for YARN integration.

But that is not discussed further as it is assumed that the reader wishes to understand how CAS and Hadoop resource management can be controlled using one approach, namely YARN. If CAS is not co-located with a Hadoop cluster, then you can stop reading now 🙂

Okay, let's start by reviewing the key points from my previous post that are still relevant in the latest Viya releases:

- There is no "true" and dynamic integration between CAS and YARN because the CAS jobs will NOT be run inside YARN containers and NOT be run under the control of YARN.

- However, when your CAS server is deployed inside the Hadoop cluster, there are ways to manage the coexistence of CAS jobs and other Hadoop jobs (MapReduce, Spark, Impala, etc.), which are generally "memory-intensive" and compete for cluster resources.

- CAS, at start-up time, can be restricted to a certain amount of memory (cas.MEMORYSIZE).

- With the cas.USEYARN option enabled, it is possible to create "placeholder" containers in YARN to "reserve" this amount of memory against YARN as long as the CAS server is running.

CAS can tell YARN to reserve computing resources for the CAS processing on each node. But to be clear, CAS should not be considered a YARN application.

How does YARN work? (High level view)

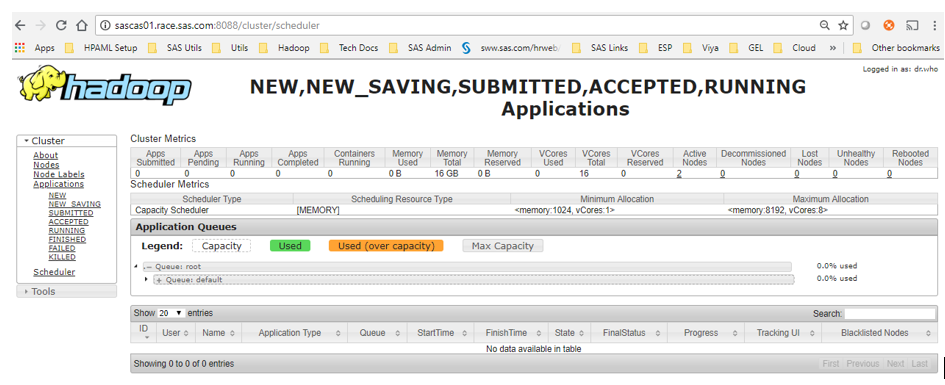

The Apache Hadoop documentation provides a pretty good explanation and architecture overview for YARN (which stands for Yet Another Resource Negotiator). In a YARN cluster, you have two types of roles for the machines: NodeManager or ResourceManager.

The NodeManager is the machine where the task will be executed, inside a YARN Container.

The ResourceManager is not running tasks but it has two main components with different roles: Scheduler and Applications Manager.

| YARN Architecture - Source : Apache documentation |

- "The Scheduler is responsible for allocating resources to the various running applications subject to familiar constraints of capacities, queues etc." But it does not monitor or track the applications status. "The Scheduler performs its scheduling function based the resource requirements of the applications; it does so based on the abstract notion of a resource Container which incorporates elements such as memory, cpu, disk, network etc."

- "The ApplicationsManager is responsible for accepting job-submissions, negotiating the first container for executing the application specific ApplicationMaster and provides the service for restarting the ApplicationMaster container on failure. The per-application ApplicationMaster has the responsibility of negotiating appropriate resource containers from the Scheduler, tracking their status and monitoring for progress."

So the Scheduler is where you can define queues/resource pools (to which applications are assigned), with specific rules such as the maximum percentage of cluster usage for jobs in a queue or the minimum/maximum container sizes (memory or CPU). The ApplicationsManager, in contrast, accepts job submissions and starts (and restarts) the per-application ApplicationMaster, which keeps track of what is running where.

There are different kinds of schedulers available in YARN. The most well-known are the "Fair Scheduler" (default with Cloudera) and the "Capacity Scheduler" (default with Hortonworks HDP), and they work in different ways.

| The YARN scheduler web interface |

By default, both the Capacity Scheduler and the Fair Scheduler take only memory into account when doing their calculations (CPU requirements are ignored). All the allocation math is reduced to comparing the memory required by the resource requests with the memory available on the node being examined during a specific scheduling cycle.
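To make the memory-only behaviour concrete, here is a minimal Python sketch of the check described above. It is an illustration, not YARN code: the `Node` and `Request` classes and the `fits_memory_only` function are hypothetical names introduced for this example.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """Resources still unallocated on one NodeManager (hypothetical model)."""
    free_memory_mb: int
    free_vcores: int


@dataclass
class Request:
    """Resources asked for by one container request (hypothetical model)."""
    memory_mb: int
    vcores: int


def fits_memory_only(node: Node, request: Request) -> bool:
    """Memory-only check: the vcores of the request are deliberately ignored."""
    return request.memory_mb <= node.free_memory_mb


# A node with no vcores left still accepts the request,
# because only memory is compared.
node = Node(free_memory_mb=8192, free_vcores=0)
request = Request(memory_mb=4096, vcores=2)
print(fits_memory_only(node, request))  # True
```

If the administrator has configured the scheduler to take CPU into account as well, the check would also have to compare vcores, which is the situation discussed in the CPU section further down.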

What happens when CAS starts with cas.useyarn=true?

Since Viya 3.1 you can configure CAS with the cas.USEYARN configuration parameter set to "true". Here is what happens when you start the CAS service:

- First, CAS checks whether a cas.MEMORYSIZE value has been provided, because the two configuration parameters work together (if you want to use YARN, you need to set a memory limit for CAS with cas.MEMORYSIZE).

- If both parameters are set, CAS creates memory cgroups on each CAS node to restrict the active memory usage of the CAS process to the value specified in cas.MEMORYSIZE.

- Then CAS sends a request to the YARN ResourceManager to start the "CAS application" and passes the value of the cas.MEMORYSIZE parameter to YARN.

The CAS Controller log provides messages to confirm (for example with cas.MEMORYSIZE set to 4g):

18/06/20 08:18:57 INFO tkgrid.JobLauncher: submit: Completed setting up app master command ${JAVA_HOME}/bin/java -Xmx500m -Xms500m com.sas.grid.provider.yarn.tkgrid.AppMaster –jarFile /usr/local/hadoop/share/hadoop/common/lib/sas.grid.provider.yarn.jar –general intcas01.race.sas.com –port 43045 –host intcas01.race.sas.com –host intcas02.race.sas.com –host intcas03.race.sas.com –cores 1 –memory 4096 1 LOG_DIR /stdout 2 LOG_DIR /stderr

18/06/20 08:18:57 INFO impl.YarnClientImpl: Submitted application application_1529477689901_0007

18/06/20 08:18:57 INFO tkgrid.JobLauncher: submit: ApplicationID application_1529477689901_0007 submitted successfully

18/06/20 08:18:57 INFO tkgrid.JobLauncher: submit: Exit, jobID=application_1529477689901_0007

18/06/20 08:18:58 INFO tkgrid.JobLauncher: waitForConnection: connection received

18/06/20 08:19:02 INFO tkgrid.JobLauncher: waitForReadyOrDie: Received READY message from AppMaster

- Based on the cas.MEMORYSIZE parameter and the available resources in the cluster at this time, the YARN Resource Manager will accept or reject the request to start the "CAS Application".

- If accepted, YARN first starts the "ApplicationMaster" container (whose role, in the YARN architecture, is to coordinate and track the execution of tasks on the other containers).

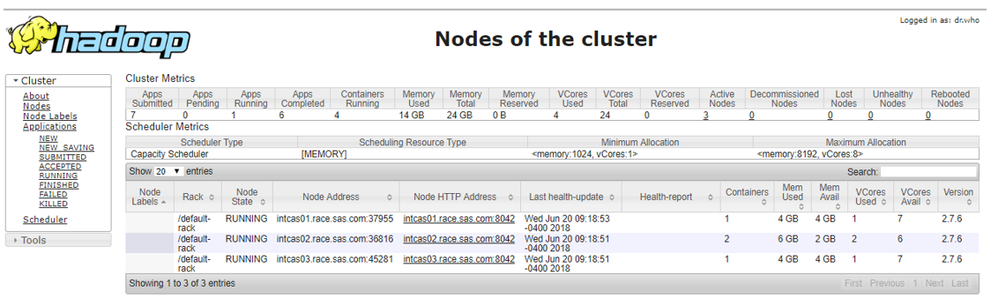

- The ApplicationMaster will start one container, with a size generally corresponding to cas.MEMORYSIZE, on each YARN NodeManager that CAS runs on (including the CAS controller) and keep the YARN application in the "RUNNING" state for the whole lifecycle of the CAS server.

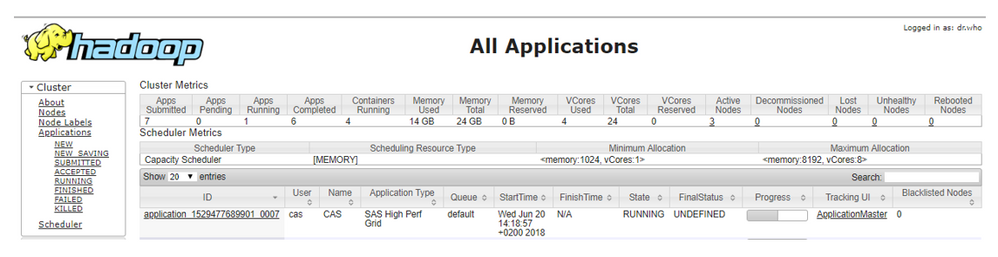

- The ResourceManager GUI will show that the "SAS High Perf Grid" is running and displays the resource consumption (in terms of memory and vcores)

| YARN Application for the CAS Server |

| YARN Containers for the CAS Server |

- From the YARN side, the amount of memory associated to the "CAS Application" containers will stay reserved for our CAS server as long as the CAS server is running (and will not be allocated for other Hadoop jobs).

- From the CAS side, it is consistent to "preserve" this amount of memory from the other Hadoop jobs as the memory used by the CAS server process is indeed limited to the value transmitted to YARN (cas.MEMORYSIZE).

Note: Even if you limit the CAS memory usage with cas.MEMORYSIZE, it does not prevent you from loading more data than this limit. Thanks to the memory-mapped files in the CAS Disk Cache, when the limit is exceeded, CAS can "page out" and "page in" the required data blocks that are not already available in the active or cached memory.

Ok for the memory but what about the CPU?

For YARN

YARN works with the concept of vcores (short for virtual cores).

A vcore is a usage share of a host CPU that the YARN NodeManager allocates in order to use all available resources as efficiently as possible.

The number of vcores that a NodeManager can use is defined in yarn.nodemanager.resource.cpu-vcores. It is generally set to the number of physical cores on the host, but administrators can increase or decrease it if they wish to accommodate more or fewer containers on the nodes. It is very important to find the proper value for this parameter.

Just an example

In our research environment, we identified performance issues with Hadoop: only 2 or 3 people out of 20 concurrent users were able to perform their data management activities in the same period of time (serial and parallel loading from Hive).

This initially surprised me since the users were simply running a Hive query against a small table. Similarly, the initial performance observed for parallel data loads with the Embedded Process was not encouraging... Given that the Hadoop cluster contains 3 workers (each with 2 cores and 16 GB RAM), I was expecting better performance!!!

Why?

Actually, this sub-optimal performance was caused by the default YARN settings: the default values meant that only a very small number of containers were able to start…

Each container requires a minimum of 1 vcore, and with yarn.nodemanager.resource.cpu-vcores set to "2", it was only possible to start a maximum of 6 (2x3, for 2 vcores and 3 NodeManagers) containers across the whole cluster. Once all 6 slots were taken, the remaining jobs were failing or pending.

Given that 2 containers were required to start the MapReduce job generated by each Hive query, and 4 for each SAS EP MapReduce job (in addition to the ApplicationMaster container), it became clear where the bottleneck was…

Changing the value of yarn.nodemanager.resource.cpu-vcores to "8" instantly made 24 vcores available to start containers in the cluster and then, all of a sudden, our 20 users were able to perform their hands-on in a reasonable time 🙂
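The arithmetic behind this example can be reproduced with a few lines of Python. This is only a sketch: the function names are hypothetical, and it assumes that every container requests exactly 1 vcore and that each job (Hive or SAS EP) needs one extra ApplicationMaster container on top of its task containers.

```python
def container_slots(vcores_per_node: int, node_managers: int,
                    vcores_per_container: int = 1) -> int:
    """Maximum number of containers the cluster can run at the same time."""
    return (vcores_per_node // vcores_per_container) * node_managers


def concurrent_jobs(slots: int, containers_per_job: int) -> int:
    """Number of jobs that can hold all their containers simultaneously."""
    return slots // containers_per_job


# Assumption: one ApplicationMaster container is added to each job.
HIVE_CONTAINERS = 2 + 1  # 2 containers per Hive query + ApplicationMaster
EP_CONTAINERS = 4 + 1    # 4 containers per SAS EP job + ApplicationMaster

for vcores in (2, 8):
    slots = container_slots(vcores, node_managers=3)
    print(f"cpu-vcores={vcores}: {slots} slots, "
          f"{concurrent_jobs(slots, HIVE_CONTAINERS)} concurrent Hive jobs, "
          f"{concurrent_jobs(slots, EP_CONTAINERS)} concurrent EP jobs")
```

Under these assumptions, the original setting leaves room for only a couple of Hive jobs at a time, which is consistent with the 2 or 3 users out of 20 who were able to work.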

There are many other parameters like this one that you can tune in YARN, either at the NodeManager level or at the cluster level (for example, maximum and minimum container sizes in terms of vcores and memory), and they can affect the containers' resource allocation, whatever the client application is requesting. (See the excellent SAS® Analytics on Your Hadoop Cluster Managed by YARN for detailed explanations of those parameters in the context of LASR/HPA usage.) When a client application requests resources from YARN, it generally provides not only a memory amount but also a number of vcores.

For CAS

When CAS starts, to enforce the license restriction, it sets a CPU mask on the threads it creates, telling them they can only run on certain cores. So no matter how YARN allocates the "vcores" on the CAS nodes, the CAS process will only be able to use the licensed CAS physical cores of the server.

With cas.USEYARN enabled, in Viya 3.3 when CAS starts up, the number of "vcores" requested from YARN is fixed to 1 (see log extract above). In Viya 3.4, there is a new parameter called env.CAS_ADDITIONAL_YARN_OPTIONS, which, as the name suggests, allows additional parameters to be specified to YARN when starting the CAS server. For example:

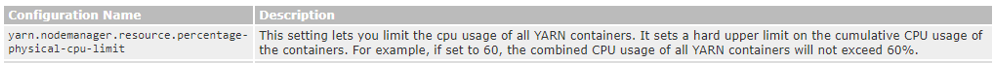

Finally, your Hadoop administrator might also have configured YARN to use cgroups to limit the resource usage of the containers, although this is not enabled by default.

When activated in YARN, you can limit the cumulative CPU usage of all containers on each NodeManager to a defined percent of the machine.

Why might some groups of users feel frustrated? (Requirements and limits)

If you have managed to read the first half of the article and are wondering why all of this is important 😊: it serves as a reminder that setting technical expectations early, during the technical architecture design of a Viya implementation and in discussions with the various project stakeholders, can be beneficial (e.g. it saves time, can speed up implementation, and avoids a poor end-user experience).

Not dynamic

This is a key consideration and one which must be clearly understood: once you have started CAS with the cas.USEYARN configuration parameter set to "true", the corresponding amount of capacity in YARN is reserved for the lifetime of the CAS server process. Even if there are zero users utilizing CAS, a large part of the memory cannot be used by Hadoop-related processing.

While this is true, it is important to understand that CAS is a service, not another Hadoop batch job that will run, terminate and release the resources. The CAS server needs to be up and running so it can provide a 24/7 Analytics service to the end-users community.

In the Hadoop vocabulary, CAS is known as a "long-running service". The YARN community is aware of the need to run such services in Hadoop as well and is working to improve their support (e.g. see this page).

Requires available resource on all CAS nodes

When CAS starts up, it needs to start a container on each YARN NodeManager where CAS is installed.

For some Hadoop administrators who are not as familiar with SAS in-memory processing requirements, the request to ring fence resources for CAS (“long running service”) will require an assessment prior to implementing.

- There is a significant configuration consideration for YARN: All CAS nodes (Controller and workers) need to be co-located with a YARN NodeManager (only the NodeManagers can start containers).

- The maximum container memory size allowed by YARN must equal or exceed the cas.MEMORYSIZE value.

- When CAS starts up, the amount of resource has to be available on all nodes where CAS is installed.

The third point seems obvious, but on very large and busy Hadoop clusters there can be situations where the cluster as a whole has more than enough memory to support the CAS memory request, while a number of the NodeManagers are already using all of their host machine's RAM/CPU capacity. In such a case, CAS will not start, because it needs to start a container on each node where CAS is deployed.
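The following Python sketch illustrates why the cluster-wide total is not the right number to look at. It is a simplified model, not the actual CAS or YARN logic: the function name, the node names and the memory figures are all hypothetical, and "free memory" stands for the memory that YARN can still allocate on each NodeManager.

```python
def nodes_blocking_cas_start(free_memory_mb_by_node: dict,
                             cas_memorysize_mb: int,
                             yarn_max_container_mb: int) -> list:
    """Return the CAS nodes on which the placeholder container cannot start.

    An empty list means the request can be satisfied on every node.
    """
    # The container size itself must be allowed by YARN; otherwise no node qualifies.
    if cas_memorysize_mb > yarn_max_container_mb:
        return sorted(free_memory_mb_by_node)
    # Otherwise each node is checked individually, not the cluster total.
    return sorted(node for node, free_mb in free_memory_mb_by_node.items()
                  if free_mb < cas_memorysize_mb)


# Hypothetical cluster: 24 GB free in total, far more than 3 x 4 GB,
# but one busy node cannot host its container.
free = {"node1": 12288, "node2": 10240, "node3": 2048}
print(nodes_blocking_cas_start(free, cas_memorysize_mb=4096,
                               yarn_max_container_mb=8192))  # ['node3']
```

In this example a single busy node is enough to prevent the CAS server from starting, even though the other two nodes have plenty of headroom.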

CPU resource management instructions are not passed from CAS to YARN

With Viya 3.3, only the CAS memory usage information is passed to YARN (there is no information about the CPU): the number of cores is hard-coded to 1 per container. However, keep in mind that CAS will not be allowed to use more cores than what is defined in the license.

In addition, with SAS Viya 3.4 there is also a new parameter, cas.CPUSHARES, to specify cgroup shares for the CAS server as a whole. This new parameter could be used in conjunction with YARN.

For example YARN jobs could be put in a cgroup with "X" shares, and start CAS with CPU shares "Y". YARN jobs would get X/(X+Y) of the CPU, and CAS would get Y/(X+Y) (assuming there were no other top level CPU cgroups on the host).
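As a worked example of this formula, here is a short Python sketch. The function name and the share values (1024 and 3072) are purely illustrative and are not recommended settings. Keep in mind that cgroup CPU shares are relative weights that only come into play when the CPUs are contended.

```python
def cpu_split(yarn_shares: int, cas_shares: int) -> tuple:
    """Return the (YARN, CAS) fractions of CPU under full contention.

    Assumes, as in the text, that these are the only two top-level
    CPU cgroups on the host.
    """
    total = yarn_shares + cas_shares
    return yarn_shares / total, cas_shares / total


# X = 1024 shares for the YARN jobs, Y = 3072 shares for CAS (illustrative values)
yarn_fraction, cas_fraction = cpu_split(1024, 3072)
print(f"YARN: {yarn_fraction:.0%}, CAS: {cas_fraction:.0%}")  # YARN: 25%, CAS: 75%
```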

Finally, with Viya 3.4, thanks to the new CAS environment variable CAS_ADDITIONAL_YARN_OPTIONS, it will be possible to specify the number of cores, the queue, etc. for our "placeholder" containers.

Possible ways to improve the overall experience

Tuning

There are many knobs that a Hadoop administrator can tune in YARN and in the Scheduler configuration using queues, node labels, placement rules, etc…depending on the distribution and associated Scheduler.

Also, as already discussed, with Viya 3.4 it will be possible to pass more parameters to YARN at CAS server startup.

What else?

Well... what can be done from the CAS side is documented and hopefully understood with the previous explanations.

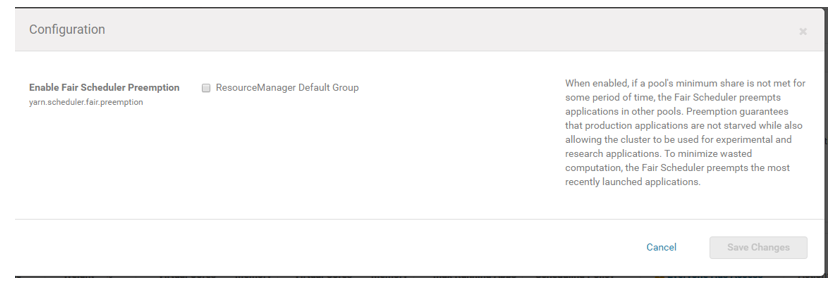

The unknown, though, is what can be done on the YARN side and whether it will be supported with CAS. The YARN capabilities depend on the YARN version and on the YARN community continuing to develop advanced resource management features for YARN, such as "node labeling" and "pre-emption". (See this additional explanation on the node labels.)

YARN preemption can be configured at the scheduler level:

| YARN Preemption configuration |

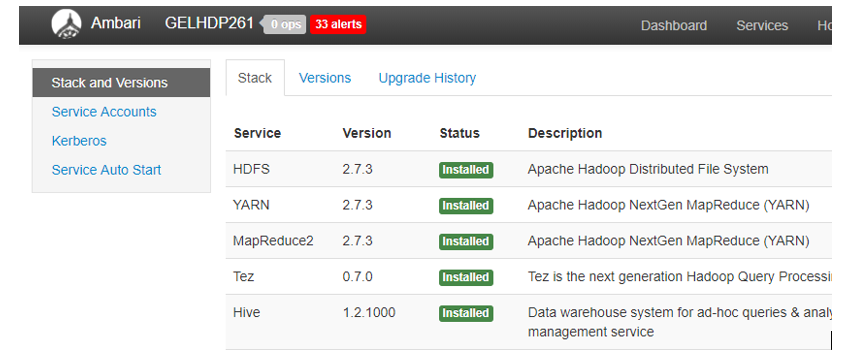

Depending on your Hadoop distribution and version, you will be using a more or less recent version of Hadoop, and the YARN capabilities will depend on it.

For example, according to this ticket, non-exclusive node labeling (and non-exclusive node labeling preemption) will work starting from Apache Hadoop 2.8.0. And according to the Hortonworks documentation, the latest Hortonworks HDP platform uses Apache Hadoop 2.7.3.

Also, YARN is currently not able to pre-empt containers correctly if the requested containers are on specific nodes (which is what we do when we start the CAS Server) - Open Ticket

So clearly, "your mileage will vary" and there is no default support for such features.

However it is important to remember that even though CAS notifies YARN with "place holder" containers, CAS has its own focus in terms of resource consumption: if it is not limited through the license to a subset of cores it will try to use all the available CPU resource (whatever has been communicated to YARN :)).

Conclusion...and other co-located architecture considerations

First I'd like to thank Steve Krueger, James Kochuba, Stefan Park and Simon Williams for their help and contribution during the write-up of this article.

As explained in this post, CAS is NOT running inside a YARN container and there are still some limitations that the SAS and Hadoop technical teams working on Viya implementation projects need to take into account.

So make sure that the correct expectations are set when discussing this integration and the coexistence challenges of CAS and Hadoop Applications inside the same YARN cluster.

The technical teams must clearly understand that on each host machine where CAS is installed, they will need to give up a part of the Hadoop cluster memory resource for the CAS usage. This amount will remain reserved for CAS (whatever the usage is) and CAS will not use more than this amount of memory. This amount is NOT dynamic depending on the CAS activity.

Finally, YARN does not manage everything in Hadoop: HDFS, the Hive servers and other server processes run outside its control. That is why YARN is not given the full OS resources, but is generally configured to use 60 to 70% of the resources on each NodeManager.
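To get a feel for what this means on a single host, here is a rough Python sketch of the memory budget. It is only an illustration under stated assumptions: the function names are hypothetical, and the figures combine values mentioned separately in this article (a 16 GB node, a 70% YARN share and a cas.MEMORYSIZE of 4g).

```python
def yarn_memory_mb(host_memory_mb: int, yarn_percent: int) -> int:
    """Memory handed over to YARN on one NodeManager host."""
    return host_memory_mb * yarn_percent // 100


def memory_left_for_hadoop_mb(host_memory_mb: int, yarn_percent: int,
                              cas_memorysize_mb: int) -> int:
    """YARN memory still available to other jobs once CAS has reserved its container."""
    return yarn_memory_mb(host_memory_mb, yarn_percent) - cas_memorysize_mb


# Illustrative values: 16 GB host, 70% given to YARN, cas.MEMORYSIZE=4g
print(yarn_memory_mb(16384, 70))                    # 11468
print(memory_left_for_hadoop_mb(16384, 70, 4096))   # 7372
```

With these numbers, roughly 7 GB per node would remain for the other YARN applications for as long as the CAS server is running, whether or not CAS is actually busy.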

Although this article focuses on YARN and workload management of CAS and Hadoop processing, the other important dimension when considering a co-located environment is the storage.

CAS not only requires memory and CPU, but also needs disk space for the CAS Disk Cache. So it is very important to make sure that the storage on all the Hadoop nodes can provide enough space on local standard file systems for the CAS Disk Cache, where CAS will "memory-map" its tables. When Hadoop is deployed on vendor appliances with high-performance storage and many local drives attached (like Oracle BDA), it is very likely that 95% of this storage has already been formatted for HDFS.

So with CAS, it will be necessary to find storage that is exposed as a local directory to CAS for our CAS Disk Cache.

For all these reasons, technical teams working to deliver SAS implementations need to discuss if requirements can be best met by co-locating CAS with an existing Hadoop environment, or whether it is better to place CAS and Hadoop on different host machines.

Keeping CAS and Hadoop environments separated from one another (aka "remote deployments") may prove to be more appropriate when the existing Hadoop environment is consistently using large amounts of CPU and RAM. This "remote deployment" configuration is made possible by the Data Connect Accelerator and the Embedded Process, which allow effective movement and processing of data. When the Embedded Process is deployed, it can also help to have some dedicated networking infrastructure between CAS and Hadoop.

So, to sum up, and to quote one of my favorite songs by The Offspring, the best practice is "unless you have no choice, you got to keep 'em separated."

Thanks for reading! Your questions and comments are welcome!
