Autoencoder analysis using PROC NNET and neuralNet action set

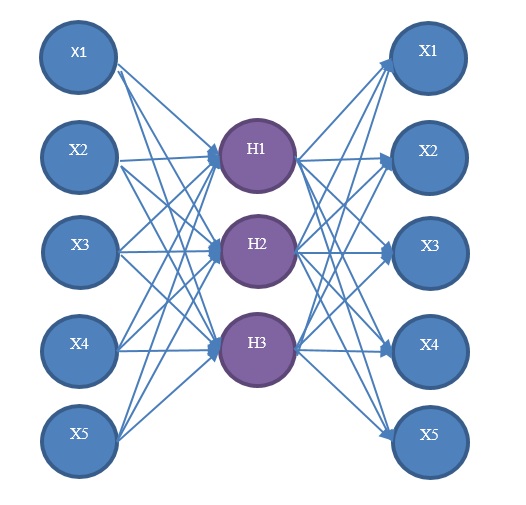

An autoencoder is a multilayer perceptron neural network that is used for efficient encoding and decoding, and it is widely used for feature extraction and nonlinear principal component analysis. For more information about multilayer perceptron neural networks, see [1]. Architecturally, an autoencoder resembles a regular multilayer perceptron neural network: it has an input layer, hidden layers, and an output layer. It differs in that the output layer is a duplicate of the input layer, so the network is trained to reproduce its own inputs. Because no separate target variable is needed, autoencoders are unsupervised learning models.
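To make the "output duplicates the input" idea concrete, the training objective can be written out for the simplest case. The formulation below is a sketch under assumptions that are ours, not taken from the SAS documentation: a single hidden layer with hyperbolic tangent activation, a linear output layer, and a mean squared reconstruction error. The symbols $W_1, b_1, W_2, b_2$ (weights and biases) and $N$ (number of observations) are introduced only for illustration, and the error function that PROC NNET actually minimizes may differ.

$$
h_n = \tanh(W_1 x_n + b_1), \qquad
\hat{x}_n = W_2 h_n + b_2, \qquad
E = \frac{1}{N} \sum_{n=1}^{N} \lVert x_n - \hat{x}_n \rVert^2
$$

The target in $E$ is the input $x_n$ itself, which is why no label is required. The hidden activations $h_n$ are the learned features.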

Figure 1 shows an example of an autoencoder. Autoencoders are supported in the NNET procedure and the neuralNet action set as part of the SAS® Visual Data Mining and Machine Learning 8.1 offerings. You can train an autoencoder in PROC NNET by omitting the TARGET statement and specifying MLP as the ARCHITECTURE. The score output of the autoencoder contains the learned features of the hidden layers. For more details, see the NNET Procedure chapter [3] in the book Data Mining and Machine Learning Procedures.

Figure 1. A diagram of an autoencoder network

We will show an example of how to call PROC NNET to train an autoencoder using the MNIST (Modified National Institute of Standards and Technology) database. The MNIST database is a large database of handwritten digits that is commonly used for training and testing various machine learning models. It has a training set of 60,000 examples and a test set of 10,000 examples. The digits have been size-normalized and centered in a fixed-size image.

Before we delve into PROC NNET, we need to download the image files from the database and convert them to SAS data sets. From [2], you can download the four compressed files named train-images-idx3-ubyte.gz, train-labels-idx1-ubyte.gz, t10k-images-idx3-ubyte.gz, and t10k-labels-idx1-ubyte.gz. Then use any third-party unzip tool to decompress all the files to the destination directory of your choice. Decompressing should result in four files named train-images.idx3-ubyte, train-labels.idx1-ubyte, t10k-images.idx3-ubyte, and t10k-labels.idx1-ubyte. Assuming you save all the files to the data directory, the following DATA step code snippet creates the SAS data set named mnist_train in SAS® Studio:
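For readers who want to see what such a conversion has to parse, here is a minimal Python 3 sketch of the IDX layout documented at [2]. It is not the DATA step from this article. Each file starts with big-endian 32-bit header fields (magic number 2051 for images, 2049 for labels), followed by unsigned bytes. The function names are hypothetical, and placing the label in the first column followed by the pixels is an assumption inferred from the var2 to var785 naming used later in this article.

```python
import struct

IMAGE_MAGIC = 2051  # 0x00000803: unsigned bytes, 3 dimensions (count, rows, cols)
LABEL_MAGIC = 2049  # 0x00000801: unsigned bytes, 1 dimension (count)


def _read_header(f, n_fields):
    """Read n_fields big-endian unsigned 32-bit integers from file f."""
    raw = f.read(4 * n_fields)
    if len(raw) != 4 * n_fields:
        raise ValueError("file is too short to contain an IDX header")
    return struct.unpack(">" + "I" * n_fields, raw)


def read_idx_images(path):
    """Return one flat list of pixel values (0-255) per image."""
    with open(path, "rb") as f:
        magic, count, rows, cols = _read_header(f, 4)
        if magic != IMAGE_MAGIC:
            raise ValueError("not an IDX3 image file (magic=%d)" % magic)
        size = rows * cols
        data = f.read(count * size)
    if len(data) != count * size:
        raise ValueError("image data is truncated")
    return [list(data[i * size:(i + 1) * size]) for i in range(count)]


def read_idx_labels(path):
    """Return the list of digit labels (0-9)."""
    with open(path, "rb") as f:
        magic, count = _read_header(f, 2)
        if magic != LABEL_MAGIC:
            raise ValueError("not an IDX1 label file (magic=%d)" % magic)
        data = f.read(count)
    if len(data) != count:
        raise ValueError("label data is truncated")
    return list(data)


def build_rows(image_path, label_path):
    """Combine both files into rows of [label, pixel_1, ..., pixel_N].

    Assumed layout: the label comes first, then the pixels in file order.
    """
    images = read_idx_images(image_path)
    labels = read_idx_labels(label_path)
    if len(images) != len(labels):
        raise ValueError("image and label counts differ")
    return [[label] + pixels for label, pixels in zip(labels, images)]
```

Calling `build_rows("data/train-images.idx3-ubyte", "data/train-labels.idx1-ubyte")` would then yield one 785-value row per training image, which could be written to a CSV file and imported into SAS.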

Next, we can upload the data set to CAS (Cloud Analytic Services, the execution engine for SAS Viya) and invoke PROC NNET. Here we assume that you have already connected to a CAS server and started a session, and that in this session you have a CAS library named mycaslib. For more information about CAS sessions and CAS libraries, see the sections “Using CAS Sessions and CAS Engine Librefs” on page 5 and “Loading a SAS Data Set onto a CAS Server” on page 6 in Chapter 2, “Shared Concepts,” in the same book mentioned above. For illustration purposes, we include the first 10 observations in the training.

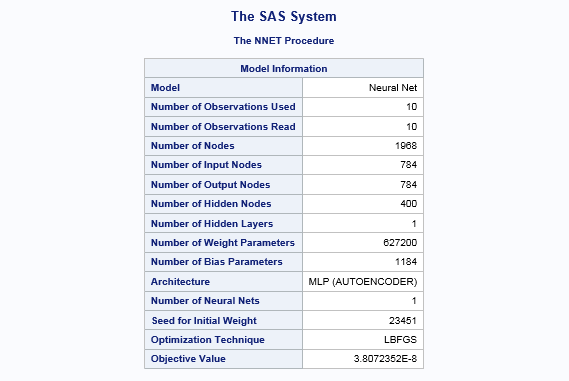

The following code snippet loads the data set mnist_train into the active CAS session and runs PROC NNET. Variables var2 through var785 in the INPUT statement are the 784 dimensions of each handwritten image. MLP is specified as the architecture, which is also the default. We add one hidden layer with 400 neurons, and the activation function is the hyperbolic tangent function. The SCORE statement produces a score data set based on the trained model, and the score results include the values of each hidden neuron.

As you can see from the PROC NNET example above, there is no TARGET statement and MLP is used as the ARCHITECTURE. PROC NNET will train an autoencoder under this setting.
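To illustrate what training without a target means, the following is a minimal pure-Python 3 sketch of an autoencoder with one hyperbolic tangent hidden layer. It is not the algorithm that PROC NNET runs. The linear output layer, the squared-error loss, the plain batch gradient descent, and all names and default values (`train_autoencoder`, `n_hidden`, `epochs`, `lr`, `seed`) are assumptions chosen for readability, and the example is meant for small inputs rather than 784-dimensional images.

```python
import math
import random


def train_autoencoder(data, n_hidden, epochs=300, lr=0.1, seed=0):
    """Fit input -> tanh hidden layer -> linear output, using the inputs as targets.

    Returns (encode, decode, losses). losses[i] is the mean squared
    reconstruction error measured just before the i-th weight update.
    """
    rng = random.Random(seed)
    n_in = len(data[0])
    # Small random starting weights; biases start at zero.
    W1 = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_hidden)]
    b1 = [0.0] * n_hidden
    W2 = [[rng.uniform(-0.5, 0.5) for _ in range(n_hidden)] for _ in range(n_in)]
    b2 = [0.0] * n_in

    def encode(x):
        """Hidden-layer values: the learned features of x."""
        return [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
                for row, b in zip(W1, b1)]

    def decode(h):
        """Reconstruction of the input from the hidden-layer values."""
        return [sum(w * hi for w, hi in zip(row, h)) + b
                for row, b in zip(W2, b2)]

    losses = []
    for _ in range(epochs):
        gW1 = [[0.0] * n_in for _ in range(n_hidden)]
        gb1 = [0.0] * n_hidden
        gW2 = [[0.0] * n_hidden for _ in range(n_in)]
        gb2 = [0.0] * n_in
        total = 0.0
        for x in data:
            h = encode(x)
            y = decode(h)
            err = [yi - xi for yi, xi in zip(y, x)]  # the target is the input itself
            total += sum(e * e for e in err)
            # Output-layer gradients of 0.5 * squared error.
            for i in range(n_in):
                gb2[i] += err[i]
                for j in range(n_hidden):
                    gW2[i][j] += err[i] * h[j]
            # Hidden-layer gradients, using d tanh(a)/da = 1 - tanh(a)^2.
            for j in range(n_hidden):
                back = sum(err[i] * W2[i][j] for i in range(n_in))
                dh = back * (1.0 - h[j] * h[j])
                gb1[j] += dh
                for k in range(n_in):
                    gW1[j][k] += dh * x[k]
        losses.append(total / (len(data) * n_in))
        # Batch gradient-descent update with the averaged gradients.
        step = lr / len(data)
        for i in range(n_in):
            b2[i] -= step * gb2[i]
            for j in range(n_hidden):
                W2[i][j] -= step * gW2[i][j]
        for j in range(n_hidden):
            b1[j] -= step * gb1[j]
            for k in range(n_in):
                W1[j][k] -= step * gW1[j][k]
    return encode, decode, losses
```

For example, `encode, decode, losses = train_autoencoder(rows, n_hidden=2)` on a small list of numeric rows returns an encoder whose output plays the role of the hidden-neuron values in the score data set, and `decode(encode(row))` gives the reconstruction of a row.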

Figure 2 shows the model information from the PROC NNET run. The value of the error function is about 2.8e-08, which indicates that the optimization solver found a very good solution and that the model fits the training data well.

Figure 2. The model information from the PROC NNET run.

You have probably heard that SAS® Viya is open, which means that you can perform the same task from different clients such as Python, Lua, and CASL. Next, we will train the same autoencoder in CASL by calling the annTrain and annScore actions in the neuralNet action set. A nice feature of CASL is that you can use the listnode option to save the output from the autoencoder analysis, which lets you visualize feature extraction by comparing plots of the inputs with plots of the outputs. The following CASL code snippet trains the model first and then uses the training result for scoring. In essence, it does the same job that PROC NNET does behind the scenes.

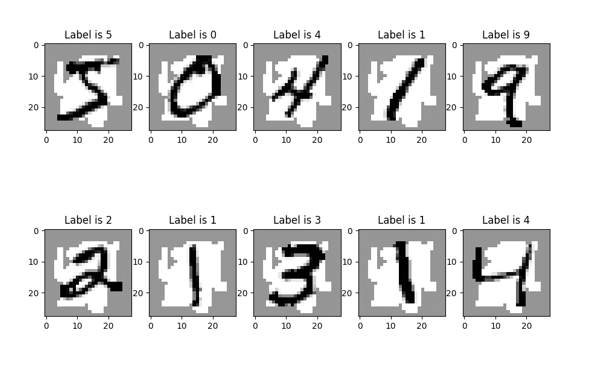

Figure 3 shows the original handwritten digits in the first ten rows of the data set mnist_train, and Figure 4 shows the reconstructed handwritten digits from the autoencoder training for the same ten digits. As you can see, they share similar patterns. This example shows that the hidden layers of the autoencoder are able to represent the most important features of the inputs with far fewer dimensions, and that the learned features can be used for further training and analysis, such as stacked denoising autoencoders in deep neural networks.

Figure 3. The original ten handwritten digits in the MNIST database.

Figure 4. The reconstructed ten handwritten digits from autoencoder training.
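A quick way to inspect an original row next to its reconstruction without a plotting library is to reshape the 784 values into a 28 by 28 grid and print it as text. The following Python 3 sketch is an illustration only and is not the plotting code used for Figures 3 and 4. It assumes that pixels are stored row by row with intensities between 0 and 255, and the names `to_grid`, `render_ascii`, and `mean_squared_error` are hypothetical.

```python
RAMP = " .:-=+*#%@"  # characters ordered from darkest to brightest


def to_grid(flat, width=28):
    """Split a flat list of pixel values into rows of the given width."""
    if len(flat) % width != 0:
        raise ValueError("length is not a multiple of the image width")
    return [flat[i:i + width] for i in range(0, len(flat), width)]


def render_ascii(flat, width=28, max_value=255.0):
    """Render one image as text, one character per pixel."""
    lines = []
    for row in to_grid(flat, width):
        chars = []
        for value in row:
            # Clamp first: reconstructed values can fall outside 0..max_value.
            level = min(max(value / max_value, 0.0), 1.0)
            chars.append(RAMP[int(level * (len(RAMP) - 1))])
        lines.append("".join(chars))
    return "\n".join(lines)


def mean_squared_error(original, reconstructed):
    """Average squared difference between two images of equal length."""
    if len(original) != len(reconstructed):
        raise ValueError("images have different lengths")
    return sum((a - b) ** 2 for a, b in zip(original, reconstructed)) / len(original)
```

Printing `render_ascii(original_row)` and `render_ascii(reconstructed_row)` one after the other gives a rough visual comparison, and `mean_squared_error` summarizes the difference between the two rows in a single number.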

Lastly, if you are interested in creating those MNIST plots in Python 2.7, the following is the code snippet [4].

The source can be downloaded from https://github.com/sassoftware/sas-viya-machine-learning/tree/master/autoencoder

References

[1] Bishop, C. M. (1995). Neural Networks for Pattern Recognition. Oxford: Oxford University Press.

[2] LeCun, Y., Cortes, C., and Burges, C. J. C. (2016). “The MNIST Database of Handwritten Digits.” http://yann.lecun.com/exdb/mnist/.

Acknowledgement

I would like to thank my colleagues Tao Wang, Wendy Czika, and Brett Wujek for their suggestions and for proofreading this tip.
