SAS GRID - View log file (from proc printto) while batch program is ru...

We are transitioning from a Windows desktop server-based infrastructure to a Linux Grid environment, and we are getting a lot of complaints from users that they can no longer view their log (generated through PROC PRINTTO) while a program is running in batch mode. I have tried the LOGPARM options, to no avail. I read that LOGPARM only works when ALTLOG is set, so I tried using the two together without PROC PRINTTO (even though that would not be a feasible solution), and even then I could not access the file. I've attached the error I get when I try to open a log file. I was told that SAS does not write to the log until the program finishes (or until some buffer fills up), but I can see the file size growing; I just can't access the data. Even logging into the Linux server and using cat just prints gibberish. Any help would be appreciated!

Why are you using PROC PRINTTO when running a batch program? In batch mode a log file is produced automatically, and it can be written to the required folder location with the -LOG option when invoking SAS.

Will that work? I'm open to suggesting it. Our users are not very skilled with Linux and are used to batch submitting from a right click, so they may push back against having to type another filepath into Linux.

EDIT: I tested it with the -log option and am confirming that it still does not work. I get the same error when trying to open the log.

You haven't described in enough detail what you are doing. What does "batch submitting from a right click" mean? Where are you doing this? What are you opening the SAS logs with? Posting one or two screenshots of these processes would help.

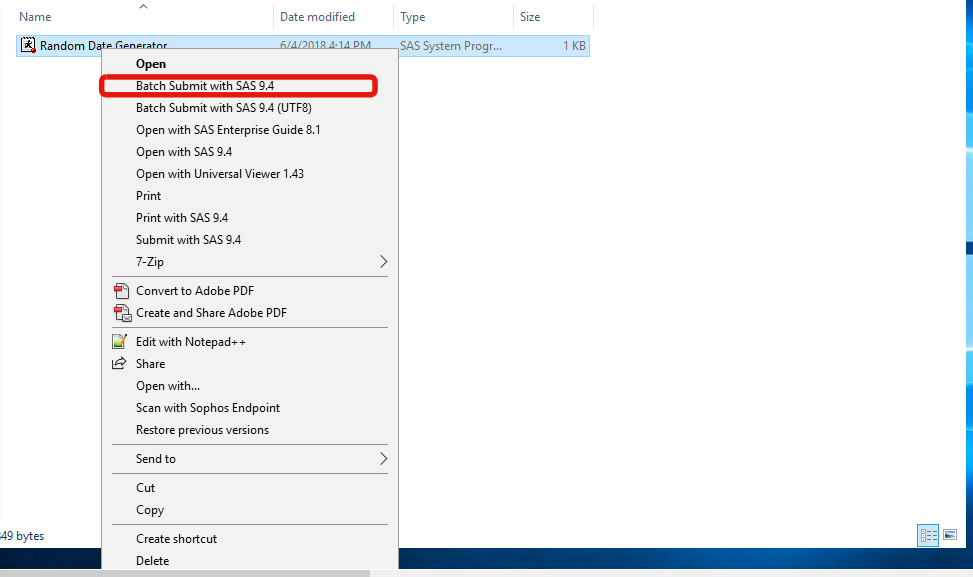

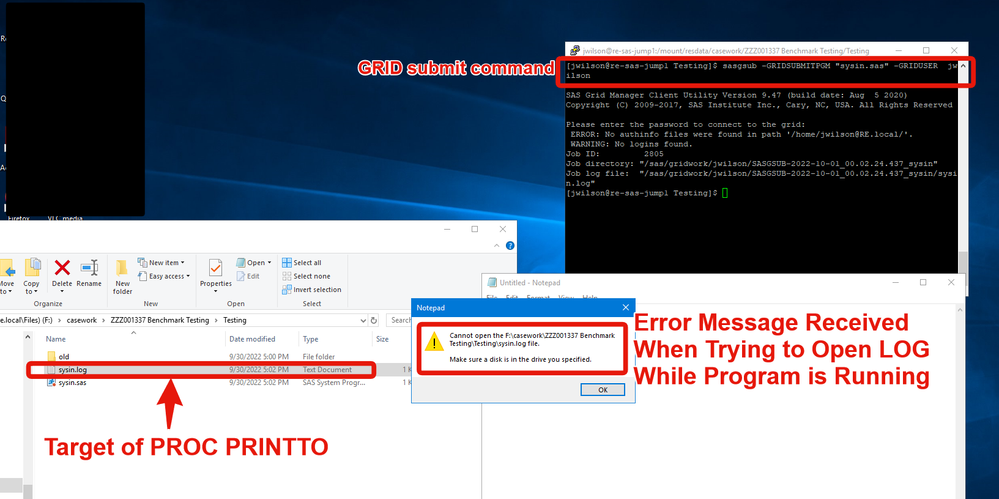

Screenshots of the old system (right-click -> "Batch Submit with SAS 9.4") and the new system (accessing the GRID through Terminal/PuTTY) are attached. Note that the program I am submitting is a dummy program that just has a PROC PRINTTO and a %PUT statement, followed by a CALL SLEEP for 60 seconds so that I have time to try to open the log.

Note also that in the old system, while the program is running, I am able to view the log. In the new system, it doesn't matter which application I use to open the log (Notepad, Notepad++, more/cat in PuTTY); the outcome is the same (i.e., no access).

Old system (Windows):

New system (Linux back-end with a windows UI) with hopefully helpful commentary:

You should NOT have any trouble looking at files that are being written to on UNIX (unlike on Windows).

The tail command should work normally on such files.
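
To see this behavior without SAS in the picture, here is a minimal shell sketch, assuming a Linux shell with GNU sleep (which accepts fractional seconds). A background loop appends lines to a file, standing in for a running job, while tail reads the same file mid-run. The file name demo_growing.log is made up for the illustration. If this works on the grid node's disk but tail still fails on a SAS log in the same directory, that would suggest the problem is not SAS itself.

```shell
#!/bin/sh
# Minimal sketch: read a file while another process is still appending to it.
# A shell loop stands in for the running job; no SAS is involved.
log=demo_growing.log   # hypothetical file name for the demo
: > "$log"             # start with an empty file

# Writer: appends one line every half second, like a job writing its log.
(
  for i in 1 2 3 4 5; do
    echo "NOTE: step $i complete" >> "$log"
    sleep 0.5
  done
) &
writer=$!

sleep 1.2                       # let a few lines arrive while the writer runs
mid_run=$(tail -n 2 "$log")     # tail needs no exclusive access to the file
echo "--- read while writer is still running ---"
echo "$mid_run"

wait "$writer"
echo "--- lines after writer finished: $(wc -l < "$log" | tr -d ' ')"
```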

That right-click nonsense on the Windows GUI is just a difficult way to run a SAS program. Instead, just use the normal way to run a SAS program, which is the command sas (or whatever your system admin has set up for you) followed by the filename. The fact that you are using LSF to submit the job to a GRID node should not matter if they configured your Unix environment correctly.

You should not be using PROC PRINTTO on either Windows or Unix for simple "batch" or background runs.

To run a SAS program, the syntax is trivial and essentially the same as running any other command on Unix or Windows:

`sas filename`

If you don't use any other options, SAS will write the LOG to a file with the same base name and the extension .log, and the PRINT (or "listing") destination to a file with the extension .lst. So if you run any of these commands:

- `sas myfile`
- `sas myfile.sas`
- `sas /some/other/directory/myfile.sas`

it will create files named myfile.log and myfile.lst in the current directory.
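
The naming rule just described can be written down as a tiny shell function, which makes it easy to predict where the log of a given command will land. This is only a sketch of the rule as stated above: default_outputs is a hypothetical helper that prints the expected names and does not run SAS.

```shell
#!/bin/sh
# Sketch of the default naming rule: without -log/-print, the log and listing
# take the program's base name (directory and .sas dropped) and are written
# to the current directory. default_outputs is a hypothetical helper.
default_outputs() {
  prog=$1
  base=$(basename "$prog" .sas)   # strip the directory and a trailing .sas, if present
  printf '%s/%s.log %s/%s.lst\n' "$PWD" "$base" "$PWD" "$base"
}

# All three of these print the same two paths in the current directory.
default_outputs myfile
default_outputs myfile.sas
default_outputs /some/other/directory/myfile.sas
```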

If you want to direct the log and listing to other places use the -log and -print command line options.

`sas myfile.sas -log myfile_run1.log -print myfile_run2.lst`

If you push the run into the background (or open another command window), then you can check the status of the job by checking the end of the file(s):

- `tail myfile.log`
- `tail myfile.lst`
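
If you would rather watch the log continuously than run tail repeatedly, GNU tail can follow a file and stop on its own once a given process exits (its --pid option). The sketch below assumes GNU coreutils and uses a stand-in loop instead of a real sas command, so the job line is hypothetical; replace it with your actual invocation.

```shell
#!/bin/bash
# Sketch: start a job in the background writing to a log, then follow the log
# until the job exits. Assumes GNU coreutils tail (for --pid).
log=myfile_run1.log
: > "$log"   # create the log first so tail has something to open

# Hypothetical stand-in for: sas myfile.sas -log myfile_run1.log
( for i in 1 2 3; do echo "NOTE: step $i"; sleep 0.3; done ) >> "$log" 2>&1 &
job=$!

# -n +1 prints from the first line; -f keeps following; --pid stops after the job ends.
tail -n +1 -f --pid="$job" "$log"

wait "$job"
status=$?
echo "job $job exited with status $status"
```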

Hi Tom (big fan by the way, you're a forum machine),

I'm not sure your solution works in my case, since we have a GRID server and the "sas" command isn't available on the jump server. We have to submit everything with "sasgsub" instead. Also, just to be clear, the right-click batch submit is from our old system; I only provided that information for context since SASKiwi asked.

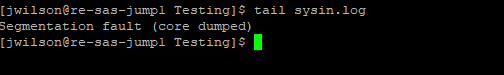

Basically, the bottom line is that no matter how I run SAS, the log is still inaccessible while the program runs (I even bypassed the GRID, logged directly into a node, used the "sas" command, and got the same results). Even if I use tail on the log while the program runs, I just get a segmentation fault (picture below). I have tried every combination of running SAS and viewing the log (with and without PROC PRINTTO) and get the same result in all cases.

I also have a ticket open with support.

If your system team hasn't figured out how to make a shell script that works like the normal old sas command, then ask them to please do so. Our team did not really have any trouble doing it.

You could look at whatever the heck that sasgsub command is. Is it a shell script? If so, look at what actual commands it is running. I suspect it is just a wrapper that calls the normal bsub (or whatever tool you are using to submit to the grid) to send a call to sas.exe to the proper job queue.
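
One way to answer "is it a shell script?" is sketched below for a POSIX shell: resolve the command on the PATH, check whether the file starts with a #! line, and if it does, grep it for the commands it calls. The helper name inspect_cmd and the search pattern bsub|sas are assumptions for illustration; substitute the name of whatever submission tool your grid actually uses.

```shell
#!/bin/sh
# Sketch: find out what a command such as sasgsub actually is and, if it is a
# script, list the lines that mention the grid submitter or SAS.
# inspect_cmd and the 'bsub|sas' pattern are hypothetical; adjust for your site.
inspect_cmd() {
  path=$(command -v "$1") || { echo "$1: not found on PATH" >&2; return 1; }
  echo "$1 resolves to: $path"
  # A regular file whose first two bytes are "#!" is a script we can read.
  if [ -f "$path" ] && head -c 2 "$path" | grep -q '^#!'; then
    echo "It is a script; lines mentioning bsub or sas:"
    grep -n -E 'bsub|sas' "$path"
  else
    echo "It is not a script (likely a compiled binary or a shell builtin)."
  fi
}
```

Define the function in your shell session, then run `inspect_cmd sasgsub` on the jump server.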

If I am deciphering this cryptic help page correctly, it looks like you need to place the actual SAS options inside the -GRIDSASOPTS option of the sasgsub command. Getting the quoting right looks like it might be a pain in the ***.

There should be a sasbatch.sh under the batch server that you could use as a starting point.

Path is likely something similar to /sso/biconfig/940/Lev1/SASApp/BatchServer

A call could then look like:

`<path>/sasbatch.sh -log <path to log directory>/<name of program>_<time directives>.log -batch -noterminal -logparm "rollover=session" -sysin <path to job>/<name of program>.sas`

And you could of course already construct this call within the .sh that you provide to your users so they just need to pass in the path and name of the program.

And eventually add the .sh to a search path so users can just write: `site_batch.sh <path and name of program>`
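
A minimal sketch of such a wrapper, written as a shell function you could place in site_batch.sh, follows. Everything site-specific is an assumption: the name site_batch, the SASBATCH path (taken from the "something similar to" path above), and LOGDIR are placeholders, and the timestamp in the log name comes from the shell's date command rather than from SAS's own log-name directives. Setting DRY_RUN=1 prints the command instead of starting it, which is a convenient way to check the quoting.

```shell
#!/bin/sh
# Sketch of a site wrapper: users pass only the program path; the function
# builds the sasbatch.sh call. SASBATCH and LOGDIR are placeholders (assumptions).
SASBATCH=${SASBATCH:-/sso/biconfig/940/Lev1/SASApp/BatchServer/sasbatch.sh}
LOGDIR=${LOGDIR:-$HOME/saslogs}

site_batch() {
  if [ $# -ne 1 ]; then
    echo "usage: site_batch /path/to/program.sas" >&2
    return 2
  fi
  prog=$1
  name=$(basename "$prog" .sas)
  # Timestamp from the shell's date command, e.g. report_20250101_093000.log
  log="$LOGDIR/${name}_$(date +%Y%m%d_%H%M%S).log"

  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$SASBATCH -log $log -batch -noterminal -logparm rollover=session -sysin $prog"
    return 0
  fi

  mkdir -p "$LOGDIR" || return 1
  echo "log: $log"
  # Started in the background so the terminal stays free for tail on the log.
  "$SASBATCH" -log "$log" -batch -noterminal -logparm "rollover=session" -sysin "$prog" &
}
```

For example, `DRY_RUN=1 site_batch /data/jobs/report.sas` (a hypothetical path) prints the full sasbatch.sh call; without DRY_RUN=1 the job starts in the background and the function prints the log path to follow with tail.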

And last but not least: I normally run my programs via SAS EG/Studio for development or adhoc tasks. I only run them via batch as the very last test before scheduling the scripts.

And if I run via batch, I normally run in the background (sasbatch.sh ..... &, potentially a whole set of scripts together) and then use WinSCP to check the logs later on (I've configured WinSCP to open .log files in Notepad++ when I double-click them in the file list).

@jwilson wrote:

I don’t think this will work since I also tried using the sas command when logging directly into the node, again both with and without proc printto and was getting the same issue. I don’t have an issue writing a shell script for our users but I haven’t found a way to make this work first.

I have lost the thread of the issue you are having. I thought the issue was that the way you were trying to LOOK at the file being written by SAS was not working. I assumed it was because you were using some tool that requires exclusive access to the file, which it obviously cannot have while the file is still being written. Tools like cat and tail should work even while SAS is still writing to the file. (Note that there is a delay before content is actually written to the physical file, caused by SAS buffering the writes. This can be controlled by SAS options, such as the WRITE=IMMEDIATE setting of the LOGPARM= system option.)

Is that your issue or not?

Or is the issue that the GRID nodes running SAS are writing to disks that the users cannot see?

I don't think PROC PRINTTO is the problem (other than making it more difficult to figure out where the heck the SAS job is actually writing).

When something as simple as tail crashes with a core dump, I suspect an issue caused by the underlying (shared?) filesystem. Technical Support is probably your best bet, as we can help with SAS issues, but not with operating system issues.
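
Before (or while) going to Support, it may help to know exactly which filesystem the log lives on, since the same tool can behave differently on a local disk and on a shared or network filesystem. The sketch below assumes GNU coreutils (stat -f, df -P) on the node; fs_report is a hypothetical helper name and the example path in the comment is made up.

```shell
#!/bin/sh
# Sketch: report the filesystem type and mount point for a log file or its
# directory. Assumes GNU coreutils. fs_report is a hypothetical helper, used as
#   fs_report /shared/logs/myfile.log   (made-up example path)
fs_report() {
  target=$1
  [ -e "$target" ] || target=$(dirname "$target")   # fall back to the directory
  echo "path:       $target"
  echo "fs type:    $(stat -f -c %T "$target")"
  # df -P prints one POSIX-format line per filesystem; the last field is the mount point.
  echo "mounted on: $(df -P "$target" | awk 'NR==2 {print $NF}')"
}
```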

I have a track open with Support. So far, they have said that this is expected behavior with SAS logs, so I don't think this is an OS issue. I will run a few more tests to see if I can convince myself of that, and I will report back.
