Today the quality of data is paramount. Every decision, every insight hinges on the reliability and accuracy of the underlying data.

Many of the tools and techniques for improving data quality are locked behind deep technical knowledge and programming skills in different programming languages. What if I told you, it does not have to be that way?

Let me lead you into the world of Entity Resolution, where you will learn about techniques such as Data Identification, Parsing, Standardization, Matching, Clustering and Surviving Records. Entity Resolution attempts to identify different representations of the same data and provides a normalized, standardized master record, which will improve downstream analysis.

Entity Resolution has many different use cases, many of them within Fraud Detection and Anti Money Laundering.

How do we identify the same entity within data and then enhance this entity with information from "contributor" rows, all without writing a single row of code and only using out-of-the-box functionality? Let us get started!

I will be using a table found in Databricks that has some obvious data quality issues, which I will not be able to solve with the tools available in Databricks, unless I have very deep knowledge in SQL and Python programming as well as specific Python packages. You all know by now, how easy it is to connect to Databricks, as explained in my good colleague Cecily Hoffritz's blog:

SAS and Databricks: Your Practical Guide to Data Access and Analysis - SAS Support Communities

The table I will be working with contains customers, both individuals and organizations. To keep it simple, I will focus on the individual customers and how I can find the entities, remove duplicates, standardize the data, and create my master records.

To help me on this journey I will be using the SAS Quality Knowledge Base (QKB) and SAS Studio for engineering. The QKB contains massive amounts of predefined data quality rules and logic and in SAS Studio I can create visual modern data pipelines.

Identification

The first step is to identify whether a customer is an individual or an organization, for which I use the Clean Data Step and its Identification Analysis.

Using the Name column to identify whether it is an Individual or Organization

Using the Name column to identify whether it is an Individual or Organization

Once identified, I use the Branch Rows Step to split individuals and organizations into separate tables.

Parsing

To make any sense of this data, I would need a way of Parsing (dividing) it into tokens (components). I apply different rules on these tokens, as the information in them will vary and must be treated differently.

Fortunately, I have a SAS Studio component (step) called Parse Data, that will do just this for me!

As my data is in Swedish, I will be using the Swedish Locale from the QKB to Parse the columns Name and Address.

From the Name column, I keep the Given Name (Name_GIVENNAME) and Family Name (Name_FAMILYNAME) tokens. Extra tokens such as titles and additional Info, prefix and suffix can also be added, but for this Entity Resolution example they are not needed.

From the Address column, I keep Street Name (Address_STREETNAME), City (Address_CITY), Postal Number and Street Number Tokens. Notice that the Street Name has standardization issues, and there are missing values for City and Postal Number. Let us fix that!

Standardization

For the standardization issues I will use the Clean Data Step and its Standardization capabilities.

Now that I have identified, parsed, and standardized my data, I am halfway through my Entity Resolution process.

Match Code Creation

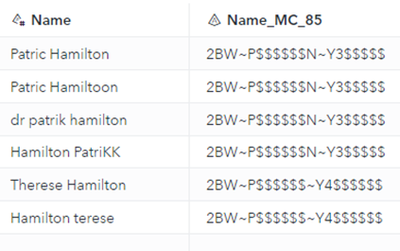

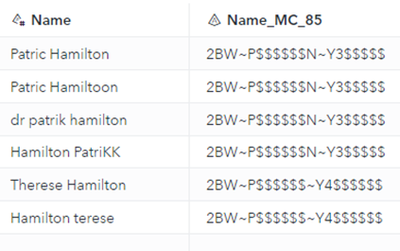

Next step is to create Match Codes. A match code is an encrypted string that represents portions of the original input string. During the match code creation, techniques such as Phonetic Rules, Noise word removal, Standardization and Normalization are used.

You can use different sensitivity levels to determine the amount of information stored in the match code. Use Lower sensitivity levels to sort data into general categories, or higher sensitivity for a closer match.

Phonetic Rules are applied in the matching process and will translate Patric, Patrick, Patrikk to Patrik.

Clustering

With the match codes generated I move on to clustering the data. I will be creating two different clusters where one of them is based on the Match Codes from the Name column, and the other one is based on the combination of Match Codes from Street Name and Street Number. The result of this clustering is that I get a Name cluster and an Address cluster. I will be putting rows with the same Match Code into the same cluster.

For clustering I use a Custom Step called DQ – Clustering that is publicly available on the SAS github for Custom Steps.

Once the clustering process has executed, we can clearly see that we have 2 different persons (NAME_CLUSTER), living on the same address(ADDRESS_CLUSTER).

Master Record Creation

Now it is time for me to create a master record for each person and enhance it with even better information from “Contributor” rows. Looking closer at the Address column, I see that there is missing information, some rows do not have a Postal Number while others are missing information about City. This means that this is cherry picking time where I pick the cherries (values) that suit the best!

To aid me in the cherry-picking process, I use a Custom Step from the SAS Github site called DQ – Surviving Record. This Step allows me to apply rules in the process of creating a surviving (master) record for each entity (Person). As an example, I will let the majority (high occurrence) of “contributor” rows decide which Given Name will be used for each cluster. Also, I am not allowing any missing values in the columns Address_CITY and Address_POSTALNUMBER.

Based on the rules in the DQ – Surviving Record step, values are selected for each cluster, and a Master Record from each cluster will be created.

And once the cherry-picking process is done, I can review the fruit of my labor in my two master records.

Summary

We have now traveled through the Entity Resolution process, all the way from our Databricks table with poor data quality, to a refined result with master records, ready to be used for downstream analytics. And I did not have to write a single row of code!

Of course, In SAS Studio I can access all these capabilities with code as well, if that is what I prefer.

But in the era of democratization, why not democratize functionality as well as data?

Learn more about SAS and Databricks

- Harness the analytical power of your Databricks platform with SAS

- Data everywhere and anyhow! Gain insights from across the clouds with SAS

- Elevated efficiency and reduced cost: SAS in the era of Cloud Adoption

- SAS and Databricks: Your Practical Guide to Data Access and Analysis

- Data to Databricks? No need to recode - get your existing SAS jobs to SAS Viya in the cloud

- Maximize Coding and Data Freedom with SAS, Python and Databricks