
Publish and Run a SAS Scoring Model In Azure Synapse Analytics


In my last post, I wrote about publishing and running a SAS scoring model in Azure Databricks. Let’s focus now on scoring in Azure Synapse Analytics. The overall process is quite similar.

Setup

Like in Azure Databricks, we need to install the SAS Embedded Process in Azure Synapse Analytics. The deployment steps are documented here.

As a reminder, the SAS Embedded Process is a lightweight SAS engine that is deployed on a cluster (here, a Spark pool) and takes advantage of the cluster infrastructure. In practice, it runs SAS code in parallel on the cluster’s distributed data.

Publish the Model

To score data in Azure Synapse, we need to publish the model to ADLS (Azure Data Lake Storage), which Azure Synapse accesses behind the scenes.

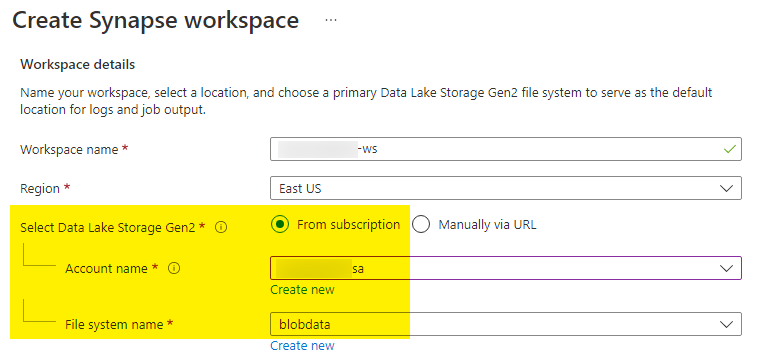

Indeed, when you create an Azure Synapse workspace, you are asked to link an ADLS Gen2 filesystem (blob container) to the workspace. This ADLS container is where the SAS models will be published.


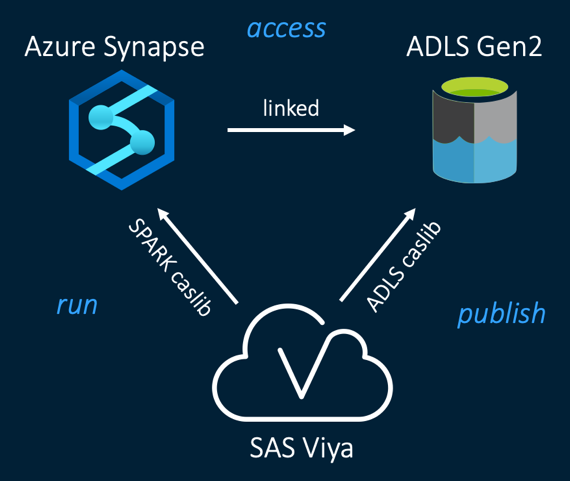

The overall publishing and running process is depicted below:

We need an ADLS caslib to publish a SAS model to ADLS and a Spark caslib to run it in Azure Synapse.

In addition, because Azure Synapse combines data lake (Spark) and data warehouse (SQL Server) capabilities, we might need additional caslibs to manipulate or view data:

- An SQL Server caslib that accesses data lake (Spark) data

  - The Spark caslib mentioned above for running models in Synapse does not yet support accessing Spark data. Thus, if we need to access Spark data, we need an SQL Server caslib.

- An SQL Server caslib that accesses data warehouse (SQL Server) data

To publish a SAS model for consumption in Azure Synapse, I only need an ADLS caslib. This is exactly the same step as for Databricks (see the previous post for more details). The ADLS storage account that we publish to must be the one linked to the Azure Synapse workspace. The code looks like the following:

    /* Used for publishing models to ADLS */
    caslib adls datasource=
    (
       srctype="adls",
       accountname="my-storage-account",
       filesystem="my-container",
       applicationid="my-application-id",
       resource="https://storage.azure.com/",
       dnssuffix="dfs.core.windows.net"
    ) subdirs libref=adls ;

    /* Publish the model */
    proc scoreaccel sessref=mysession ;
       publishmodel
          target=filesystem
          caslib="adls"
          password="my-application-secret"
          modelname="01_gradboost_astore"
          storetables="spark.gradboost_store"
          modeldir="/models"
          replacemodel=yes ;
    quit ;
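One way to sanity-check the publish step is to list what the ADLS caslib can see from the same CAS session. This is a minimal sketch rather than part of the original workflow: it assumes the `adls` caslib defined above is still assigned in the session, and I have not verified exactly what the ADLS data connector returns for this container. If the listing works, look for the `models` directory or its contents.

```sas
/* Sketch only: list the files visible through the ADLS caslib.          */
/* Assumes the "adls" caslib from the publishing step is still assigned. */
proc casutil ;
   list files incaslib="adls" ;
quit ;
```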

Run the Model

To run a SAS model in Azure Synapse, we need a Spark caslib. This Spark caslib just acts as a placeholder for the connection details to the Spark pool in Synapse.

Then we start a continuous Spark session of the SAS Embedded Process. When starting it, we can specify how many resources to allocate to the Spark session in Synapse.

    /* Used for running models in Synapse */
    caslib spark datasource=
    (
       srctype="spark",
       platform=synapse,
       username="my-application-id",
       password="my-application-secret",
       server="synapse-workspace.dev.azuresynapse.net",
       schema="sqlpool",
       hadoopJarPath="/azuredm/access-clients/spark/jars/sas",
       resturl="livy-rest-url",
       bulkload=no
    ) libref=spark ;

    /* Start the SAS Embedded Process */
    proc cas ;
       sparkEmbeddedProcess.startSparkEP caslib="spark" trace=false
          executorInstances=4
          executorCores=4
          executorMemory=56
          driverMemory=32 ;
    quit ;

We can run the model now:

    /* Run the model */
    proc scoreaccel sessref=mysession ;
       runmodel
          target=synapse
          caslib="spark"
          modelname="01_gradboost_astore"
          modeldir="/models"
          intable="hmeq_spark"
          schema="default"
          outtable="hmeq_spark_astore"
          outschema="default"
          forceoverwrite=yes ;
    quit ;

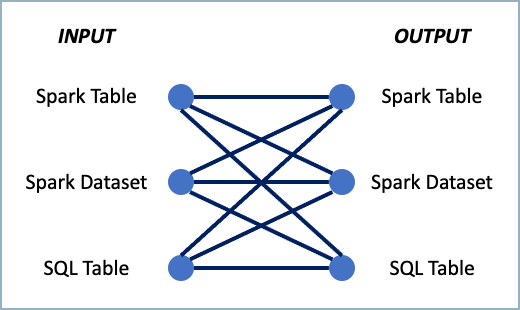

Scoring data in Synapse is very flexible in terms of input and output data objects. As depicted in the following figure, you can take several routes to score data in Synapse from SAS:

The following options drive the type of source/target data structure accessed:

- intable/outtable for Spark tables (single-part names)

- indataset/outdataset for Spark datasets

- intable/outtable with a two-part name ("dbo.hmeq_sql") for SQL tables (the first part is the SQL database schema name)
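To illustrate the options listed above, the sketch below reuses the earlier runmodel call and only switches the input and output. The first call assumes a SQL table `dbo.hmeq_sql` exists in the SQL pool and writes to a hypothetical `dbo.hmeq_sql_astore`. The second assumes a Spark dataset named `dsin` already exists in the continuous Spark session and writes to a hypothetical dataset `dsout`. Any further options these routes may require are not shown, so treat this as a starting point rather than tested code.

```sas
/* Sketch 1: score a SQL table, addressed with two-part names */
proc scoreaccel sessref=mysession ;
   runmodel
      target=synapse
      caslib="spark"
      modelname="01_gradboost_astore"
      modeldir="/models"
      intable="dbo.hmeq_sql"            /* schema.table in the SQL pool   */
      outtable="dbo.hmeq_sql_astore"    /* hypothetical output table name */
      forceoverwrite=yes ;
quit ;

/* Sketch 2: score a Spark dataset held in the continuous session */
proc scoreaccel sessref=mysession ;
   runmodel
      target=synapse
      caslib="spark"
      modelname="01_gradboost_astore"
      modeldir="/models"
      indataset="dsin"       /* assumed to already exist in the Spark session */
      outdataset="dsout" ;   /* hypothetical output dataset name              */
quit ;
```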

You can even interact with the Spark session before or after the model execution, for pre- or post-processing:

    /* Load a filtered Spark table into a Spark dataset */
    proc cas ;
       sparkEmbeddedProcess.executeProgram caslib="spark"
          program="var dsin = spark.table(""default.hmeq_spark"").where($""REASON"" === ""DebtCon"");" ;
    quit ;

The program option accepts user-written Scala code. Once all your models have run, you can stop the continuous Spark session:

    /* Stop the SAS Embedded Process */
    proc cas ;
       sparkEmbeddedProcess.stopSparkEP caslib="spark" ;
    quit ;

Thanks for reading.

Find more articles from SAS Global Enablement and Learning here.
