Monitor your SAS Viya deployment with Lens

No matter the SAS software version, there is one inevitable phenomenon that you will routinely observe if you work as an installation engineer for SAS: you launch a deployment process, and then you must wait… and wait, cross your fingers very hard (maybe pray if you are a believer), observe how things are going and then, at some point, check whether it worked (or not) 😊

The part I’d like to talk about in this post is the "observe how things are going" step, in the context of a Viya 4 deployment.

Spot a deployment issue as soon as it occurs

A standard Viya 4 deployment should take around one hour to complete, sometimes more (depending on the type of order, the latency between the cluster nodes and the image registry, the CPU power of the cluster nodes, etc.).

During this "wait" phase, some colleagues might take the opportunity to grab a coffee or stretch their legs outside the office. But others, maybe a little more anxious (like myself 😊), will want to monitor as closely as possible whether things are REALLY going well…

My colleague @ScottMcCauley explained in his article how to assess the sas-readiness pod to determine if the platform has reached a global “readiness” state where you can tell your users to start connecting to the Viya platform to load and start to crunch their data 😊

But here, I’m more interested in looking at the sequence of events, making sure everything happens as expected between the various parts of the platform that need to collaborate, and identifying any problem as early as possible.

Most of the initial deployment or startup issues can be resolved without having to redeploy everything, so the sooner a problem is detected, the better 😊

Finally, closely monitoring what happens in the cluster when Viya is deployed (or started) really helps to understand how the Viya platform works and how its various components relate to each other.

The sequence

For the moment, there is no defined order for the startup of the various components. All the pods (which contain the Viya services) are submitted to the K8s system at once, when their definitions (manifests) are applied with the "kubectl apply" commands.

Then all the pods will follow a similar process moving from the "pending" to the "running" (and sometimes "completed") state.

You can check the official Kubernetes documentation for details but, basically, pods first wait to be scheduled by K8s on a given node, then each container image of the pod must be pulled (all of this happens during the "pending" phase), and finally the containers are created and started on the node ("running").
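To follow this progression from a terminal rather than from Lens, here is a minimal sketch that counts pods per status. It assumes the default column layout of `kubectl get pods --no-headers` (NAME, READY, STATUS, ...); the `summarize_pod_status` helper is a hypothetical name, and the namespace is the one used elsewhere in this article.

```shell
# Count pods per STATUS (third column of `kubectl get pods --no-headers`).
# Hypothetical helper: it only reads text on stdin, so it never touches the cluster itself.
summarize_pod_status() {
  awk '{ count[$3]++ } END { for (s in count) printf "%s %d\n", s, count[s] }' | sort
}

# Usage (replace the namespace with your own):
#   kubectl -n viya4gcp-auto get pods --no-headers | summarize_pod_status
```

Run it every few minutes: the "Pending" and "ContainerCreating" counts should shrink while "Running" (and "Completed") grow.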

Even though there are hard dependencies between components (for example, many microservices cannot become ready until the sas-logon pod is ready, which in turn requires postgres to be fully up), there is no startup order, because the vast majority of the services can wait and loop until the service they depend on is ready.
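To make the "wait and loop" idea concrete, here is a small shell illustration of the pattern. It is not how the Viya services implement it; the `wait_until` helper and its parameters are hypothetical and only show the principle of retrying a check until a dependency answers.

```shell
# wait_until MAX_ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Hypothetical helper: re-runs COMMAND until it succeeds or the attempts run out.
wait_until() {
  max_attempts=$1
  delay=$2
  shift 2
  attempt=1
  while [ "$attempt" -le "$max_attempts" ]; do
    if "$@"; then
      echo "ready after $attempt attempt(s)"
      return 0
    fi
    # Pause before the next try, but not after the last one.
    if [ "$attempt" -lt "$max_attempts" ]; then
      sleep "$delay"
    fi
    attempt=$((attempt + 1))
  done
  echo "still not ready after $max_attempts attempt(s)" >&2
  return 1
}

# Usage: any command that returns 0 once the dependency is up, for example
#   wait_until 30 10 some_check_command
```

Because every service keeps retrying like this, the order in which the pods start does not matter, only the time it takes for the slowest dependency to come up.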

Note: Although there is no order at the moment, it could be interesting to have one, to avoid losing all the time the services spend waiting. Also, with the current random start and limited capacity, stateless pods could prevent critical infrastructure services (like Crunchy) from starting, which would prevent the platform from reaching a working state.

While there is no pre-determined order in the way pods are started, we know that there are things that need to be there to allow other things to come up.

We'll use this knowledge to know what to look at first, when we want to closely monitor a Viya deployment (or startup).

Demo with Lens (20 min)

In case you were not able to watch the whole video or, maybe, got tired of listening to my French accent 😉, I have provided the key points to take away below.

Recap

What to look at in Lens :

- stateful sets (01:47-3:31) : If something is not working there, your deployment will never complete successfully.

- sas-logon and Crunchy (3:31-8:16): sas-logon will complain about missing JDBC connection until the postgres instances are up and running.

- Storage (8:16-10:32) : make sure all the Persistent Volumes are "bound". If the Viya volumes are not there, your deployment will never complete successfully.

- CAS pods status (17:13-18:02) : CAS is a core component of the platform and it can fail for various reasons, so it is always a good idea to do a sanity check of the CAS pods status as soon as possible.

- sas-readiness pod log (13:34-14:49 and end of the video) : It will tell you when the users can start to log in.

We often use this handy command to monitor the sas-readiness log in real time:

watch -c -n 20 'kubectl -n viya4gcp-auto logs --selector=app.kubernetes.io/name=sas-readiness | tail -n 1'

It displays different kinds of messages depending on the deployment stage.

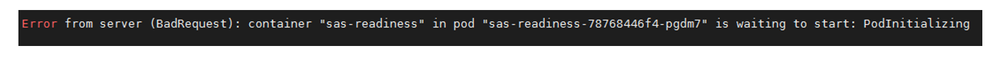

At the very beginning it just says that the sas-readiness pod is still initializing…

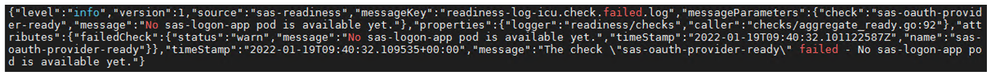

Once the sas-readiness image has been pulled and the container has started, the log says that sas-logon is not up yet.

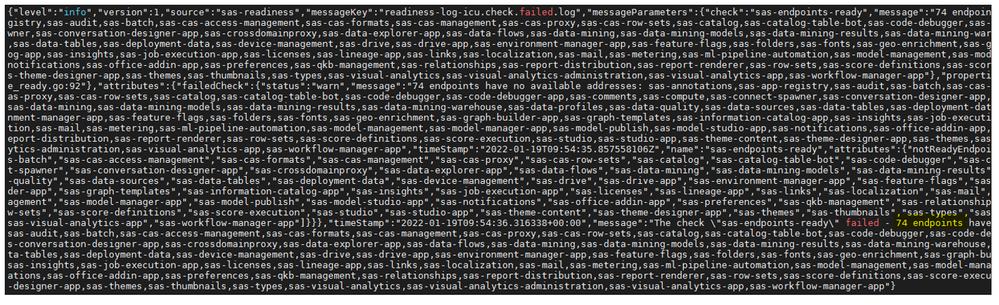

Then it tells us how many endpoints are still not available (74 in this example)

Finally, the number of unavailable endpoints should slowly decrease and, at the end, when all the endpoints are responding positively, the log informs us that all checks passed and for how long it has been testing the endpoints (41 minutes in the example below).

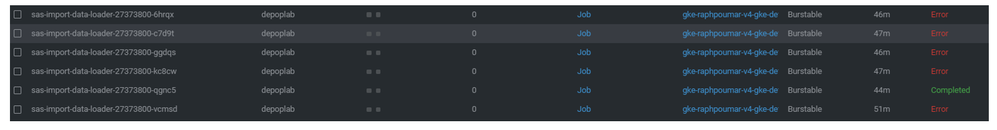
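If you prefer a command that simply blocks until the platform is ready instead of watching the log, a minimal sketch with `kubectl wait` could look like this. It assumes that the "Ready" condition of the sas-readiness pod reflects the result of its checks; the `wait_for_readiness` wrapper and the one-hour default timeout are my own choices for the example.

```shell
# wait_for_readiness NAMESPACE [TIMEOUT]
# Hypothetical wrapper: blocks until the sas-readiness pod reports Ready,
# or fails when the timeout (default: one hour, an arbitrary choice) expires.
wait_for_readiness() {
  namespace=$1
  timeout=${2:-3600s}
  kubectl -n "$namespace" wait pod \
    --selector=app.kubernetes.io/name=sas-readiness \
    --for=condition=ready \
    --timeout="$timeout"
}

# Usage (replace the namespace with your own):
#   wait_for_readiness viya4gcp-auto && echo "Users can start to log in"
```

Note that `kubectl wait` returns an error right away if no pod matches the selector yet, so run it once the manifests have been applied.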

- Pods with "Error" : Sometimes we can see pods with the "Error" status, but they are not necessarily a problem. For example, the sas-import-data-loader job may need several attempts to run successfully: the failed attempts remain listed with the "Error" status even though the last attempt was successful.

Conclusion

The 2 main challenges when monitoring a deployment (or a restart of the platform services) are 1) to distinguish real errors from errors related to the synchronization of the services, and 2) to account for the fact that durations are relative (depending on your combination of hardware and licensed software, a specific task taking more than 5 minutes could either be normal or indicate a problem).

What should NOT worry you, and could be perfectly normal during the deployment or startup phase:

- Startup probe failed

- Errors in the container’s logs

- sas-logon pod taking time to start up

- sas-logon complaining about no postgres database found (...unless there is a real issue with the postgres database)

- A lot of pods that were in the "ready" state are now in the "pending" state (this means that they are being restarted on other nodes but, thanks to the "RollingUpdate" strategy of the Viya deployments, the service remains available and the initial pod is only terminated once the new instance is ready).

What should worry you:

- Error messages in the container logs that do not go away after a little while!

- Kubernetes "CrashLoopBackOff" or "Error" status that never goes away.

- Kubernetes pods that stay in the "Pending" state forever.

- Kubernetes error statuses ("CreateContainerConfigError", "CreateContainerError", etc.)

- Unbound persistent volumes

- A deployment that takes more than 2 or 3 hours (unless it is explained by long times to pull the images because of network slowness or a lot of latency between the cluster and the registry)
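The checks above can also be sketched as two small filters for the command line. They assume the default column layout of `kubectl get pods --no-headers` (STATUS in the third column) and of `kubectl get pvc --no-headers` (STATUS in the second column); the helper names are hypothetical, and I look at Persistent Volume Claims here because they are listed per namespace.

```shell
# Print pods whose STATUS is one of the states listed above as worrying.
# Hypothetical helper: reads the output of `kubectl get pods --no-headers` on stdin.
flag_worrying_pods() {
  awk '$3 ~ /^(CrashLoopBackOff|Error|Pending|CreateContainerConfigError|CreateContainerError)$/ { print $1 ": " $3 }'
}

# Print Persistent Volume Claims whose STATUS is anything other than "Bound".
# Hypothetical helper: reads the output of `kubectl get pvc --no-headers` on stdin.
flag_unbound_pvcs() {
  awk '$2 != "Bound" { print $1 ": " $2 }'
}

# Usage (replace the namespace with your own):
#   kubectl -n viya4gcp-auto get pods --no-headers | flag_worrying_pods
#   kubectl -n viya4gcp-auto get pvc --no-headers | flag_unbound_pvcs
```

A single snapshot is not enough to conclude anything: a pod listed here is only a real concern if it is still listed after several runs a few minutes apart.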

Thanks for reading !

Find more articles from SAS Global Enablement and Learning here.
