- Home

- /

- SAS Communities Library

- /

- Getting Started With Snowflake Database - Making it Faster

- RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

Getting Started With Snowflake Database - Making it Faster

- Article History

- RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

You may have heard that SAS is developing a new SAS/ACCESS engine for Snowflake. If you haven't, let me be the first to tell you that SAS is developing a new SAS/ACCESS engine for Snowflake!

In the meantime, we will be using SAS/ACCESS Interface to ODBC (or the new JDBC product) to access our Snowflake data. It is going to be fun but it is more fun to know what I am about to show you.

Let's start with some SAS code:

libname snow odbc complete="DRIVER={SnowflakeDSIIDriver};

SERVER=mysnowflakeaccount.snowflakecomputing.com;

WAREHOUSE=mywarehouse;

UID=myuser;

PWD=mypassword;

DATABASE=mydatabase;"

SCHEMA=myuser;

/* Let's load the cars data into snowflake */

data snow.cars;

set sashelp.cars;

run;

Here is what that happens:

175 libname snow odbc complete="DRIVER={SnowflakeDSIIDriver};

176 SERVER=mysnowflakeaccount.snowflakecomputing.com;

177 WAREHOUSE=mywarehouse;

178 UID=myuser;

179 PWD=mypassword;

180 DATABASE=mydatabase;"

181 SCHEMA=myuser;

NOTE: Libref SNOW was successfully assigned as follows:

Engine: ODBC

Physical Name:

182 data snow.cars;

183 set sashelp.cars;

184 run;

NOTE: SAS variable labels, formats, and lengths are not written to DBMS tables.

NOTE: There were 428 observations read from the data set SASHELP.CARS.

NOTE: The data set SNOW.CARS has 428 observations and 15 variables.

NOTE: DATA statement used (Total process time):

real time 44:24.54

cpu time 1.07 seconds

44 minutes and 25 seconds is a lot of time! It's crazy. Yes, I went to lunch while this was running. This is the first experience that SAS users had with Snowflake. If you have ever heard complaints, this is why.

Let's try something different:

libname snow odbc complete="DRIVER={SnowflakeDSIIDriver};

SERVER=mysnowflakeaccount.snowflakecomputing.com;

WAREHOUSE=mywarehouse;

UID=myuser;

PWD=mypassword;

DATABASE=mydatabase;"

SCHEMA=myuser

dbcommit=10000 autocommit=no

readbuff=200 insertbuff=200;

/* Let's load the cars data into snowflake */

data snow.cars2;

set sashelp.cars;

run;

And the results are (much better)!:

185 libname snow odbc complete="DRIVER={SnowflakeDSIIDriver};

186 SERVER=mysnowflakeaccount.snowflakecomputing.com;

187 WAREHOUSE=mywarehouse;

188 UID=myuser;

189 PWD=mypassword;

190 DATABASE=mydatabase;"

191 SCHEMA=myuser

192 dbcommit=10000 autocommit=no

193 readbuff=200 insertbuff=200;

NOTE: Libref SNOW was successfully assigned as follows:

Engine: ODBC

Physical Name:

194 data snow.cars2;

195 set sashelp.cars;

196 run;

NOTE: SAS variable labels, formats, and lengths are not written to DBMS tables.

NOTE: There were 428 observations read from the data set SASHELP.CARS.

NOTE: The data set SNOW.CARS2 has 428 observations and 15 variables.

NOTE: DATA statement used (Total process time):

real time 10.70 seconds

cpu time 0.07 seconds

The key take-away is this. When using SAS/ACCESS Interface to ODBC with Snowflake it is a great idea to add the following options:

- DBCOMMIT=

- AUTOCOMMIT=no

- READBUFF=

- INSERTBUFF=

The heavy hitters are READBUFF= and INSERTBUFF=. It is important to avoid the "bigger is better" trap. Notice the I set the values to 200; a simple guess. I am pretty sure that I could make this faster. It is possible that setting the values to a smaller numbers would improve performance.

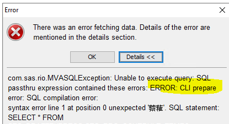

If you are using Linux/UNIX you may encounter issues with character sets. If you run code and see something similar to this you will need to change your encoding.

OPTIONS NONOTES NOSTIMER NOSOURCE NOSYNTAXCHECK;

72

73 proc sql;

74 select * from someuser.persons;

ODBC: AUTOCOMMIT turned ON for connection id 0 4 1544636964 no_name 0 SQL (2)

5 1544636964 no_name 0 SQL (2)

ODBC_1: Prepared: on connection 0 6 1544636964 no_name 0 SQL (2)

SELECT * FROM SOMEUSER.PERSONS 7 1544636964 no_name 0 SQL (2)

8 1544636964 no_name 0 SQL (2)

ERROR: CLI prepare error: SQL compilation error:syntax error line 1 at position 0 unexpected '䕓䕌'.

SQL statement: SELECT * FROM SOMEUSER.PERSONS.

NOTE: PROC SQL set option NOEXEC and will continue to check the syntax of statements.

75

76 OPTIONS NONOTES NOSTIMER NOSOURCE NOSYNTAXCHECK;

Here is the fix for the encoding issue (your sasv9.cfg file may be in a different location).

Modify the /opt/sas/viya/config/etc/workspaceserver/default/sasv9.cfg file to add the following:

-encoding latin1

I hope this helps.

Best wishes,

Jeff

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

@JBailey - appreciate the tips. Looking to connect SAS to Snowflake but JDBC seems to be the better choice as SAS is running on AIX. You reference the new SAS/ACCESS JDBC product - have you been able to run the same test using JDBC? Assuming similar results but given the ODBC connector has been around longer, it's probably been run through the paces a bit more. Appreciate any guidance on this.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

This is exciting. this allows users to use compute power of Snowflake for data manipulation and use analytical power from SAS for analytics(pure statistics). Doesany statistical procs supports indb processing in snowflake?

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Hi @cfranklin, I haven't run the same tests with JDBC. I should really do that but, I don't know when I would get to it.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Hi @umeshMahajan, neither SAS/ACCESS Interface to ODBC nor SAS/ACCESS Interface to JDBC support SQL In-Database procedure push-down.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

@umeshMahajan : We are also facing similar issue while trying to access Snowflake table from SAS using ODBC connection. It was working fine till the time SAS encoding was latin1 but once we have changed it to UTF-8 this particular issue pops up.

Please let me know if you have any suggestions to overcome this issue.

Thanks in advance,

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

@saurabh15 I don't know much of this particullar ODBC connector but this kind of issues generally appear when the ODBC connection configuration and the SAS configuration, in regards of encoding, are misaligned, specifically if SAS goes to double-byte (UTF-8) but the ODBC connection stays in single-byte (as latin), making the ODBC connection a bottleneck in regards of encoding.

I would like to suggest to check and change the configuration of the ODBC connection itself to a double-byte (as standard), generally in odbc.ini and/or odbcinst.ini, then test the SAS UTF-8 code again.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

@JuanS_OCS Thanks for the suggestion. I will try this out and will get back to you.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

@saurabh15 let us know!

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Please has anyone try sas/snowflake odbc connection for bulk load using snowflake stage? When I tried I am getting invalid option name BL_STAGE.

Here is an excerpt of my code. Thanks.

data snow.table_stage (bulkload=yes bl_use_pipe=no bl_stage="user/test1" BL_COMPRESS=yes);

set tablex;

run;

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

I suspect bulk loading isn't supported with the generic ODBC driver: SAS Help Center: Bulk Loading for ODBC

You most likely need SAS/ACCESS to Snowflake for this.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Thanks, SASkiwi. Please what is the difference between SAS/ACCESS to Snowflake and SAS with generic ODBC My basic understanding now is that SAS with generic ODBC works with client sas base whereas SAS/ACCESS to Snowflake works with sas viya.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

SAS/ACCESS to Snowflake works with both SAS 9.4 and Viya, as does ODBC. It's a question as to whether you want the additional features a custom interface provides.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Hi @awbadam

SAS/ACCESS Interface to Snowflake works with both SAS Viya and SAS 9.4.

The differences between the Snowflake product and generic ODBC product are discussed in this paper.

https://www.sas.com/content/dam/SAS/support/en/sas-global-forum-proceedings/2020/4103-2020.pdf

Best wishes,

Jeff

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Hi Jeff

I have checked your post of - connecting SAS to Snowflake with ODBC and tried the same with JDBC

libname snow jdbc complete="DRIVER={SnowflakeDSIIDriver};

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

The JDBC engine error suggests that you don't have the SAS/ACCESS Interface to JDBC installed and / or licensed. You can check this by running this code:

proc product_status;

run;

proc setinit;

run;Do you not have SAS/ACCESS Interface to Snowflake? That would be the best option to try.

Catch up on SAS Innovate 2026

Nearly 200 sessions are now available on demand with the SAS Innovate Digital Pass.

Explore Now →SAS AI and Machine Learning Courses

The rapid growth of AI technologies is driving an AI skills gap and demand for AI talent. Ready to grow your AI literacy? SAS offers free ways to get started for beginners, business leaders, and analytics professionals of all skill levels. Your future self will thank you.