Re: Out-of-Sample Forecasting with Unobserved Components Model

I have a data set of 54 data points. My objective is to (1) decompose my data into trend and cycles using UCM and (2) forecast. Since I only have 54 data points, is it necessary for me to hold out some sample points for out-of-sample forecasting? Or is it acceptable to first estimate my model using all the data points and then use the entire sample to forecast?

It is generally preferable to utilize a hold out (or "test") sample, but of course, you don't always have as many historical data points as you'd like.

Rob Hyndman provides useful guidance on sizing your "training" and "test" data sets in the article "Measuring Forecast Accuracy" that appears in the new book Business Forecasting: Practical Problems and Solutions (Wiley, 2015). You can also check out his online text at www.otexts.org/fpp/2/5.

Per Hyndman, test data is typically about 20% of the total sample, and ideally is at least as large as the maximum forecast horizon required. So if you have monthly data and need to forecast one year ahead, your 54 observations are enough to put 42 points in the training data and the most recent 12 points in the test data. This should work fine for monthly data, since the training data then covers more than three full yearly cycles.
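
The arithmetic behind that 42/12 split can be sketched in a short DATA step. Taking the larger of "about 20%" and the forecast horizon is my own way of combining the two guidelines, not a rule stated in the article, and the variable names are only illustrative.

```sas
/* Sketch: size the test set as the larger of ~20% of the sample  */
/* and the required forecast horizon (an assumed combining rule). */
data _null_;
   n       = 54;   /* total observations                          */
   horizon = 12;   /* maximum forecast horizon, here 12 months    */
   n_test  = max(ceil(0.2 * n), horizon);  /* max(11, 12) = 12    */
   n_train = n - n_test;                   /* 54 - 12 = 42        */
   put n_train= n_test=;                   /* writes both to log  */
run;
```

With these inputs the 20% guideline alone would give 11 points, so the 12-month horizon is what sets the size of the test set.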

If you have weekly or daily data, you might want to use time series cross-validation, which is described in the article and also discussed in this blog post: http://blogs.sas.com/content/forecasting/2016/03/18/rob-hyndman-measuring-forecast-accuracy/

Time series cross-validation is a good method when you don't have enough historical data, and can't afford to split off an adequately sized test set. Udo Sglavo wrote about implementing time series cross-validation in SAS in this series of blog posts:

- http://blogs.sas.com/content/forecasting/2011/09/01/come-on-irene-cross-validation-using-sas-forecas...

- http://blogs.sas.com/content/forecasting/2011/09/02/guest-blogger-udo-sglavo-on-cross-validation-usi...

- http://blogs.sas.com/content/forecasting/2011/09/06/guest-blogger-udo-sglavo-on-cross-validation-usi...

Hi:

The typical practice, followed for example by Forecast Server, is to estimate the model first with in-sample data, select a model, then reestimate the model parameters using all data.

Usually, you want a hold-out period that is at least as long as your forecasting horizon, and you want to leave enough data in your sample to estimate your parameters accurately. Exactly what that means depends a lot on your data. If you have weekly data and want to forecast one year ahead, 54 data points are not even enough for a model with a yearly cycle, let alone a hold-out sample. If you have yearly data with low noise, no cycles, and a clearly defined trend, you most likely can use a hold-out sample of 20% of your data with impunity.
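
As a rough sketch of that two-step practice in PROC UCM: the BACK= option on the ESTIMATE statement leaves the last observations out of parameter estimation, and BACK= on the FORECAST statement treats them as unobserved when forecasting. The data set `mydata`, the variables `date` and `y`, the horizon of 8, and the trend-plus-irregular model are all placeholders, not anything from the original question.

```sas
%let h = 8;   /* hold-out length = forecast horizon (placeholder) */

/* Step 1: estimate without the last &h observations, then        */
/* forecast over the held-out span to compare with the actuals.   */
proc ucm data=mydata;
   id date interval=year;
   model y;
   irregular;
   level;
   slope;
   estimate back=&h;
   forecast back=&h lead=&h outfor=holdout_fc;
run;

/* Step 2: once a model is selected, reestimate it on all the     */
/* data and produce the real forecasts.                           */
proc ucm data=mydata;
   id date interval=year;
   model y;
   irregular;
   level;
   slope;
   estimate;
   forecast lead=&h outfor=final_fc;
run;
```

Repeating step 1 for each candidate model and comparing the hold-out errors is what drives the selection; step 2 is run only for the winner.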

AIC has often been used for model selection when out-of-sample analysis is not practical, because it is asymptotically equivalent to minimizing the one-step-ahead MSE. You need to use some caution when comparing models using AIC, but if you are limiting yourself to the class of UCM models you should be OK.

For more details about the use of AIC in time series forecasting, see these comments by Rob Hyndman:

http://robjhyndman.com/hyndsight/aic/
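
A sketch of how an AIC comparison between two candidate UCMs might look in SAS, fitting both to the same observations and capturing the likelihood-based fit statistics with ODS OUTPUT. The data set `mydata`, the variables `date` and `y`, and the two component specifications are placeholders. `FitSummary` is the ODS table name as I recall it from the PROC UCM documentation, so confirm it with `ods trace on` before relying on it.

```sas
/* Candidate A: trend + irregular (placeholder specification) */
ods output FitSummary=fit_a;   /* table name is an assumption */
proc ucm data=mydata;
   id date interval=year;
   model y;
   irregular;
   level;
   slope;
   estimate;
run;

/* Candidate B: the same model plus a cycle */
ods output FitSummary=fit_b;
proc ucm data=mydata;
   id date interval=year;
   model y;
   irregular;
   level;
   slope;
   cycle;
   estimate;
run;

/* Compare the AIC rows of the two tables side by side */
proc print data=fit_a; run;
proc print data=fit_b; run;
```

The comparison is only meaningful when both models are estimated on exactly the same span of data.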

Thank you for the replies. The blog was helpful. My data is an annual time series from 1958 to 2012, and I want to forecast through 2020. This is what I understood:

- I should use data points 1958 to 2004 to estimate my model (I am using UNOBSERVED COMPONENTS MODEL)

- Then I should use the same model to forecast from 2005 to 2012 and compare the actual and the forecasted value.

- Then, based on the best-performing model, I should make forecasts from 2012 to 2020 using the same model (estimated using data points 1958 to 2004).

Is this procedure right?

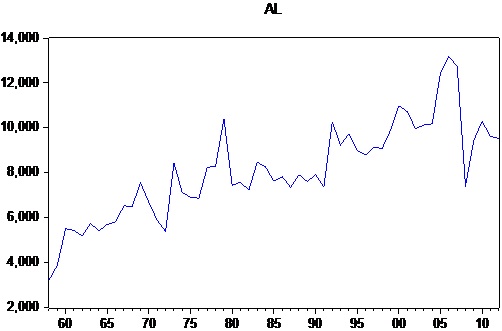

However, my data shows extreme values from 2004 through 2009. I am attaching my data and the graph.

Using a holdout sample when there is a disturbance at the end of the series is always tricky business, especially if the disturbance represents a structural break. In that case, the model you estimate with your training data is of little use for forecasting the data after the break. Does your series behave differently after the 2008 recession? You probably need to answer that question before you consider using a hold-out sample.

My colleague suggests the following models to detect whether there is a level shift.

data check;

input y @@;

date = intnx('year', '01jan1958'd, _n_-1);

format date date.;

shift08 = year(date) >= 2008;

datalines;

3230.238004

3880.27782

5514.404608

5444.358113

5185.454737

5743.991179

5414.308928

5707.274559

5815.515973

6530.864179

6510.614764

7558.541769

6675.955715

5896.869334

5386.803788

8433.694569

7131.877172

6904.044902

6871.351978

8244.785764

8291.976594

10396.47307

7443.580244

7583.28

7229.149372

8471.909654

8260.934809

7634.038233

7825.882353

7361.713167

7915.309201

7616.26382

7909.621007

7357.098918

10272.38638

9232.976

9744.024933

8976.652994

8796.254019

9133.507813

9086.751497

9902.849854

10982.60692

10724.79418

9961.156651

10127.82939

10175

12464.84375

13182.99445

12721.34039

7407.807309

9458.164094

10289.00846

9612.903226

9535.010197

.

.

.

.

.

.

.

.

;

proc sgplot data=check;

series x=date y=y;

run;

*Detects a level shift starting from 2008;

*simple y = smooth trend + cycle + error model;

*the model seems OK based on residual plots;

proc ucm data=check;

id date interval=year;

model y;

irregular plot=smooth;

level variance=0 noest checkbreak ;

slope;

cycle plot=smooth;

estimate plot=panel;

forecast plot=decomp;

run;

*simple y = shift08 + smooth trend + cycle + error model;

* because of the dummy shift08, very few residuals to do

residual diagnostics. However, information criteria seem

to say that the model does improve on the previous one;

proc ucm data=check;

id date interval=year;

model y = shift08;

irregular plot=smooth;

level variance=0 noest checkbreak ;

slope;

cycle plot=smooth;

estimate plot=panel;

forecast plot=decomp;

run;

Thank you very much for your reply. My data has two breaks, detected in 1973 and in 1992. I include step intervention variables for them and run the model:

proc ucm data = metals;

id year interval = year;

model AL=BREAK1973 BREAK1992;

irregular;

level variance=0 noest Plot=smooth;

slope;

cycle;

cycle;

estimate plot=all;

run;

However, I am stuck on the forecasting. I am not sure whether I should hold out sample points (my data pertains to commodity markets, and since the mid-2000s markets have been volatile).

Can I estimate my model using the whole sample (1958 to 2012) and then forecast from 2008 through 2020, using the code given below? Is there any other option that I can use?

proc ucm data = metals;

id year interval = year;

model AL=BREAK1973 BREAK1992;

irregular;

level variance=0 noest Plot=smooth;

slope;

cycle;

cycle;

estimate plot=all;

forecast back=5 lead=13 plot=decomp;

run;

Thank you
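
For reference, a hedged reading of the last step above: as I understand the PROC UCM documentation, `forecast back=5 lead=13` treats the last five observations (2008 to 2012) as unobserved when forecasting and then runs 13 steps ahead, through 2020, while the parameters are still estimated from the whole 1958 to 2012 sample because the ESTIMATE statement has no BACK= option. A variant that also keeps 2008 to 2012 out of estimation, so that the comparison is genuinely out of sample, might look like this (same data set and variables as in the post; only the BACK= and LEAD= values are changed):

```sas
proc ucm data = metals;
   id year interval = year;
   model AL = BREAK1973 BREAK1992;
   irregular;
   level variance=0 noest plot=smooth;
   slope;
   cycle;
   cycle;
   /* parameters estimated from 1958-2007 only */
   estimate back=5 plot=all;
   /* forecasts for 2008-2012, to compare with the actual values */
   forecast back=5 lead=5 plot=decomp;
run;
```

Because the model has regressors, any forecast that extends past 2012 needs rows for those future years in `metals`, with BREAK1973 and BREAK1992 filled in and AL left missing.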
