- Home

- /

- SAS Communities Library

- /

- How to read JSON data in SAS

- RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

How to read JSON data in SAS

- Article History

- RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

The number of SAS users who need to access JSON data has skyrocketed, thanks mainly to the proliferation of REST-based APIs and web services. JSON is also increasingly used as a standard for data exchange among widely used systems (such as FHIR for health data). The JSON libname engine is the recommended method for reading JSON data in SAS.

The basic use of the JSON engine looks like this:

filename mydata "/path/my-json-file.json";

libname myjson JSON fileref=mydata;

proc datasets lib=myjson; quit;You must use the FILENAME statement to create a fileref for the JSON file. In the LIBNAME statement, the JSON keyword and fileref=option indicate that you want SAS to read the JSON file as data. In the above example, PROC DATASETS is used to explore the structure of the data as SAS interprets it.

Notes:

- The JSON libname engine is a read-only engine. To convert SAS data to JSON, use PROC JSON.

- The JSON engine can read data only from a fileref, not from literal string values or from variables in a table. If you have JSON data from another source (such as a field in a database), you must first write the content to a file so the JSON engine can process it.

- The JSON engine requires complete, valid JSON in order to map the data into SAS. JSON snippets, or variations such as JSONL (multiple JSON objects in a single file, one per line), require preprocessing to build valid JSON before the engine can read them.

- JSON is almost always UTF-8 encoded text (so it supports national characters and even extended characters such as emojis). Therefore, you'll have the best success when using SAS with Unicode support, or ENCODING=UTF8 enabled. This is the default in SAS Viya.

How the JSON engine maps objects to tables

The JSON libname engine reads a valid JSON file and maps the content to one or more SAS data tables. As JSON data often represents a nested series of objects, the JSON engine creates these tables with relational keys that you can use to combine the data to fit your needs. The following example illustrates how the JSON engine interprets the sample JSON data included in the Wikipedia article about JSON. First, here's the SAS program to create and read the JSON:

filename wiki temp;

/* Sample JSON from Wikipedia */

data _null_;

file wiki;

infile datalines;

input;

put _infile_;

datalines;

{

"firstName": "John",

"lastName": "Smith",

"isAlive": true,

"age": 27,

"address": {

"streetAddress": "21 2nd Street",

"city": "New York",

"state": "NY",

"postalCode": "10021-3100"

},

"phoneNumbers": [

{

"type": "home",

"number": "212 555-1234"

},

{

"type": "office",

"number": "646 555-4567"

}

],

"children": [

"Catherine",

"Thomas",

"Trevor"

],

"spouse": null

}

;

run;

libname data JSON fileref=wiki;

proc datasets lib=data nolist nodetails;

contents data=_all_;

quit;

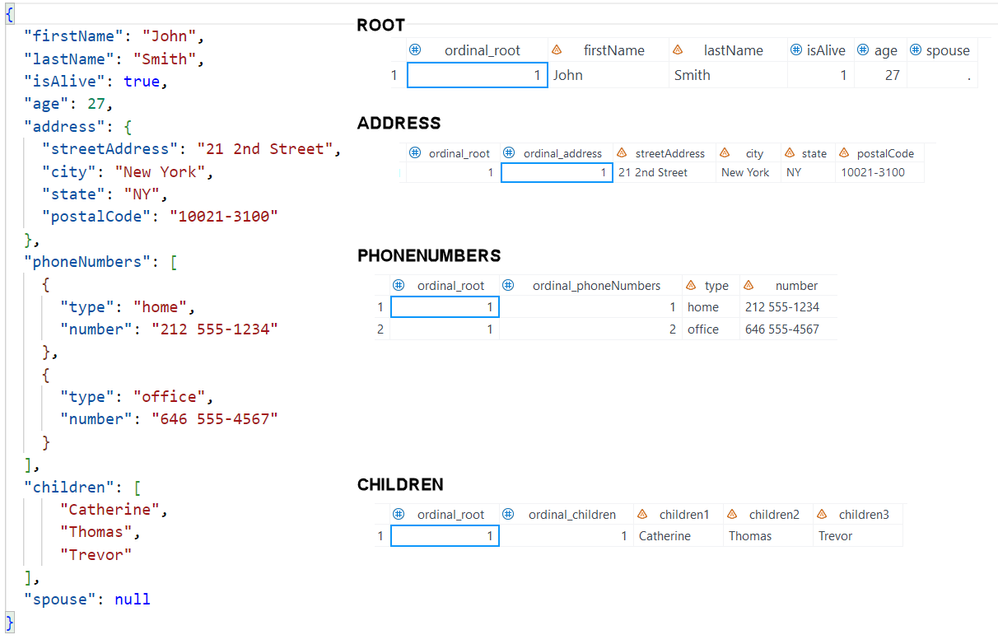

Here's the default mapping created by the JSON engine:

This example illustrates several of the default behaviors of the JSON engine:

- The engine creates a table named ROOT to hold the data that doesn't appear under another nested object.

- JSON data types lsuch as boolean (true/false) and null are converted to the SAS native values (0 or 1 for boolean, missing value (.) for null).

- The JSON engine adds key columns with the "ordinal_" prefix so you can combine these relational tables if needed.

- Name/value pairs are converted to SAS variables with values in the rows. JSON arrays (such as the "children" object in this example) are converted to a series of variables with the root name and a numerical suffix (children1, children2, children3).

With these related object tables, you can use DATA step or PROC SQL to combine the pieces for further analysis and reporting.

Example: Using SQL to combine JSON tables

For example, suppose you want to create a table with names (in the ROOT table) and all phone numbers (from the PHONENUMBERS table). In this example, ordinal_root is the common key that facilitates an inner join clause to combine the records.

proc sql;

create table phNumbers as select

t1.LastName, t1.firstName,

t2.type as phType, t2.number as phNumber

from data.root t1 inner join data.phonenumbers t2

on (t1.ordinal_root = t2.ordinal_root);

quit;

Output:

last first

Obs Name Name phType phNumber

1 Smith John home 212 555-1234

2 Smith John office 646 555-4567

Example: Using DATA step to combine JSON tables

Suppose we want to transform the JSON array of "children" associated with the root names, but create output with one record per child. We can use DATA step merge and a DATA step array to convert the "wide" data to long:

data children (keep=lastName firstName childName);

merge data.root data.children;

by ordinal_root;

array c{*} children:;

do i = 1 to dim(c);

childName = c[i];

output;

end;

run;

Output:

first last

Obs Name Name childName

1 John Smith Catherine

2 John Smith Thomas

3 John Smith Trevor Using the ALLDATA data set in a JSON library

In addition to the mapped "object" tables. the JSON engine creates the ALLDATA member that provides access to all of the JSON data in a single table. For the Wikipedia JSON example, the ALLDATA member looks like this:

Obs P P1 P2 V Value

1 1 firstName 1 John

2 1 lastName 1 Smith

3 1 isAlive 1 true

4 1 age 1 27

5 1 address 0

6 2 address streetAddress 1 21 2nd Street

7 2 address city 1 New York

8 2 address state 1 NY

9 2 address postalCode 1 10021-3100

10 1 phoneNumbers 0

11 2 phoneNumbers type 1 home

12 2 phoneNumbers number 1 212 555-1234

13 1 phoneNumbers 0

14 2 phoneNumbers type 1 office

15 2 phoneNumbers number 1 646 555-4567

16 1 children 0

17 2 children children1 1 Catherine

18 2 children children2 1 Thomas

19 2 children children3 1 Trevor

20 1 spouse 1 null

The ALLDATA data set contains these variables:

- P is a number that shows how many of the P1-Pn variables contain information for this observation

- P1–Pn are character values. There will be one "Pn" level variable for each nested level of the object represented.

- V is a number indicating whether the current observation contains a value (1 = yes, this record has a value)

- Value is a character value with the raw (untransformed) JSON value. For example, in the above example the value of "spouse" is null, not missing as it appears in the mapped ROOT table. "isAlive" is "true", not 1 as it appears in the mapped table.

The ALLDATA table is useful to process JSON data that doesn't map neatly to relational object tables, or for cases where you need just one or two values from the JSON data.

Example: Using PROC SQL and the ALLDATA table for summary operations

This PROC SQL step creates two macro variables: one with the number of "isAlive" records, and another with the total number of "children" records.

proc sql noprint;

select count(*) into :personsAlive

from data.alldata t1

where t1.v=1 and p1="isAlive" and value="true";

select count(*) into :numChildren

from data.alldata t1

where t1.v=1 and p1="children";

quit;

%put &=personsAlive &=numChildren;

Output:

PERSONSALIVE= 1 NUMCHILDREN= 3

Read more about the ALLDATA table in the JSON engine documentation.

Use JSON map files for greater control

While you can use post-processing techniques like PROC SQL and DATA step to create the data sets you need from JSON inputs, it's also possible to define these table structures "up front" by using a JSON map file. The JSON map file tells the JSON libname engine how to interpret the JSON inputs and types to create the tables that you ultimately need.

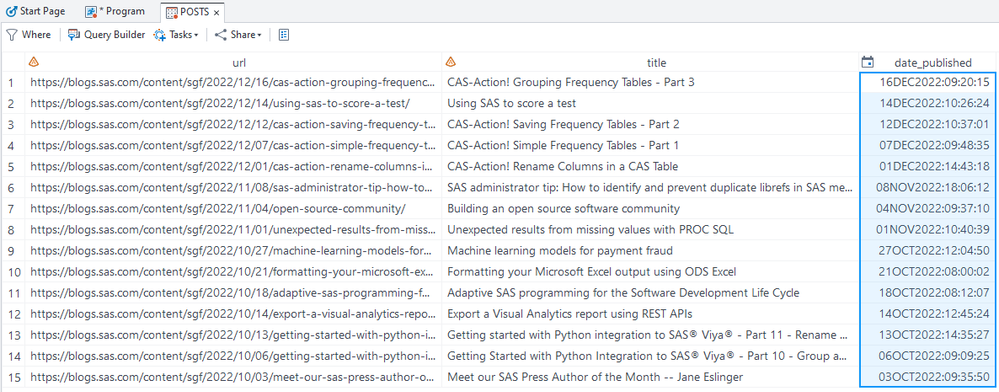

The following example reads the JSON-formatted feed from The SAS Users blog at blogs.sas.com. By adding the map and automap=create option, SAS will create a JSON map file that describes the data sets and fields in the JSON input -- the default mapping as SAS sees it.

filename blogs temp;

filename map '/home/blogs.map';

proc http

url="https://blogs.sas.com/content/sgf/feed/json"

out=blogs;

run;

libname sgfblog JSON fileref=blogs map=map automap=create;

With the automatic map, the JSON engine interprets these tables in the library:

ALLDATA DATA

ITEMS DATA

ITEMS_AUTHOR DATA

ITEMS_AUTHORS DATA

ITEMS_TAGS DATA

ROOT DATA

The generate map file stored in blogs.map is over 300 lines long. Here's an excerpt of just the ITEMS table:

"DSNAME": "items",

"TABLEPATH": "/root/items",

"VARIABLES": [

{

"NAME": "ordinal_root",

"TYPE": "ORDINAL",

"PATH": "/root"

},

{

"NAME": "ordinal_items",

"TYPE": "ORDINAL",

"PATH": "/root/items"

},

{

"NAME": "id",

"TYPE": "CHARACTER",

"PATH": "/root/items/id",

"CURRENT_LENGTH": 42

},

{

"NAME": "url",

"TYPE": "CHARACTER",

"PATH": "/root/items/url",

"CURRENT_LENGTH": 136

},

{

"NAME": "title",

"TYPE": "CHARACTER",

"PATH": "/root/items/title",

"CURRENT_LENGTH": 98

},

{

"NAME": "content_html",

"TYPE": "CHARACTER",

"PATH": "/root/items/content_html",

"CURRENT_LENGTH": 23646

},

{

"NAME": "content_text",

"TYPE": "CHARACTER",

"PATH": "/root/items/content_text",

"CURRENT_LENGTH": 12066

},

{

"NAME": "date_published",

"TYPE": "CHARACTER",

"PATH": "/root/items/date_published",

"CURRENT_LENGTH": 25

},

{

"NAME": "date_modified",

"TYPE": "CHARACTER",

"PATH": "/root/items/date_modified",

"CURRENT_LENGTH": 25

},

{

"NAME": "image",

"TYPE": "CHARACTER",

"PATH": "/root/items/image",

"CURRENT_LENGTH": 104

},

{

"NAME": "summary",

"TYPE": "CHARACTER",

"PATH": "/root/items/summary",

"CURRENT_LENGTH": 282

}

]

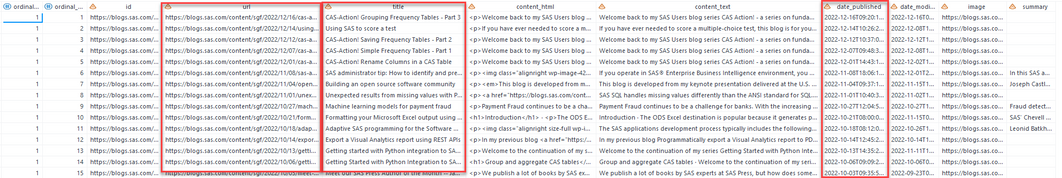

}The items table looks like this:

By using this map as a starting point, we can create a new map file -- one that is simpler, much smaller, and defines just the fields that we want. We can reference each field by its "path" in the JSON nested structure, and we can also specify the types and formats that we want in the final data.

In this example, let's assume that we want to rename the table to POSTS and keep only the url, title and date_published fields. Note that with the default map, the date field is character -- we want to convert that to a SAS datetime field.

This short map file accomplishes this:

{

"DATASETS": [

{

"DSNAME": "posts",

"TABLEPATH": "/root/items",

"VARIABLES": [

{

"NAME": "url",

"TYPE": "CHARACTER",

"PATH": "/root/items/url",

"CURRENT_LENGTH": 200

},

{

"NAME": "title",

"TYPE": "CHARACTER",

"PATH": "/root/items/title",

"CURRENT_LENGTH": 200

},

{

"NAME": "date_published",

"TYPE": "NUMERIC",

"PATH": "/root/items/date_published",

"INFORMAT": [ "IS8601DT", 19, 0 ],

"FORMAT": ["DATETIME", 20],

"CURRENT_LENGTH": 8

}

]

}

]

}

Our revised code for the libname:

/* new map file stored in blogs-revised.map */

libname newsgf JSON fileref=blogs map='/home/blogs-revised.map';

The new assigned library has just two tables: ALLDATA and POSTS. The POSTS table has the fields we asked for, set to the desired lengths and with the proper variable types and formats.

See the SAS documentation for the JSON Libname engine for more details about how to use JSON map files.

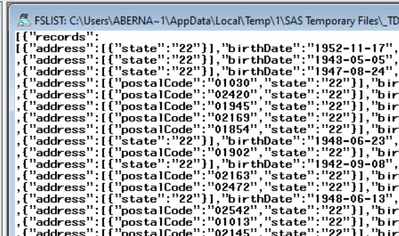

Reading newline-delimited JSON files (also known as JSONL or NDJSON)

Some systems store and exchange data using newline-delimited JSON. These standards are known as JSON Lines (.jsonl) or NDJSON. In a JSONL file, each line of text represents a valid JSON object -- building up to a series of records. But there is no hierarchical relationship among these lines, so when taken as a whole the JSONL file is not valid JSON. That is, a JSON parser can process each line individually, but it cannot process the file all at once. This includes the JSON library engine.

For a simple approach to read this in SAS, convert the JSONL input to a valid JSON by adding an object wrapper and comma delimiter between each line. Here's a sample program, adapted from one provided in a solved forum topic:

filename jsonl url "https://bcda.cms.gov/assets/data/Patient.ndjson";

filename json temp;

filename map temp;

/* Convert the NDJSON to JSON */

data _null_;

file json;

if _n_=1 then put '[{"records":' / '[' @;

else if eof then put ']}]';

else put ',' @;

infile jsonl end=eof;

input;

put _infile_;

run;

libname json json automap=create map=map;

/* Check resulted Library and data sets */

proc contents data=json._all_;

run;

Learn more

Reading data with the SAS JSON libname engine

How to test PROC HTTP and the JSON library engine

Copying data between SAS and JSON files (video)

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

The data step to generate the JSON file from a JSONL file can be simpler.

data _null_;

file json;

if _n_=1 then put '[{"records":' / '[' @;

else if eof then put ']}]';

else put ',' @;

infile jsonl end=eof;

input;

put _infile_;

run;Since the _INFILE_ variable is not referenced it will allow the copying of lines longer then 32K characters.

It also has the advantage or generating a file that is easier for humans to scan since the commas are the start of the line instead of the end.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Thanks @Tom! I changed the version in the article to your improved version.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Another approach for reading JSON into SAS datasets is using PROC LUA.

https://www.sas.com/content/dam/SAS/support/en/sas-global-forum-proceedings/2019/3113-2019.pdf

https://www.lexjansen.com/cgi-bin/xsl_transform.php?x=phuse2021#phuse2021.ad06

https://www.lexjansen.com/pharmasug/2021/AD/PharmaSUG-2021-AD-168.pdf

Another use case of working with JSON in SAS is Dataset-JSON. Dataset-JSON is a new proposed format to replace the antiquated SAS v5 XPT files for submission of clinical data to regulatory agencies:

https://www.lexjansen.com/cgi-bin/xsl_transform.php?x=pharmasug2022#pharmasug2022.ad150

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Thanks @lexjansen. And yet another approach is to use PROC PYTHON in SAS Viya. There's a planned webinar to cover using SAS with JSON in FHIR, another health-care related standard.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Thanks for the link, @ChrisHemedinger

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Thanks, @ChrisHemedinger . After some recent exposure to FHIR, I feel the reference to FHIR data as JSON might be a little confusing. An access mechanism for FHIR data (the Bulk API, which is suited for large analytical datasets) provides data in NDJSON format and the proper parsing of such data is an operation that some teams are paying attention to. Just a thought.

Ready to see what SAS Viya Copilot can do?

Visit the Tips & Tricks page for setup guidance, demos, and practical examples that show how Copilot supports your workflows.

SAS AI and Machine Learning Courses

The rapid growth of AI technologies is driving an AI skills gap and demand for AI talent. Ready to grow your AI literacy? SAS offers free ways to get started for beginners, business leaders, and analytics professionals of all skill levels. Your future self will thank you.