Do you have enough memory to launch?

The SAS GEL team manages numerous collections of test VMs that are heavily used by a large number of workshops. For a majority of the workshops each student is assigned his or her own collection. However, the larger collections are shared in order to minimize demand on the test environment and ensure there are enough machines for other workshops. The shared environments are long-running reservations that many students use over a period of months for multiple workshops. It was on a shared reservation where a memory-related issue surfaced that I believe is worth sharing.

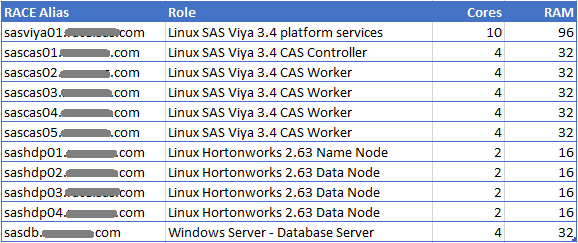

The Testing Environment

The shared testing environment that is used by multiple workshops at SAS is configured as follows.

When students enroll in a class, they receive a client machine that connects them to the environment. Over the course of a couple of months, several hundred users might connect to the shared resource for the virtual learning environment (VLE). As a result, memory usage in the environment grows over time.

Lack of Memory?

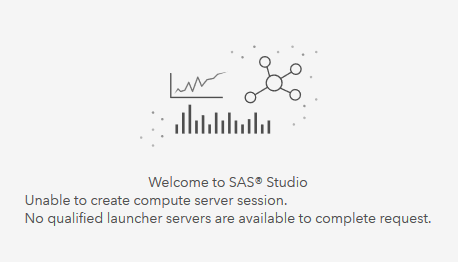

Recently a student sent a notification to the VLE message board that they were stepping through a hands-on exercise but that SAS Studio 5 couldn't launch a compute server. You may have seen this message before. However, the cause behind the failure is not immediately obvious:

Upon digging into this message I came across an internal SAS note that provides diagnostic steps.

After sifting through several logs, I discovered the following message in the consul log:

2019/04/15 11:08:13 [WARN] agent: Check "memory-check" is now warning

Based on this, it appeared to be a memory issue.

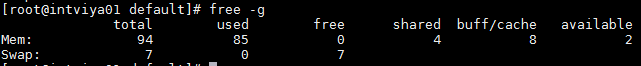

The output of the free command shows that 85 GB of 94 GB are in use (~90%). Only 2 GB are available and 8 GB are used by buffer/cache, but there is no free memory. The man page for the free command defines used memory as [used = total - free - buffers - cache].

(The numbers shown here do not add up accurately because of rounding to gigabytes.)

The key field here is the available field, which is defined as "Estimation of how much memory is available for starting new applications, without swapping." So it appears that only 2 GB are available to start new applications. Based on the limited available memory it seemed worth the time to attempt to free some memory.
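On Linux, the figures that free reports can also be read from /proc/meminfo. The following is a minimal sketch of that calculation, not something taken from the SAS note. The helper names meminfo_kb, used_kb, and available_pct are hypothetical, and the sketch assumes a kernel that exposes the MemAvailable field.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Look up one field (for example "MemAvailable") in text laid out like
   /proc/meminfo, where each line reads "Key:   12345 kB".
   Returns the value in kB, or -1 if the field is missing or malformed. */
long meminfo_kb(const char *text, const char *key) {
    size_t klen = strlen(key);
    const char *line = text;

    while (line != NULL && *line != '\0') {
        if (strncmp(line, key, klen) == 0 && line[klen] == ':') {
            long value;
            return (sscanf(line + klen + 1, "%ld", &value) == 1) ? value : -1;
        }
        line = strchr(line, '\n');   /* find the end of this line */
        if (line != NULL) {
            line++;                  /* move to the start of the next line */
        }
    }
    return -1;
}

/* used = total - free - buffers - cache, as quoted from the free man page. */
long used_kb(long total, long free_mem, long buffers, long cache) {
    return total - free_mem - buffers - cache;
}

/* Share of total memory still available for new applications, in percent. */
double available_pct(long total_kb, long available_kb) {
    assert(total_kb > 0);
    return 100.0 * (double)available_kb / (double)total_kb;
}
```

With the rounded figures from the free output above, available_pct(94, 2) comes to a little over 2%. On a live system, the text argument would be the contents of /proc/meminfo read into a buffer, for example with fopen and fread.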

The top Command

The Linux "top" command is an efficient tool for quickly finding the largest consumers of memory. You can use the sort option within "top" to sort entries on the RES column (resident set size, often called RSS) to find the largest consumers. A review of the sorted output reveals five java processes that together were using over 5 GB of RAM:

Top Memory Users

PID USER PR NI VIRT RES SHR S %CPU %MEM TIME+ COMMAND

4659 sas 20 0 4574020 1.725g 8820 S 0.3 1.8 144:01.34 java

6470 sas 20 0 2820028 1.019g 8352 S 0.0 1.1 63:41.74 java

21250 sas 20 0 6095888 0.978g 8396 S 0.0 1.0 48:18.93 java

20696 sas 20 0 3696948 992272 9460 S 1.3 1.0 410:26.14 java

22441 sas 20 0 2799880 952356 7772 S 0.0 1.0 51:18.43 java
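The RES column mixes units: a bare number is in KiB, while top appends a suffix such as g once it scales the value. The sketch below adds up the five RES values from the listing above. The function names res_to_gib and total_res_gib are hypothetical, and the suffix handling (m, g, t) is an assumption about how top scales this column.

```c
#include <stdlib.h>

/* Convert one RES field from top into GiB. A bare number is taken as KiB;
   a trailing m, g, or t is taken as MiB, GiB, or TiB. */
double res_to_gib(const char *field) {
    char *end;
    double value = strtod(field, &end);

    switch (*end) {
    case 't': return value * 1024.0;
    case 'g': return value;
    case 'm': return value / 1024.0;
    default:  return value / (1024.0 * 1024.0);
    }
}

/* Add up the RES fields of several processes, in GiB. */
double total_res_gib(const char *const fields[], size_t count) {
    double total = 0.0;
    for (size_t i = 0; i < count; i++) {
        total += res_to_gib(fields[i]);
    }
    return total;
}
```

For the five processes listed ("1.725g", "1.019g", "0.978g", "992272", "952356"), the total comes to roughly 5.58 GiB, which is where the "over 5 GB" figure comes from.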

Using the PID from the top command, it is possible to trace each process back to a service.

4659 - sas-viya-microanalyticservice-default

6470 - sas-viya-modelrepository-default

21250 - sas-viya-parse-execution-provider-default

20696 - sas-viya-report-data-default

22441 - sas-viya-themes-default

One of the actions recommended in the SAS note mentioned above is to restart the node where the launcher and compute server are deployed. In this shared environment we wanted to avoid bouncing the machine, so we decided to restart the top five services in the hope that doing so would free enough memory to launch a compute server.

Here are the results captured immediately before and after the restarts.

| Service | Before (GB) | After (GB) |
| --- | --- | --- |
| sas-viya-microanalyticservice-default | 1.73 | 0.33 |
| sas-viya-modelrepository-default | 1.02 | 0.91 |
| sas-viya-parse-execution-provider-default | 1.00 | 0.34 |
| sas-viya-report-data-default | 1.00 | 0.45 |
| sas-viya-themes-default | 0.90 | 0.40 |
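Totaling the two columns shows how much memory the restarts released. This is a small worked calculation using only the figures in the table. The function names sum_gb and freed_gb are hypothetical.

```c
#include <stddef.h>

/* Sum an array of per-service memory figures, in GB. */
double sum_gb(const double values[], size_t count) {
    double total = 0.0;
    for (size_t i = 0; i < count; i++) {
        total += values[i];
    }
    return total;
}

/* Memory released by the restarts: total before minus total after. */
double freed_gb(const double before[], const double after[], size_t count) {
    return sum_gb(before, count) - sum_gb(after, count);
}
```

With the table values, the five services drop from about 5.65 GB to about 2.43 GB, so the restarts released roughly 3.2 GB.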

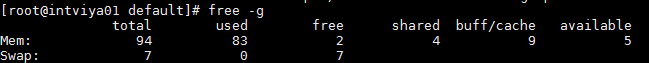

Now if we check memory at the system level we see that 2 GB are now free, compared to 0 GB before, and a total of 5 GB is available for starting new applications.

Once the five services were restarted, an attempt to log on to SAS Studio 5 was successful and the launcher was able to launch a compute server.

But what is the memory limit that triggers this situation?

Some Additional Testing

Although the aforementioned SAS note provides some diagnostic steps that indicate lack of memory may trigger this scenario, no documentation could be found on configuration settings that manage the value that triggers it. The SAS Viya Administrator's documentation mentions memory warnings that are triggered when memory utilization hits 95%, but it makes no mention of the impact when it exceeds that level. So a little trial and error testing was in order.

A program that progressively consumes memory was needed in order to test the threshold. An internet search quickly provided a simple solution. The following C program, once compiled and executed, consumes roughly 1 GB of RAM (1000 MB) every 20 seconds. The interval of 20 seconds was chosen to allow a logon attempt for each gigabyte of RAM consumed.

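#include <stdlib.h>  /* malloc */

#include <string.h>  /* memset */

#include <unistd.h>  /* sleep */

#define MB (1024UL * 1024UL)

int main(void) {

    while (1) {

        /* Allocate about 1 GB and touch every page so that it becomes resident. */

        void *p = malloc(1000 * MB);

        if (p == NULL) return 1;  /* stop when no more memory can be allocated */

        memset(p, 0, 1000 * MB);

        sleep(20);  /* the block is deliberately never freed */

    }

}

Testing with Viya Services Split across Two Machines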

Testing was initially performed on a different collection of SAS VMs that were used for another workshop. This collection spread the SAS Viya services over two machines, each with 32 GB of RAM. In this collection the intviya02 machine hosted the services in question.

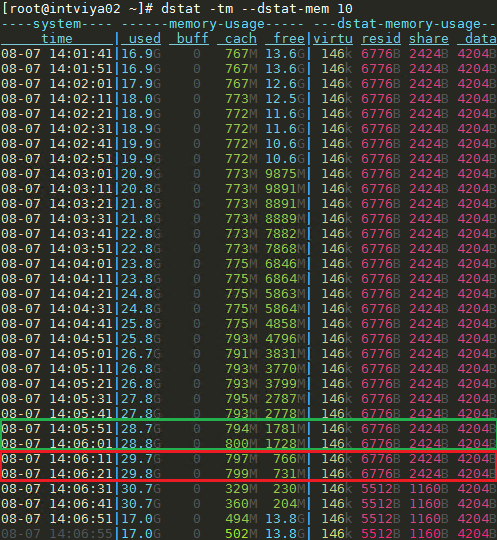

The above program was started and the dstat command was used to track memory usage every 10 seconds. (Unfortunately, dstat doesn't calculate and report the available memory.) Once the dstat command and the memory program were started a logon to SAS Studio 5 was attempted every 20 seconds.

When free+cache memory on the machine that was hosting the launcher and compute server fell to approximately 1.5 GB, the launcher failed. On this machine, that level represents approximately 5% of total memory.

Testing with SAS Viya Services on One Machine

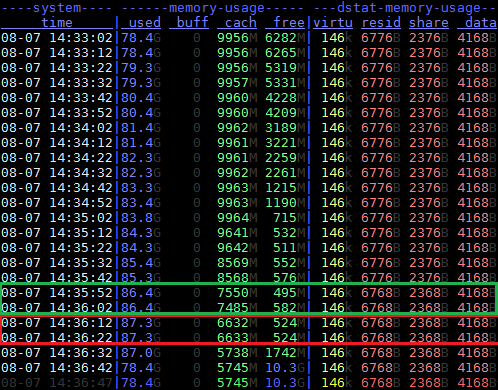

What about the shared environment where all Viya services were on one machine? Testing was completed there as well. This machine has 94 GB of RAM.

Here you can see from the dstat output that memory is steadily consumed until free memory falls to 524 MB.

When the free+cache memory on this machine fell to a little over 7 GB of RAM, the launcher server was not able to launch a compute server. This is approximately 7% of memory. But keep in mind that typically not all of the cache can be reclaimed. So in this case, where over 6 GB of memory is used by the file cache, it is likely that the available memory is closer to 5%.

We conclude from these tests that when the 95% threshold is exceeded, the launcher server will no longer launch compute servers. It should be noted that Linux would be able to handle exceeding this value, but it might incur paging, which would result in performance degradation.
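The 95% figure translates into a different amount of headroom on each machine. The sketch below works through that arithmetic for the two test machines. The function names are hypothetical, and the comparison against available memory reflects the behavior observed in these tests rather than any documented launcher logic.

```c
#include <assert.h>

/* Memory (GB) that must remain available so that utilization stays at or
   below used_limit_pct of total_gb. */
double required_headroom_gb(double total_gb, double used_limit_pct) {
    assert(used_limit_pct >= 0.0 && used_limit_pct <= 100.0);
    return total_gb * (100.0 - used_limit_pct) / 100.0;
}

/* Returns 1 if available memory is below the required headroom, which is the
   condition under which the launches failed in the tests above; otherwise 0. */
int launch_expected_to_fail(double total_gb, double available_gb,
                            double used_limit_pct) {
    return available_gb < required_headroom_gb(total_gb, used_limit_pct);
}
```

For the 32 GB machine, required_headroom_gb(32.0, 95.0) is about 1.6 GB, close to the 1.5 GB at which the launcher failed. For the 94 GB machine it is about 4.7 GB.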

How to Manage this Scenario?

Although this scenario involved SAS Studio 5, it is expected that the launcher server will fail to launch compute servers for other applications. So what action can you take to provide both an immediate and a long-term fix?

Short Term

Although it is not ideal, you can take a similar action to the one taken above. Identify large consumers of memory and restart them to temporarily free memory. Of course, this may not be an option in a production environment. Users won't be too happy if you pull the rug from underneath them. However, depending on application usage, you may not have a choice. For example, if the users are primarily using Model Studio and Visual Forecasting, both clients of the compute server, it may be necessary. Keep in mind that memory usage of restarted services will likely continue to grow and the environment may eventually return to the same state.

In the shared environment mentioned above, where the initial failure occurred, it was discovered that Anaconda's JupyterHub was not terminating user sessions. In addition to restarting SAS Viya services, many of the old unused Python sessions were terminated to free additional memory. If there are other non-SAS applications deployed on the same machine, terminating these may be another option to reduce and manage memory usage.

Long Term

Of course the long term solution is to add memory. With additional memory, there will be room for growth in the future. Adding memory is typically more challenging on physical machines than on virtual machines.

Future Deployments

If you are planning a SAS Viya deployment in the near future, ensure that the machine hosting the launcher and compute servers has adequate memory to accommodate some growth. The SAS Viya services in the shared SAS test environment consumed approximately 68 GB when services were first started. System memory usage grew to 85 GB over several months. But keep in mind that 85 GB includes the idle JupyterHub sessions that were never terminated. The "after" snapshot of the free command shown earlier does not include the memory that was freed by terminating these sessions. You can be sure memory consumption will vary depending on the products deployed and type of work being performed.

To assist with memory estimation, you can view this blog, which captured baseline memory usage by host group and service for a pre-production Viya 3.4 deployment (SAS Ship Event 18w30 - shipped in June 2018). We have since posted an updated blog on memory usage from a 19w21 deployment (shipped in May 2019).

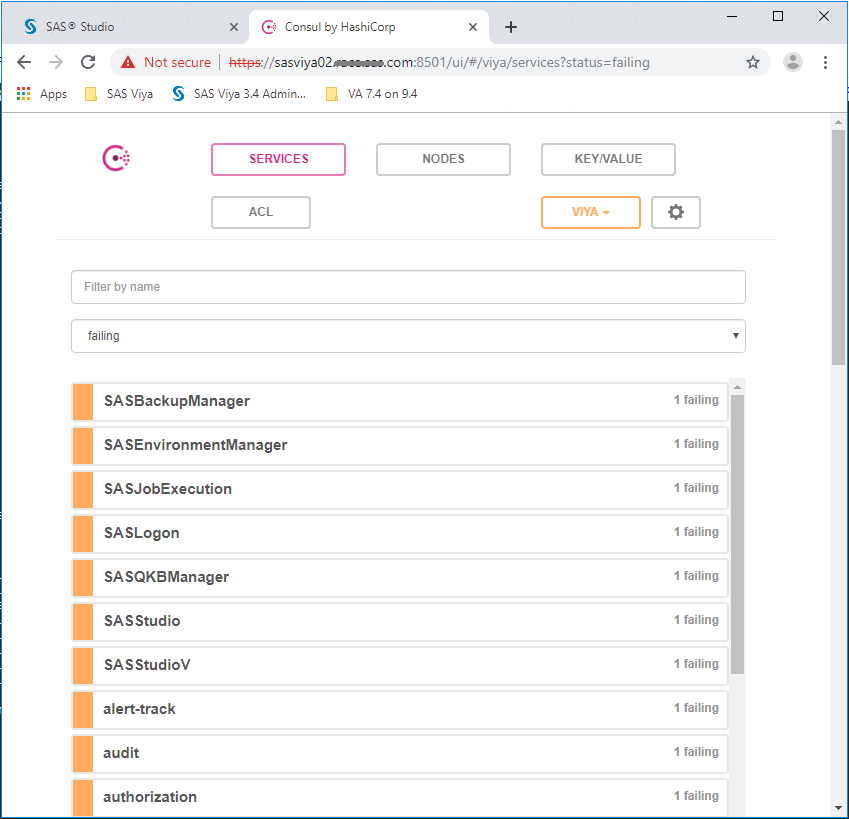

A Brief Consul Perspective

The SAS Viya service registry component, SAS Configuration Server, relies on HashiCorp Consul. While this testing was in progress the Consul web interface provided additional information. For example, if you use the Consul web interface to review the status of services once the memory threshold has been exceeded you will notice a large number of services with "Failed" status. Here is a partial view of the failing services:

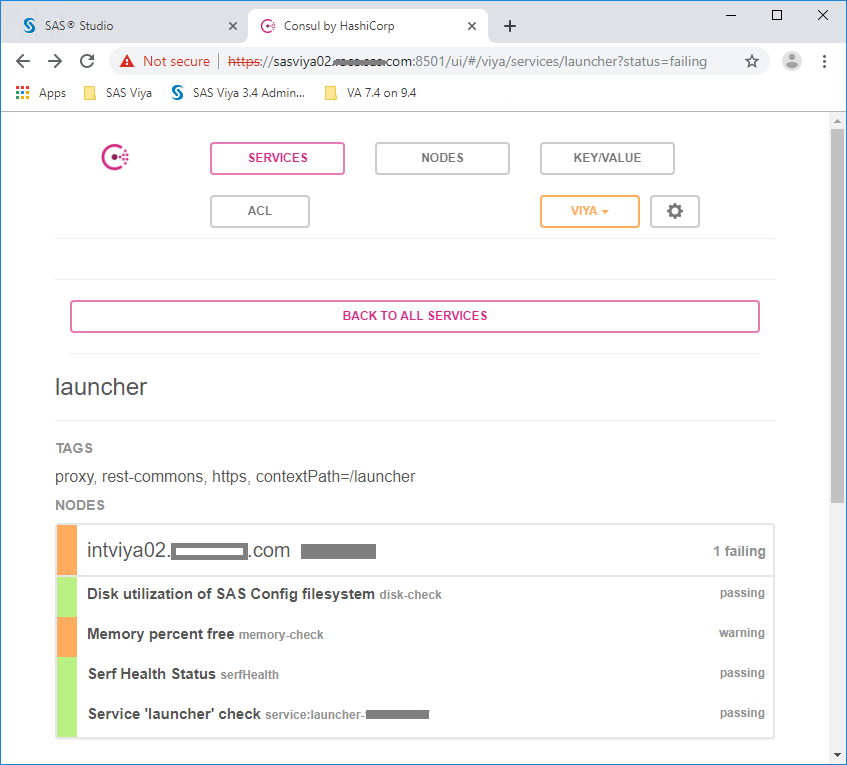

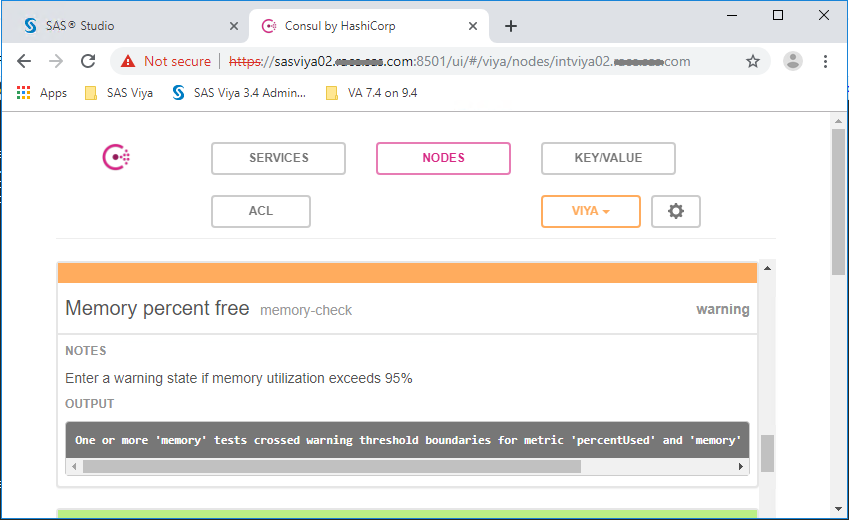

This status report is somewhat misleading because all of these services didn't truly fail. If we look at the launcher server, Consul reports that the Memory percent free (memory-check) is showing a Warning status. The failures indicate that each of these services failed to pass a check, which in this case is a memory check.

And drilling into this warning shows that if memory utilization exceeds 95%, the warning is triggered. This confirms the requirement for 5% of free memory that we saw earlier. What Consul can't tell you is that this status will prevent the launching of a compute server.

So even though the Consul status of services provides a warning when the memory threshold is breached, it is not clear from a SAS Viya perspective what the implications are.

Ideally it would be worthwhile to set up a system alert notifying an admin when Used Memory approaches 95%. This would significantly reduce the possibility of encountering a situation where the launcher will not fire up a compute server.
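One way to sketch such an alert is to classify utilization against two levels: an early-warning level and the 95% level. Everything here is an assumption for illustration. The names mem_status and classify_memory are hypothetical, the 90% warning level in the usage note is an arbitrary choice, and used memory is taken as total minus available, which may differ from how the Consul check computes it.

```c
#include <assert.h>

enum mem_status { MEM_OK, MEM_WARN, MEM_CRITICAL };

/* Classify utilization computed as (total - available) / total.
   warn_pct is an early-warning level chosen by the administrator;
   crit_pct is the level at which launches are expected to fail. */
enum mem_status classify_memory(long total_kb, long available_kb,
                                double warn_pct, double crit_pct) {
    assert(total_kb > 0 && available_kb >= 0 && available_kb <= total_kb);

    double used_pct =
        100.0 * (double)(total_kb - available_kb) / (double)total_kb;

    if (used_pct >= crit_pct) {
        return MEM_CRITICAL;
    }
    if (used_pct >= warn_pct) {
        return MEM_WARN;
    }
    return MEM_OK;
}
```

A monitoring job could call classify_memory(total, available, 90.0, 95.0) on a schedule and notify an administrator on MEM_WARN, leaving time to free memory before the 95% level is reached.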

Final Thoughts

The launcher server performs a memory check prior to launching a compute server. If 95% or more of the memory is in use at that moment, the launcher server will fail to launch a compute server. Although extensive testing has not been undertaken to see if other service failures occur, it is likely that the launcher will also fail for other clients.

For existing deployments the immediate options for environments that encounter this scenario are limited to trying to free memory by terminating or restarting existing processes. The longer-term option is to add memory, but this action will likely require an outage. With new deployments, you should therefore ensure that the environment is configured with adequate memory to start. The Deployment Guide that accompanies SAS Viya software orders provides memory guidelines in the System Requirements section.

Thanks for reading. And if you have additional information that you think others would find useful, please share.

To me all this just shouts: "MEMORY LEAK!"

That should be fixed. One can't have server processes that consume RAM infinitely, without freeing it when no longer needed. Adding RAM would only delay the issue, not fix it.

This seems to be one of the inherent weaknesses of using JRE's.

Hi Kurt. Thanks for reading.

Although the growth in memory usage may give the appearance of a memory leak, I have not observed any true memory leaks for any of the SAS Viya services (i.e. runaway). The growth is typical of pretty much any application where the initial memory usage of services is smaller than usage after they have been running for a while and users have been accessing them.

Many of the services cache and/or buffer content to improve responsiveness. When these services start there is little or nothing to cache. Over time as they are used the working set grows because they begin caching content.

A good example of this is the report data service. It caches CAS results so that users executing the same request do not have to issue a request to CAS again. When this service is started there is nothing in its cache. But as CAS activity picks up its memory footprint will grow.

It just so happens that the VM size for this machine is 94 GB and most of the SAS Viya products are installed. And as noted, it is not the only application using memory on the machine. The larger culprit was JupyterHub, as it spawned multiple Python sessions which were not automatically terminated after inactivity. Once these were terminated and several services were restarted, which reduced their working set, memory utilization fell well under the threshold. The memory configuration for this host should be a little larger, and automation should be put in place to terminate inactive Python sessions.

Hope that helps in clarifying the scenario.

> Users won't be too happy if you pull the rug from underneath them. However, depending on application usage you may not have a choice.

That does not seem like an appropriate action does it? When SAS processes are hogging memory for caching, while other SAS processes run out and can't run, a better way to manage memory is needed. If the memory resource becomes scarce, these memory-hungry processes should release what they hold. They may become slower (or not: the OS also manages caching), but that's better than paging, or even killing, active processes.
