- RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Hi everyone,

I often come across a situation where I have bunch of different addresses (input data in Lat Long) mapped all over the nation. What i need to do is use clsuter these locations in a way that allows me to specify "maximum distance netween any two points within a clsuter". In other words, specify maximum intra-cluster distance. For example, to cluster all my individual points in a way that -- maximum distance between any two points within a cluster is 70 miles.

I tired to search all the options online, using Proc Fastclus with Radius option etc, but nothing led me that allows me to specify intra cluster distance. Please let me know for any idea you may have,

-Thank you

Accepted Solutions

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

After further thought, here's a better (and simpler) choice for a secondary objective that will now move nodes to higher weight clusters if possible:

/* secondary objective: maximize weighted sum of cluster node weights */

max MaxNodeWeights = sum {k in CLUSTERS} k * NodeWeightPerCluster[k];

/* call MILP solver to optimize secondary objective */

solve with MILP / primalin;

for {i in NODES} do;

for {k in CLUSTERS: AssignNodeToCluster[i,k].sol > 0.5} do;

assigned_cluster[i] = k;

leave;

end;

end;

In particular, it does assign Charleston to the "right" cluster.

Your suggested approach with cliques is interesting. Note that the network algorithms from PROC OPTNET are also available in PROC OPTMODEL via the SOLVE WITH NETWORK statement.

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

As a workaround, you might consider setting the radius to 35 miles (half the desired distance). That is more restrictive than you want but, by the triangle inequality, will force the distance between each pair of points to be at most 70 miles.

Do you have code and data you can share?

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Hi there Rob,

Sorry for late reply, I perhaps missed your your answer coming through.

Data: May not be possible for me to get actual data here; but we can mock up any lat long locations.

Solution:

1) I agree with your workaround, this could be a possible solution. Here is current code-

Proc fastclus data= DATASET_NAME out=clust maxclusters=5;

var Lat Long;

run;

How should I use Radius option here with "Miles"? So if I want to give a radius of 35 "miles", what is necessary format to be used? My Lat and Long are in normal degrees right now.

2) Secondly- I agree that this option is more restrictive in nature. What could be possible pitfalls of using such an approach? Do you know any other methods of accomplishing the same?

Appreciate your help,

-Thank you,

Mike

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

1. For help with the FASTCLUS procedure in SAS/STAT, please post in the SAS Statistical Procedures community.

2. The main pitfall of this workaround is that it overconstrains the problem and hence artifically increases the total distance from locations to clusters. An alternative that can capture exactly the constraint you really want is to use the MILP solver in SAS/OR. If you provide data, I'll take a look.

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Hi Rob-

Sure, MILP procedure sounds closer and more accuratre. Do you mind sharing your email id or any other id, so I can share the dataset?

-Thank you,

-Mike

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Please attach it to the discussion.

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Okay, that sounds good too. Just that some internal policies don't allow me to post actual locations. So, let me create a small, but a good representative dataset and then I will post it here. Will try to upload by tomorrow or day after.

Thanks for help Rob, will work on getting the representative data,

-Mike

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Hi Rob-

Here is my sample dataset attached (excel file). Let me know if you can't access the attachment.

1) So, the goal is to Cluster these locations, in a way- "To minimize the number of Clusters, with a maximum Intra-Cluster distance of 70 miles". Meaning, maximum distance between any two nodes should not exceed 70 miles.

2) Now, there could be some instances where the locations may tend to fall in more than one cluster. In such cases, tie should be broken using "Node_Weight" column- to maximize the "Total Sum of Node Weights" of a cluster. One such example is designed here with first 5 cities- Gassaway, Quinwood, Charleston, Kenova and Crum. In this case [Gassaway, Quinwood, Charleston] and [Charleston, Kenova and Crum]-- both can be possible clusters following 70 miles rule, but Charleston is common to both. In such cases, Charleston should be assigned to earlier cluster [Gassaway, Quinwood, Charleston] - since the sum total node weight of this Cluster (from attached excel sheet) gets maximized [ 30(Gassaway) + 40(Quinwood) + 55(Charleston) = 125 ] instead of other Cluster [Charleston, Kenova and Crum] where the sum could have been [ 55(Charleston) + 25(Kenova) +35(Crum) =115].

In other words, am using "Node_Weight" as a greedy metric to break ties. Since we are using MILP, I thought this could be somehow baked into the Objective function.

Let me know your thoughts on this,

This will be very helpful, thank you very much Rob,

-Mike

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

The code below calls the MILP solver twice, once for each objective. The first solve uses the decomposition algorithm, which exploits the fact that cluster labels are arbitrary and hence the subproblem has identical blocks. (For an amusing application to wedding planning, see this blog post.) The second solve encourages clusters with high total node weights by maximizing the minimum total node weight per cluster.

proc optmodel;

/* declare parameters and read data */

num max_distance = 70;

set NODES;

str state {NODES};

str city {NODES};

num latitude {NODES};

num longitude {NODES};

num node_weight {NODES};

read data indata into NODES=[_N_] state city latitude longitude node_weight;

num distance {i in NODES, j in NODES: i < j} =

geodist(latitude[i], longitude[i], latitude[j], longitude[j], 'DM');

set CLUSTERS init NODES;

/* UseCluster[k] = 1 if cluster k is used, 0 otherwise */

var UseCluster {CLUSTERS} binary;

/* AssignNodeToCluster[i,k] = 1 if node i is assigned to cluster k, 0 otherwise */

var AssignNodeToCluster {NODES, CLUSTERS} binary;

/* primary objective: minimize number of used clusters */

min NumUsedClusters = sum {k in CLUSTERS} UseCluster[k];

/* assign each node to exactly one cluster */

con AssignOnce {i in NODES}:

sum {k in CLUSTERS} AssignNodeToCluster[i,k] = 1;

/* if AssignNodeToCluster[i,k] = 1 then UseCluster[k] = 1 */

con AssignToOpenCluster {i in NODES, k in CLUSTERS}:

AssignNodeToCluster[i,k] <= UseCluster[k];

/* cannot assign i and j to same cluster k if distance[i,j] > max_distance */

set CONFLICTS = {i in NODES, j in NODES: i < j and distance[i,j] > max_distance};

con Conflict {<i,j> in CONFLICTS, k in CLUSTERS}:

AssignNodeToCluster[i,k] + AssignNodeToCluster[j,k] <= UseCluster[k];

/* declare implicit variable to capture total node weight for each cluster */

impvar NodeWeightPerCluster {k in CLUSTERS} =

sum {i in NODES} node_weight[i] * AssignNodeToCluster[i,k];

/* call MILP solver to optimize primary objective */

solve with MILP / decomp=(method=set);

/* relabel clusters from 1 to the minimum number necessary */

num assigned_cluster {NODES};

for {i in NODES} do;

for {k in CLUSTERS: AssignNodeToCluster[i,k].sol > 0.5} do;

assigned_cluster[i] = k;

leave;

end;

end;

num new_cluster {1..card(NODES)};

num new_k init 0;

for {k in CLUSTERS: UseCluster[k].sol > 0.5} do;

new_k = new_k + 1;

new_cluster[k] = new_k;

end;

for {i in NODES, k in CLUSTERS} AssignNodeToCluster[i,k] = 0;

for {i in NODES} do;

assigned_cluster[i] = new_cluster[assigned_cluster[i]];

AssignNodeToCluster[i,assigned_cluster[i]] = 1;

end;

CLUSTERS = 1..new_k;

for {k in CLUSTERS} fix UseCluster[k] = 1;

/* secondary objective: maximize minimum node weight across clusters */

var MinNodeWeight >= 0 init min {k in CLUSTERS} NodeWeightPerCluster[k].sol;

max MaxMin = MinNodeWeight;

con MaxMinDef {k in CLUSTERS}:

MinNodeWeight <= NodeWeightPerCluster[k];

/* call MILP solver to optimize secondary objective */

solve with MILP / primalin;

for {i in NODES} do;

for {k in CLUSTERS: AssignNodeToCluster[i,k].sol > 0.5} do;

assigned_cluster[i] = k;

leave;

end;

end;

/* save solution to SAS data set */

create data outdata from [node] state city latitude longitude node_weight cluster=assigned_cluster;

quit;

/* print and plot solution by cluster */

proc sort data=outdata;

by cluster;

run;

proc print data=outdata;

by cluster;

sum node_weight;

run;

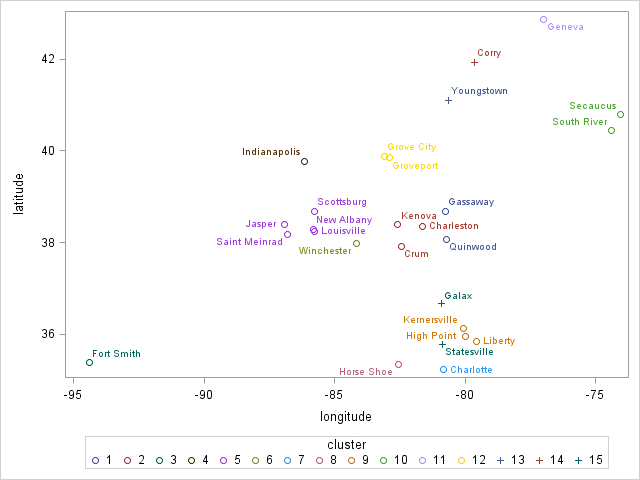

proc sgplot data=outdata;

scatter y=latitude x=longitude / datalabel=city group=cluster;

run;

On my machine running SAS/OR 14.2, I get the following 15 clusters in about 2 seconds:

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 1 | 1 | WV | Gassaway | 38.6732 | -80.7748 | 30 |

| 2 | 2 | WV | Quinwood | 38.0576 | -80.7068 | 40 |

| cluster | 70 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 3 | 3 | WV | Charleston | 38.3498 | -81.6326 | 55 |

| 4 | 4 | WV | Kenova | 38.3990 | -82.5782 | 25 |

| 5 | 5 | WV | Crum | 37.9057 | -82.4460 | 35 |

| cluster | 115 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 6 | 6 | AR | Fort Smith | 35.3859 | -94.3985 | 114 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 7 | 7 | IN | Indianapolis | 39.7684 | -86.1581 | 28 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 8 | 8 | IN | Jasper | 38.3914 | -86.9311 | 90 |

| 9 | 9 | IN | New Albany | 38.2856 | -85.8241 | 34 |

| 10 | 10 | IN | Saint Meinrad | 38.1711 | -86.8092 | 67 |

| 11 | 11 | IN | Scottsburg | 38.6856 | -85.7702 | 260 |

| 12 | 12 | KY | Louisville | 38.2527 | -85.7585 | 299 |

| cluster | 750 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 13 | 13 | KY | Winchester | 37.9901 | -84.1797 | 51 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 14 | 14 | NC | Charlotte | 35.2271 | -80.8431 | 294 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 15 | 16 | NC | Horse Shoe | 35.343 | -82.5567 | 49 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 16 | 15 | NC | High Point | 35.9557 | -80.0053 | 76 |

| 17 | 17 | NC | Kernersville | 36.1199 | -80.0737 | 150 |

| 18 | 18 | NC | Liberty | 35.8535 | -79.5717 | 89 |

| cluster | 315 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 19 | 20 | NJ | Secaucus | 40.7895 | -74.0565 | 202 |

| 20 | 21 | NJ | South River | 40.4465 | -74.3860 | 56 |

| cluster | 258 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 21 | 22 | NY | Geneva | 42.868 | -76.9856 | 51 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 22 | 23 | OH | Grove City | 39.8815 | -83.0930 | 46 |

| 23 | 24 | OH | Groveport | 39.8532 | -82.8883 | 63 |

| cluster | 109 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 24 | 25 | OH | Youngstown | 41.0998 | -80.6495 | 55 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 25 | 26 | PA | Corry | 41.9203 | -79.6403 | 35 |

| Obs | node | state | city | latitude | longitude | node_weight |

|---|---|---|---|---|---|---|

| 26 | 19 | NC | Statesville | 35.7826 | -80.8873 | 56 |

| 27 | 27 | VA | Galax | 36.6612 | -80.9240 | 43 |

| cluster | 99 | |||||

| 2393 |

You can see that Charleston ends up in the "wrong" cluster. The maximin approach essentially ignores all clusters whose total node weights are higher than the minimum, which is 28 in this case.

By the way, how many locations are in your real data?

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Hi Rob-

Thank you very much for sharing your idea. This helps to know the options.

But the way it works (maximin approach), I guess Charleston ends up in wrong cluster. Many such instances in a complex network and it might mean we lose out on potential opportunities to find find Clusters with maximized weights.

My real data currently has about 400-500. But this might go up in future.

As an alternative, was thinking perhaps to use PROC OPTNET. So given the nodes-

1) Calculate appropriate edges based on 70 mile distance. So, now we have a network where nodes are connected only if they are less than 70 miles apart.

2) Then use PROC OPTNET with Clique to find Cliques in above network.

3) Finally, eliminate common nodes programatically, starting with highest weight-- until no more common nodes are found.

I'm not sure if this will work, but will try to implement.

-Thanks for your approach Rob. It's an interesting appalication of OPTMODEL.

-Mike,

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

After further thought, here's a better (and simpler) choice for a secondary objective that will now move nodes to higher weight clusters if possible:

/* secondary objective: maximize weighted sum of cluster node weights */

max MaxNodeWeights = sum {k in CLUSTERS} k * NodeWeightPerCluster[k];

/* call MILP solver to optimize secondary objective */

solve with MILP / primalin;

for {i in NODES} do;

for {k in CLUSTERS: AssignNodeToCluster[i,k].sol > 0.5} do;

assigned_cluster[i] = k;

leave;

end;

end;

In particular, it does assign Charleston to the "right" cluster.

Your suggested approach with cliques is interesting. Note that the network algorithms from PROC OPTNET are also available in PROC OPTMODEL via the SOLVE WITH NETWORK statement.

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Awesome !! Yeah, didn't think about secondary objective putting it this way. It's simple and accurate.

Thank you Rob, this was fun exercise. Am halfway through Cliques approach, I think I'll implement both the approaches. It's great to exchange ideas.

-Thank you again for revising your code,

Mike

- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Glad to help. By the way, the new secondary objective also naturally minimizes the number of clusters. So here is an even simpler and shorter version that calls the solver only once. An optimal solution will have the clusters in nonincreasing order of node weight, with all the empty clusters at the end, so I removed the relabeling section. For your real data, it is still conceivable that the two-objective version solves faster, so please try both.

proc optmodel;

/* declare parameters and read data */

num max_distance = 70;

set NODES;

str state {NODES};

str city {NODES};

num latitude {NODES};

num longitude {NODES};

num node_weight {NODES};

read data indata into NODES=[_N_] state city latitude longitude node_weight;

num distance {i in NODES, j in NODES: i < j} =

geodist(latitude[i], longitude[i], latitude[j], longitude[j], 'DM');

set CLUSTERS init NODES;

/* UseCluster[k] = 1 if cluster k is used, 0 otherwise */

var UseCluster {CLUSTERS} binary;

/* AssignNodeToCluster[i,k] = 1 if node i is assigned to cluster k, 0 otherwise */

var AssignNodeToCluster {NODES, CLUSTERS} binary;

/* assign each node to exactly one cluster */

con AssignOnce {i in NODES}:

sum {k in CLUSTERS} AssignNodeToCluster[i,k] = 1;

/* if AssignNodeToCluster[i,k] = 1 then UseCluster[k] = 1 */

con AssignToOpenCluster {i in NODES, k in CLUSTERS}:

AssignNodeToCluster[i,k] <= UseCluster[k];

/* cannot assign i and j to same cluster k if distance[i,j] > max_distance */

set CONFLICTS = {i in NODES, j in NODES: i < j and distance[i,j] > max_distance};

con Conflict {<i,j> in CONFLICTS, k in CLUSTERS}:

AssignNodeToCluster[i,k] + AssignNodeToCluster[j,k] <= UseCluster[k];

/* declare implicit variable to capture total node weight for each cluster */

impvar NodeWeightPerCluster {k in CLUSTERS} =

sum {i in NODES} node_weight[i] * AssignNodeToCluster[i,k];

/* objective: move nodes to higher weight clusters if possible */

min MaxNodeWeights = sum {k in CLUSTERS} k * NodeWeightPerCluster[k];

/* call MILP solver */

solve with MILP / decomp=(method=set);

num assigned_cluster {NODES};

for {i in NODES} do;

for {k in CLUSTERS: AssignNodeToCluster[i,k].sol > 0.5} do;

assigned_cluster[i] = k;

leave;

end;

end;

/* save solution to SAS data set */

create data outdata from [node] state city latitude longitude node_weight cluster=assigned_cluster;

quit;

/* print solution by cluster */

proc sort data=outdata;

by cluster;

run;

proc print data=outdata;

by cluster;

sum node_weight;

run;

proc sgplot data=outdata;

scatter y=latitude x=longitude / datalabel=city group=cluster;

run;- Mark as New

- Bookmark

- Subscribe

- Mute

- RSS Feed

- Permalink

- Report Inappropriate Content

Sounds good !! This is interesting.

Thank you very much Rob, I'll implment both versions.

-Mike

Catch up on SAS Innovate 2026

Nearly 200 sessions are now available on demand with the SAS Innovate Digital Pass.

Explore Now →- WUSS Virtual Class: Asking Better Questions: The Art and Science of Survey Design | 19-May-2026

- Spring 2026 BC Health SAS User Group Meeting | 20-May-2026

- Ask the Expert: Where Does My SQL Process? | 21-May-2026

- Printemps 2026 - MONSUG | 26-May-2026

- Free Half-Day Michigan SAS User Group Conference | 27-May-2026

- SAS Bowl LXI - SAS at 50 and SAS Innovate Recap | 27-May-2026

- WUSS Virtual Class: SQL – The Grammar behind AI: AI depends on data – SQL makes that data usable | 27-May-2026